Why Standards-Based Development Practices Matter—Even When Not Mandatory

A dedicated industry around verification and validation—driven by standards such as IEC 61508, ISO 26262, IEC 62304, MISRA C, and CWE—has emerged to ensure software is reliable, secure, and of high quality. Even if your product does not fall under the strict regulatory requirements of these standards, adopting their proven best‑practice processes can dramatically reduce bugs, shorten time‑to‑market, and cut maintenance costs.

Types of Errors and Tools to Address Them

Software defects fall into two broad categories, each requiring distinct mitigation techniques:

- Coding errors—e.g., out‑of‑bounds array accesses—can be detected early with static analysis.

- Application errors arise when the code fails to meet its specifications; these are uncovered only through requirements‑driven testing.

Coding Errors and Code Review

Static analysis is a powerful first line of defense, especially when integrated at the start of a project. It identifies violations of coding rules that could otherwise become hard‑to‑debug bugs later. Complementary to static analysis, a rigorous code review checks that the code complies with a chosen coding standard, such as MISRA C:2012.

In theory, a language designed for safety—Ada, for instance—would eliminate many pitfalls through strong typing and explicit constructs. In practice, most embedded teams rely on C or C++, which offer flexibility but also expose developers to subtle traps like undefined, unspecified, and implementation‑defined behaviors. By restricting the language features used and forbidding risky constructs (e.g., goto, malloc, mixing signed and unsigned arithmetic), a coding standard can raise the bar for defect prevention.
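As a minimal sketch of what avoiding malloc can look like in practice, the following C fragment hands out objects from a fixed, statically allocated pool instead of the heap. The type `message_t`, the pool size, and the function names are hypothetical and chosen only for illustration; they are not taken from MISRA or from any particular tool.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define POOL_SLOTS 4u   /* hypothetical capacity, fixed at compile time */

typedef struct {
    int32_t id;
    int32_t value;
} message_t;

/* All storage is static, so memory use is known before the program runs. */
static message_t pool[POOL_SLOTS];
static bool in_use[POOL_SLOTS];

/* Returns the first free slot, or NULL when the pool is exhausted. */
message_t *message_acquire(void)
{
    message_t *result = NULL;

    for (size_t i = 0u; (i < POOL_SLOTS) && (result == NULL); ++i) {
        if (!in_use[i]) {
            in_use[i] = true;
            result = &pool[i];
        }
    }
    return result;
}

/* Marks the slot that holds msg as free; unknown pointers are ignored. */
void message_release(const message_t *msg)
{
    for (size_t i = 0u; i < POOL_SLOTS; ++i) {
        if (msg == &pool[i]) {
            in_use[i] = false;
        }
    }
}
```

The caller still has to handle a NULL return, but exhaustion is now a bounded, testable condition rather than a heap failure that depends on allocation history.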

Here are a few common pitfalls illustrated in the C/C++ world:

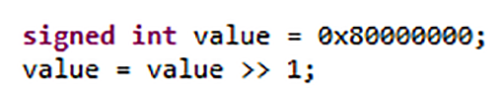

- Implementation‑defined shift of a signed integer: does a right shift fill the high‑order bit with zeros or ones?

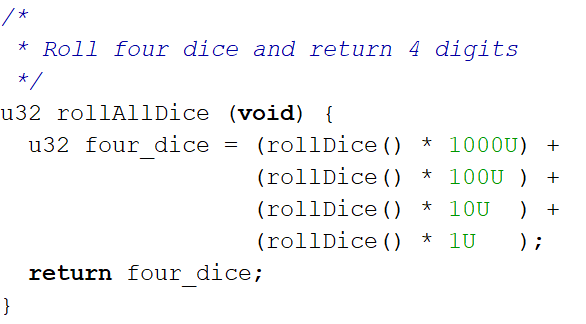

- Unspecified order of function argument evaluation, which can alter the result of a simple routine like rollDice().

- Mixing signed and unsigned values, which may lead to subtle overflows.

Figures 1 and 2 below demonstrate how the same code can behave differently across compilers, underscoring the importance of a disciplined coding approach.

Figure 1: The behavior of some C and C++ constructs depends on the compiler used. (Source: LDRA)

Figure 2: The behavior of some C and C++ constructs is unspecified by the languages. (Source: LDRA)
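The three pitfalls listed above can be sketched in C along the following lines. This is an illustration under stated assumptions rather than the code shown in the figures: every function name here is hypothetical, and each function shows one possible way to make the behavior independent of the compiler.

```c
#include <stdbool.h>
#include <stdint.h>

/* Pitfall 1: right-shifting a negative signed value is implementation-defined.
 * Converting to an unsigned type first always fills the high bits with zeros.
 * Shift counts of 32 or more would be undefined, so they are handled here. */
uint32_t shift_right_logical(int32_t value, uint32_t count)
{
    return (count < 32u) ? ((uint32_t)value >> count) : 0u;
}

/* Helper with a side effect: increments the counter and returns it. */
static int32_t next_value(int32_t *state)
{
    *state += 1;
    return *state;
}

/* Pitfall 2: in a call such as f(next_value(&s), next_value(&s)) the order in
 * which the two arguments are evaluated is unspecified. Making each call a
 * separate statement fixes the order. */
int32_t ordered_difference(void)
{
    int32_t state = 0;
    int32_t first = next_value(&state);    /* always 1 */
    int32_t second = next_value(&state);   /* always 2 */

    return first - second;
}

/* Pitfall 3: comparing int with unsigned int converts the int to unsigned.
 * The cast below spells out what "a < b" would do implicitly, so a negative
 * value of a is treated as a very large unsigned number. */
bool naive_less(int a, unsigned int b)
{
    return (unsigned int)a < b;
}

/* Handling the negative case first gives the mathematically expected answer. */
bool safe_less(int a, unsigned int b)
{
    return (a < 0) || ((unsigned int)a < b);
}
```

With these definitions, `naive_less(-1, 1u)` is false while `safe_less(-1, 1u)` is true, which is the kind of silent discrepancy a rule against mixing signed and unsigned operands is meant to catch.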

Adopting a well‑established coding standard such as MISRA C:2012 helps avoid these pitfalls. The original edition of the standard defines 143 rules and 16 directives, each backed by a rationale that often refers to the language’s undefined, unspecified, and implementation‑defined behaviors. Most rules are “decidable,” meaning a static analysis tool can always determine whether the code complies; the rest are “undecidable,” where the tool can only flag potential issues and developers must decide whether they are real problems.

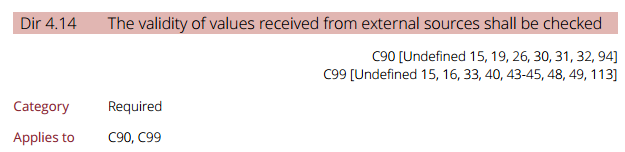

In April 2016, MISRA expanded its scope to address security‑critical software by adding 14 new guidelines in Amendment 1 to MISRA C:2012. Directive 4.14, for example, requires that values received from external sources be checked for validity, which closes off a common route to undefined behavior and gives security‑oriented projects an extra layer of protection. Figure 4 shows how MISRA’s security considerations align with the broader safety framework.

Figure 4: MISRA and security considerations. (Source: LDRA)
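The intent behind validating externally supplied values can be sketched as follows. The lookup table, its size, and the function name are assumptions made for this example; the point is only that an index arriving from outside the program is range‑checked before it is used.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define TABLE_SIZE 8u   /* hypothetical table length */

static const int32_t gain_table[TABLE_SIZE] = { 1, 2, 4, 8, 16, 32, 64, 128 };

/* Looks up a gain using an index taken from an external source, for example
 * a field in a received message (hypothetical scenario). The index and the
 * output pointer are both checked before use, so an out-of-range value can
 * never read past the end of the array. Returns true on success. */
bool lookup_gain(uint32_t external_index, int32_t *gain_out)
{
    bool ok = false;

    if ((gain_out != NULL) && (external_index < TABLE_SIZE)) {
        *gain_out = gain_table[external_index];
        ok = true;
    }
    return ok;
}
```

On failure the output is left untouched, so the caller can distinguish a rejected input from a valid result by checking the return value.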

Application Errors and Requirements Testing

While coding standards guard against low‑level bugs, application bugs arise when the software fails to meet its intended behavior. Detecting these requires a solid requirements base and traceable linkage between requirements, design, and code.

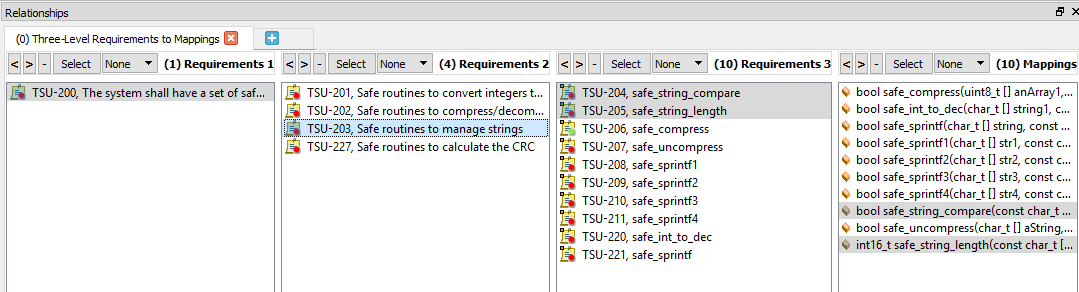

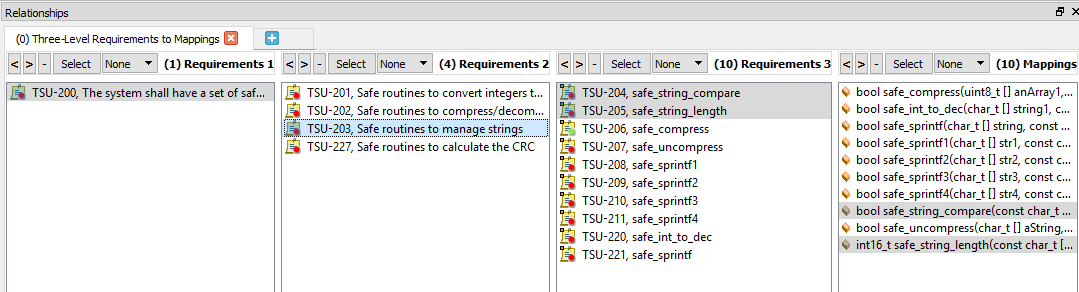

Bidirectional traceability ensures every requirement is implemented and every implementation can be traced back to a requirement. Figures 5 and 6 illustrate this two‑way mapping, revealing missing or redundant functionality early in the lifecycle.

Figure 5: Bidirectional traceability, with function selected. (Source: LDRA)

Figure 6: Bidirectional traceability, with requirement selected. (Source: LDRA)
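The two directions of traceability can be expressed as two simple queries over a table of links. The sketch below assumes a hypothetical `trace_link_t` record that pairs a requirement identifier with a function name; commercial tools maintain this mapping automatically, so this is only meant to show the underlying idea.

```c
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/* One link between a requirement and a function that implements it. */
typedef struct {
    const char *requirement_id;
    const char *function_name;
} trace_link_t;

/* Forward direction: is the requirement implemented by at least one function?
 * A false result points to missing functionality. */
bool requirement_is_implemented(const trace_link_t *links, size_t count,
                                const char *requirement_id)
{
    bool found = false;

    for (size_t i = 0u; (i < count) && !found; ++i) {
        found = (strcmp(links[i].requirement_id, requirement_id) == 0);
    }
    return found;
}

/* Backward direction: does the function trace back to at least one
 * requirement? A false result points to redundant or unjustified code. */
bool function_is_justified(const trace_link_t *links, size_t count,
                           const char *function_name)
{
    bool found = false;

    for (size_t i = 0u; (i < count) && !found; ++i) {
        found = (strcmp(links[i].function_name, function_name) == 0);
    }
    return found;
}
```

Running the first query over every requirement and the second over every function in the code base yields the two gap lists that bidirectional traceability is meant to expose.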

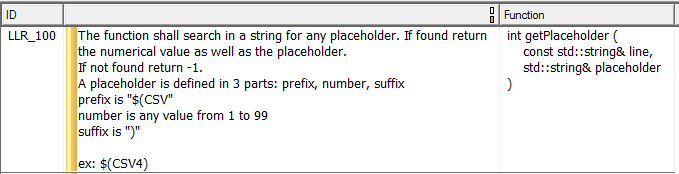

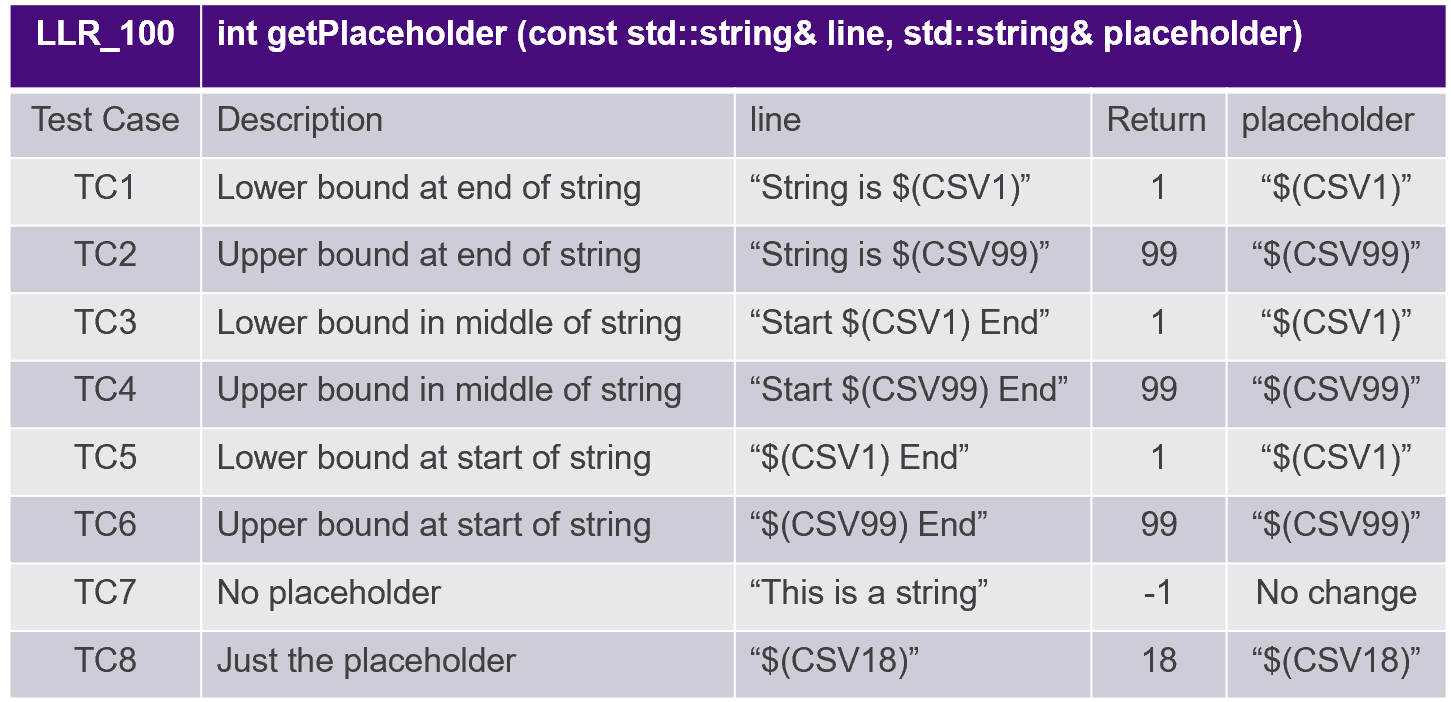

Once traceability is in place, test cases can be derived from low‑level requirements. Figure 7 shows a typical low‑level requirement that fully specifies a single function’s behavior, and Table 1 demonstrates how test cases map to those requirements.

Figure 7: Example low‑level requirement. (Source: LDRA)

Table 1: Test cases derived from low‑level requirements. (Source: LDRA)
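To make the derivation of test cases concrete, the sketch below uses a hypothetical low‑level requirement, not the one shown in Figure 7: "clamp_speed shall return the requested speed when it lies between 0 and 120 inclusive, 0 when it is lower, and 120 when it is higher." Each row of the table names the (assumed) requirement clause it verifies, which keeps the link between test and requirement explicit.

```c
#include <stddef.h>
#include <stdint.h>

#define SPEED_MIN 0
#define SPEED_MAX 120

/* Function under test, written against the hypothetical requirement. */
int32_t clamp_speed(int32_t requested)
{
    int32_t result = requested;

    if (requested < SPEED_MIN) {
        result = SPEED_MIN;
    } else if (requested > SPEED_MAX) {
        result = SPEED_MAX;
    } else {
        /* in range: value is passed through unchanged */
    }
    return result;
}

/* One test case: the requirement clause it covers, an input, and the
 * output that the requirement prescribes for that input. */
typedef struct {
    const char *requirement_id;
    int32_t input;
    int32_t expected;
} test_case_t;

/* Boundary values on each side of both limits, plus one nominal value. */
static const test_case_t test_cases[] = {
    { "LLR-1.1 (below range)", -1,   0 },
    { "LLR-1.2 (in range)",     0,   0 },
    { "LLR-1.2 (in range)",    60,  60 },
    { "LLR-1.2 (in range)",   120, 120 },
    { "LLR-1.3 (above range)", 121, 120 }
};

/* Runs every test case and returns the requirement id of the first one
 * that fails, or NULL when all of them pass. */
const char *first_failing_requirement(void)
{
    const size_t total = sizeof(test_cases) / sizeof(test_cases[0]);
    const char *failed = NULL;

    for (size_t i = 0u; (i < total) && (failed == NULL); ++i) {
        if (clamp_speed(test_cases[i].input) != test_cases[i].expected) {
            failed = test_cases[i].requirement_id;
        }
    }
    return failed;
}
```

Because the expected values come from the requirement text rather than from reading the implementation, a failure reported here indicates an application error in the sense used above.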

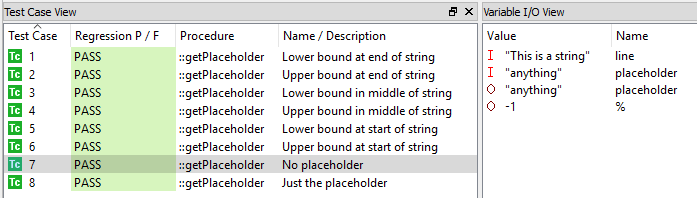

Executing these tests with a unit‑testing framework on both host and target confirms that the implementation matches the requirement. Figure 8 shows a regression suite where all test cases pass. After test execution, structural coverage metrics show whether the tests have exercised the entire code base; any gaps prompt additional tests or code refactoring.

Figure 8: Performing unit tests. (Source: LDRA)
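Structural coverage can be illustrated with hand‑written instrumentation. Coverage tools insert equivalent probes automatically; the flags, enum names, and `sign_of` function below are hypothetical and exist only to show how a gap in the test set becomes visible.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* One probe per branch of the function under test. */
enum { BRANCH_NEGATIVE, BRANCH_ZERO, BRANCH_POSITIVE, BRANCH_COUNT };

static bool branch_hit[BRANCH_COUNT];

/* Clears all probes before a test run. */
void coverage_reset(void)
{
    for (size_t i = 0u; i < (size_t)BRANCH_COUNT; ++i) {
        branch_hit[i] = false;
    }
}

/* Function under test, instrumented by hand: returns -1, 0, or 1. */
int32_t sign_of(int32_t x)
{
    int32_t result;

    if (x < 0) {
        branch_hit[BRANCH_NEGATIVE] = true;
        result = -1;
    } else if (x == 0) {
        branch_hit[BRANCH_ZERO] = true;
        result = 0;
    } else {
        branch_hit[BRANCH_POSITIVE] = true;
        result = 1;
    }
    return result;
}

/* Returns how many branches no test has reached yet. */
size_t uncovered_branches(void)
{
    size_t missing = 0u;

    for (size_t i = 0u; i < (size_t)BRANCH_COUNT; ++i) {
        if (!branch_hit[i]) {
            ++missing;
        }
    }
    return missing;
}
```

Calling `sign_of` with only a positive and a negative value leaves one branch unreported, which signals that a test for the zero case is missing even though every executed test passed.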

Conclusion

Software complexity is rising, and so is the risk of defects. Best‑practice development—grounded in a modern coding standard like MISRA C:2012, rigorous metrics, traceable requirements, and requirements‑based testing—provides a proven path to high‑quality, reliable, and robust code. Whether or not your project is bound by formal safety regulations, the discipline and transparency these practices bring are a net benefit to any development team.
