EEMBC Launches ADASMark: A Real-World Benchmark for Advanced Driver Assistance SoCs

Automotive chip makers regularly tout systems‑on‑chip (SoCs) built for Advanced Driver Assistance Systems (ADAS). Yet without objective metrics, reporters, analysts, and, most critically, automakers find it nearly impossible to distinguish one ADAS SoC from another.

In practice, the market relies on vendor claims or crude metrics such as trillions of operations per second (TOPS). Comparing Intel/Mobileye’s EyeQ5 with Nvidia’s Xavier on TOPS alone is misleading and offers little actionable insight.

To address this gap, the embedded‑hardware consortium EEMBC recently launched ADASMark, a suite of autonomous‑driving benchmarks now available for licensing. The toolset is engineered to help tier‑one suppliers and automakers optimize compute resources—from CPUs to GPUs and dedicated accelerators—when designing their ADAS stacks.

Mike Demler, senior analyst at The Linley Group, praised ADASMark: “It’s refreshing that this benchmark is grounded in real workloads, not just abstract numbers.” He highlighted participation from AU‑Zone Technologies, NXP Semiconductors, and Texas Instruments, which together give the suite a practical edge over generic tests like Baidu’s DeepBench.

Framework‑centric Design

EE Times interviewed Peter Torelli, EEMBC’s president and CTO, about the challenges automakers face as vehicles become increasingly automated. He noted that while many embedded systems now deploy multiple cores, “there are still very few frameworks that can fully leverage asymmetric compute resources.” Without a robust framework, each implementation of the benchmark would vary dramatically from one hardware platform to the next, complicating cross‑platform comparisons.

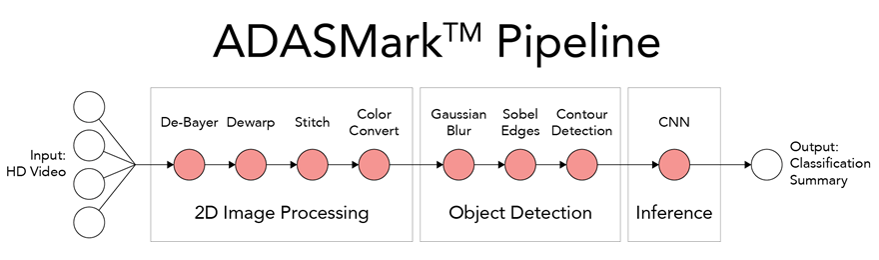

As the ADASMark pipeline diagram illustrates, the baseline configuration runs every stage on a single CPU. However, a developer might wish to plug in a custom neural‑net chip for the final inference stage or a dedicated DSP for color‑space conversion.

In such scenarios, a framework simplifies the retargeting of compute devices, eliminating the need for developers to write bespoke interface code—a process that is time‑consuming, error‑prone, and can distort benchmark results.
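
To make the retargeting idea concrete, here is a minimal Python sketch of a pipeline whose stages can each be bound to a different compute device. It is an illustration only, not ADASMark's actual framework or API: the `Stage` and `Pipeline` classes, the device names (`cpu`, `dsp`, `npu`), and the stand-in kernels are all assumptions invented for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

CPU = "cpu"  # hypothetical device name; the baseline runs every stage here


@dataclass
class Stage:
    """One pipeline stage with an implementation per supported device."""
    name: str
    kernels: Dict[str, Callable]  # device name -> implementation
    device: str = CPU

    def run(self, data):
        return self.kernels[self.device](data)


@dataclass
class Pipeline:
    stages: List[Stage] = field(default_factory=list)

    def retarget(self, stage_name: str, device: str) -> None:
        """Bind one stage to another device without touching the others."""
        for stage in self.stages:
            if stage.name == stage_name:
                if device not in stage.kernels:
                    raise ValueError(f"{stage_name} has no kernel for {device}")
                stage.device = device
                return
        raise KeyError(stage_name)

    def run(self, data):
        # Each stage consumes the previous stage's output.
        for stage in self.stages:
            data = stage.run(data)
        return data

    def placement(self) -> Dict[str, str]:
        return {stage.name: stage.device for stage in self.stages}


def to_gray(pixels):
    """Stand-in color-space conversion: RGB tuples to integer luma."""
    return [(299 * r + 587 * g + 114 * b) // 1000 for r, g, b in pixels]


def classify(gray):
    """Stand-in for the inference stage: a trivial brightness label."""
    return ["bright" if sum(gray) / len(gray) > 127 else "dark"]


def build_pipeline() -> Pipeline:
    # In this sketch the "dsp" and "npu" kernels reuse the CPU functions;
    # a real port would supply device-specific implementations.
    return Pipeline([
        Stage("color_conversion", {"cpu": to_gray, "dsp": to_gray}),
        Stage("inference", {"cpu": classify, "npu": classify}),
    ])


pipeline = build_pipeline()
frame = [(255, 255, 255), (0, 0, 0), (200, 100, 50)]
baseline = pipeline.run(frame)                # every stage on the CPU
pipeline.retarget("color_conversion", "dsp")  # offload one stage
pipeline.retarget("inference", "npu")         # offload another
print(pipeline.placement(), pipeline.run(frame) == baseline)
```

The point of the design is that the benchmark logic in `Pipeline.run` never changes when a stage moves to another device, so results stay comparable across placements.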

“AMP and OpenAMP are designed for symmetric multicore systems and don’t address our needs,” Torelli explained. “OpenCV and OpenVX offer partial support, but manufacturer adoption is inconsistent.” This analysis led EEMBC to create ADASMark around a custom framework tailored to real-world workloads.

Imaging‑Pipeline Focus

Key features of ADASMark include an OpenCL 1.2 Embedded Profile API to ensure consistency across implementations; a series of micro‑benchmarks that quantify performance for SoCs handling computer‑vision, autonomous‑driving, and mobile‑imaging tasks; and a traffic‑sign‑recognition CNN inference engine developed by AU‑Zone Technologies.

Because ADAS requires intensive object‑detection and visual‑classification capabilities, ADASMark zeroes in on the imaging pipeline. It models highly parallel workloads such as surround‑view stitching, contour detection, and CNN‑based traffic‑sign classification—exactly the tasks that underpin modern ADAS functionality.
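
As a rough illustration of what per-stage micro-benchmarking of an imaging pipeline involves, the Python sketch below times two toy stages on a synthetic frame. It is not taken from ADASMark, whose kernels are written against OpenCL; the function names, the gradient-based stand-in for contour detection, and the synthetic frame are all assumptions made for this example.

```python
import time


def rgb_to_gray(image):
    """Color-space conversion: rows of (r, g, b) tuples to rows of luma ints."""
    return [[(299 * r + 587 * g + 114 * b) // 1000 for r, g, b in row]
            for row in image]


def edge_magnitude(gray):
    """Crude gradient magnitude (|dx| + |dy|), a stand-in for contour detection."""
    height, width = len(gray), len(gray[0])
    out = [[0] * width for _ in range(height)]
    for y in range(height - 1):
        for x in range(width - 1):
            dx = abs(gray[y][x + 1] - gray[y][x])
            dy = abs(gray[y + 1][x] - gray[y][x])
            out[y][x] = dx + dy
    return out


def synthetic_frame(width, height):
    """Left half black, right half white, giving one vertical edge."""
    return [[(0, 0, 0) if x < width // 2 else (255, 255, 255)
             for x in range(width)] for _ in range(height)]


def time_stages(image, stages, repeats=5):
    """Run the stages in order; return (final output, best time per stage)."""
    timings = {}
    data = image
    for name, fn in stages:
        best = float("inf")
        for _ in range(repeats):
            start = time.perf_counter()
            result = fn(data)
            best = min(best, time.perf_counter() - start)
        timings[name] = best
        data = result  # feed this stage's output to the next stage
    return data, timings


STAGES = [("color_conversion", rgb_to_gray), ("contour_detection", edge_magnitude)]

edges, timings = time_stages(synthetic_frame(64, 48), STAGES)
for name, seconds in timings.items():
    print(f"{name}: {seconds * 1e3:.3f} ms")
```

Reporting the best of several repeats for each stage reduces noise from the host system, and keeping the timings separate shows which stage would benefit most from an accelerator.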
