Unlocking AI Value with Unlabeled Data: How Hologram Stress‑Tests Autonomous Perception

Data is the lifeblood of autonomous vehicle (AV) development, powering the deep‑learning models that enable self‑driving systems.

To stay competitive, AV vendors log millions of miles on public roads and store petabytes of raw footage. Waymo, for instance, has logged more than 10 million real‑world miles and 10 billion simulated miles.

Yet the industry rarely asks a critical question: How much of that vast archive has actually been labeled, and how accurate are those labels?

Phil Koopman, co‑founder and CTO of Edge Case Research, warned in a recent EE Times interview that “nobody can afford to label all of it.”

Data labeling is both time‑consuming and costly

Annotation demands expert human reviewers to watch short video clips and draw bounding boxes around every vehicle, pedestrian, sign, and traffic light relevant to an autonomous driving algorithm. The process is laborious and expensive.

A Medium article titled “Data Annotation: The Billion Dollar Business Behind AI Breakthroughs” highlights the rise of managed labeling services that deliver domain‑specific, high‑quality datasets. The piece notes that some self‑driving startups pay these firms millions of dollars per month.

IEEE Spectrum reported that Carol Reiley, co‑founder and president of Drive.ai, said, “Thousands of people label boxes around objects. For every hour of driving, it takes roughly 800 human hours to label.” These teams struggle to keep pace with the data influx.

Companies like Drive.ai are exploring deep‑learning‑based annotation assistants to accelerate the tedious labeling process.

Leveraging unlabeled data

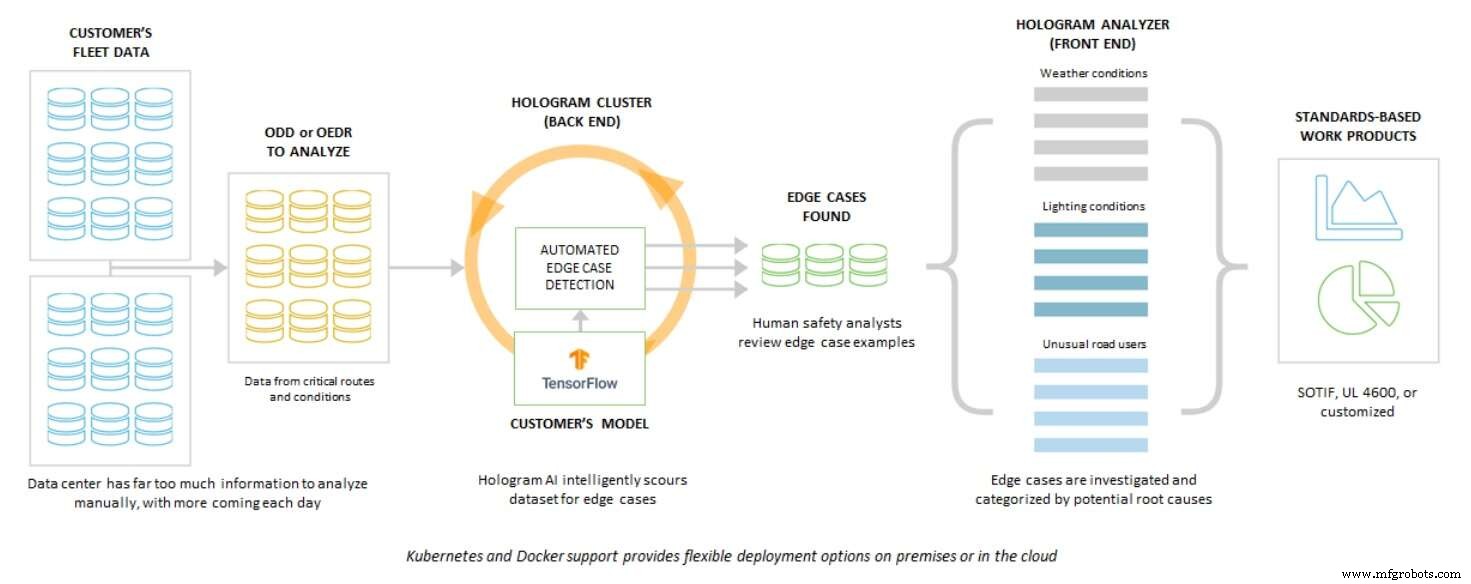

Koopman believes there’s an alternative to labeling petabytes of footage: putting the unlabeled data itself to work. Edge Case Research developed “Hologram,” an AI perception stress‑testing and risk‑analysis system designed to accelerate perception software development without exhaustive annotation.

Hologram processes the same unlabeled footage twice. First, it runs the footage through a baseline perception engine. Then it re‑processes the clip with a subtle noise perturbation, creating a “stressed” scenario. The noise exposes hidden weaknesses in the AI’s decision boundaries.
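The two-pass idea can be illustrated with a minimal sketch. This is not Edge Case Research's implementation: the frame representation, the `toy_detector` function, the Gaussian noise model, and the `sigma` value are all assumptions chosen only to show how a baseline pass and a perturbed pass over the same unlabeled frames can be compared without any ground-truth labels.

```python
import random
from typing import Callable, List, Sequence

# Hypothetical stand-in for an image: a flat list of pixel intensities in [0, 1].
Frame = List[float]


def add_noise(frame: Sequence[float], sigma: float, rng: random.Random) -> Frame:
    """Return a copy of the frame with Gaussian noise added, clamped to [0, 1]."""
    return [min(1.0, max(0.0, p + rng.gauss(0.0, sigma))) for p in frame]


def confidence_drops(
    frames: Sequence[Sequence[float]],
    detector: Callable[[Sequence[float]], float],
    sigma: float = 0.05,  # assumed noise level, not a value from the article
    seed: int = 0,
) -> List[float]:
    """Run each frame through the detector twice (clean, then perturbed).

    Returns baseline confidence minus stressed confidence for every frame.
    No labels are used: only the two outputs are compared with each other.
    """
    rng = random.Random(seed)
    drops = []
    for frame in frames:
        baseline = detector(frame)
        stressed = detector(add_noise(frame, sigma, rng))
        drops.append(baseline - stressed)
    return drops


def toy_detector(frame: Sequence[float]) -> float:
    """Hypothetical detector: confidence is the contrast between the
    brightest and darkest pixel. A real perception engine goes here."""
    return max(frame) - min(frame)


if __name__ == "__main__":
    clips = [[0.1, 0.9, 0.5], [0.45, 0.55, 0.5]]
    for i, drop in enumerate(confidence_drops(clips, toy_detector)):
        print(f"frame {i}: confidence change under noise = {drop:+.3f}")
```

Frames whose output shifts sharply under a small perturbation are the ones worth a closer look; how large a shift counts as "sharp" is a tuning decision the article does not specify.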

While a human might see a blurred object and hesitate, the AI may either miss it entirely or misclassify it. By revealing such failures, Hologram helps developers pinpoint the exact conditions that trigger misbehavior.

Because the system operates on unlabeled data, it bypasses the labeling bottleneck while still providing actionable insights. Hologram flags problematic scenes with color‑coded bars: orange indicates a near‑miss that suggests retraining, and red marks a complete failure that invites deeper investigation into triggering factors such as background noise or poor contrast.
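One way to picture the orange and red flags is as a simple triage rule over the baseline and stressed outputs. The sketch below is only an illustration: the `FrameResult` type, the `detect_threshold` and `margin` values, and the exact rule for what counts as a near-miss are assumptions, since the article does not describe how Hologram actually decides.

```python
from dataclasses import dataclass


@dataclass
class FrameResult:
    """Hypothetical record of one frame's detection confidence in both passes."""
    frame_id: int
    baseline_conf: float
    stressed_conf: float


def flag(result: FrameResult, detect_threshold: float = 0.5, margin: float = 0.15) -> str:
    """Classify a frame as "red", "orange", or "none".

    Assumed rule (not from the article):
      - "red":    object detected at baseline but lost under perturbation
      - "orange": still detected under perturbation, but only just
                  (within `margin` of the detection threshold)
      - "none":   detection is stable, or nothing was detected at baseline
    """
    if result.baseline_conf < detect_threshold:
        return "none"  # no baseline detection to compare against
    if result.stressed_conf < detect_threshold:
        return "red"  # complete failure under stress
    if result.stressed_conf - detect_threshold < margin:
        return "orange"  # near-miss: detection barely survived
    return "none"


if __name__ == "__main__":
    results = [
        FrameResult(0, 0.90, 0.85),
        FrameResult(1, 0.90, 0.55),
        FrameResult(2, 0.90, 0.20),
    ]
    for r in results:
        print(r.frame_id, flag(r))
```

Under this assumed rule, only the flagged frames would go to a human reviewer, which is where the savings over exhaustive labeling would come from.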

Koopman emphasized that Hologram’s “secret sauces” (advanced engineering and proprietary algorithms) enable it to surface only the most critical anomalies, making human review far more efficient.

He also cautioned that the publicly available datasets used by Hologram are restricted to the research community and should be paired with the same perception engine that generated the data for meaningful analysis.

Visualizing Hologram’s impact

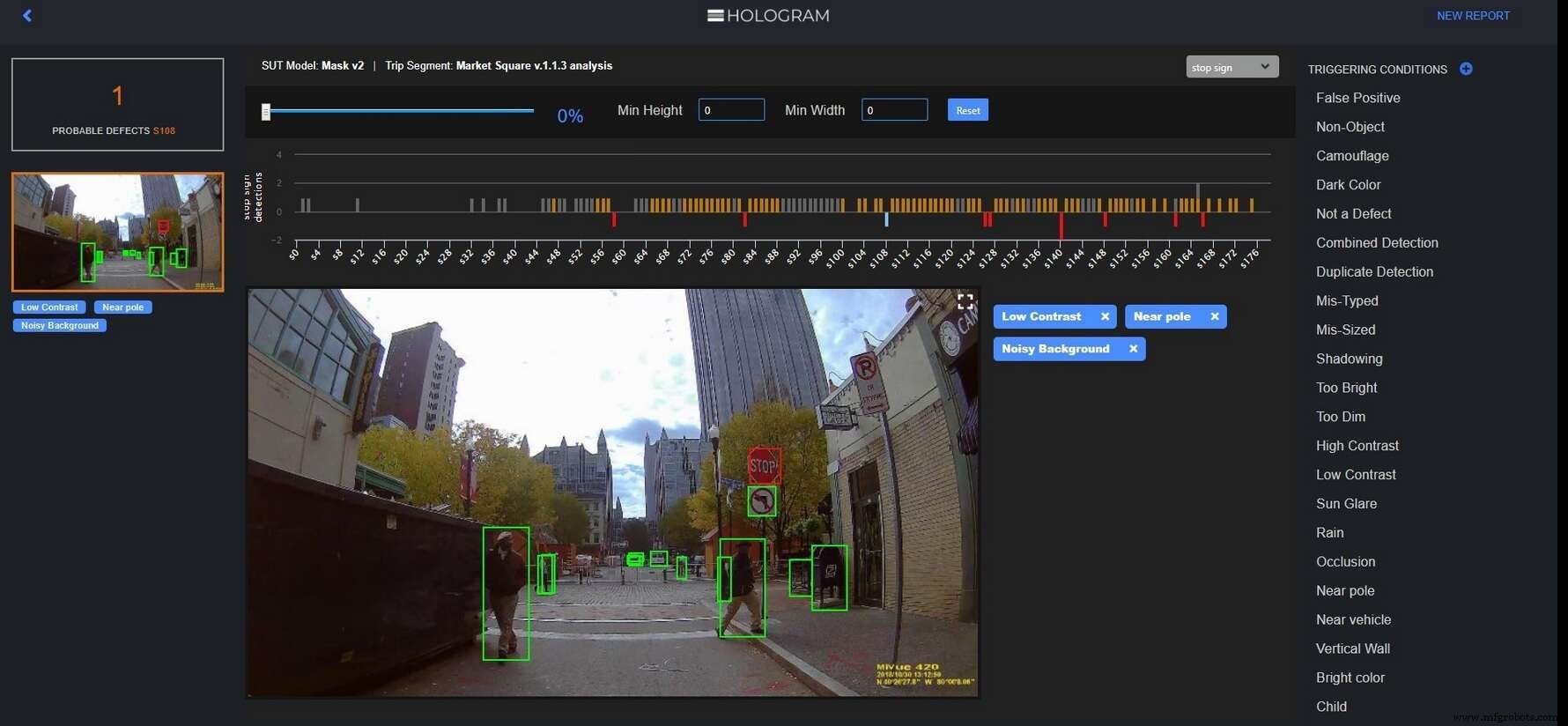

The Hologram Engine highlights where a perception system fails to detect a stop sign, revealing triggering conditions such as noisy backgrounds. (Source: Edge Case Research)

Hologram’s interface marks near‑misses with orange bars, which prompt algorithm retraining, and complete detection failures with red bars, which let designers trace causes such as proximity to a pole or low contrast.

Hologram helps AV designers uncover edge cases where perception software behaves oddly, potentially jeopardizing safety. (Source: Edge Case Research)


Strategic partnership with Ansys

Ansys recently announced a collaboration with Edge Case Research to embed Hologram within its simulation platform. The goal is to build the industry’s first comprehensive simulation pipeline for autonomous vehicle development. Ansys is also working with BMW, which aims to deliver its first AV by 2021.

ANSYS and BMW create simulation tool chain for Autonomous Driving. (Source: Ansys)

— Junko Yoshida, Global Co‑Editor‑In‑Chief, AspenCore Media, Chief International Correspondent, EE Times

This article was originally published on our sister site, EE Times: “Use Unlabeled Data to See If AI Is Just Faking It.”
