Accelerating Deep Learning with FPGAs: Speed, Flexibility, and Energy Efficiency

I recently attended the 2018 Xilinx Developer Forum in Silicon Valley, where I discovered Mipsology, a startup that claims to have solved the challenges of deploying neural networks on field‑programmable gate arrays (FPGAs). Mipsology's stated goal is to accelerate any neural network on FPGAs at the highest achievable performance, without the constraints that usually come with FPGA deployment.

Using Xilinx’s new Alveo boards, Mipsology achieved more than 20,000 images per second across a suite of popular networks, including ResNet‑50, Inception‑V3, and VGG‑19.

What Are Neural Networks and Deep Learning?

Neural networks, inspired by the human brain’s web of neurons, form the backbone of deep learning (DL). DL is a mathematical framework that learns to perform tasks autonomously by exposing the network to large volumes of data. The process of training a neural network—presenting it with millions of labeled samples—is followed by inference, where the trained model predicts outcomes for new data.

For example, a speech‑recognition network might be trained on thousands of voice recordings and then deployed to transcribe spoken words in real time.

Why Inference Demands Extreme Performance

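Inference requires billions or trillions of additions and multiplications per second. Training is compute‑intensive too, but a model is trained once (or occasionally retrained), whereas inference runs on every new input for as long as the model is deployed. Consequently, inference is the primary driver of computational demand in production AI systems.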
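To see where "billions or trillions" comes from, here is a rough back‑of‑the‑envelope sketch in Python. It counts the multiply‑accumulate (MAC) operations of a single convolution layer and scales that by an image rate. The layer shape is a hypothetical example, not taken from any network named in this article, and the 20,000 images per second is borrowed from the figure quoted above purely as a round number.

```python
def conv_macs(out_h, out_w, in_ch, out_ch, k_h, k_w):
    """MACs needed by one convolution layer for one image.

    Each output value (out_h * out_w * out_ch of them) is a sum of
    in_ch * k_h * k_w products.
    """
    return out_h * out_w * out_ch * in_ch * k_h * k_w


def macs_per_second(macs_per_image, images_per_second):
    """Sustained MAC rate needed to keep up with a given image rate."""
    return macs_per_image * images_per_second


if __name__ == "__main__":
    # Hypothetical layer: 3x3 kernel, 64 input and 64 output channels,
    # 56x56 output feature map.
    layer = conv_macs(56, 56, 64, 64, 3, 3)
    rate = macs_per_second(layer, 20_000)
    print(f"MACs per image for this layer: {layer:,}")
    print(f"MACs per second at 20,000 images/s: {rate:,}")
```

A real network stacks dozens of such layers, so the sustained arithmetic rate for a whole model is correspondingly higher.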

Four Hardware Options for Deep‑Learning Inference

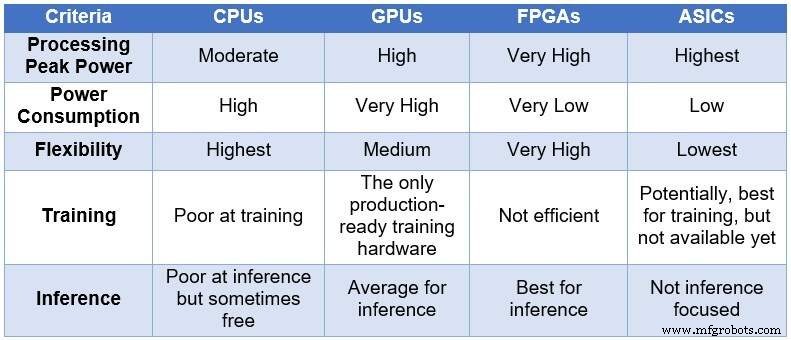

Engineers have traditionally relied on four categories of processors, ranked from most to least power‑hungry and least flexible: CPUs → GPUs → FPGAs → ASICs. The table below summarizes key differences.

Comparison of CPUs, GPUs, FPGAs, and ASICs for DL computing (Source: Lauro Rizzatti)

- CPUs offer maximum flexibility but suffer from high latency and low throughput for DL workloads.

- GPUs deliver massive parallelism but consume significant power and require robust cooling.

- ASICs can be extremely efficient for a narrow task, yet their long development cycle and fixed architecture make them ill‑suited for rapidly evolving models.

- FPGAs combine high throughput, low power consumption, and reconfigurability, making them ideal for dynamic inference workloads.

Why FPGAs Excel at Inference

Modern FPGAs contain millions of logic elements, thousands of DSP blocks, and embedded memory and ARM cores—all operating in parallel. A single clock cycle can trigger millions of concurrent operations, enabling trillions of calculations per second that map naturally onto DL workloads.
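The "trillions of calculations per second" claim can be sanity‑checked with simple arithmetic. The sketch below estimates a theoretical peak, assuming each DSP block completes one multiply‑accumulate per clock cycle. The device figures (6,000 DSP blocks, 500 MHz) are hypothetical round numbers, not the specification of any particular FPGA, and real designs rarely sustain the theoretical peak.

```python
def peak_macs_per_second(dsp_blocks, clock_hz, macs_per_dsp_per_cycle=1):
    """Theoretical peak MAC rate if every DSP block is busy every cycle."""
    return dsp_blocks * clock_hz * macs_per_dsp_per_cycle


def peak_tops(dsp_blocks, clock_hz, macs_per_dsp_per_cycle=1):
    """Peak in tera-operations per second, counting one MAC as two
    operations (one multiply plus one add)."""
    macs = peak_macs_per_second(dsp_blocks, clock_hz, macs_per_dsp_per_cycle)
    return 2 * macs / 1e12


if __name__ == "__main__":
    # Hypothetical device: 6,000 DSP blocks clocked at 500 MHz.
    dsp_blocks = 6_000
    clock_hz = 500_000_000
    print(f"Peak MACs/s: {peak_macs_per_second(dsp_blocks, clock_hz):,}")
    print(f"Peak TOPS:   {peak_tops(dsp_blocks, clock_hz):.1f}")
```

Logic elements can implement additional arithmetic beyond the DSP blocks, so this estimate covers only part of what a device can do in parallel.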

Key advantages of FPGAs over CPUs and GPUs include:

- Support for arbitrary data precision, allowing low‑precision arithmetic that boosts throughput.

- Power envelopes five to ten times lower than comparable CPU or GPU solutions for identical workloads.

- Reconfigurability: the same device can be updated to support new models without fabricating new silicon.

- Device scalability: from high‑end data‑center cards to compact edge modules, FPGAs fit a broad range of deployment scenarios.
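The first advantage in the list above, low‑precision arithmetic, is easy to illustrate. The following is a minimal sketch of symmetric linear quantization in plain Python, assuming 8‑bit signed integers. It is a generic textbook scheme, not Mipsology's or Xilinx's method, and the function names and sample values are made up for illustration. The point is that the inner loop uses only small integer multiplications, which cost far less hardware than floating‑point ones, while the result stays close to the full‑precision answer.

```python
def quantize(values, bits=8):
    """Symmetric linear quantization of floats to signed integers.

    Returns the integer values and the scale needed to map them back
    to real numbers (real ~= integer * scale).
    """
    qmax = 2 ** (bits - 1) - 1                 # 127 for 8 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs else 1.0
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def quantized_dot(weights, activations, bits=8):
    """Dot product computed with integer multiply-accumulates only,
    then rescaled to a real number once at the end."""
    qw, scale_w = quantize(weights, bits)
    qa, scale_a = quantize(activations, bits)
    acc = sum(w * a for w, a in zip(qw, qa))   # integer-only MACs
    return acc * scale_w * scale_a


if __name__ == "__main__":
    # Made-up sample data for illustration.
    weights = [0.12, -0.5, 0.33, 0.9]
    activations = [1.0, 0.25, -0.75, 0.5]
    exact = sum(w * a for w, a in zip(weights, activations))
    approx = quantized_dot(weights, activations)
    print(f"full precision: {exact:.4f}")
    print(f"8-bit integers: {approx:.4f}")
```

Because an FPGA is not tied to fixed word widths, the same idea extends to other precisions by changing `bits`, at the cost of a larger approximation error as the width shrinks.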

Challenges and Trade‑Offs

Despite their strengths, FPGAs present a steep learning curve. Developing high‑performance designs requires specialized hardware‑description languages and a deep understanding of parallelism, which can be a barrier for teams accustomed to software‑centric workflows.

Nonetheless, for organizations that demand the combination of speed, flexibility, and energy efficiency, FPGAs represent the most compelling platform for deep‑learning inference today.
