ETSI Unveils First AI Security Standard Blueprint

ETSI, the European body that sets standards for telecom, broadcasting and electronic communications, has released a 24‑page report that lays the groundwork for a unified AI security standard.

The report, titled ETSI GR SAI 004 and produced by the Securing Artificial Intelligence Industry Specification Group (SAI ISG), begins by articulating the core problem: how to secure AI‑driven systems. It focuses on machine learning and examines confidentiality, integrity and availability throughout the entire ML lifecycle. The document also highlights broader issues such as bias, ethics and explainability, and catalogs common attack vectors with real‑world examples.

To frame the discussion, the SAI ISG defines artificial intelligence as a system’s ability to process explicit or implicit representations and perform tasks that would be deemed intelligent if carried out by a human. While this definition covers a wide spectrum, the report concentrates on the most practical subset—machine learning—driven by advances in deep learning, abundant data and growing computational power.

Common ML paradigms covered include:

- Supervised learning – all training data is labelled, allowing the model to learn a mapping from inputs to outputs.

- Semi‑supervised learning – only a portion of the data is labelled, enabling the model to improve by leveraging both labelled and unlabelled samples.

- Unsupervised learning – the model discovers structure in unlabelled data, such as clusters or groupings.

- Reinforcement learning – agents learn a policy through interaction with an environment, optimizing a reward signal.

Deep neural networks, whose layered structure is loosely inspired by the human brain, dominate the landscape. The report also explores training techniques such as adversarial learning, in which the training dataset contains both genuine samples and deliberately crafted adversarial ones.
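To make the idea of a deliberately crafted adversarial sample concrete, here is a minimal sketch. It assumes a hand-set two-feature linear classifier (the weights, the input and the `epsilon` budget are all hypothetical, not taken from the ETSI report) and nudges each feature in the direction that most reduces the score for the current label. The perturbed sample is then added to the training set with the original label, which is the basic move in adversarial training.

```python
# Illustrative only: a hand-set linear classifier, not a model from the ETSI report.
WEIGHTS = [2.0, -1.0]
BIAS = 0.0


def score(x):
    """Linear decision score; a positive value means class 1."""
    return sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS


def predict(x):
    return 1 if score(x) > 0 else 0


def sign(v):
    return (v > 0) - (v < 0)


def craft_adversarial(x, true_label, epsilon):
    """Shift each feature by at most epsilon in the direction that pushes
    the score away from the true label. For a linear model the gradient of
    the score with respect to the input is just the weight vector."""
    direction = -1 if true_label == 1 else 1
    return [xi + direction * epsilon * sign(w) for xi, w in zip(x, WEIGHTS)]


genuine = [1.0, 0.5]
label = predict(genuine)  # the model's answer on the clean input
adversarial = craft_adversarial(genuine, label, epsilon=0.6)

print(predict(genuine), predict(adversarial))  # the prediction flips

# Adversarial training keeps the original label on the perturbed sample.
training_set = [(genuine, label), (adversarial, label)]
```

Real attacks on deep networks follow the same pattern but compute the gradient numerically through the network, and usually need a far smaller perturbation than this toy example.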

"There are a lot of discussions around AI ethics but none on standards around securing AI. Yet, they are becoming critical to ensure security of AI‑based automated networks," says Alex Leadbeater, Chair of the ETSI SAI ISG. "This first report is meant to provide a comprehensive definition of the challenges faced when securing AI."

Leadbeater told embedded.com, "A reasonable estimate for the technical specifications is another 12 months. We will publish additional reports in the coming quarters: AI Threat Ontology, Data Supply Chain Report, and the SAI Mitigation Strategy report. The first security‑testing specification should appear by the end of Q2/Q3."

Report outline

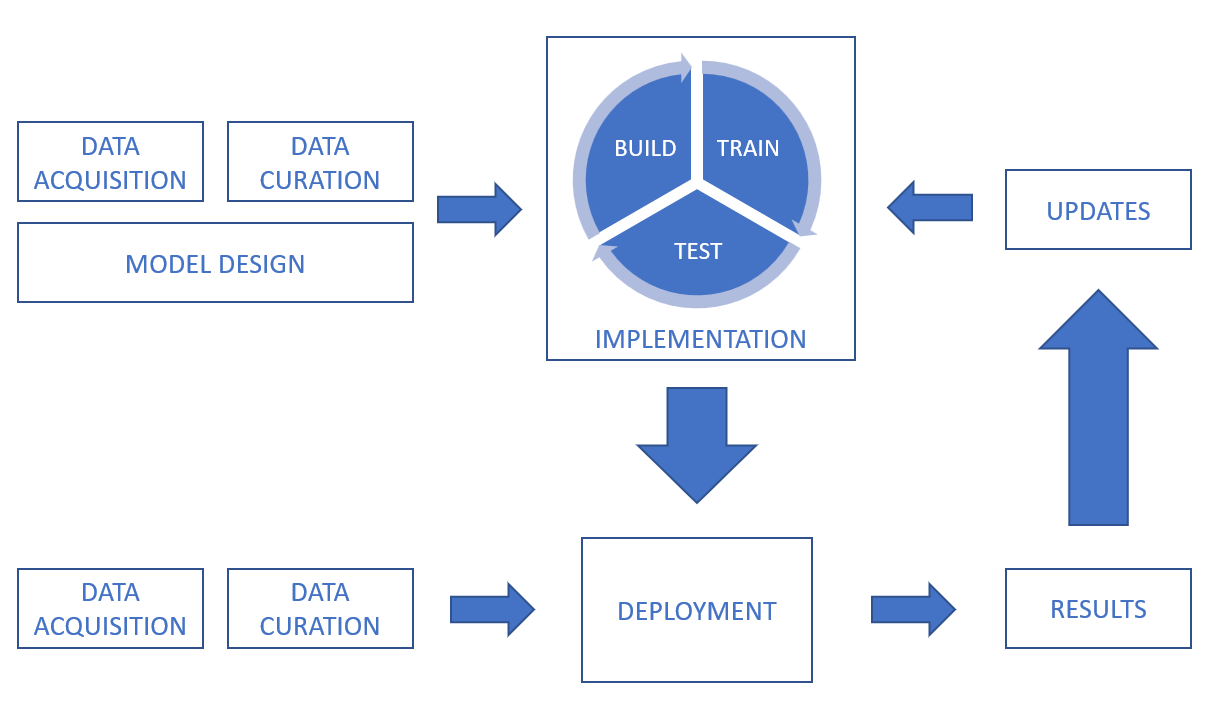

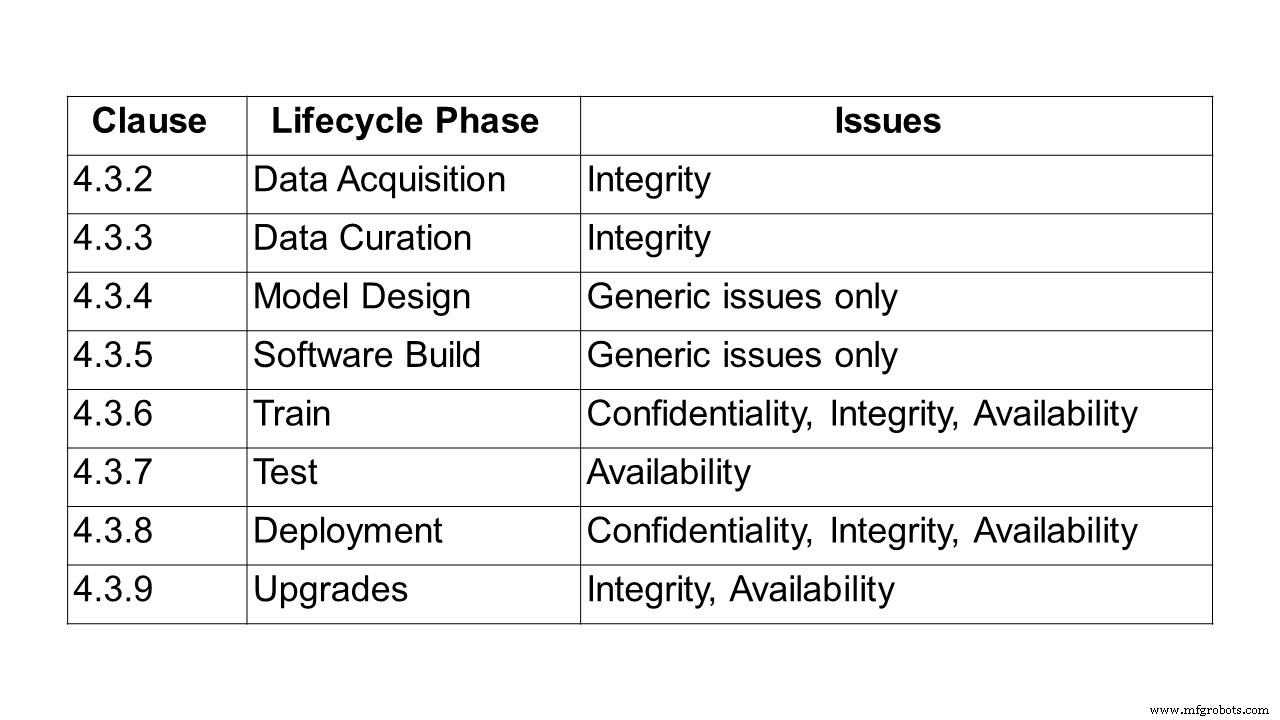

After defining AI and ML, the report dives into the data‑processing chain, addressing confidentiality, integrity and availability at every stage—from data acquisition, curation, model design and software build, through training, testing, deployment, inference and upgrades.

Data can come from sensors (e.g., CCTV, mobile phones, medical devices) or digital assets (trading platforms, documents, log files). It may be text, images, video or audio, and can be structured or unstructured. Security must cover both the data itself and its transmission and storage.

For example, during data curation, the integrity of datasets must be preserved when repairing, augmenting or converting them. In supervised ML, accurate and complete labelling is critical; poisoning attacks that corrupt labels can severely degrade model performance. Bias remains a key concern, and techniques such as data augmentation must be scrutinised for potential integrity impacts.
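One way to picture the integrity requirement during curation is a fingerprint manifest taken before any repair or conversion step, then checked afterwards. The sketch below is an assumption-laden illustration, not a method described in the report: the record layout, the `id` field and the helper names are hypothetical, and it uses only Python's standard `hashlib` and `json` modules.

```python
import hashlib
import json


def fingerprint(record):
    """SHA-256 over a canonical JSON encoding of one labelled record."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def build_manifest(dataset):
    """Map each record id to its fingerprint, taken before curation starts."""
    return {rec["id"]: fingerprint(rec) for rec in dataset}


def changed_records(dataset, manifest):
    """Return ids whose content no longer matches the manifest.
    Records that were added after the manifest was built are also reported."""
    return sorted(
        rec["id"] for rec in dataset
        if manifest.get(rec["id"]) != fingerprint(rec)
    )


# Hypothetical labelled samples.
dataset = [
    {"id": "img-001", "label": "stop_sign", "source": "camera-a"},
    {"id": "img-002", "label": "speed_limit", "source": "camera-b"},
]
manifest = build_manifest(dataset)

# Simulate a label being altered during curation.
dataset[1]["label"] = "stop_sign"
print(changed_records(dataset, manifest))  # ['img-002']
```

A check like this only flags that a record changed; deciding whether the change was a legitimate repair or a poisoning attempt still needs a review step, and the manifest itself has to be stored somewhere the curation pipeline cannot overwrite. This sketch also does not detect deleted records.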

Design challenges around bias, data ethics and explainability are also examined. Bias can emerge not only during training but also after deployment, as illustrated by a chatbot launched in 2016 that began tweeting offensive content after it was maliciously manipulated by users. Although bias itself may not be a security flaw, it undermines user trust and can lead to functional failures.

Ethical considerations are highlighted through examples like autonomous vehicles and healthcare. A 2018 incident in Tempe, Arizona, where a self‑driving car struck a pedestrian, underscored legal and ethical dilemmas. MIT’s Moral Machine project, launched in 2016, explores how humans respond to algorithmic moral choices, informing how machines should act.

The report stresses that ethical concerns, while distinct from traditional security attributes (confidentiality, integrity, availability), shape user perception of trustworthiness and must be embedded in AI design.

Finally, the report catalogs attack types—including poisoning, backdoor and reverse‑engineering attacks—and presents real‑world use cases and incidents.

A poisoning attack manipulates the model during training so that it behaves in a way the attacker desires. Common vectors include:

- Data poisoning – injecting incorrect or mislabelled data during collection or curation.

- Algorithm poisoning – tampering with the learning algorithm itself, such as manipulating federated‑learning contributions.

- Model poisoning – replacing the deployed model with a malicious variant.
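The data-poisoning vector can be illustrated with a toy experiment. The sketch below assumes a one-dimensional nearest-centroid classifier and made-up data (none of it comes from the report): five mislabelled points injected into the training set are enough to drag one class centroid away and halve accuracy on a small held-out set.

```python
def centroid(values):
    return sum(values) / len(values)


def train(samples):
    """Nearest-centroid classifier on 1-D data: one mean per label."""
    by_label = {}
    for value, label in samples:
        by_label.setdefault(label, []).append(value)
    return {label: centroid(vals) for label, vals in by_label.items()}


def predict(model, value):
    """Pick the label whose centroid is closest to the value."""
    return min(model, key=lambda label: abs(value - model[label]))


def accuracy(model, samples):
    hits = sum(predict(model, v) == y for v, y in samples)
    return hits / len(samples)


# Clean training data: class 0 around 0.0-0.9, class 1 around 2.0-2.9.
clean = [(i / 10, 0) for i in range(10)] + [(2 + i / 10, 1) for i in range(10)]
held_out = [(0.5, 0), (1.0, 0), (2.0, 1), (2.5, 1)]

# Attacker injects five far-away points mislabelled as class 0.
poison = [(10.0, 0)] * 5

clean_acc = accuracy(train(clean), held_out)
poisoned_acc = accuracy(train(clean + poison), held_out)
print(clean_acc, poisoned_acc)  # 1.0 0.5
```

Production models are less fragile than a two-centroid classifier, but the mechanism is the same: the attacker never touches the model or the algorithm, only the data it learns from.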

The report also traces the evolution of AI, from its origins at the Dartmouth workshop in the 1950s to modern applications, and describes real‑world threats such as ad‑blocker attacks, malware obfuscation, deepfakes and chatbot impersonation.

What’s next? Ongoing reports as part of this ISG

Future work items will deepen the investigation into several critical areas:

Security testing

This item aims to define objectives, methods and techniques for testing AI components. Guidelines will consider symbolic versus subsymbolic AI, non‑determinism, the test oracle problem and data‑driven behaviour. Topics include:

- Security testing approaches for AI

- Security‑focused test data for AI

- Test oracles tailored to AI outputs

- Adequacy criteria for AI security testing

- Security attribute test goals for AI

Results will build on the forthcoming AI Threat Ontology to address relevant threats.
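The test oracle problem mentioned above arises because, for many AI components, nobody can say in advance what the correct output for an arbitrary input is. One commonly used workaround, shown here purely as an illustration and not as something the ISG has specified, is metamorphic testing: instead of checking an output against a known answer, the test checks a relation between outputs. In this sketch the relation is stability under small input changes; `classify` is a hypothetical stand-in for the component under test, and the inputs and `delta` are made up.

```python
def classify(features):
    """Stand-in for the AI component under test (hypothetical)."""
    return 1 if 2.0 * features[0] - 1.0 * features[1] > 0 else 0


def perturbations(features, delta):
    """Yield copies of the input with one feature nudged by +/- delta."""
    for i in range(len(features)):
        for step in (-delta, delta):
            nudged = list(features)
            nudged[i] += step
            yield nudged


def violates_stability(features, delta):
    """Metamorphic relation: a tiny input change should not flip the output.
    No ground-truth label is needed, only the model's own answers."""
    baseline = classify(features)
    return [p for p in perturbations(features, delta) if classify(p) != baseline]


robust_input = [1.0, 0.5]    # far from the decision boundary
fragile_input = [0.26, 0.5]  # very close to the decision boundary

print(len(violates_stability(robust_input, 0.05)))   # 0
print(len(violates_stability(fragile_input, 0.05)))  # 2
```

Inputs that violate the relation are candidates for closer inspection: they mark regions where a small, possibly adversarial, change is enough to alter the component's decision.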

AI threat ontology

This deliverable will create a shared taxonomy of AI‑specific threats, differentiating them from traditional system threats. It will align terminology across stakeholders and industries, covering attackers, defenders and AI systems.

Data supply chain report

Because training data is the lifeblood of AI, securing its supply chain is paramount. This report will survey current data sourcing practices, regulatory frameworks, standards and protocols, and perform a gap analysis to propose requirements for traceability, integrity and confidentiality of training data.

SAI mitigation strategy

Summarising existing and potential mitigations, this report will provide guidelines for defending AI systems against identified threats, outlining security capabilities, challenges and limitations for specific use cases.

The role of hardware in AI security

Hardware—both specialised and general‑purpose—plays a pivotal role in AI protection. The report will catalog hardware mitigations, requirements for secure AI, and opportunities to leverage AI for hardware defence, while noting hardware vulnerabilities that could amplify AI attacks.

The full ETSI report defining the problem statement for securing AI is available here.
