Streamlining Visual SLAM Development for Embedded Systems

Simultaneous Localization and Mapping (SLAM) enables a device—whether a robot, drone, or autonomous vehicle—to build a real‑time map of its surroundings while simultaneously determining its position within that map. SLAM can be implemented with a variety of algorithms and sensors, from cameras and LiDAR to sonar, radar, and inertial measurement units (IMUs).

Low‑cost, compact cameras have made monocular visual SLAM a popular choice for many robots, from Mars rovers and agricultural field machines to delivery drones and urban autonomous platforms. Visual SLAM is especially useful in GPS‑denied environments, such as indoor warehouses or densely built city centers where satellite signals are blocked or degraded.

This article explains the core visual SLAM workflow, covering the key modules and algorithms for feature detection, matching, and error correction. It also discusses the benefits of offloading SLAM computation to dedicated vision processing units (VPUs) and illustrates these advantages with the CEVA‑SLAM SDK.

Direct vs. Feature‑Based SLAM

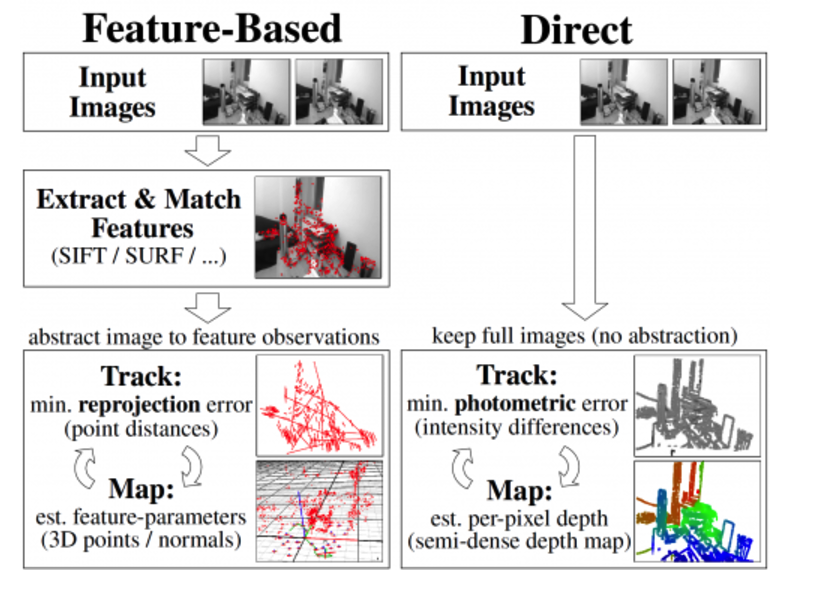

All visual SLAM systems track points across consecutive camera frames, triangulating their 3D positions while estimating the camera’s pose. They also continuously minimise reprojection error—the difference between projected and actual feature locations. The main distinction lies in how the image information is used.
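To make the reprojection error concrete, here is a minimal sketch that assumes an ideal pinhole camera with no lens distortion. The intrinsics (`fx`, `fy`, `cx`, `cy`), the landmark, and the observed pixel are made-up illustrative values, not taken from any particular system.

```python
import math

def project(point_cam, fx, fy, cx, cy):
    """Project a 3D point (camera coordinates) to pixel coordinates with a pinhole model."""
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point must lie in front of the camera")
    return (fx * x / z + cx, fy * y / z + cy)

def reprojection_error(point_cam, observed_px, fx, fy, cx, cy):
    """Euclidean pixel distance between the projected point and where the feature was observed."""
    u, v = project(point_cam, fx, fy, cx, cy)
    return math.hypot(u - observed_px[0], v - observed_px[1])

# Hypothetical intrinsics and measurements, for illustration only.
fx = fy = 500.0
cx, cy = 320.0, 240.0
landmark = (0.2, -0.1, 2.0)   # estimated 3D position, metres, camera frame
observed = (371.0, 214.0)     # where the feature was actually detected, pixels

err = reprojection_error(landmark, observed, fx, fy, cx, cy)
print(f"reprojection error: {err:.3f} px")
```

A SLAM back end adjusts camera poses and landmark positions so that the sum of such errors over all observations becomes as small as possible.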

Direct SLAM compares entire images, providing rich environmental detail but at the cost of higher processing load. Feature‑based SLAM, which is the focus of this piece, extracts distinct image features—corners, blobs, and other salient points—and relies solely on these for pose estimation. Although feature‑based methods discard some image data, they offer a simpler, faster, and more power‑efficient solution, especially for embedded hardware.

Figure 1: Direct vs. Feature‑Based SLAM. (Source: https://vision.in.tum.de/research/vslam/lsdslam)

The Visual SLAM Workflow

Feature‑based SLAM proceeds through four essential stages: 1) feature extraction, 2) feature description, 3) feature matching, and 4) loop closure. These stages are built on a set of well‑established building blocks that can be adapted to a range of SLAM implementations.

Feature Extraction

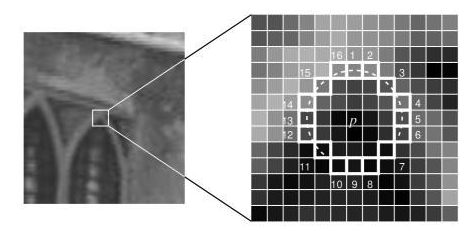

Feature extraction reduces an image to a concise set of salient points, such as corners, edges, and blobs, and sometimes larger structures such as doorways or windows, so that later stages work on a compact representation rather than the full frame. Popular detectors include Difference of Gaussians (DoG) and FAST9, a corner detector fast enough for real‑time use.

Figure 2: SLAM Feature Extraction. (Source: https://medium.com/towards-artificial-intelligence/oriented-fast-and-rotated-brief-orb-1da5b2840768)
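As an illustration of how a FAST9-style detector decides whether a pixel is a corner, the sketch below applies the segment test: a pixel qualifies if at least 9 contiguous pixels on a 16-pixel ring around it are all brighter or all darker than the centre by more than a threshold. The threshold value, the synthetic test image, and the function names are assumptions made for this example. Production detectors add a high-speed pre-test and non-maximum suppression, both omitted here.

```python
# Offsets (dx, dy) of the 16 pixels on a radius-3 ring, listed in ring order.
CIRCLE = [(0, -3), (1, -3), (2, -2), (3, -1), (3, 0), (3, 1), (2, 2), (1, 3),
          (0, 3), (-1, 3), (-2, 2), (-3, 1), (-3, 0), (-3, -1), (-2, -2), (-1, -3)]

def is_fast_corner(img, x, y, threshold=20, n=9):
    """Segment test: n contiguous ring pixels all brighter or all darker than the centre."""
    centre = img[y][x]
    ring = [img[y + dy][x + dx] for dx, dy in CIRCLE]
    for sign in (1, -1):  # +1 tests "brighter", -1 tests "darker"
        flags = [sign * (value - centre) > threshold for value in ring]
        run = 0
        for flag in flags + flags:  # doubled list handles runs that wrap around the ring
            run = run + 1 if flag else 0
            if run >= n:
                return True
    return False

def detect_corners(img, threshold=20):
    """Return (x, y) for every pixel passing the segment test, skipping a 3-pixel border."""
    height, width = len(img), len(img[0])
    return [(x, y)
            for y in range(3, height - 3)
            for x in range(3, width - 3)
            if is_fast_corner(img, x, y, threshold)]

def make_test_image(size=12, start=5, dark=10, bright=200):
    """Synthetic image: dark background with a bright square whose corner sits at (start, start)."""
    return [[bright if (x >= start and y >= start) else dark for x in range(size)]
            for y in range(size)]

corners = detect_corners(make_test_image())
print(corners)
```

On the synthetic image the test fires at the square's corner and at a few neighbouring pixels, but not along the straight edges or in flat regions, which is why real pipelines follow it with non-maximum suppression.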

Feature Description

Once a feature is located, the surrounding pixel patch is converted into a descriptor—a compact representation that can be compared against other descriptors. ORB and FREAK are widely used descriptor algorithms, balancing distinctiveness with computational efficiency.
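The idea of turning a pixel patch into a comparable descriptor can be sketched with a plain BRIEF-style binary descriptor, the building block that ORB extends with orientation compensation and a learned sampling pattern. The random sampling pattern, the 256-bit length, the patch radius, and the synthetic image below are assumptions for illustration. This is not the ORB or FREAK algorithm itself.

```python
import random

def make_sampling_pattern(n_bits=256, patch_radius=8, seed=0):
    """Fixed list of pixel-pair offsets inside the patch; the same pattern is used for every keypoint."""
    rng = random.Random(seed)
    r = patch_radius
    return [((rng.randint(-r, r), rng.randint(-r, r)),
             (rng.randint(-r, r), rng.randint(-r, r)))
            for _ in range(n_bits)]

def describe(img, x, y, pattern):
    """Build a binary descriptor: bit i is 1 if the first pixel of pair i is darker than the second."""
    bits = 0
    for i, ((ax, ay), (bx, by)) in enumerate(pattern):
        if img[y + ay][x + ax] < img[y + by][x + bx]:
            bits |= 1 << i
    return bits

# Hypothetical 32x32 test image with some intensity variation.
image = [[(x * 7 + y * 13) % 256 for x in range(32)] for y in range(32)]
# The same image with a uniform brightness offset (no clipping, for simplicity).
brighter = [[value + 10 for value in row] for row in image]

pattern = make_sampling_pattern()
d1 = describe(image, 16, 16, pattern)
d2 = describe(brighter, 16, 16, pattern)
print(d1 == d2)
```

Because each bit only records which of two pixels is darker, a uniform brightness change leaves the descriptor unchanged, and the result packs into a bit-vector that is cheap to store and compare.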

Feature Matching

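Descriptors from successive frames are matched by computing the Hamming distance between their bit‑vectors, that is, the number of bit positions in which two descriptors differ. The pair with the smallest distance is the most likely match. This metric can be evaluated efficiently in hardware using XOR and pop‑count operations, enabling rapid matching even on resource‑constrained devices.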
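The XOR and pop-count formulation can be sketched in a few lines. The brute-force nearest-neighbour search, the `max_distance` cut-off, and the 8-bit toy descriptors below are assumptions chosen to keep the example short. Real descriptors are typically 256 bits or more.

```python
def hamming(a, b):
    """Hamming distance between two bit-vectors stored as ints: XOR, then count the set bits."""
    return bin(a ^ b).count("1")

def match(desc_prev, desc_curr, max_distance=64):
    """For each descriptor in the previous frame, find its nearest neighbour in the current frame.

    Returns (index_prev, index_curr, distance) tuples; pairs farther apart than max_distance are dropped.
    """
    if not desc_curr:
        return []
    matches = []
    for i, d_prev in enumerate(desc_prev):
        best_j, best_dist = min(
            ((j, hamming(d_prev, d_curr)) for j, d_curr in enumerate(desc_curr)),
            key=lambda pair: pair[1],
        )
        if best_dist <= max_distance:
            matches.append((i, best_j, best_dist))
    return matches

# Toy 8-bit descriptors, for illustration only.
prev_frame = [0b10110010, 0b00001111]
curr_frame = [0b00001110, 0b10110011, 0b11110000]

print(match(prev_frame, curr_frame, max_distance=2))
# -> [(0, 1, 1), (1, 0, 1)]
```

The inner loop is exactly the part that a dedicated Hamming-distance instruction would accelerate, since each comparison reduces to one XOR followed by a bit count.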

Loop Closure

Loop closure detects when the system revisits a previously seen location. By introducing a constraint between non‑adjacent frames, it lets the system correct the drift that accumulates along the trajectory. Widely used techniques for computing that correction include bundle adjustment, Kalman filtering, and particle filtering.
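To show the effect of a loop-closure constraint on drift, the sketch below takes a 2D trajectory whose last pose should coincide with its first and spreads the residual error linearly along the loop. This linear spreading is a deliberately crude stand-in for bundle adjustment or filtering, chosen only to keep the example short. The trajectory values and the function name `close_loop` are hypothetical.

```python
def close_loop(poses, loop_start, loop_end):
    """Apply the constraint 'poses[loop_end] coincides with poses[loop_start]'.

    The residual between the two poses is distributed linearly over the poses in
    between. Poses after loop_end are shifted by the full residual.
    """
    span = loop_end - loop_start
    if span <= 0:
        raise ValueError("loop_end must come after loop_start")
    err_x = poses[loop_end][0] - poses[loop_start][0]
    err_y = poses[loop_end][1] - poses[loop_start][1]
    corrected = []
    for i, (x, y) in enumerate(poses):
        if i < loop_start:
            weight = 0.0
        elif i <= loop_end:
            weight = (i - loop_start) / span
        else:
            weight = 1.0
        corrected.append((x - weight * err_x, y - weight * err_y))
    return corrected

# Hypothetical odometry for a square path that should end where it began.
drifted = [(0.0, 0.0), (1.0, 0.1), (1.1, 1.2), (0.15, 1.3), (0.2, 0.4)]
corrected = close_loop(drifted, loop_start=0, loop_end=4)
for before, after in zip(drifted, corrected):
    print(before, "->", after)
```

After correction the final pose lands back on the starting pose, and the intermediate poses each absorb a share of the accumulated error instead of leaving it all at the end of the path.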

Feature‑based SLAM is especially well suited for embedded solutions, delivering faster processing, lower memory usage, and robust performance across variable lighting, motion blur, and occlusions. The specific algorithm choice depends on the application’s map type, sensor suite, accuracy requirements, and other constraints.

Implementation Challenges

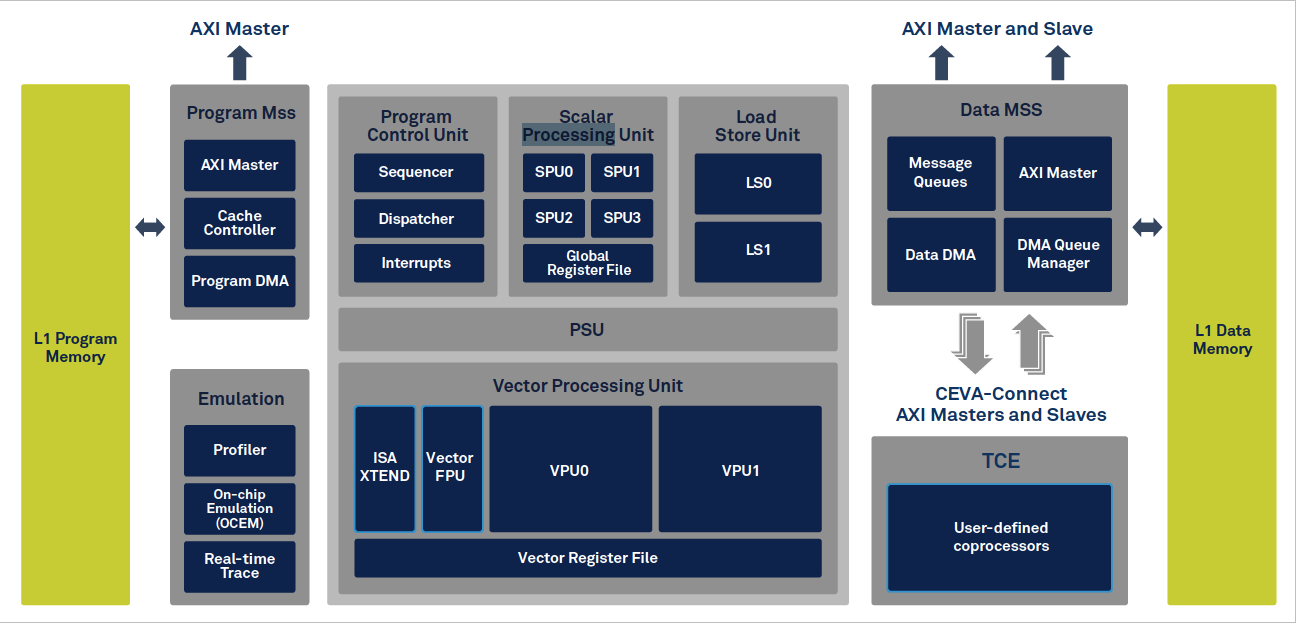

Visual SLAM’s computational intensity can overwhelm conventional CPUs, driving up power consumption and limiting frame rates. Dedicated VPUs, such as the CEVA‑XM6, provide the specialised architecture required to accelerate vision tasks, offering low power consumption, strong ALUs, high‑throughput memory access, and dedicated instructions for image processing.

Figure 3: CEVA XM6 Vision Processing Unit. (Source: CEVA)

Even with a VPU, developers face challenges: writing efficient code for feature matching, bundle adjustment, and other linear‑algebra‑heavy operations; managing memory layout to avoid costly non‑contiguous accesses; and integrating the VPU with the host CPU. High‑level libraries and optimized routines are essential to unlock the VPU’s full potential.

Accelerating Development with CEVA‑SLAM SDK

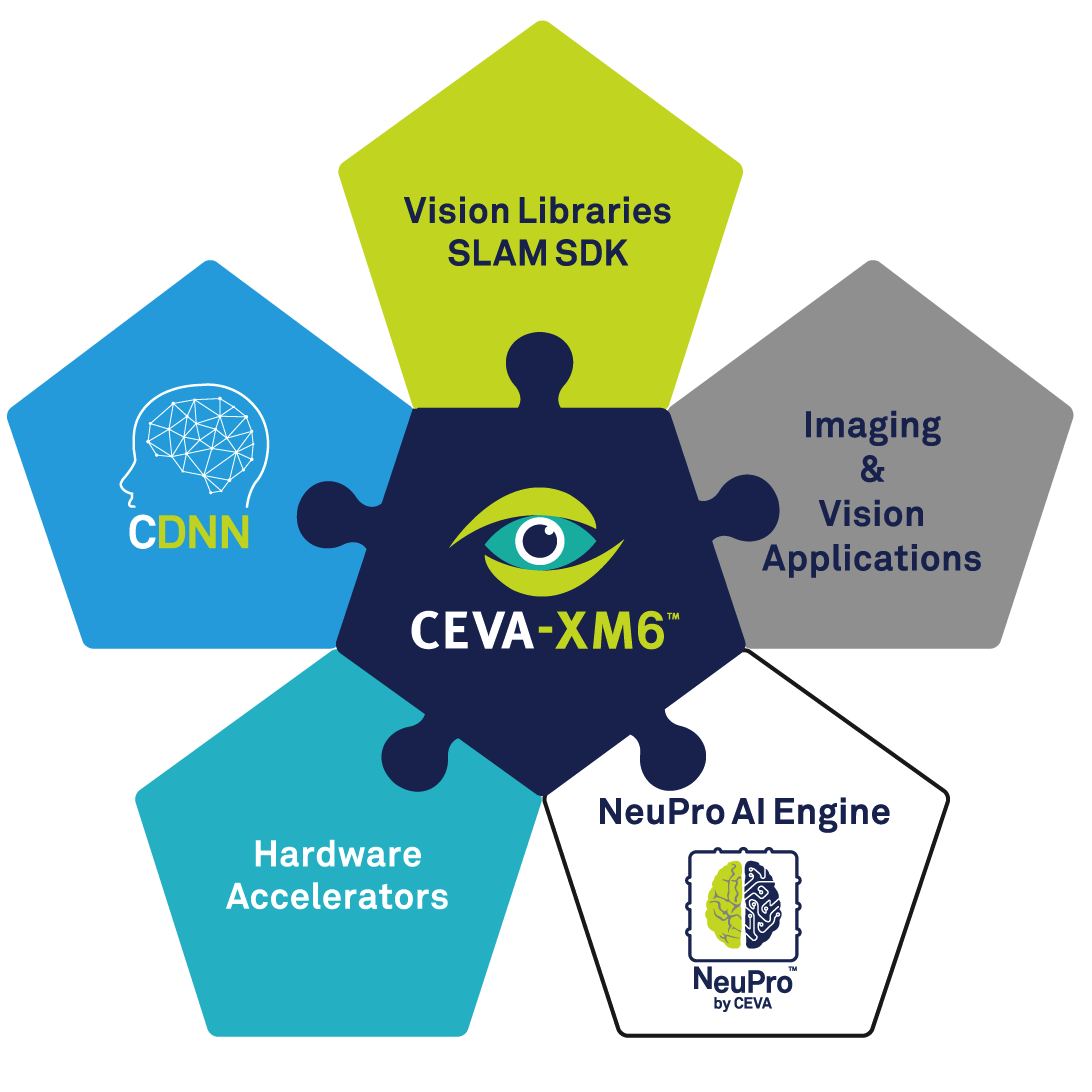

The CEVA‑SLAM SDK is a comprehensive toolchain that marries CEVA‑XM6 hardware with a rich set of vision libraries. It supplies pre‑optimised routines for feature detection, descriptor extraction, matching, bundle adjustment, and linear‑algebra operations. A unique parallel‑load instruction tackles non‑consecutive memory access, while a dedicated Hamming‑distance instruction accelerates matching.

Figure 4: The CEVA SLAM SDK. (Source: CEVA)

In a benchmark implementation, the SDK achieved 60 fps SLAM tracking while consuming only 86 mW—a performance level that would be difficult to reach on a general‑purpose CPU.
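For a sense of scale, the two benchmark figures can be combined into a per-frame time budget and energy cost. The short calculation below assumes that 86 mW is the average power drawn while tracking continuously at 60 fps, which the benchmark description does not state explicitly.

```python
power_mw = 86.0   # reported power consumption, milliwatts
fps = 60.0        # reported tracking rate, frames per second

frame_budget_ms = 1000.0 / fps         # time available per frame, about 16.67 ms
energy_per_frame_mj = power_mw / fps   # mW divided by frames/s gives mJ per frame, about 1.43 mJ

print(f"{frame_budget_ms:.2f} ms per frame, {energy_per_frame_mj:.2f} mJ per frame")
```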

Conclusion

Visual SLAM is rapidly becoming a cornerstone technology for autonomous robots, drones, and smart vehicles. As embedded deployments grow, coding efficiency and power consumption become decisive factors. While VPUs offload heavy vision workloads, developing high‑performance, low‑power SLAM remains non‑trivial. By leveraging dedicated toolkits such as the CEVA‑SLAM SDK, developers can accelerate time‑to‑market and deliver reliable, energy‑efficient SLAM solutions.
