Addressing Training Bias in AI: IBM’s AI Fairness 360 Toolkit

Fairness is a cornerstone of responsible artificial intelligence. Yet defining and enforcing it remain complex challenges, especially when the technology is used in high‑stakes domains such as healthcare, hiring, and autonomous vehicles.

IBM’s New Approach

IBM Research is launching AI Fairness 360, a cloud‑based bias detection and mitigation service that enables organizations to test and verify the fairness of their AI systems. The toolkit is built on open‑source metrics and algorithms that measure bias across datasets and models.

The Fairness Gap in Current AI Practices

According to Saska Mojsilovic, a Fellow at IBM Research, the industry has traditionally prioritized accuracy over fairness. She notes that the first question most people ask about AI is, “Can machines beat humans?” Mojsilovic argues that the more pressing question is, “What about fairness?” She warns that unchecked bias can lead to catastrophic outcomes in critical sectors.

Decomposing Fairness

To make the concept actionable, IBM’s team began by breaking down fairness into measurable components. Kush Varshney, a Research Scientist at IBM, explained that they examined fairness at both the individual and group levels, considering attributes such as gender and race, as well as legal and regulatory implications. The result was a set of 30 distinct metrics that evaluate bias in data, models, and decision‑making processes.
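To make the idea of a group-level metric concrete, here is a minimal sketch of two widely used measures: statistical parity difference and disparate impact. The article does not say which metrics are among the 30, so the choice of these two, the function names, and the toy data are all assumptions for illustration. This is not the toolkit's own code.

```python
from collections import defaultdict

def favorable_rates(groups, outcomes):
    """Return the share of favorable outcomes (1) for each group."""
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, outcome in zip(groups, outcomes):
        totals[group] += 1
        favorable[group] += outcome
    return {group: favorable[group] / totals[group] for group in totals}

def statistical_parity_difference(groups, outcomes, unprivileged, privileged):
    """Favorable rate of the unprivileged group minus that of the privileged group.
    A value of 0 means both groups receive favorable outcomes at the same rate."""
    rates = favorable_rates(groups, outcomes)
    return rates[unprivileged] - rates[privileged]

def disparate_impact(groups, outcomes, unprivileged, privileged):
    """Ratio of the two favorable rates; 1.0 indicates parity.
    Raises ZeroDivisionError if the privileged group has no favorable outcomes."""
    rates = favorable_rates(groups, outcomes)
    return rates[unprivileged] / rates[privileged]

# Hypothetical toy data: a protected attribute and a binary decision (1 = favorable).
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
outcomes = [1, 1, 1, 0, 1, 0, 0, 0]

spd = statistical_parity_difference(groups, outcomes, unprivileged="b", privileged="a")
di = disparate_impact(groups, outcomes, unprivileged="b", privileged="a")
print(f"statistical parity difference: {spd:.2f}")
print(f"disparate impact: {di:.2f}")
```

In this toy data, group "a" receives a favorable decision three times out of four and group "b" once out of four, so both measures flag a gap. Individual-level fairness, which the team also examined, asks a different question (whether similar individuals are treated similarly) and is not covered by this sketch.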

Measuring and Mitigating Bias

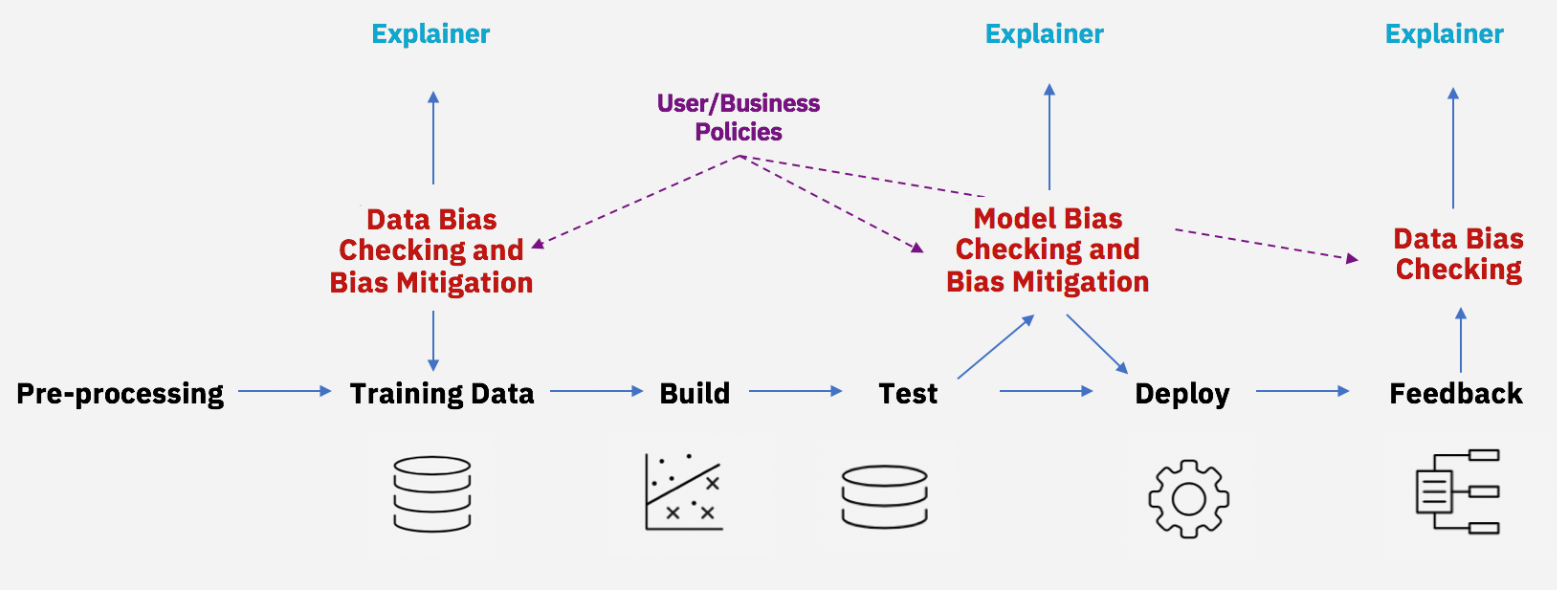

The AI Fairness 360 toolkit incorporates these metrics and offers algorithms not only to detect bias but also to debias models. The goal is to provide developers with actionable insights that can be applied throughout the AI lifecycle.

Mitigating bias throughout the AI lifecycle (Source: IBM)
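As an illustration of what a debiasing step can look like, the sketch below applies reweighing, a well-known pre-processing technique that assigns each training example a weight so that group membership and label become statistically independent in the weighted data. The article does not name specific algorithms, so picking reweighing is an assumption. The function names and toy data are also hypothetical, and this is not the toolkit's implementation.

```python
from collections import Counter

def reweighing_weights(groups, labels):
    """Weight for each (group, label) pair: expected count under independence
    divided by the observed count, i.e. P(group) * P(label) / P(group, label)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return {
        (g, y): (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for (g, y) in joint_counts
    }

def weighted_favorable_rate(groups, labels, sample_weights, group):
    """Weighted share of favorable labels (1) within one group."""
    total = sum(w for g, w in zip(groups, sample_weights) if g == group)
    favorable = sum(
        w for g, y, w in zip(groups, labels, sample_weights) if g == group and y == 1
    )
    return favorable / total

# Hypothetical toy training data: protected attribute and binary label.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
sample_weights = [weights[(g, y)] for g, y in zip(groups, labels)]

rate_a = weighted_favorable_rate(groups, labels, sample_weights, "a")
rate_b = weighted_favorable_rate(groups, labels, sample_weights, "b")
print(f"weighted favorable rate, group a: {rate_a:.2f}")
print(f"weighted favorable rate, group b: {rate_b:.2f}")
```

The resulting per-example weights can be passed to any learner that accepts sample weights. Under-represented combinations, such as a favorable label in group "b", are weighted up, and over-represented ones are weighted down, so the weighted favorable rates of the two groups match before a model is trained.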

Interdisciplinary Collaboration

Recognizing that fairness transcends computer science, IBM has engaged scholars from ethics, law, and social science. Varshney has presented at the Uehiro‑Carnegie‑Oxford Ethics Conference, while Mojsilovic participated in a UC Berkeley Law School AI workshop. Their collaboration ensures that the toolkit reflects both technical rigor and societal values.

Is an Algorithm Neutral?

Social scientists such as Young Mie Kim of the University of Wisconsin–Madison point out that AI bias can arise at any stage, from data sampling to model training. In her study “Algorithmic Opportunity: Digital Advertising and Inequality of Political Involvement,” Kim demonstrates how algorithmic decisions can reinforce existing inequalities. She cautions that claiming an algorithm is “neutral” ignores the biases that become embedded during development and deployment.

By providing transparent metrics and mitigation strategies, AI Fairness 360 empowers organizations to build AI systems that are not only accurate but also equitable.
