Microcontrollers Evolve to Power AIoT

Combining AI with IoT yields the AIoT—an expanding domain where microcontrollers now host sophisticated machine learning, thanks to breakthroughs in neural‑network compression and optimization. Modern smartphone application processors routinely execute AI inference for tasks such as image analysis and recommendation engines, demonstrating that high‑performance ML is no longer confined to supercomputers.

An ecosystem of billions of IoT devices will get machine learning capabilities in the next couple of years (Image: NXP)

Deploying such intelligence on low‑power microcontrollers unlocks transformative use cases: AI‑driven hearing aids that suppress ambient noise, smart appliances that identify users and apply personalized profiles, or sensor nodes that operate for years on microwatt‑level power budgets. Because data is processed on the device rather than sent to the cloud, edge inference reduces latency, enhances data security, and preserves user privacy.

Yet, embedding ML on microcontrollers remains challenging. Limited SRAM and flash restrict the size of neural models, and computational throughput is modest. Rapid advances in model quantization and pruning, coupled with vendor‑specific tooling, are steadily narrowing this gap.
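To make the quantization and pruning ideas concrete, here is a minimal sketch in plain Python. It assumes symmetric per-tensor 8-bit quantization and simple magnitude pruning, which are common schemes but not necessarily what any vendor tool named in this article uses. The function names `quantize_int8`, `dequantize` and `prune_by_magnitude` are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: floats -> int8 codes plus one scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # A single scale maps the largest magnitude onto 127.
    # Guard against an empty or all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # round() picks the nearest integer code; clamp to the int8 range.
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from int8 codes."""
    return [c * scale for c in codes]


def prune_by_magnitude(weights: List[float], threshold: float) -> List[float]:
    """Zero out weights whose magnitude falls below the threshold."""
    return [w if abs(w) >= threshold else 0.0 for w in weights]


if __name__ == "__main__":
    weights = [0.82, -0.41, 0.003, -1.27, 0.55]  # made-up example values
    codes, scale = quantize_int8(weights)
    print("int8 codes:", codes)
    print("restored:  ", dequantize(codes, scale))
    print("pruned:    ", prune_by_magnitude(weights, 0.01))
```

Storing each weight as a one-byte code instead of a four-byte float cuts weight storage by roughly 4×, at the cost of a rounding error of at most half the scale per weight. Pruned zeros only save memory if the model is then stored in a sparse or compressed form.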

TinyML Gains Momentum

The industry’s rapid expansion is reflected in the TinyML Summit, a Silicon Valley event that attracted 27 sponsors this year compared to 11 the previous year, with registration slots filling months in advance. Organizers also reported a surge in membership for their global, monthly design‑oriented meetups.

“We envision trillions of intelligent devices powered by TinyML, each sensing, analysing and autonomously acting to create healthier, more sustainable environments,” said Qualcomm’s Evgeni Gousev, co‑chair of the TinyML Committee, during the opening keynote.

He credited this momentum to more energy‑efficient hardware, refined algorithms, and mature software stacks, noting a rise in corporate and venture‑capital investment, as well as increased startup activity and M&A.

The TinyML Committee now considers the technology validated, predicting that early AI‑enabled microcontroller products will enter the market within 2–3 years, while truly transformative applications may appear in 3–5 years.

A key milestone arrived last spring when Google unveiled TensorFlow Lite for Microcontrollers, a lightweight inference engine tailored for devices with only kilobytes of RAM. The core runtime occupies just 16 KB on an Arm Cortex‑M3; with enough operators added to run a speech keyword‑detection model, the total comes to 22 KB. The library supports inference only, not training.
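A rough way to reason about these numbers is a memory budget: code and the serialized model typically sit in flash, while the working buffer for activations sits in SRAM. The sketch below is a simplified illustration under that assumption. Only the 16 KB core-runtime figure comes from the text above; the `Footprint` class, the `fits` helper and all other values are hypothetical.

```python
from dataclasses import dataclass

CORE_RUNTIME_KB = 16.0  # core runtime size quoted above for a Cortex-M3


@dataclass
class Footprint:
    kernels_kb: float  # operator kernels linked into the binary (hypothetical)
    model_kb: float    # serialized model stored as constant data (hypothetical)
    arena_kb: float    # working memory for activations (hypothetical)

    def flash_kb(self) -> float:
        # Runtime code, kernels and the model are assumed to live in flash.
        return CORE_RUNTIME_KB + self.kernels_kb + self.model_kb

    def sram_kb(self) -> float:
        # Simplification: ignores application stack, heap and other buffers.
        return self.arena_kb


def fits(fp: Footprint, flash_kb: float, sram_kb: float) -> bool:
    """Return True if the footprint fits the given flash and SRAM sizes."""
    return fp.flash_kb() <= flash_kb and fp.sram_kb() <= sram_kb


if __name__ == "__main__":
    keyword_model = Footprint(kernels_kb=6.0, model_kb=20.0, arena_kb=10.0)
    print("flash needed (KB):", keyword_model.flash_kb())
    print("fits 128 KB flash / 32 KB SRAM:", fits(keyword_model, 128.0, 32.0))
```

A real project would also reserve flash and SRAM for the application itself, so the check above is best read as a lower bound on what the device needs.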

Industry Leaders Monitor TinyML

Major microcontroller vendors closely track TinyML progress, as shrinking neural models expand their product opportunities. Most already offer ML support. For instance, STMicroelectronics supplies STM32Cube.AI, an extension pack that maps and runs neural networks on its STM32 Arm Cortex‑M family. Renesas provides the e‑AI development environment, translating models into C/C++ compatible code via its e² Studio. NXP reports customers deploying machine learning on its low‑end Kinetis and LPC MCUs, integrating AI through both hardware and software, especially within its crossover processors bridging application processors and microcontrollers.

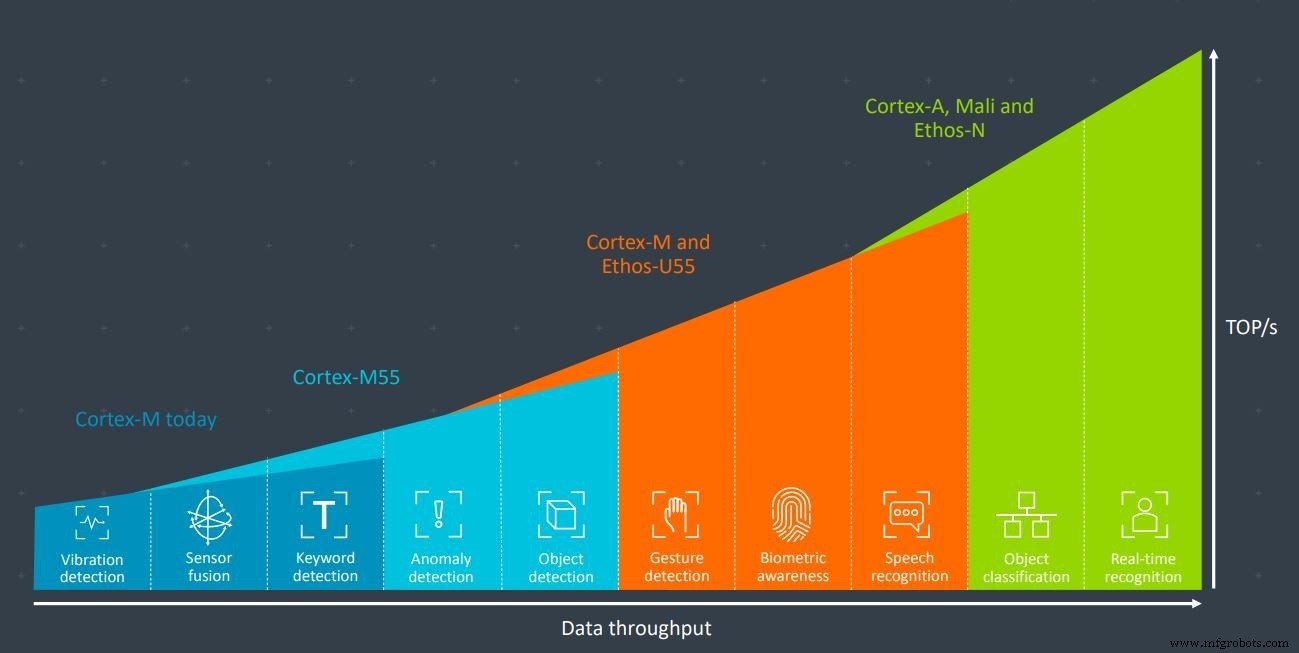

Arm‑Centric Architecture

Arm dominates the microcontroller market with its Cortex‑M series. The firm recently introduced the Cortex‑M55 core, engineered for ML workloads and paired with the Ethos‑U55 AI accelerator, both targeting ultra‑low‑power, resource‑constrained environments. Together, they deliver sufficient compute for gesture recognition, biometrics, and speech recognition.

Used in tandem, Arm’s Cortex‑M55 and Ethos‑U55 have enough processing power for applications such as gesture recognition, biometrics and speech recognition (Image: Arm)

So how can smaller firms differentiate themselves against Arm’s entrenched ecosystem?

XMOS CEO Mark Lippett quipped, “You can’t beat Arm by building another Arm‑based SoC—they excel at that. The only way is to introduce architectural advantages—both performance and flexibility.” XMOS’s Xcore.ai, a crossover processor for voice interfaces, illustrates this philosophy, though it does not directly compete with microcontrollers.

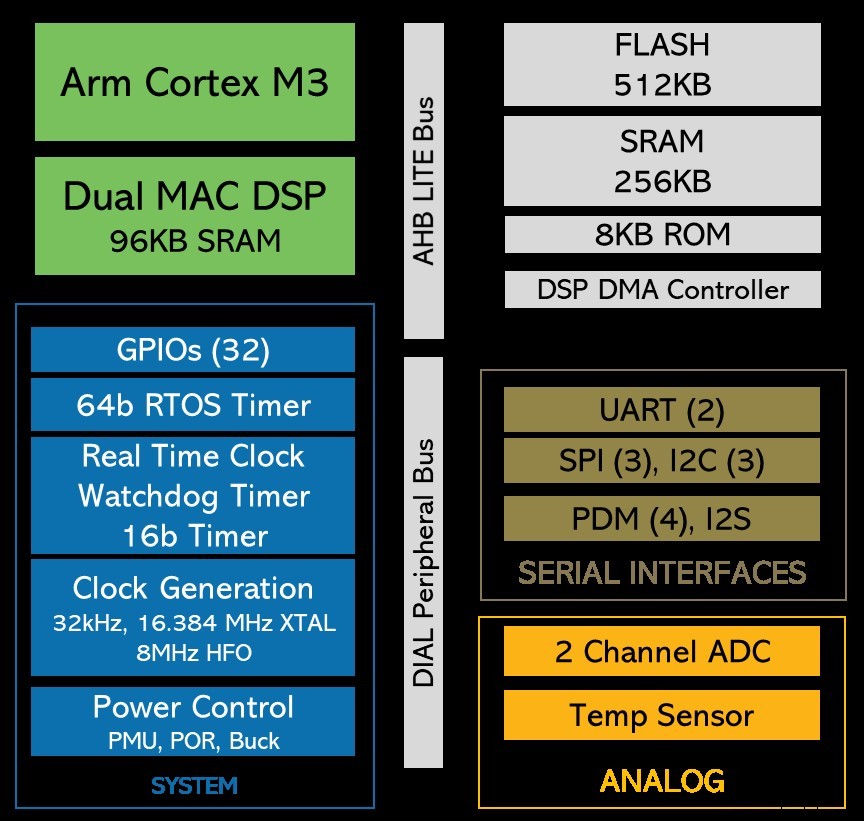

Voltage & Frequency Scaling in Ultra‑Low Power Chips

During the TinyML Summit, Eta Compute unveiled the ECM3532, an ultra‑low‑power device capable of always‑on image processing and sensor fusion with a 100 µW power budget. It incorporates an Arm Cortex‑M3 core and an NXP CoolFlux DSP core; either or both can handle ML workloads. The chip’s key innovation is continuous scaling of clock frequency and voltage for both cores, which eliminates the need for a phase‑locked loop and dramatically cuts power consumption.

Eta Compute’s ECM3532 uses an Arm Cortex‑M3 core plus an NXP CoolFlux DSP core. The machine learning workload can be handled by either, or both (Image: Eta Compute)
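Why scaling voltage and frequency together saves so much power can be seen from the textbook CMOS approximation for dynamic power, P ≈ α·C·V²·f. The sketch below uses that generic model only; it is not Eta Compute's implementation, it ignores leakage, and the function names and numeric values are hypothetical.

```python
def dynamic_power_w(c_eff_farads: float, v_dd: float, freq_hz: float,
                    activity: float = 1.0) -> float:
    """Textbook dynamic power estimate: activity * C * V^2 * f (in watts)."""
    return activity * c_eff_farads * v_dd ** 2 * freq_hz


def scaling_ratio(v_scale: float, f_scale: float) -> float:
    """Relative dynamic power after scaling voltage and frequency."""
    # Power scales with the square of voltage and linearly with frequency.
    return v_scale ** 2 * f_scale


if __name__ == "__main__":
    # Made-up operating points for illustration only.
    full = dynamic_power_w(c_eff_farads=1e-9, v_dd=1.0, freq_hz=1e6)
    slow = dynamic_power_w(c_eff_farads=1e-9, v_dd=0.5, freq_hz=5e5)
    print("full-speed power (W):", full)
    print("half V, half f (W):  ", slow)
    print("relative power:      ", scaling_ratio(0.5, 0.5))
```

In this model, halving the clock alone halves power but leaves energy per operation unchanged. The larger gain comes from the lower supply voltage that a slower clock permits, since energy per operation scales with V².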

With emerging competitors such as RISC‑V, why did Eta Compute select an Arm core for its ML acceleration?

Eta Compute CEO Ted Tewksbury explained to EETimes, “Arm’s ecosystem is simply more mature. It’s easier to bring a product to market with Arm than with RISC‑V at present, though that may evolve. RISC‑V excels in the Chinese market, but we target domestic and European customers where the Arm ecosystem is strongest.”

Tewksbury highlighted that AIoT spans a broad, fragmented market with numerous niche applications generating modest volumes. Collectively, however, the sector could encompass billions of devices. He emphasized that developers must avoid the cost of tailoring solutions for each use case; flexibility and ease of use are therefore critical, which Arm’s ecosystem provides.

Last October, Arm announced it would allow customers to create custom instructions for specialized workloads such as ML. This capability, once applied to future cores, could further reduce power consumption.

While the current M3 core cannot benefit from this feature, Tewksbury is optimistic: “Absolutely, we plan to leverage Arm’s custom instruction set in next‑generation products to push power efficiency even further.”

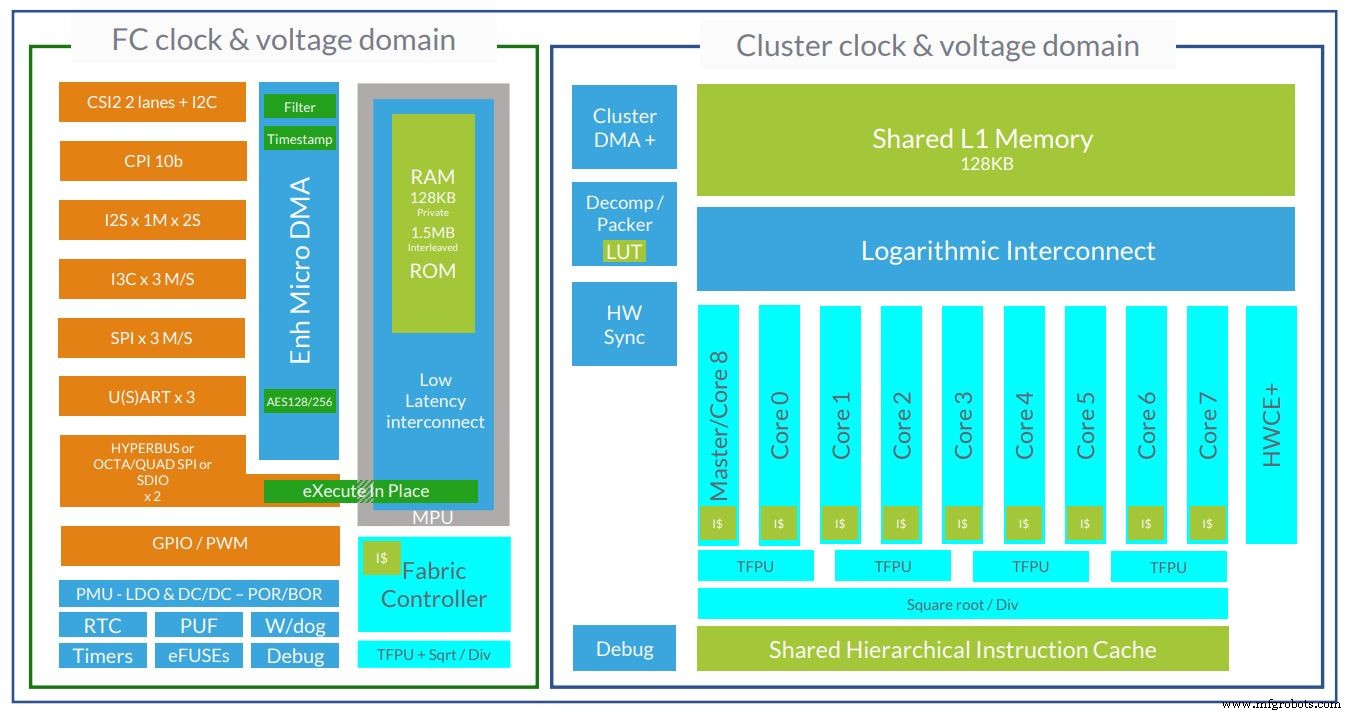

Emerging ISA Options: RISC‑V

RISC‑V’s open‑source ISA removes licensing fees and allows designers to add proprietary extensions. Companies can tailor cores to specific workloads, protecting IP as needed. GreenWaves, a French startup, uses RISC‑V cores in its GAP8 and GAP9 chips, which have 8‑core and 9‑core compute clusters, respectively. In total, the GAP9 architecture now incorporates 10 RISC‑V cores.

The architecture of GreenWaves’ GAP9 ultra‑low power AI chip now uses 10 RISC‑V cores (Image: GreenWaves)

GreenWaves VP Martin Croome told EETimes that RISC‑V’s configurability at the instruction level is a decisive advantage. Custom extensions enable significant power reductions for ML and signal‑processing tasks. For a new company, creating such custom cores would otherwise be prohibitively expensive on other architectures, where licensing or IP costs would balloon.

GreenWaves’ custom extensions alone give its cores a 3.6× improvement in energy efficiency over unmodified RISC‑V cores. Croome also praised RISC‑V’s modern, clean ISA, which reduces implementation complexity and, consequently, power draw. Precise control over each core’s execution is essential for maximizing energy efficiency, and RISC‑V makes that control possible.

Croome acknowledged that Arm’s software toolchain is currently more mature than RISC‑V’s, although the RISC‑V ecosystem is maturing rapidly. If Arm could provide the same level of customization, he said, it would be the natural choice.

In summary, while some see Arm’s dominance as eroding, the company is countering by enabling custom extensions and developing ML‑oriented cores from the outset. Both Arm and non‑Arm solutions are now available for ultra‑low‑power AI, and as TinyML communities continue to shrink model sizes and refine frameworks, the market is poised to flourish.
