Memory Technologies Powering Edge AI: Challenges and Opportunities

Edge AI is reshaping how we think about memory. As AI workloads migrate from the cloud to devices closer to the user, memory systems must deliver large capacity, high bandwidth, low latency, and energy efficiency while staying cost‑effective and reliable.

There is no single “edge AI” specification. The term broadly covers any AI‑enabled electronics outside a central cloud—ranging from enterprise data centers to autonomous vehicles, smart city infrastructure, and IoT endpoints.

Typical edge scenarios include computer vision for self‑driving cars, AI inference in factory gateways that detect product defects, 5G edge boxes on utility poles monitoring traffic, and mobile phones running Snapchat filters or voice assistants.

Memory’s role is uniform across these applications: storing neural‑network weights, model code, input data, and intermediate activations. The challenge lies in balancing capacity, bandwidth, power, and cost while meeting the unique constraints of each deployment.

Edge data centers

Edge data centers serve latency‑critical domains such as medical imaging, high‑frequency finance, and autonomous vehicle control. These sites use the same memory families as traditional servers: high‑performance DRAM, large‑capacity RDIMMs, and non‑volatile options like NVDIMMs.

"Low‑latency DRAM is essential for training and developing AI models," notes Pekon Gupta, solutions architect at Smart Modular Technologies. "Large data sets demand high‑capacity RDIMMs or LRDIMMs, while NVDIMMs accelerate write‑intensive workloads such as caching and checkpointing."

Telecom carriers are following a similar path, adding memory and compute to edge servers. Gupta highlights Intel Optane, a 3D XPoint non‑volatile memory that sits between DRAM and flash in speed and cost, as a compelling choice for AI workloads that benefit from persistent, high‑capacity memory.

"Optane DIMMs and NVDIMMs serve distinct purposes: Optane excels in in‑memory databases and large, persistent AI datasets, while NVDIMMs provide low‑latency tiering and caching," Gupta explains.

Intel’s Kristie Mann reports that Optane is already powering e‑commerce recommendation engines, video personalization, and real‑time financial analytics. A server equipped with dual Intel Xeon Scalable processors and Optane persistent memory can host up to 6 TB of usable memory, offering a cost‑effective alternative to all‑DRAM configurations.
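
The following minimal capacity sketch shows how a two‑socket server could reach the 6 TB figure. It assumes six memory channels per socket, one 512 GB Optane module per channel, and Memory Mode, in which DRAM acts only as a cache so that software sees the Optane capacity. These configuration values are illustrative assumptions, not a specific Intel reference design.

```python
# Hypothetical two-socket configuration; channel count and module size are
# illustrative assumptions, not a quoted Intel reference design.
SOCKETS = 2
CHANNELS_PER_SOCKET = 6          # assumed memory channels per CPU socket
OPTANE_MODULES_PER_CHANNEL = 1   # assumed: one Optane module beside one DRAM DIMM
OPTANE_MODULE_GB = 512           # assumed module size


def usable_memory_tb(sockets, channels, modules_per_channel, module_gb):
    """Optane capacity visible as memory when DRAM serves only as a cache
    (Memory Mode), in binary terabytes (1 TB = 1024 GB here)."""
    total_gb = sockets * channels * modules_per_channel * module_gb
    return total_gb / 1024


capacity_tb = usable_memory_tb(SOCKETS, CHANNELS_PER_SOCKET,
                               OPTANE_MODULES_PER_CHANNEL, OPTANE_MODULE_GB)
print(f"{capacity_tb:.1f} TB")  # 6.0 TB
```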

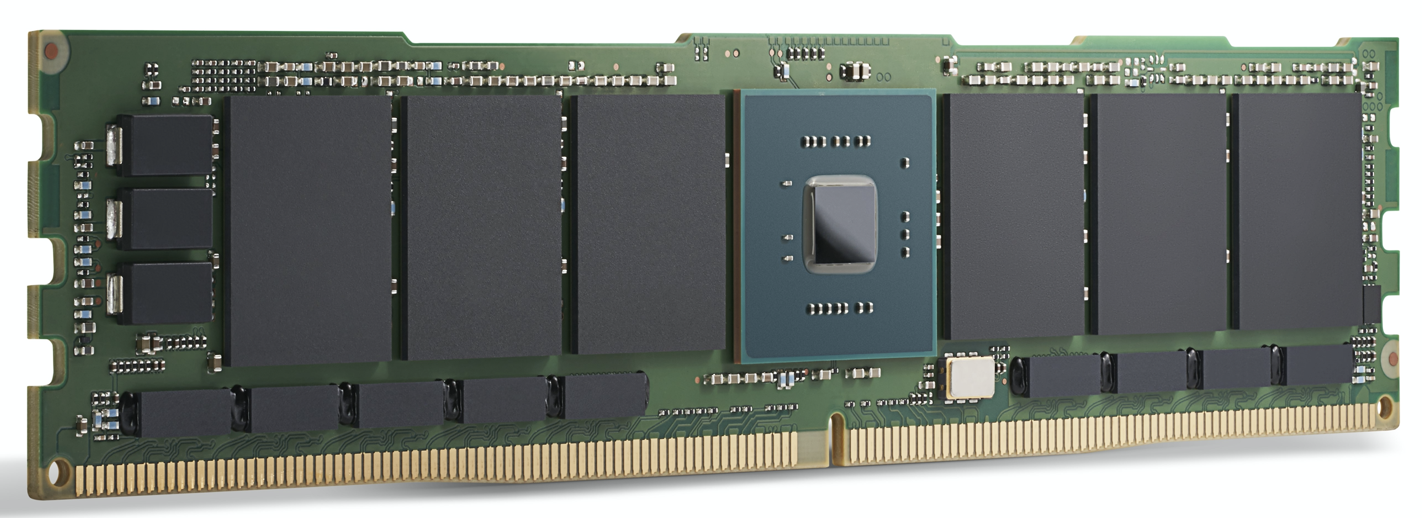

An Intel Optane 200 Series module. Intel says Optane is already used to power AI applications today. (Source: Intel)

GPU acceleration

For high‑performance edge data centers, GPUs drive AI compute. Memory choices here include GDDR, a graphics DRAM designed for high‑bandwidth GPU interfaces, and HBM (high‑bandwidth memory), which stacks multiple DRAM dies and mounts the stack beside the GPU inside the same package.

HBM2E delivers up to 3.6 Gbps per pin across a 1,024‑bit interface, which translates to about 460 GB/s per stack; two stacks approach 1 TB/s. This bandwidth, combined with low energy per transferred bit, has made HBM the memory of choice for Nvidia’s data‑center GPUs.

GDDR6, on the other hand, runs at up to 18 Gbps per pin, or 72 GB/s per x32 device. Four GDDR6 chips yield nearly 300 GB/s, a sweet spot for edge inference workloads. Frank Ferro, senior director of product marketing for IP cores at Rambus, notes that LPDDR is cost‑effective for endpoint inference but cannot match the bandwidth demands of modern AI models, which pushes designers toward GDDR6.
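
The bandwidth figures above come from a simple product: per‑pin data rate times interface width, divided by 8 bits per byte. The sketch below assumes a 1,024‑bit interface per HBM2E stack and an x32 interface per GDDR6 device. It computes theoretical peaks only and ignores refresh, protocol overhead, and access‑pattern effects.

```python
def peak_bandwidth_gbs(gbps_per_pin, bus_width_bits, devices=1):
    """Theoretical peak bandwidth in GB/s: pin rate x bus width / 8, times devices."""
    return gbps_per_pin * bus_width_bits / 8 * devices


# HBM2E: assumed 1,024-bit interface per stack at 3.6 Gbps per pin.
hbm2e_stack = peak_bandwidth_gbs(3.6, 1024)              # ~460.8 GB/s
hbm2e_pair = peak_bandwidth_gbs(3.6, 1024, devices=2)    # ~921.6 GB/s

# GDDR6: assumed x32 interface per device at 18 Gbps per pin.
gddr6_chip = peak_bandwidth_gbs(18, 32)                  # 72.0 GB/s
gddr6_quad = peak_bandwidth_gbs(18, 32, devices=4)       # 288.0 GB/s

for name, bw in [("HBM2E x1", hbm2e_stack), ("HBM2E x2", hbm2e_pair),
                 ("GDDR6 x1", gddr6_chip), ("GDDR6 x4", gddr6_quad)]:
    print(f"{name}: {bw:.1f} GB/s")
```

Wide but slow interfaces (HBM) and narrow but fast ones (GDDR6) can reach similar aggregate numbers. The trade‑off is packaging cost versus pin speed and board complexity.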

Other accelerators, such as the Achronix Speedster7t FPGA, also tap GDDR6 bandwidth. This shows that GDDR, not just HBM, has a role in edge AI.

The far edge

At the farthest edge (mobile phones, wearables, and sensor nodes), AI workloads are predominantly inference. Devices like Syntiant’s voice‑control chips and Gyrfalcon’s camera‑effect accelerators use in‑memory or near‑memory compute. They perform matrix multiplication where the weights are stored rather than shuttling those weights to a separate processor.

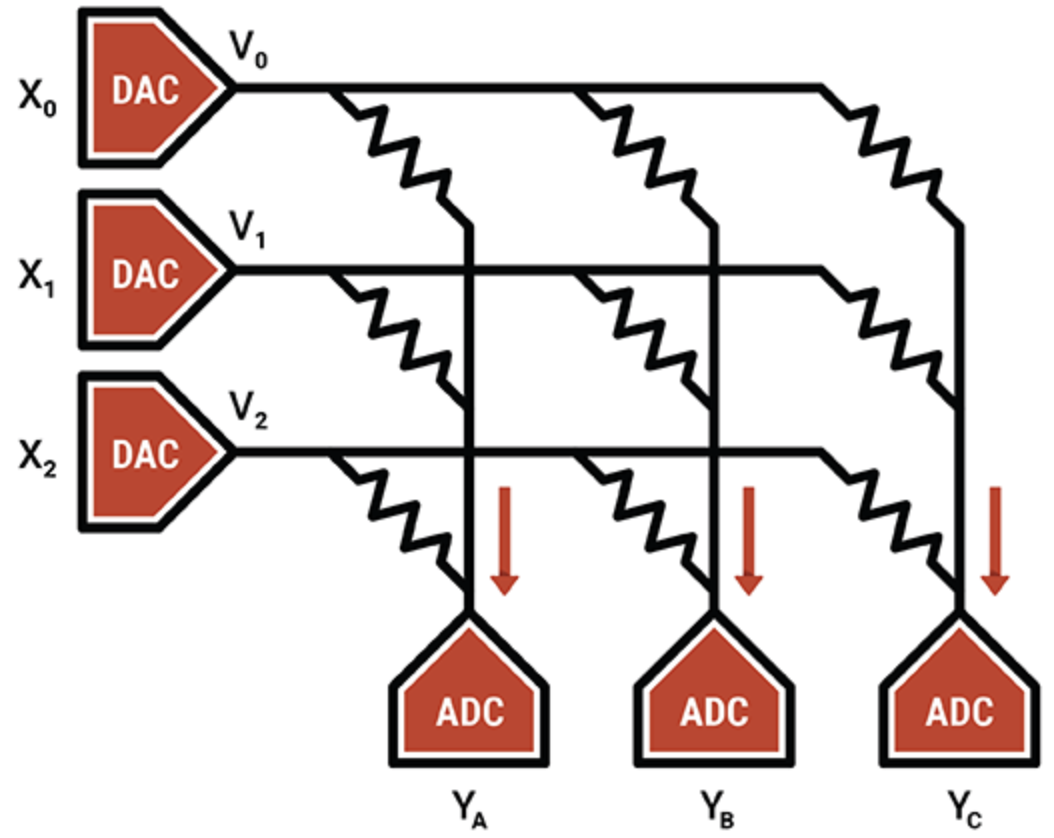

Mythic’s flagship IP leverages flash memory transistors as variable resistors to perform 8‑bit multiply‑accumulate operations, achieving remarkable density and energy efficiency. Their noise‑cancellation calibration enables reliable 8‑bit inference despite analog variability.

Mythic uses an array of Flash memory transistors to make dense multiply‑accumulate engines (Source: Mythic)
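
The analog multiply‑accumulate principle can be modeled in a few lines. This is a generic, idealized crossbar model, not Mythic’s design. Each cell’s conductance holds a weight and each row voltage carries an input. Ohm’s law gives a per‑cell current, and Kirchhoff’s current law sums those currents along each column. The Gaussian mismatch term and the simple 8‑bit ADC are illustrative assumptions. Real arrays also need signed weights (often via paired cells) and the kind of calibration described above.

```python
import random


def crossbar_mac(voltages, conductances, sigma=0.0, rng=None):
    """Idealized analog crossbar: column current I_j = sum_i G[i][j] * V[i].

    Each cell obeys Ohm's law (I = G * V) and each column wire sums its cell
    currents (Kirchhoff's current law). If sigma > 0, every cell's conductance
    is perturbed by multiplicative Gaussian noise, an assumed mismatch model.
    """
    rng = rng if rng is not None else random.Random(0)
    currents = [0.0] * len(conductances[0])
    for v, row in zip(voltages, conductances):
        for j, g in enumerate(row):
            g_eff = g * (1.0 + rng.gauss(0.0, sigma)) if sigma > 0 else g
            currents[j] += g_eff * v  # per-cell current accumulates on column j
    return currents


def adc(current, full_scale, bits=8):
    """Quantize a column current to an unsigned code in [0, 2**bits - 1]."""
    levels = (1 << bits) - 1
    code = round(current / full_scale * levels)
    return max(0, min(levels, code))


# Non-negative conductances (weights) in arbitrary units, one row per input.
weights = [[0.2, 0.9],
           [0.7, 0.1],
           [0.4, 0.5]]
inputs = [1.0, 0.5, 0.25]   # input activations encoded as voltages
full_scale = sum(inputs)    # largest column current if every G were 1.0

ideal = crossbar_mac(inputs, weights)
noisy = crossbar_mac(inputs, weights, sigma=0.05, rng=random.Random(42))
print("ideal codes:", [adc(i, full_scale) for i in ideal])
print("noisy codes:", [adc(i, full_scale) for i in noisy])
```

Comparing the ideal and noisy codes illustrates why calibration matters. Small per‑cell deviations accumulate along a column and can shift the digitized result.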

ASICs tailored for ultra‑low‑power inference combine distributed SRAM, bulk SRAM, and off‑chip DRAM. Geoff Tate of Flex Logix explains that the InferX X1 achieves 7.5 TOPS at 933 MHz with a single x32 LPDDR4 DRAM and 10 MB SRAM, striking a balance between speed, area, and cost.
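
The quoted X1 figures can be sanity‑checked with back‑of‑envelope arithmetic. If each MAC counts as two operations, 7.5 TOPS at 933 MHz implies roughly 4,000 MAC units. Suppose, hypothetically, that the single x32 bus carries an LPDDR4X‑4266 part. Peak DRAM bandwidth is then about 17 GB/s, so running at full speed would require several hundred operations per byte fetched from DRAM. That ratio is why the on‑chip SRAM must supply most of the data reuse. The memory speed grade and the two‑ops‑per‑MAC convention are assumptions, not Flex Logix specifications.

```python
def implied_mac_units(peak_tops, clock_hz, ops_per_mac=2):
    """Solve peak_ops = MACs * ops_per_mac * clock for the MAC count."""
    return peak_tops * 1e12 / (ops_per_mac * clock_hz)


def peak_bandwidth_gbs(gbps_per_pin, bus_width_bits):
    """Theoretical peak DRAM bandwidth in GB/s."""
    return gbps_per_pin * bus_width_bits / 8


def required_ops_per_byte(peak_tops, bandwidth_gbs):
    """Operations each DRAM byte must feed to sustain peak throughput."""
    return peak_tops * 1e12 / (bandwidth_gbs * 1e9)


macs = implied_mac_units(7.5, 933e6)        # quoted figures: 7.5 TOPS at 933 MHz
bw = peak_bandwidth_gbs(4.266, 32)          # hypothetical LPDDR4X-4266 on x32
intensity = required_ops_per_byte(7.5, bw)

print(f"implied MAC units: {macs:.0f}")
print(f"x32 DRAM peak bandwidth: {bw:.1f} GB/s")
print(f"ops per DRAM byte at peak: {intensity:.0f}")
```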

For endpoint inference, LPDDR will likely remain the dominant off‑chip memory. It is inexpensive and power‑efficient, and, as the InferX X1 shows, it can be used through a single narrow x32 interface with a small silicon footprint.

Emerging memories

Beyond established technologies, several non‑volatile memories offer novel capabilities for AI.

MRAM (magneto‑resistive RAM) stores bits in the relative magnetic orientation of two layers in a magnetic tunnel junction. At low write voltages, switching becomes probabilistic. This controlled stochastic behavior can be exploited for binarized neural networks (BNNs), which tolerate high bit‑error rates while maintaining accuracy. Andy Walker of Spin Memory notes that MRAM can dramatically reduce power consumption while preserving performance for edge AI tasks that do not demand extreme precision.
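
A binarized layer replaces multiplications with bitwise logic. When weights and activations are constrained to +1/-1 and packed as bits, a dot product reduces to an XNOR followed by a population count. The sketch below implements that kernel. It injects independent random flips into the stored weight bits as a crude stand‑in for stochastic low‑voltage MRAM writes. The error model and the sign‑agreement metric are assumptions for illustration, not Spin Memory’s method.

```python
import random


def binarize(values):
    """Map real values to +1/-1 (sign binarization used in BNNs)."""
    return [1 if v >= 0 else -1 for v in values]


def to_bits(signs):
    """Pack +1 as bit 1 and -1 as bit 0 into a single integer."""
    word = 0
    for i, s in enumerate(signs):
        if s == 1:
            word |= 1 << i
    return word


def xnor_popcount_dot(a_bits, w_bits, n):
    """Binary dot product: matches - mismatches = 2 * popcount(XNOR) - n."""
    mask = (1 << n) - 1
    matches = bin(~(a_bits ^ w_bits) & mask).count("1")
    return 2 * matches - n


def flip_bits(word, n, p, rng):
    """Flip each of n stored bits independently with probability p
    (assumed stand-in for stochastic low-voltage MRAM writes)."""
    for i in range(n):
        if rng.random() < p:
            word ^= 1 << i
    return word


def sign_agreement(n=256, trials=500, p=0.01, seed=0):
    """Fraction of random binary neurons whose output sign survives weight-bit errors."""
    rng = random.Random(seed)
    agree = 0
    for _ in range(trials):
        a = to_bits(binarize([rng.uniform(-1, 1) for _ in range(n)]))
        w = to_bits(binarize([rng.uniform(-1, 1) for _ in range(n)]))
        clean = xnor_popcount_dot(a, w, n)
        faulty = xnor_popcount_dot(a, flip_bits(w, n, p, rng), n)
        if (clean >= 0) == (faulty >= 0):
            agree += 1
    return agree / trials


for p in (0.0, 0.01, 0.05):
    print(f"bit-error rate {p:.2f}: sign agreement {sign_agreement(p=p):.3f}")
```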

MRAM’s non‑volatility also opens the door to unified memory architectures that replace both embedded Flash and SRAM, cutting die area and static power.

Neuromorphic ReRAM

Resistive RAM (ReRAM) cells can change conductance gradually, which lets them emulate synaptic plasticity and enables neuromorphic systems that learn in situ. Politecnico di Milano’s collaboration with Weebit Nano demonstrated ReRAM’s potential for unsupervised learning, allowing edge devices to adapt to changing environments without retraining from scratch.
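
In‑situ learning in such systems is often described with spike‑timing‑dependent plasticity (STDP). The sketch below is a generic pair‑based STDP rule applied to a device whose conductance is confined to a fixed window. The amplitudes, time constant, and conductance bounds are hypothetical, and this is not the specific scheme used by Politecnico di Milano and Weebit Nano.

```python
import math


def stdp_delta(dt_ms, a_plus=0.01, a_minus=0.012, tau_ms=20.0):
    """Pair-based STDP weight change for dt = t_post - t_pre (milliseconds).

    Pre-before-post (dt > 0) potentiates; post-before-pre (dt < 0) depresses;
    the effect decays exponentially with the spike-time gap.
    """
    if dt_ms > 0:
        return a_plus * math.exp(-dt_ms / tau_ms)
    if dt_ms < 0:
        return -a_minus * math.exp(dt_ms / tau_ms)
    return 0.0


def update_conductance(g, dt_ms, g_min=0.0, g_max=1.0):
    """Apply one STDP update, clipped to the device's conductance window."""
    return min(g_max, max(g_min, g + stdp_delta(dt_ms)))


g = 0.5
for dt in [5.0, 5.0, 12.0, -3.0, 30.0]:   # hypothetical spike-time differences
    g = update_conductance(g, dt)
    print(f"dt={dt:+.0f} ms -> G={g:.4f}")
```

Clipping to a window reflects the fact that a physical cell has minimum and maximum conductance states. Real devices also show nonlinear, state‑dependent update steps, which this sketch ignores.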

In summary, memory technology is evolving rapidly to meet the demands of edge AI. Established memories such as GDDR, HBM, and Optane continue to dominate edge data centers, while LPDDR and on‑chip SRAM hold sway at the far edge. Emerging non‑volatile memories like MRAM and ReRAM promise major gains in energy efficiency along with adaptive, on‑device learning, and they are likely to shape the next generation of AI‑enabled devices.

— This article was originally published on our sister site, EE Times.