Qualcomm Unveils Cloud AI 100: A Power‑Efficient Edge AI Accelerator for 5G and Beyond

Nearly 18 months after its initial unveiling in April 2019, Qualcomm has now confirmed that the Cloud AI 100 silicon is in production and will ship during the first half of 2021. The company also disclosed the card form factors and performance benchmarks that underscore its readiness for edge‑AI workloads.

Qualcomm has long dominated the smartphone market with its Snapdragon line, but it discontinued its earlier server‑grade Centriq line in 2018. The Cloud AI 100 marks the company’s entry into the near‑edge server market, targeting high‑throughput AI inference with exceptional power efficiency.

Qualcomm’s Cloud AI 100 is designed for near‑edge AI workloads such as 5G infrastructure and enterprise data centers.

Form Factors and Performance

Qualcomm will initially offer the Cloud AI 100 in three card formats:

- Dual M.2 Edge (DM.2e) – over 50 TOPS at 15 W

- Dual M.2 (DM.2) – 200 TOPS at 25 W

- PCIe – up to 400 TOPS at 75 W

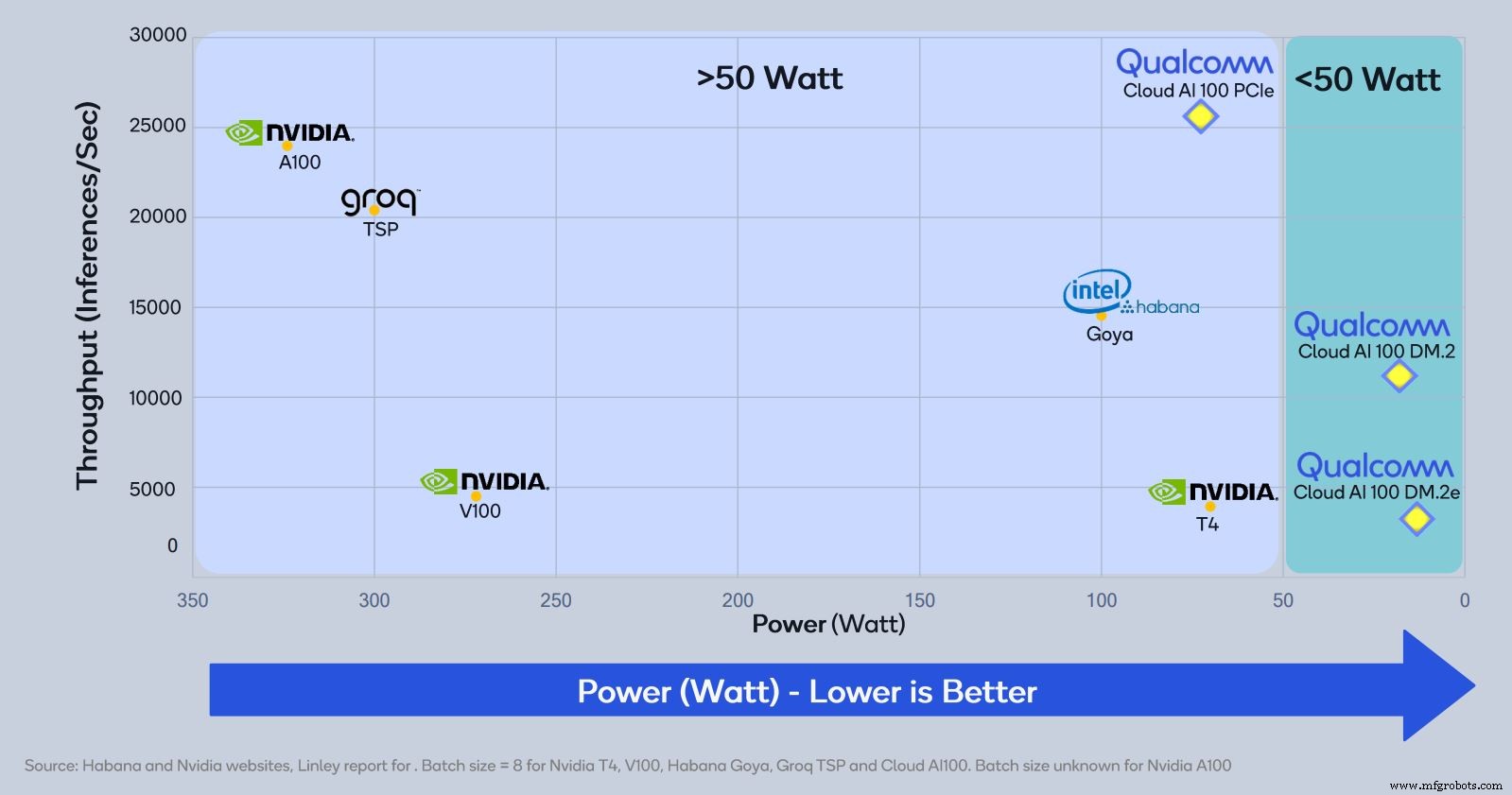

These figures represent the theoretical “raw” TOPS and do not account for real‑world application overhead. Nonetheless, Qualcomm’s own performance curves show the PCIe version outperforming many leading solutions while consuming only a fraction of the power.
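As a rough illustration of the efficiency claim, the sketch below divides each card's quoted peak TOPS by its quoted power. It assumes the wattages are card power envelopes and treats "over 50" and "up to 400" as exact values, so the results are nominal ratios rather than measured efficiency. The names `CARDS`, `tops_per_watt`, and `efficiency_table` are hypothetical and chosen only for this example.

```python
# Card figures as quoted in the article. The DM.2e value is a lower bound
# ("over 50 TOPS") and the PCIe value an upper bound ("up to 400 TOPS").
CARDS = {
    "DM.2e": (50, 15),   # (peak TOPS, watts)
    "DM.2": (200, 25),
    "PCIe": (400, 75),
}


def tops_per_watt(tops, watts):
    """Return peak TOPS divided by card power in watts."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return tops / watts


def efficiency_table(cards=CARDS):
    """Map each card name to its nominal TOPS/W, rounded to two decimals."""
    return {name: round(tops_per_watt(t, w), 2) for name, (t, w) in cards.items()}


if __name__ == "__main__":
    for name, eff in efficiency_table().items():
        print(f"{name}: {eff} TOPS/W")
```

On these nominal numbers the DM.2 card has the highest ratio, at 8 TOPS/W. Because the inputs are raw peak figures, the ratios say nothing about delivered throughput on a real model.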

ResNet‑50 inference throughput versus power consumption. Qualcomm’s cards lead in power efficiency. All results use a batch size of 8, except those for the Nvidia A100.

John Kehrli, Qualcomm’s Senior Director of Product Management, noted, “We bring over a decade of AI R&D from our mobile platforms to this new core. While the architecture differs from Snapdragon, the expertise and performance per watt are evident.”

Chip Architecture

The Cloud AI 100 is an inference accelerator built on a 7 nm FinFET process. It houses up to 16 AI cores that support INT8, INT16, FP16, and FP32 precision. The die contains 144 MB of on‑chip SRAM (128 MB shared, plus 1 MB for each of the 16 cores) and interfaces with up to 32 GB of LPDDR4x DRAM (4×64 LPDDR4x at 2.1 GHz), providing the high memory bandwidth that AI workloads need.
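The memory figures can be checked with a little arithmetic. The sketch below reproduces the 144 MB SRAM total and estimates a theoretical peak DRAM bandwidth. The bandwidth estimate rests on two assumptions that the article does not state: that "4×64" means four 64‑bit channels, and that 2.1 GHz is the memory clock with two transfers per cycle (double data rate). The function names are hypothetical.

```python
AI_CORES = 16
SHARED_SRAM_MB = 128
PER_CORE_SRAM_MB = 1


def total_sram_mb(cores=AI_CORES, shared_mb=SHARED_SRAM_MB,
                  per_core_mb=PER_CORE_SRAM_MB):
    """Shared SRAM plus the per-core SRAM summed over all cores, in MB."""
    return shared_mb + cores * per_core_mb


def peak_dram_bandwidth_gb_s(channels=4, bits_per_channel=64,
                             clock_ghz=2.1, transfers_per_clock=2):
    """Theoretical peak bandwidth in GB/s.

    Assumes `channels` x `bits_per_channel` is the total bus width and that
    data moves `transfers_per_clock` times per clock cycle (an assumption,
    not a figure from the article).
    """
    bytes_per_transfer = channels * bits_per_channel / 8
    return bytes_per_transfer * clock_ghz * transfers_per_clock


if __name__ == "__main__":
    print(f"On-chip SRAM: {total_sram_mb()} MB")
    print(f"Peak DRAM bandwidth: {peak_dram_bandwidth_gb_s():.1f} GB/s")
```

Under these assumptions the estimate comes to roughly 134 GB/s. That is a theoretical ceiling derived from the quoted interface parameters, not a number Qualcomm gives in this article.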

Target Applications

The accelerator is tailored for a wide range of edge use cases, including computer vision, speech recognition, autonomous driving, language translation, and recommendation engines. Qualcomm is positioning the Cloud AI 100 for four primary markets:

- Data centers at the edge of the cloud

- Advanced Driver Assistance Systems (ADAS)

- 5G edge boxes (on‑premise, standalone devices for smart city and enterprise use)

- 5G infrastructure (AI‑powered beam‑forming and base‑station optimization)

In the autonomous driving space, a derivative of the Cloud AI 100 powers Qualcomm’s Snapdragon Ride platform, leveraging the same architecture and software stack.

Reference Design & Development Kit

Qualcomm introduced a new development kit featuring a reference design for a 5G edge box powered by the Cloud AI 100. The kit pairs the accelerator with a Snapdragon 865 host processor, offering full video pipelines that support up to 24 streams of full‑HD video decode. It also includes a pre‑certified Snapdragon X55 5G modem.

Kehrli emphasized, “This kit enables customers to launch demo applications immediately. It comes pre‑compiled with ResNet‑50, providing a quick start for edge AI solutions.”

Deployment Timeline

Qualcomm expects initial commercial deployments to focus on edge scenarios (smart cities, retail analytics, and manufacturing), where certification cycles are shorter. Sampling of the Cloud AI 100 is already underway with select customers, and the development kit will sample next month for a limited audience.

Note: This article was originally published on EE Times.
