Edge, Endpoint, and Cloud AI: A Unified Future for Intelligent IoT

The COVID-19 pandemic accelerated the demand for contact-free interactions, pushing artificial intelligence closer to the devices that users touch. AI-driven voice controls, touch-less payments, and autonomous video systems are no longer optional; they are becoming essential for safe, seamless experiences.

Embedded Artificial Intelligence of Things (AIoT) is at the heart of this shift. By embedding AI directly into sensors and microcontrollers, we unlock hands‑free, real‑time decision making that keeps users safe and improves productivity across retail, manufacturing, and remote work.

AIoT Is Moving Out of the Cloud

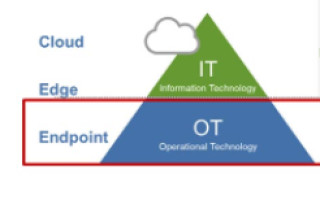

Historically, AI’s heavy computational demands were met in the cloud, but that trend is now reversing. Deep-learning models are still trained in data centers, but inference is migrating to the edge and device endpoints. This shift enables near-instant analytics and removes the network round trip of the device-to-cloud-to-device loop.
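To make the latency argument concrete, here is a minimal sketch of a per-request latency budget. The `LatencyBudget` class and every millisecond figure in it are illustrative assumptions, not measurements from any real device or network; the point is only that on-device inference can come out ahead even when the local accelerator is slower, because it skips both network hops.

```python
from dataclasses import dataclass


@dataclass
class LatencyBudget:
    """Per-request latency components in milliseconds (illustrative values only)."""
    uplink_ms: float      # device -> cloud network time
    inference_ms: float   # model execution time
    downlink_ms: float    # cloud -> device network time

    def total_ms(self) -> float:
        """Sum of all components for one request."""
        return self.uplink_ms + self.inference_ms + self.downlink_ms


# Hypothetical figures, not measurements: a fast data-center accelerator
# behind a network round trip versus a slower on-device NPU with no network hop.
cloud = LatencyBudget(uplink_ms=40.0, inference_ms=5.0, downlink_ms=40.0)
endpoint = LatencyBudget(uplink_ms=0.0, inference_ms=20.0, downlink_ms=0.0)

print(f"cloud round trip:   {cloud.total_ms():.1f} ms")
print(f"endpoint inference: {endpoint.total_ms():.1f} ms")
```

With these assumed numbers the endpoint path is faster despite a fourfold slower inference step. Real results depend on the network, the model, and the silicon involved.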

While many endpoints still rely on CPU‑based inference, the industry is transitioning to chips that integrate neural‑processing units (NPUs) and dedicated AI accelerators. These on‑chip solutions provide the bandwidth, low power consumption, and real‑time responsiveness required for next‑generation connected systems.

Figure 1 (Source: Renesas Electronics)

An AIoT Future at Home and in the Workplace

Advances in AI accelerators, predictive control, and multimodal perception are unlocking new user interfaces for both industrial and consumer devices. Voice activation is becoming the default interface for always‑on systems, offering accessibility benefits and enabling touch‑less control in kitchens, offices, and factories.

Multimodal architectures—combining voice, vision, and gesture—enhance safety and usability. For example, a voice command can trigger visual recognition to identify objects or people, providing hands‑free surveillance or real‑time video conferencing that automatically switches focus between speakers.
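The voice-triggers-vision pattern described above can be sketched as a simple gated pipeline. This is an assumption-laden illustration, not a description of any vendor's implementation: `WAKE_PHRASES`, `detect_command`, `run_pipeline`, and `fake_recognizer` are hypothetical names, and the recognizer is a stub standing in for a real vision model.

```python
from typing import Callable, Optional

# Hypothetical command phrases; a real system would use a keyword-spotting
# model instead of exact string matching.
WAKE_PHRASES = {"who is at the door", "what is this"}


def detect_command(transcript: str) -> Optional[str]:
    """Return the normalized command if the transcript matches a known phrase."""
    normalized = transcript.strip().lower()
    return normalized if normalized in WAKE_PHRASES else None


def run_pipeline(transcript: str, frame: bytes,
                 recognize: Callable[[bytes], str]) -> Optional[str]:
    """Run the (expensive) vision step only when a voice command is detected."""
    command = detect_command(transcript)
    if command is None:
        return None  # stay idle; the camera frame is never processed
    return f"{command}: {recognize(frame)}"


def fake_recognizer(frame: bytes) -> str:
    """Stand-in for a vision model; labels the frame by its size only."""
    return "person" if len(frame) > 4 else "unknown"


print(run_pipeline("Who is at the door", b"\x00" * 16, fake_recognizer))
print(run_pipeline("background chatter", b"\x00" * 16, fake_recognizer))
```

Gating the vision stage behind the cheaper voice stage is one plausible design choice for power-constrained endpoints, since the heavier model runs only on demand.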

In manufacturing, collaborative robots (CoBots) rely on multimodal AI to interpret human gestures and voice commands, allowing them to operate safely alongside workers. These systems combine human-like senses of vision, hearing, and touch to navigate complex, dynamic environments.

What’s on the Horizon?

IDC Research projects 55 billion connected devices worldwide by 2025, generating 73 zettabytes of data. Edge AI chips are expected to outpace cloud AI chips as deep‑learning inference continues to shift toward the edge and device endpoints. This integration of AI at every layer—cloud, edge, and endpoint—will underpin smart applications that communicate and interact in a more natural, human‑like way.

Dr. Sailesh Chittipeddi, Executive Vice President and General Manager of the IoT and Infrastructure Business Unit at Renesas, leads the company’s AIoT initiatives.
