Accelerate Alexa Integration with Developer Kits and Reference Designs

Design engineers can now use turnkey hardware modules and software services to embed Amazon’s Alexa Voice Service (AVS) into a wide range of smart devices, from portable speakers and appliances to automotive infotainment systems and wearables.

Since Amazon unveiled the original Alexa device in 2014, AWS and leading chip manufacturers have released reference designs that streamline the integration of Amazon’s voice recognition technology and AVS interface. These pre‑built, pre‑tested solutions dramatically reduce development time for both hardware and software teams.

For companies with limited engineering bandwidth, reference designs provide an accessible path to deploying natural‑language understanding and voice interfaces. They take on much of the complexity of high‑quality audio processing and shorten time‑to‑market.

Figure 1. Reference designs for AVS‑based voice applications are built to seamlessly integrate Amazon’s voice recognition technology into voice‑controlled devices. Source: STMicroelectronics

Wake‑Word Detection

A reliable wake‑word engine (WWE) that listens for “Alexa” is the foundation of any voice‑enabled product. Once the on‑device engine triggers, cloud‑based wake‑word verification checks the captured audio again, so that the system responds only when the user actually intends to engage Alexa. The hardware in reference designs supports this detection by capturing audio reliably in noisy, real‑world environments and from moderate distances.
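The two‑stage gating described above can be sketched in a few lines of Python. This is a minimal illustration, not the AVS API: the names `LOCAL_THRESHOLD`, `handle_utterance`, and `cloud_verify` are hypothetical, and the local score is assumed to be a confidence value between 0 and 1 produced by whatever wake‑word engine the design uses.

```python
from typing import Callable, Sequence

# Hypothetical confidence threshold for the on-device wake-word engine.
LOCAL_THRESHOLD = 0.6


def handle_utterance(
    local_score: float,
    audio: Sequence[float],
    cloud_verify: Callable[[Sequence[float]], bool],
    threshold: float = LOCAL_THRESHOLD,
) -> str:
    """Gate an utterance through local detection, then cloud verification.

    `cloud_verify` stands in for the cloud-side check; here it is any
    callable that takes the captured audio and returns True or False.
    """
    # Stage 1: the local engine must be confident enough before any
    # audio leaves the device.
    if local_score < threshold:
        return "ignored"
    # Stage 2: the cloud re-checks the same audio to filter false triggers.
    if not cloud_verify(audio):
        return "rejected_by_cloud"
    return "accepted"
```

Keeping the first stage on the device means audio is streamed only after a local trigger, while the second stage filters out false triggers that the smaller local model lets through.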

Take the Cirrus Logic Voice Capture Development Kit for Amazon AVS as an example. It delivers proven acoustic tuning, enabling accurate “Alexa” detection in both quiet rooms and bustling environments, even when the user is several meters away. Noise suppression and echo cancellation algorithms improve reliability and user experience.
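To give a feel for what echo cancellation involves, here is a minimal sketch of a textbook normalized least‑mean‑squares (NLMS) adaptive filter. It is not Cirrus Logic’s SoundClear implementation, whose internals the article does not describe; the function name, tap count, and step size are illustrative assumptions. The filter learns how the loudspeaker signal (`ref`) leaks into the microphone (`mic`) and subtracts its estimate of that echo.

```python
def nlms_echo_cancel(mic, ref, taps=8, mu=0.5, eps=1e-8):
    """Subtract an adaptive estimate of the echo of `ref` from `mic`.

    mic: microphone samples (echo plus any near-end speech)
    ref: loudspeaker samples being played back
    Returns (residual, weights), where residual is the echo-reduced signal.
    """
    weights = [0.0] * taps
    residual = []
    for n in range(len(mic)):
        # Most recent `taps` reference samples, newest first.
        x = [ref[n - k] if n - k >= 0 else 0.0 for k in range(taps)]
        # Current echo estimate and the error after subtracting it.
        echo_estimate = sum(w * xk for w, xk in zip(weights, x))
        err = mic[n] - echo_estimate
        # Normalized update: step size scaled by the reference energy.
        energy = sum(xk * xk for xk in x) + eps
        weights = [w + mu * err * xk / energy for w, xk in zip(weights, x)]
        residual.append(err)
    return residual, weights
```

In a production front end the filter is far longer (room echoes last for thousands of samples) and adaptation is normally paused while the user is speaking; this sketch omits both.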

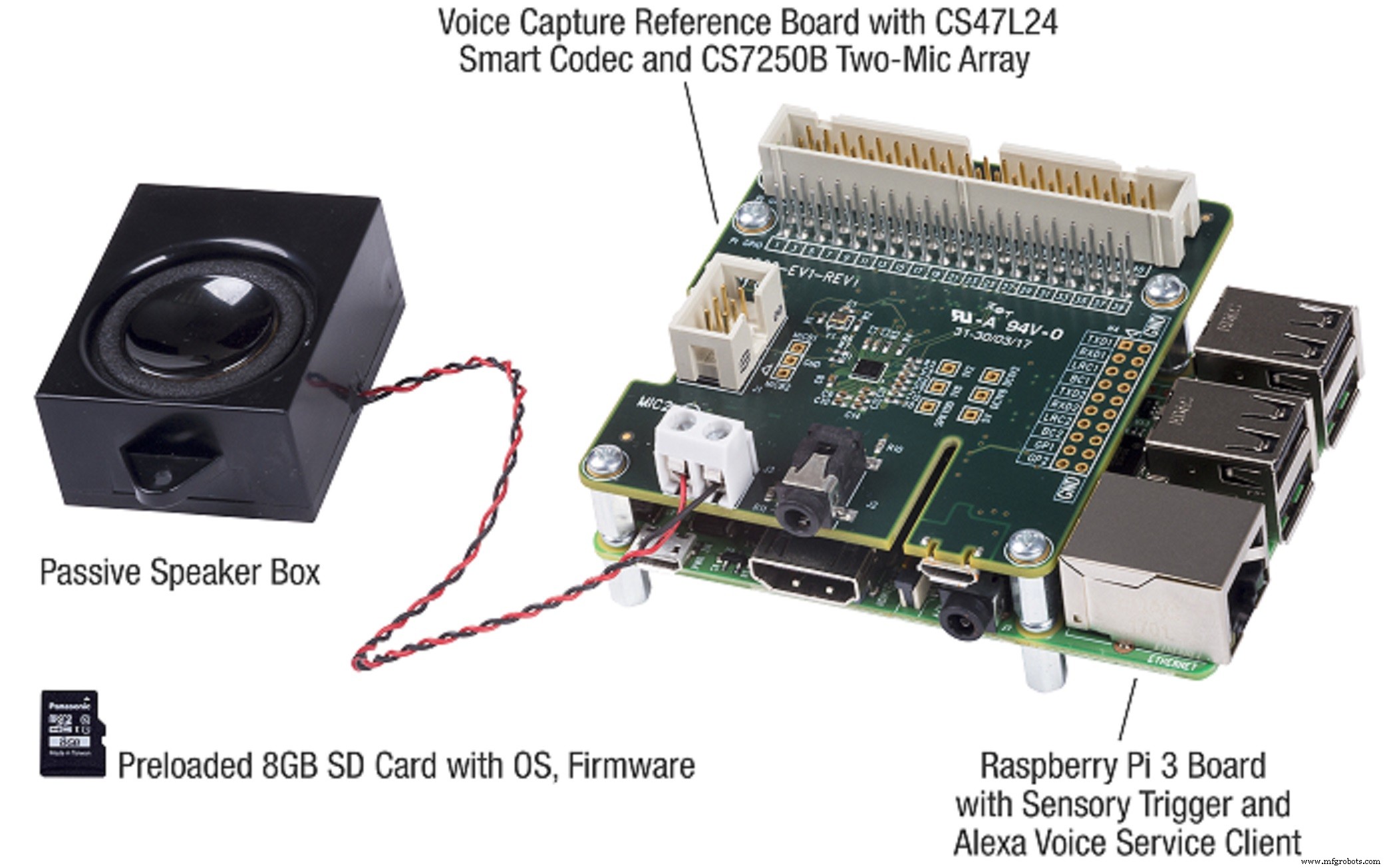

Figure 2. The far‑field AVS reference design targets smart speakers and other voice‑controlled smart‑home devices. Source: Cirrus Logic

The kit comprises a voice‑capture board featuring a two‑mic array, a Raspberry Pi 3 (RPi3), a speaker, and a preloaded microSD card with firmware for instant productivity. A control console provides a user‑friendly interface for acoustic tuning and diagnostics.

Key hardware components include Cirrus Logic’s CS47L24 smart codec, CS7250B digital MEMS microphones, and SoundClear algorithms for voice control, noise suppression, and echo cancellation. The smart codec integrates hi‑fi DACs, a stereo headphone amp, and a mono speaker amp, minimizing board real estate and BOM costs.

The MEMS microphones boast an ultra‑low noise floor and a 103 dB dynamic range, ensuring precise voice capture under challenging noise conditions. SoundClear algorithms actively block interfering noise, enabling reliable wake‑word detection and audio capture even during loud music playback.
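To put the 103 dB figure in perspective, the short calculation below converts a dynamic range in decibels to an amplitude ratio and to an approximate equivalent bit depth, using the standard 20·log10 relationship for amplitudes. The helper names are illustrative, and the bit‑depth figure is only a rule of thumb (about 6.02 dB per bit), not a specification of the microphone.

```python
import math


def db_to_amplitude_ratio(db: float) -> float:
    """Convert a level difference in dB to a linear amplitude ratio."""
    return 10 ** (db / 20)


def db_to_equivalent_bits(db: float) -> float:
    """Approximate bit depth whose ideal range matches `db` (about 6.02 dB/bit)."""
    return db / (20 * math.log10(2))


ratio = db_to_amplitude_ratio(103)
bits = db_to_equivalent_bits(103)
print(f"amplitude ratio ~ {ratio:,.0f}:1, about {bits:.1f} bits")
```

The result is an amplitude ratio of roughly 141,000:1, or about 17 bits, between the loudest signal the microphone can capture and its noise floor.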

Audio Front‑End (AFE)

The AFE is a critical component of any AVS‑based design. It captures the user’s voice, amplifies it, reduces background noise, and forwards the signal to the cloud. Building a robust AFE from scratch can be daunting, which is why development kits are invaluable.
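The chain of capture, amplification, noise reduction, and forwarding can be pictured as a sequence of small processing stages. The sketch below is a deliberately simplified illustration: the stage names, the fixed gain, the hard noise gate, and the frame size are all assumptions, and a real AFE uses far more sophisticated noise reduction than a gate.

```python
def apply_gain(samples, gain):
    """Amplify samples, clipping to the normalized range [-1.0, 1.0]."""
    return [max(-1.0, min(1.0, s * gain)) for s in samples]


def noise_gate(samples, floor):
    """Zero out samples whose magnitude falls below the noise floor."""
    return [s if abs(s) >= floor else 0.0 for s in samples]


def frame(samples, size):
    """Split samples into fixed-size frames ready to forward upstream."""
    return [samples[i:i + size] for i in range(0, len(samples), size)]


def front_end(samples, gain=2.0, floor=0.05, frame_size=4):
    """Run the stages in order: amplify, suppress low-level noise, frame."""
    return frame(noise_gate(apply_gain(samples, gain), floor), frame_size)
```

For example, `front_end([0.01, 0.2, -0.6, 0.0, 0.3])` drops the low‑level first sample, clips the third, and returns two frames for forwarding.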

DSP Concepts’ TalkTo audio front‑end, integrated with AVS‑qualified voice processing, exemplifies this approach. It ships as part of STMicroelectronics’ AWS IoT Core reference design based on STM32 MCUs. Features include advanced noise reduction, echo cancellation, and beamforming for far‑field audio detection, all fine‑tuned via the free Audio Weaver tool.

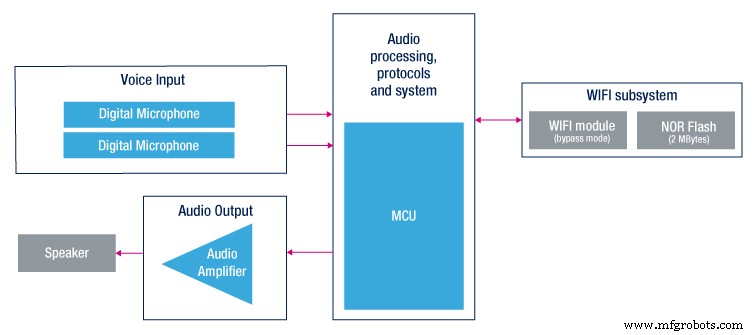

Figure 3. A single‑chip solution comprising audio front‑end processing, local wake‑word detection, communication interfaces, and memory reduces BOM costs and simplifies layout. Source: STMicroelectronics

The ST board measures 36×65 mm and incorporates a Wi‑Fi module alongside an STM32H743 MCU. This MCU integrates audio front‑end processing, local wake‑word detection, communication interfaces, and memory—all on one chip. The reference design also includes a separate audio daughterboard for rapid prototyping.

The daughterboard houses the FDA903D audio codec, user LEDs, buttons, and two MP23DB01HP MEMS microphones spaced 36 mm apart, ideal for size‑constrained designs. A privacy mode can disable the microphones, with a red LED indicating that Alexa cannot hear commands.
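The 36 mm spacing sets an upper bound on the arrival‑time difference that a two‑microphone beamformer has to resolve. The sketch below works this out under stated assumptions that are not from the reference design: a far‑field (plane‑wave) source, a speed of sound of 343 m/s, and a 16 kHz sample rate. The function names are illustrative.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # assumed, dry air at about 20 degrees C
SAMPLE_RATE_HZ = 16_000     # assumed capture rate, not stated in the source


def inter_mic_delay(spacing_m, angle_deg, c=SPEED_OF_SOUND_M_S):
    """Arrival-time difference (s) between two mics for a far-field source.

    angle_deg is measured from broadside: 0 means the source is directly
    in front of the pair, 90 means it lies along the line joining the mics.
    """
    return spacing_m * math.sin(math.radians(angle_deg)) / c


def delay_in_samples(delay_s, rate_hz=SAMPLE_RATE_HZ):
    """Express a delay in (fractional) samples at the given sample rate."""
    return delay_s * rate_hz


worst_case = inter_mic_delay(0.036, 90)
print(f"{worst_case * 1e6:.0f} us, {delay_in_samples(worst_case):.2f} samples")
```

Under these assumptions the worst‑case delay is about 105 microseconds, or roughly 1.7 samples, which is why beamformers on arrays this small typically need fractional‑sample delay handling.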

Far‑Field Voice Recognition

Other chipmakers contribute their own reference platforms. NXP says its 7‑microphone array design, coupled with advanced beamforming and audio‑processing algorithms, can recognize user requests from across the room, even when loud music is playing. By integrating Amazon’s far‑field voice recognition technology with NXP’s i.MX application processors, the platform simplifies the creation of sophisticated voice‑controlled devices.

These voice‑enabled designs are reshaping how users interact with everyday appliances—from toasters and cookers to thermostats and blinds. Reference boards and capture kits offer the fastest route to market, delivering highly accurate wake‑word triggering and command interpretation in noisy environments.

We are at the dawn of the voice‑enabled device revolution. The diversity of applications means that pre‑designed, pre‑tested reference boards and kits will play a pivotal role in accelerating product development and reducing design complexity.

>> This article was originally published on our sister site, EDN.
