BrainChip Launches Akida Neuromorphic Development Kits for Edge AI

BrainChip, a neuromorphic computing IP vendor, introduced two new development kits featuring its Akida neuromorphic SoC at the recent Linley Fall Processor Conference. The kits come in an x86 Shuttle PC format and an Arm‑based Raspberry Pi design, giving developers ready‑to‑use platforms for building and testing spiking neural network (SNN) applications. Akida silicon is also available for integration into custom designs.

Akida’s neuromorphic architecture delivers ultra‑low‑power AI by mimicking the brain’s event‑driven processing. Unlike conventional deep‑learning models, SNNs transmit information through discrete spikes that encode both spatial patterns and temporal sequences. This event‑driven representation, referred to here as the event domain, suits edge devices that need real‑time, power‑conscious inference from sensor streams.

In addition to native SNN workloads, BrainChip’s neural processing units (NPUs) can execute convolutional neural networks (CNNs) after they are converted to the event domain. The conversion, performed by the MetaTF software flow, preserves CNN feature extraction while enabling on‑chip learning through spike‑timing‑dependent plasticity (STDP), which eliminates the need for cloud‑based retraining.

BrainChip’s development boards are available for x86 Shuttle PC or Raspberry Pi. (Source: BrainChip)

"Akida is ready for tomorrow’s neuromorphic technology, but it already solves today’s edge AI challenges," said Anil Mankar, BrainChip co‑founder and chief development officer, to EE Times. "Our runtime software removes the learning curve around SNNs and the event domain, allowing developers familiar with TensorFlow or Keras to port models and evaluate power and accuracy directly on our hardware."

Mankar explained that CNNs excel at extracting features from large datasets. By converting CNN layers to event‑based operations—except for the final classification layer—Akida retains this capability while adding spike‑timing learning. Native SNNs can use one‑bit precision, whereas converted CNNs typically employ 1‑, 2‑ or 4‑bit spikes. BrainChip’s quantization tool helps designers choose the appropriate bit‑width per layer. For example, a MobileNet V1 model quantized to 4 bits achieved 93.1% accuracy on a 10‑class classification task.
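To make the bit‑width trade‑off concrete, here is a minimal sketch of uniform activation quantization in plain Python. It is not BrainChip’s quantization tool; the function names, the sample activations and the max‑based scaling are all assumptions chosen for illustration. It shows how the same non‑negative (post‑ReLU) activations map onto 1‑, 2‑ and 4‑bit levels and how the reconstruction error shrinks as bits are added.

```python
def quantize_activations(activations, bits):
    """Map non-negative activations onto integer levels 0 .. 2**bits - 1.

    Illustrative only: scales by the largest activation in the list.
    Returns the integer codes and the scale needed to reconstruct values.
    """
    if bits < 1:
        raise ValueError("bits must be at least 1")
    levels = 2 ** bits - 1
    peak = max(activations)
    if peak <= 0:
        return [0] * len(activations), 0.0
    scale = peak / levels
    # Clamp so rounding noise or negative inputs never leave the valid range.
    codes = [min(levels, max(0, round(a / scale))) for a in activations]
    return codes, scale


def mean_abs_error(activations, codes, scale):
    """Average absolute difference between original and dequantized values."""
    total = sum(abs(a - c * scale) for a, c in zip(activations, codes))
    return total / len(activations)


# Hypothetical post-ReLU activations; zeros stay zero at every bit-width.
sample = [0.0, 0.1, 0.0, 0.45, 0.9, 0.0, 0.3, 1.2]

for bits in (1, 2, 4):
    codes, scale = quantize_activations(sample, bits)
    print(bits, codes, round(mean_abs_error(sample, codes, scale), 4))
```

A per‑layer choice of bit‑width, as the article describes, would amount to calling a routine like this with a different `bits` value for each layer and checking the effect on accuracy.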

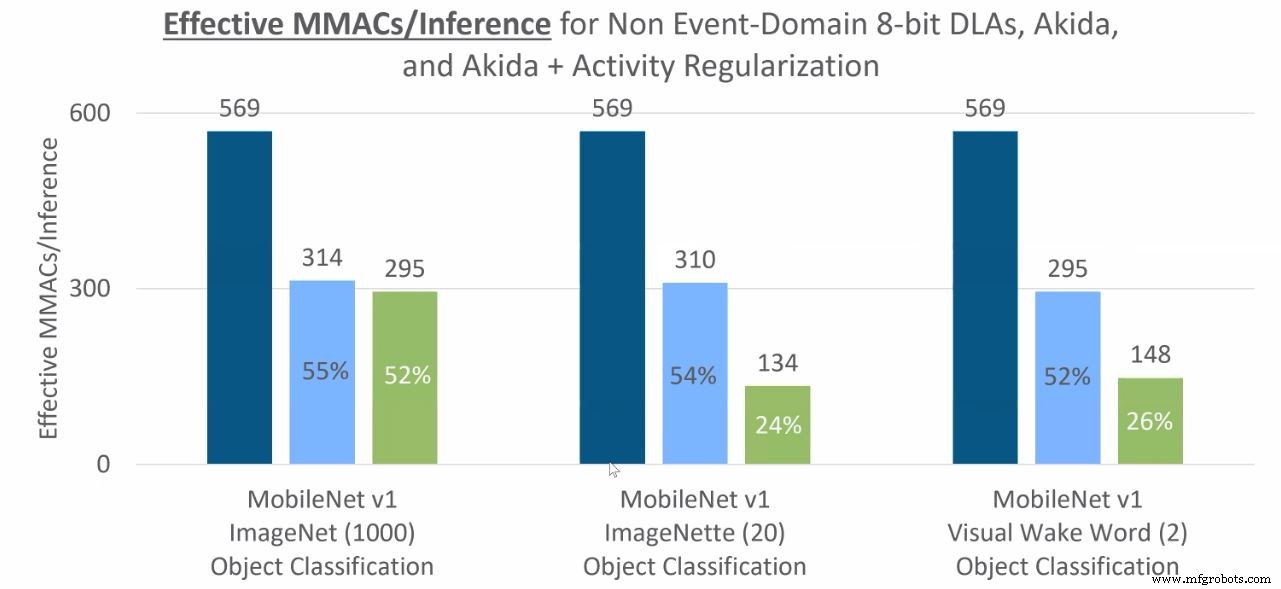

Converting to the event domain also yields significant power savings due to sparsity. In the event domain, only non‑zero activation values generate spikes, and the NPU processes only those events. For a typical CNN, roughly half of the activations are zero after ReLU, so the event‑based implementation reduces the number of MAC operations dramatically, lowering power consumption.
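The sparsity argument can be sketched in a few lines of Python. This is a toy fully connected layer, not Akida’s implementation; the weights, inputs and function names are hypothetical. The conventional version multiplies every input by every weight, while the event‑driven version only does work for non‑zero activations, yet both produce the same outputs.

```python
def relu(x):
    """Rectified linear unit: negative values become zero."""
    return x if x > 0 else 0.0


def dense_forward(inputs, weights):
    """Conventional layer: one MAC per (input, output) pair, zeros included."""
    n_out = len(weights[0])
    outputs = [0.0] * n_out
    macs = 0
    for i, x in enumerate(inputs):
        for j in range(n_out):
            outputs[j] += x * weights[i][j]
            macs += 1
    return outputs, macs


def event_forward(inputs, weights):
    """Event-driven layer: only non-zero inputs generate events and MACs."""
    n_out = len(weights[0])
    outputs = [0.0] * n_out
    macs = 0
    events = [(i, x) for i, x in enumerate(inputs) if x != 0]
    for i, x in events:
        for j in range(n_out):
            outputs[j] += x * weights[i][j]
            macs += 1
    return outputs, macs


# Hypothetical pre-activations; ReLU zeroes the negative ones.
pre_activations = [-0.5, 0.8, -0.1, 0.0, 1.5, -2.0, 0.3, -0.7]
activations = [relu(v) for v in pre_activations]

# Hypothetical 8x4 weight matrix with small integer-valued entries.
weights = [[float((i + 2 * j) % 5 - 2) for j in range(4)] for i in range(8)]

dense_out, dense_macs = dense_forward(activations, weights)
event_out, event_macs = event_forward(activations, weights)
print(dense_macs, event_macs, dense_out == event_out)
```

In this toy case only three of the eight activations are non‑zero, so the event‑driven path performs 12 MACs instead of 32. The saving scales with the fraction of zero activations, which is why further activity regularization reduces the count again.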

Functions such as convolution, point‑wise convolution, depth‑wise convolution, max pooling and global average pooling can all be translated to the event domain. BrainChip’s figures for an object classification task show fewer MAC operations in the event domain than in a conventional CNN, with a further reduction when activity regularization is applied.

MAC operations required for object classification inference (dark blue is CNN in the non‑event domain, light blue is event domain/Akida, green is event domain with further activity regularization). (Source: BrainChip)

In a real‑world benchmark, a keyword‑spotting model running on the Akida board after 4‑bit quantization consumed just 37 µJ per inference (equivalent to 27,336 inferences per second per watt). The model achieved 91.3% accuracy while operating at a modest 5 MHz clock.
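The two efficiency figures are related by a simple reciprocal: one watt is one joule per second, so inferences per second per watt is the inverse of joules per inference. The short sketch below (function names are illustrative) checks the quoted numbers against each other.

```python
def inferences_per_second_per_watt(energy_per_inference_joules):
    """One watt is one joule per second, so the rate is 1 / energy."""
    return 1.0 / energy_per_inference_joules


def energy_per_inference_microjoules(inferences_per_s_per_w):
    """Inverse conversion, reported in microjoules."""
    return 1e6 / inferences_per_s_per_w


# Starting from the rounded 37 uJ figure gives about 27,027 inferences/s/W.
print(inferences_per_second_per_watt(37e-6))

# Starting from 27,336 inferences/s/W gives about 36.6 uJ, which rounds to 37.
print(energy_per_inference_microjoules(27336))
```

The small gap between 27,027 and 27,336 is consistent with the energy figure having been rounded up to 37 µJ.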

Sensor Agnostic

BrainChip’s NPU IP and Akida chip are network‑agnostic and sensor‑agnostic. Whether the input is image, audio, olfactory, gustatory, or tactile data, the same hardware can run both CNNs (via conversion) and native SNNs.

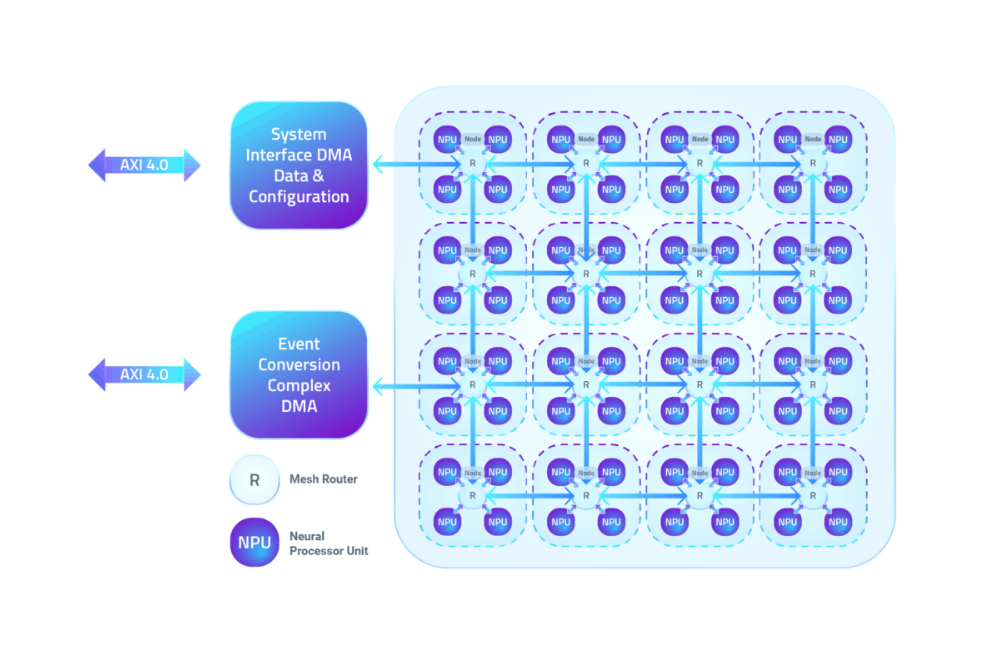

Each node comprises four NPUs, and each NPU has 100 kB of local SRAM for parameters, activations and event buffers. Nodes are connected by a mesh network, so a network that spans multiple nodes passes events directly between layers without CPU intervention, although certain non‑CNN workloads still require CPU support. The IP can be configured with up to 20 nodes, and larger networks can run in multiple passes on smaller configurations.

Nodes of four BrainChip NPUs are connected by a mesh network. (Source: BrainChip)

A demo video showcased Akida in a vehicle cabin: one chip simultaneously detected the driver, recognized the driver’s face, and identified their voice. Keyword spotting required only 600 µW, facial recognition 22 mW, and visual wake‑word inference 6‑8 mW—demonstrating the chip’s suitability for automotive edge AI.

Rob Telson, BrainChip’s vice president of worldwide sales, noted that Akida’s 28‑nm TSMC process provides a strong baseline, and IP customers can move to finer nodes for even greater power efficiency. He also highlighted on‑chip learning for facial recognition: with sufficient neurons in the final layer, a system can grow from recognizing 10 faces to over 50 with one‑shot learning, eliminating cloud dependency.

Early Access Customers

BrainChip employs 55 staff across its headquarters in Aliso Viejo, CA, and design offices in Toulouse, France; Hyderabad, India; and Perth, Australia. The company holds 14 patents, trades on the Australian stock exchange, and is listed on the U.S. OTC market.

Early‑access customers include NASA as well as firms in the automotive, military, aerospace, medical (e.g., olfactory COVID‑19 detection), and consumer electronics sectors. Renesas has licensed a 2‑node Akida NPU IP for integration into future microcontroller units aimed at sensor data analysis in IoT deployments.

Akida IP and silicon are available now.

>> This article was originally published on our sister site, EE Times.
