Designing Low‑Latency Acoustic Systems: Why Strategic Planning Matters

Low‑latency, real‑time acoustic processing is essential across embedded applications such as voice preprocessing, speech recognition, and active noise cancellation (ANC). As performance demands grow, developers must adopt a strategic approach that balances speed, determinism, and resource constraints. Relying on a high‑performance SoC to handle every task can seem convenient, but neglecting latency and real‑time guarantees often leads to audio dropouts and missed deadlines. This article outlines key considerations when choosing between an SoC and a dedicated audio DSP, helping designers avoid costly surprises.

Low‑latency acoustic systems span many domains. In automotive, for instance, they enable personal audio zones, road‑noise cancellation, and in‑car communication—all of which rely on sub‑millisecond response times.

Electrification has amplified ANC’s importance: without engine noise to mask it, the noise generated where the vehicle meets the road becomes far more noticeable. Reducing this noise improves ride comfort and reduces driver fatigue. Implementing low‑latency ANC on an SoC poses challenges in latency, scalability, upgradeability, algorithm development, hardware acceleration, and customer support. We examine each factor below.

Latency

Latency is critical in real‑time acoustic processing. If a processor cannot keep pace with data movement and computation, audio dropouts become inevitable.

SoCs typically feature small on‑chip SRAM and rely heavily on cache for local memory access. This introduces nondeterministic code and data availability, increasing processing latency. For applications like ANC, even modest jitter can be a deal‑breaker. Moreover, SoCs run general‑purpose operating systems that manage heavy multitasking loads, further amplifying nondeterminism and making it hard to support complex acoustic algorithms in a multitasking environment.

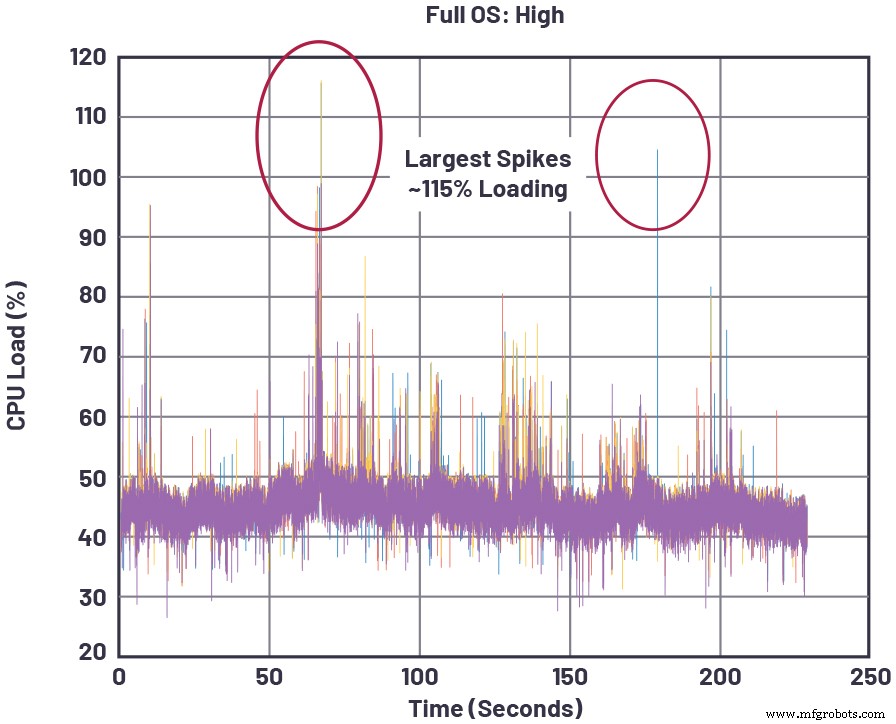

Figure 1 illustrates a representative SoC under real‑time audio load. CPU utilisation spikes whenever higher‑priority tasks (media rendering, web browsing, or app execution) are serviced. Whenever instantaneous utilisation exceeds 100%, the SoC can no longer keep up in real time, and audio dropouts result.


Figure 1: Instantaneous CPU loads for a representative SoC running memory‑intensive audio processing in addition to other tasks [1]. (Source: Analog Devices)
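The relationship between instantaneous load and dropouts can be sketched in a few lines. This is a minimal illustration, not a model of any particular SoC: the function names, the 48‑sample block size, and the per‑block processing times are all hypothetical values chosen for the example.

```python
def block_period_ms(block_size: int, sample_rate_hz: float) -> float:
    """Time available to process one block before the next one arrives."""
    return 1000.0 * block_size / sample_rate_hz


def instantaneous_load(processing_ms: float, block_size: int,
                       sample_rate_hz: float) -> float:
    """Fraction of the block period consumed (1.0 corresponds to 100% load)."""
    return processing_ms / block_period_ms(block_size, sample_rate_hz)


def count_dropouts(processing_times_ms, block_size: int,
                   sample_rate_hz: float) -> int:
    """Count blocks whose processing overran the block period."""
    return sum(
        1 for t in processing_times_ms
        if instantaneous_load(t, block_size, sample_rate_hz) > 1.0
    )


if __name__ == "__main__":
    # Hypothetical per-block processing times in milliseconds.
    times = [0.4, 0.9, 1.3, 0.5]
    print(block_period_ms(48, 48_000))        # period of a 48-sample block at 48 kHz
    print(count_dropouts(times, 48, 48_000))  # blocks that missed their deadline
```

Average load is not what matters here: a single block that overruns its period is enough to cause an audible dropout, even if the mean utilisation is well below 100%.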

Audio DSPs, by contrast, are architected for low latency throughout the signal path, from sampled audio input to the composite speaker output (in ANC, the audio plus the anti‑noise signal). They feature ample L1 instruction and data SRAM for most algorithms, and on‑chip L2 memory to buffer intermediate data. Running a real‑time operating system (RTOS) helps ensure that incoming samples are processed and delivered before the next batch arrives, preventing buffer overflows.
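To see why block size dominates the latency budget, consider a rough estimate of input‑to‑output delay. The sketch below assumes a simple double‑buffered scheme (one block period to fill the input buffer, one block period allowed for processing) plus fixed converter delays; real signal paths differ, and the function name and parameters are hypothetical.

```python
def io_latency_ms(block_size: int, sample_rate_hz: float,
                  adc_delay_samples: int = 0,
                  dac_delay_samples: int = 0) -> float:
    """Simplified worst-case input-to-output latency in milliseconds.

    Assumption: double-buffered block processing, so a sample waits up to
    one block period for its block to fill and one more block period while
    that block is processed. Converter delays are added as fixed sample counts.
    """
    total_samples = adc_delay_samples + 2 * block_size + dac_delay_samples
    return 1000.0 * total_samples / sample_rate_hz


if __name__ == "__main__":
    for n in (1, 8, 32, 256):
        print(n, round(io_latency_ms(n, 48_000), 3))
```

Under these assumptions, shrinking the block reduces latency but leaves less time per block, which is why deterministic memory access and scheduling matter more as block sizes fall.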

Boot‑time latency—time to first audible output—is also critical in automotive, where warnings must be delivered within strict windows. SoCs often have lengthy boot sequences that bring up a full OS, making it hard to meet these deadlines. A standalone DSP with its own RTOS can be optimised for rapid boot, comfortably satisfying time‑to‑audio requirements.

Scalability

SoCs that manage large automotive systems struggle to scale acoustic performance from low‑end to high‑end configurations. Each additional subsystem—remote tuner, infotainment cluster, etc.—creates resource contention for memory bandwidth, CPU cycles, and bus arbitration, inflating real‑time latency.

The acoustic subsystem itself also scales: increasing microphone/speaker counts in ANC or adding 3D audio features demands more processing power. A dedicated audio DSP serves as a coprocessor, freeing the SoC from real‑time acoustic constraints and allowing modular, cost‑effective scaling across product tiers.
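A rough operation count shows how quickly ANC processing grows with channel count. The article does not name a specific algorithm; the sketch below assumes a time‑domain multichannel filtered‑x LMS (FxLMS) structure purely for illustration, and the function name, tap counts, and channel counts are hypothetical.

```python
def fxlms_macs_per_sample(refs: int, speakers: int, mics: int,
                          control_taps: int, secondary_taps: int) -> int:
    """Approximate multiply-accumulates per sample for multichannel FxLMS.

    Assumed structure (not taken from the article):
      - one control filter per (reference, speaker) pair
      - one filtered reference per (reference, speaker, mic) triple
      - one coefficient update term per (reference, speaker, mic) triple
    """
    output = refs * speakers * control_taps
    filtered_reference = refs * speakers * mics * secondary_taps
    update = refs * speakers * mics * control_taps
    return output + filtered_reference + update


if __name__ == "__main__":
    small = fxlms_macs_per_sample(1, 1, 1, 128, 64)
    large = fxlms_macs_per_sample(4, 4, 4, 128, 64)
    print(small, large, large / small)
```

In this model, going from one channel of each kind to four of each multiplies the per‑sample workload by roughly 45, which is the kind of growth that makes a fixed SoC audio budget hard to stretch across product tiers.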

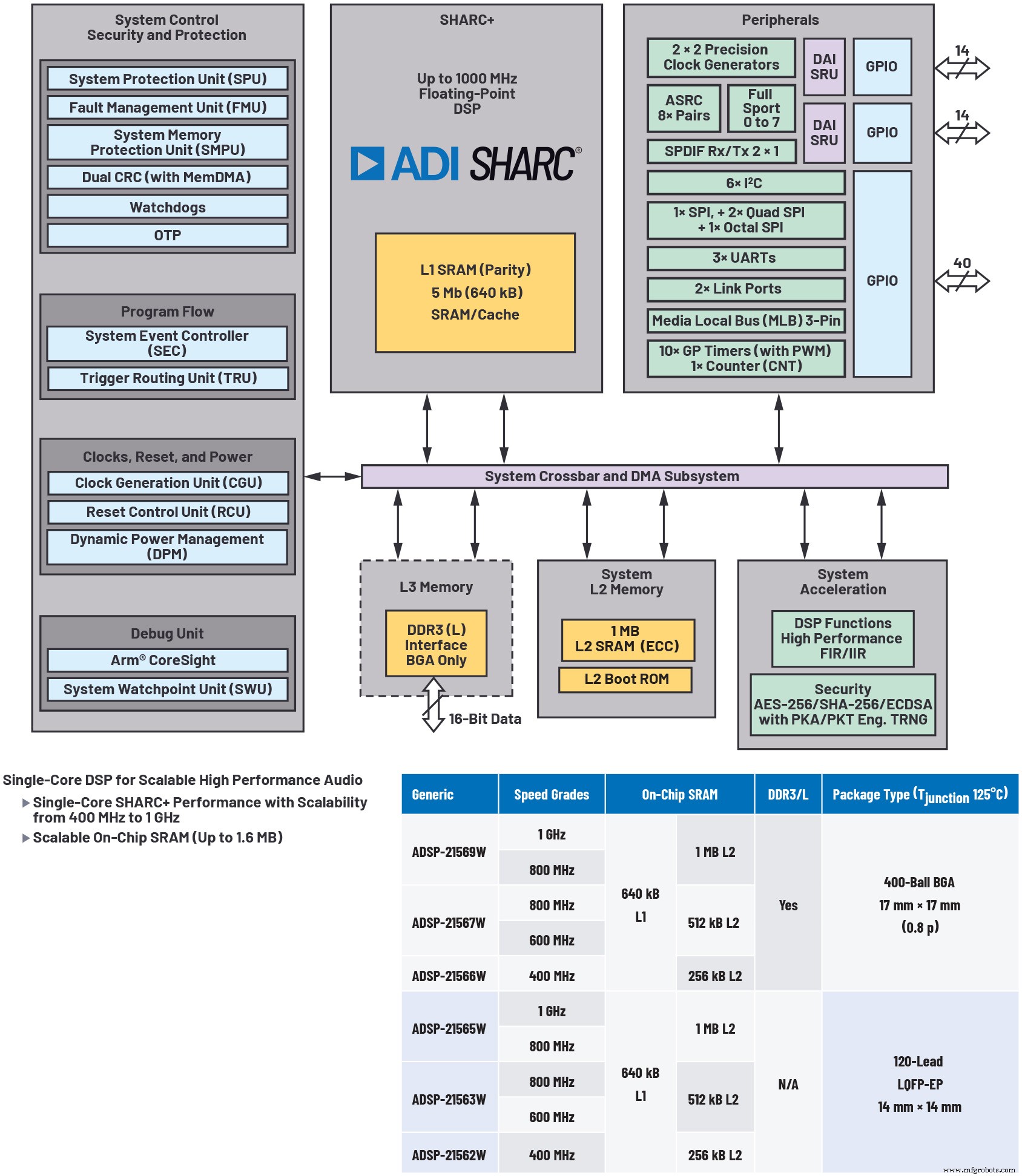

Figure 2 demonstrates how a separate audio processor (e.g., the ADSP‑2156x DSP) resolves scalability issues, enabling modular design and optimal cost‑performance trade‑offs.


Figure 2: Illustration of a highly scalable audio architecture. Using a separate audio processor, such as the ADSP‑2156x DSP, resolves scalability problems, enables modular design, and optimises cost. (Source: Analog Devices)

Upgradeability

Over‑the‑air firmware updates are now common in vehicles, requiring robust upgrade paths for critical patches and new features. In SoCs, adding features increases competition for CPU and memory resources, often unpredictably impacting real‑time audio performance. New functionality must be cross‑tested across all operating modes, exponentially raising verification effort. Upgradeability therefore depends heavily on available SoC MIPS and the roadmap of other subsystems.

Dedicated audio DSPs decouple acoustic performance from other SoC domains, simplifying upgrade cycles and reducing cross‑dependency risks.

Algorithm Development and Performance

Audio DSPs are purpose‑built for real‑time acoustic algorithm development. Many DSP families provide graphical development environments that let engineers with minimal DSP experience design complex audio pipelines, cutting development time without compromising quality.

ADI’s SigmaStudio, for example, offers an intuitive GUI with a library of signal‑processing blocks, enabling rapid prototyping of sophisticated audio flows (see Figure 3). It also supports graphical A2B configuration for audio transport, accelerating system integration.


Figure 3: Graphical audio development environments like ADI’s SigmaStudio provide access to a wide variety of signal‑processing algorithms integrated into an intuitive GUI, simplifying the creation of complex audio signal flows. (Source: Analog Devices)

Audio‑Friendly Hardware Features

Beyond the core architecture, audio DSPs include dedicated multichannel accelerators for FFTs, FIR/IIR filtering, and asynchronous sample‑rate conversion (ASRC). These offload intensive operations from the core, boosting effective performance. They also enable a flexible, user‑friendly programming model thanks to optimised data‑flow management.
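To give a sense of the work such accelerators take off the core, the following sketch implements a direct‑form FIR filter in software and estimates its multiply‑accumulate (MAC) rate. It is a plain reference implementation for illustration only, not an accelerator API; the function names and the example tap, channel, and sample‑rate figures are hypothetical.

```python
def fir_filter(samples, coeffs):
    """Direct-form FIR: each output is a weighted sum of the newest inputs."""
    history = [0.0] * len(coeffs)  # delay line, newest sample first
    out = []
    for x in samples:
        history = [x] + history[:-1]
        out.append(sum(c * h for c, h in zip(coeffs, history)))
    return out


def fir_macs_per_second(taps: int, channels: int, sample_rate_hz: int) -> int:
    """One MAC per tap, per channel, per sample."""
    return taps * channels * sample_rate_hz


if __name__ == "__main__":
    # An impulse input reproduces the coefficients at the output.
    print(fir_filter([1.0, 0.0, 0.0, 0.0], [0.5, 0.25, 0.25]))
    # Hypothetical load: 512 taps on 16 channels at 48 kHz.
    print(fir_macs_per_second(512, 16, 48_000))
```

Every one of those MACs would otherwise compete with the rest of the audio pipeline for core cycles, which is the motivation for moving long filters onto dedicated hardware.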

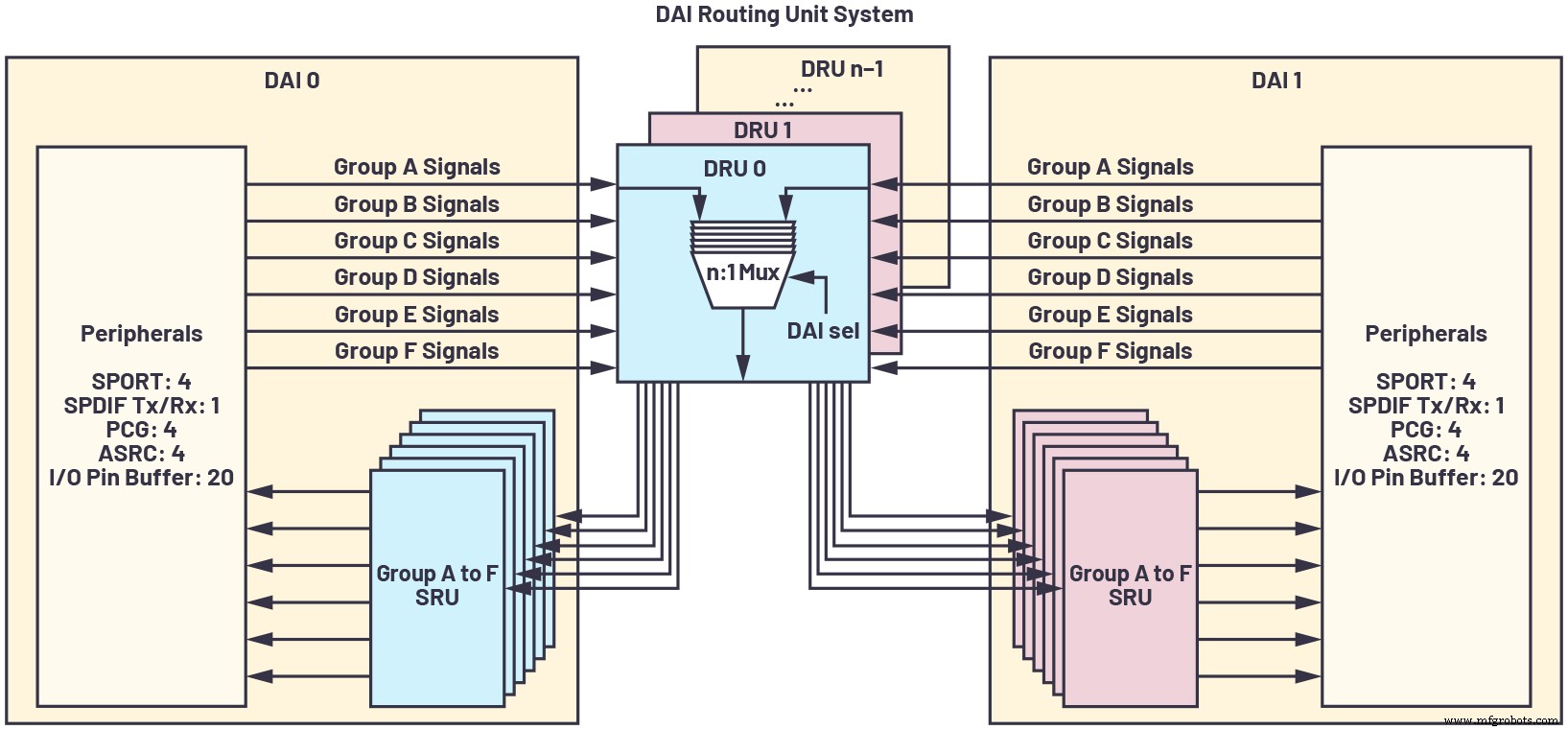

With growing channel counts, filter streams, and sampling rates, a maximally configurable pin interface is essential. It allows in‑line sample‑rate conversion, precision clocking, and synchronous high‑speed serial ports, routing data efficiently and avoiding added latency. ADI’s SHARC family demonstrates this capability with its Digital Audio Interconnect (DAI) (see Figure 4).


Figure 4: A digital audio interconnect (DAI) is a maximally configurable pin interface that allows in‑line sample‑rate conversion, precision clocking, and synchronous high‑speed serial ports to route data efficiently and avoid added latency or external interface logic. (Source: Analog Devices)

Customer Support

Vendor support is a critical factor in embedded development. While SoC vendors may tout on‑chip DSPs, their acoustic expertise is often limited. Support tends to be fragmented: developers may face a complex ecosystem, limited libraries, and high non‑recurring engineering (NRE) costs to port custom algorithms. Success is not guaranteed, especially if the vendor lacks mature low‑latency frameworks.

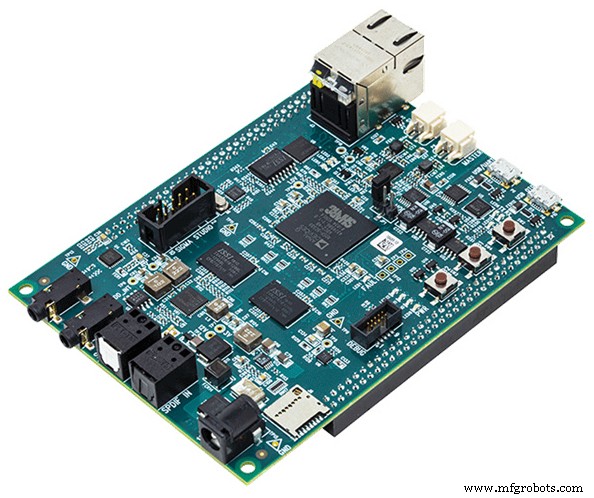

In contrast, dedicated audio DSPs come with a mature ecosystem: optimised algorithm libraries, device drivers, an RTOS, and user‑friendly tools. Audio‑focused reference platforms, such as ADI’s SHARC audio module platform (see Figure 5), accelerate time‑to‑market and are rarely available for SoCs.


Figure 5: DSPs commonly provide audio‑focused development platforms like the SHARC audio module (SAM). (Source: Analog Devices)

Designing real‑time acoustic systems requires deliberate, strategic allocation of resources. Relying on leftover SoC headroom is rarely sufficient. A standalone audio DSP, optimised for low latency, yields greater robustness, shorter development cycles, and scalable performance for future product tiers.

Reference

[1] Paul Beckmann. “Multicore SOC Processors: Performance, Analysis, and Optimization.” 2017 AES International Conference on Automotive Audio, August 2017.

David Katz has 30 years of experience in analog, digital, and embedded systems design. He is director of systems architecture for automotive infotainment at Analog Devices, Inc. He has published internationally close to 100 embedded processing articles, and he has presented several conference papers in the field. Previously, he worked at Motorola, Inc., as a senior design engineer in cable modem and factory automation groups. David holds both a B.S. and an M.Eng. in electrical engineering from Cornell University. He can be reached at david.katz@analog.com.

