Mipsology’s Zebra Turns GPU AI Code Into FPGA Accelerators With One Command

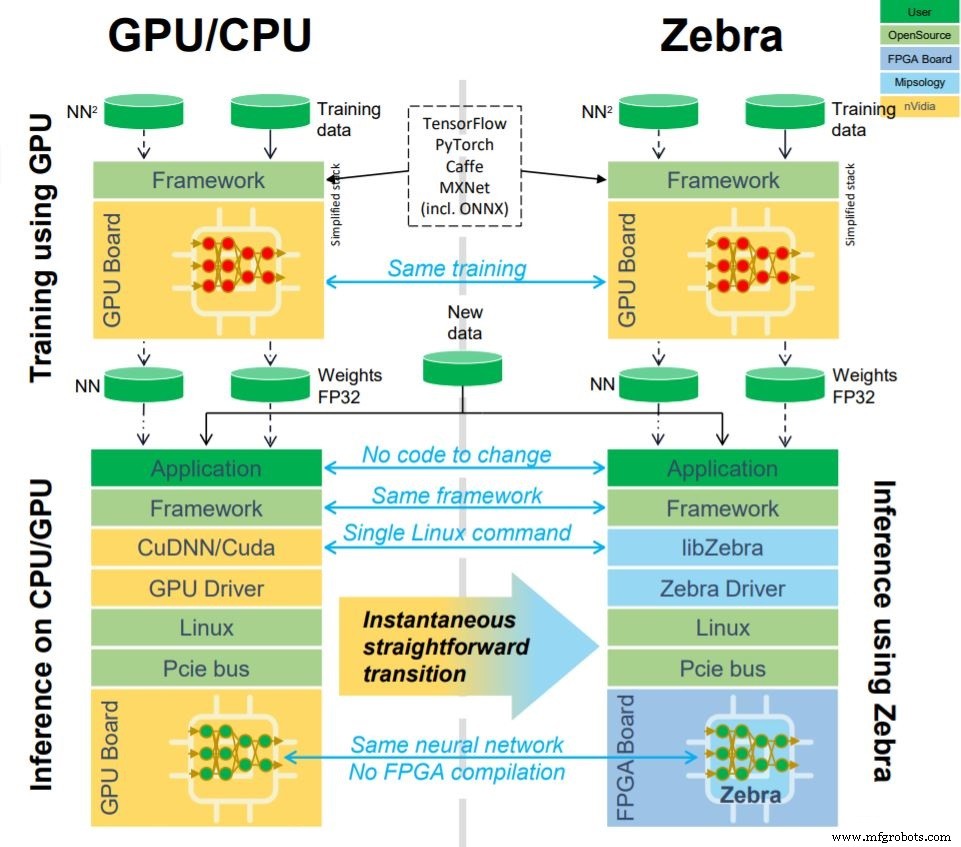

Mipsology, an AI software startup, has partnered with Xilinx to make FPGAs easier to use for AI workloads. Its “zero-effort” software, Zebra, converts existing GPU code so it can run on Mipsology’s custom AI compute engine on an FPGA, with no code changes or retraining required.

Today Xilinx shipped Zebra with the latest build of its Alveo U50 data‑center accelerator card, adding seamless GPU‑to‑FPGA conversion to the device out of the box. Zebra already accelerates inference on other Xilinx boards, including the Alveo U200 and Alveo U250.

The latest build of Xilinx’s Alveo U50 data‑center accelerator card now ships with Mipsology’s Zebra software, enabling GPU AI code to run on FPGAs (Image: Xilinx)

"The acceleration that Zebra delivers on our Alveo cards outshines CPUs and GPUs," said Ramine Roane, Xilinx’s vice president of marketing. "With Zebra, the Alveo U50 satisfies the flexibility, performance, high‑throughput, and low‑latency demands of AI workloads, making it a compelling choice for any deployment."

Plug‑and‑Play FPGAs

Historically, FPGAs have been perceived as complex to program, especially for non-specialists. Mipsology’s vision is to make FPGAs a plug-and-play platform that is as simple to use as a CPU or GPU. The goal is to let users switch from other accelerators to an FPGA with a single command.

“Mipsology is essentially the CUDA of FPGAs,” said CEO Ludovic Larzul in an interview with EE Times. “We provide software that abstracts the FPGA so AI users can focus on the model, not the hardware.”

Critically, this transition is achievable by non‑experts. No deep AI expertise or FPGA knowledge is needed, and the model does not have to be retrained.

“Ease of use is paramount. Many AI projects are managed by teams that don’t have an in‑house AI or FPGA specialist,” Larzul explained. “They might develop neural networks elsewhere, and once a model is trained, they want to deploy it without redesigning it.”

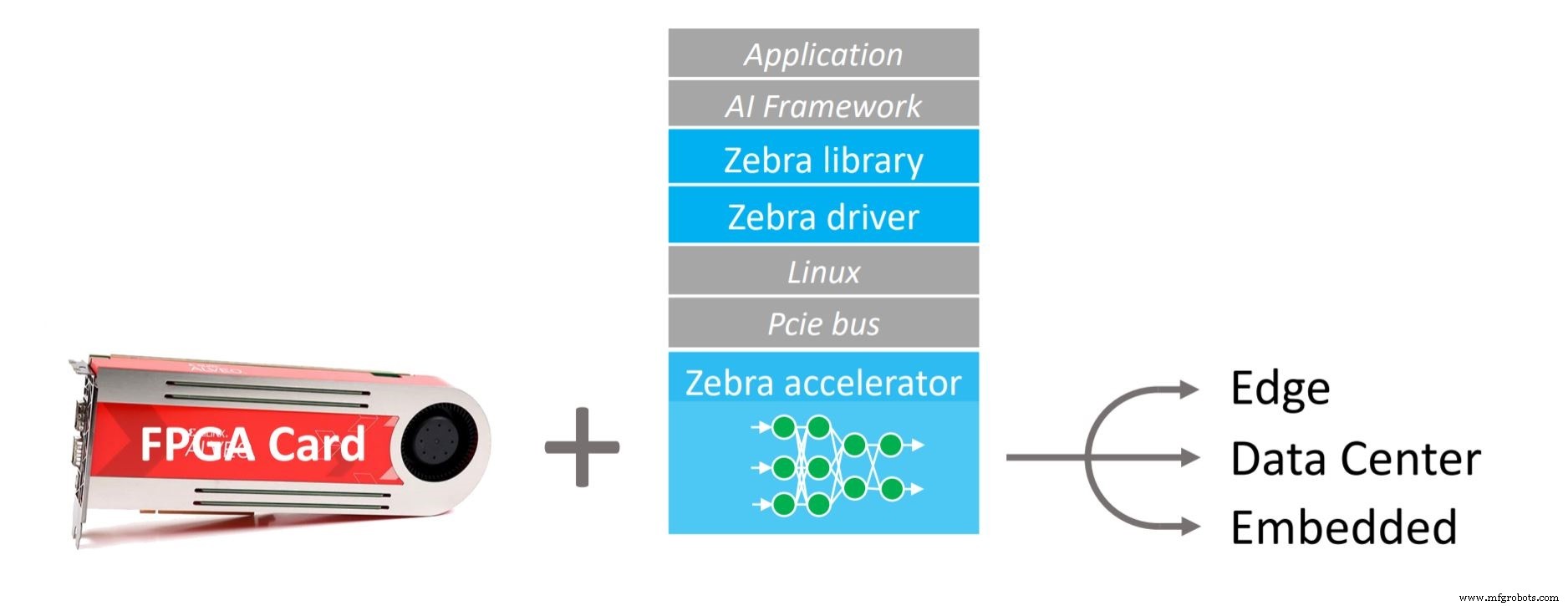

Zebra’s stack. The technology spans data‑center, edge, and embedded use cases (Image: Mipsology)

Why Not Vitis?

Xilinx already offers Vitis, a comprehensive toolchain for bringing FPGAs to data scientists and developers. So why partner with a third party? Larzul says the answer is simple: performance and simplicity.

“We’re doing better,” he said. “And we’re doing it right.”

Zebra does not depend on Vitis or on XDNN, Xilinx’s neural-network accelerator engine. Instead, it runs on Mipsology’s own compute engine, which natively supports customers’ existing convolutional neural network (CNN) models. XDNN, while capable, is less suited to custom networks and can be cumbersome to integrate.

“We’re positioned to let FPGAs take on GPUs head‑on, matching them in performance, cost, and usability,” Larzul added.

Zebra’s stack in detail. Zebra hides the hardware complexity so that moving from GPUs or CPUs to FPGAs is a seamless switch (Image: Mipsology)

Cost is a primary driver for many customers. “They want lower hardware costs without redesigning the network,” Larzul said. “By eliminating retraining and network modification, we reduce the non‑recurring engineering cost.”

FPGAs also offer superior reliability. Because they consume less silicon real estate and operate at lower temperatures than GPUs, they incur lower long‑term maintenance costs—an important consideration for data‑center operators.

“Total cost of ownership includes more than just the board price,” Larzul emphasized. “It also covers keeping the system operational.”

Zebra is engineered to close the performance gap. While FPGAs typically deliver fewer tera‑operations per second (TOPS) than other accelerators, Zebra’s compute engine maximizes efficiency. “Our FPGA can process more images per second than a GPU with six times the TOPS,” Larzul claimed.
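The reasoning behind that claim can be sketched with simple arithmetic: delivered throughput depends on how much of the peak TOPS a device actually sustains, not on the peak figure alone. The snippet below is only an illustration. The function name, the utilization values, and the per-image operation count are all assumptions chosen for the example, not figures from Mipsology or Xilinx.

```python
def images_per_second(peak_tops, utilization, ops_per_image):
    """Effective throughput: sustained ops per second divided by ops per image."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    return peak_tops * 1e12 * utilization / ops_per_image


# Hypothetical numbers for illustration only.
OPS_PER_IMAGE = 8e9  # assumed cost of one inference, in operations

# An accelerator with modest peak TOPS but high sustained utilization...
fpga = images_per_second(peak_tops=10, utilization=0.80, ops_per_image=OPS_PER_IMAGE)

# ...versus one with six times the peak TOPS but low sustained utilization.
gpu = images_per_second(peak_tops=60, utilization=0.10, ops_per_image=OPS_PER_IMAGE)

print(f"FPGA: {fpga:.0f} images/s, GPU: {gpu:.0f} images/s")
```

Under these assumed values, the device with one sixth of the peak TOPS comes out ahead because it sustains more than six times the utilization. Real utilization depends on the network, batch size, and memory behavior.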

How is this achieved? Although the details are proprietary, Larzul noted that Zebra avoids pruning (which would degrade accuracy without retraining) and steers clear of extreme quantization below 8-bit, preserving model accuracy.
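For readers unfamiliar with 8-bit quantization, here is a minimal sketch of the general idea, assuming a simple symmetric, per-tensor scheme. It is a textbook illustration, not Zebra's proprietary method, and the weight values and function names are hypothetical.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the 8-bit integers."""
    return [x * scale for x in quantized]


# Hypothetical trained weights, for illustration only.
weights = [0.42, -1.27, 0.003, 0.9, -0.5]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding error per value is at most half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, scale, max_error)
```

Because each value is rounded to the nearest step, the error is bounded by half the scale. With fewer bits the step grows and so does the error, which is one reason quantizing far below 8 bits tends to call for retraining.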

Beyond CNNs, which dominate image and video tasks, Zebra also supports BERT, Google’s NLP model, which relies on similar underlying mathematics. Future releases may target LSTM and RNN architectures, though these present additional challenges.

The Team Behind Zebra

Founded in 2015, Mipsology employs around 30 R&D engineers in France, with a lean business development squad in California. The company has raised $7 million, including a $2 million award from a French government innovation competition in 2019.

Mipsology’s core talent comes from EVE, an ASIC emulator firm acquired by Synopsys in 2012 for its ZeBu verification products. EVE’s technology—leveraging thousands of interconnected FPGAs to replicate ASIC behavior—has been used by major ASIC vendors worldwide.

With 12 pending patents, Mipsology works closely with Xilinx and remains compatible with third-party accelerator cards, such as Western Digital’s small-form-factor (SFF U.2) card and Advantech’s Vega-4001.

>> This article was originally published on our sister site, EE Times.
