Nvidia Launches Drive AGX Orin, 200‑TOPS AI Chip with 3× Efficiency for Autonomous Vehicles

The company will also make its AI models for autonomous vehicles available to developers.

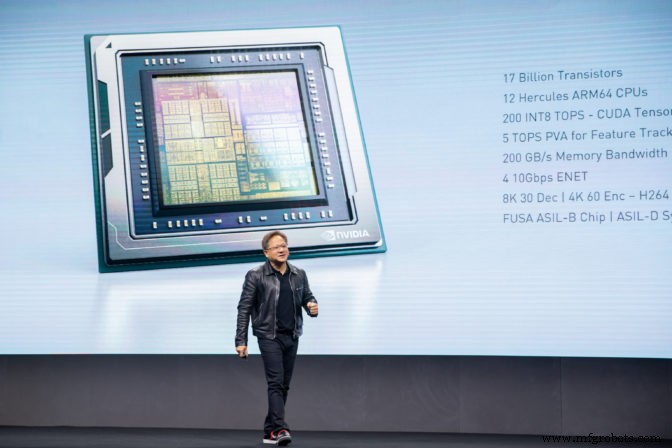

At the company’s GPU Technology Conference (GTC) in Suzhou, China, Nvidia CEO Jensen Huang took to the stage to introduce Drive AGX Orin, the next‑generation SoC in the company’s automotive portfolio.

Orin follows Drive AGX Xavier, Nvidia’s current flagship SoC for AI acceleration in vehicles, which launched just under two years ago at CES 2018.

Orin packs 17 billion transistors, almost double Xavier’s 9 billion, and delivers nearly 7x Xavier’s performance: 200 TOPS on INT8 data. Despite the higher transistor count, Orin also offers 3x the power efficiency of Xavier, the company said.
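As a rough cross-check, the quoted ratios can be combined into a few implied figures. The sketch below uses only the numbers stated above; because "nearly 7x" is a rounded vendor claim, the results are approximations, and it assumes the performance and efficiency ratios refer to the same peak operating point. The function and key names are illustrative.

```python
# Figures quoted in the article (rounded vendor claims).
ORIN_TOPS = 200.0           # INT8 tera-operations per second
PERF_RATIO = 7.0            # "nearly 7x" Xavier, so treat as an upper bound
EFFICIENCY_RATIO = 3.0      # performance per watt, relative to Xavier
ORIN_TRANSISTORS = 17e9
XAVIER_TRANSISTORS = 9e9


def implied_figures() -> dict:
    """Derive back-of-envelope values from the quoted ratios."""
    return {
        # If Orin is ~7x Xavier, Xavier sits somewhere near this value.
        "xavier_tops": ORIN_TOPS / PERF_RATIO,
        # 7x the work at 3x the efficiency implies ~7/3 the power draw
        # (assumption: both ratios describe the same peak workload).
        "relative_power": PERF_RATIO / EFFICIENCY_RATIO,
        # "Almost double" the transistor count.
        "transistor_ratio": ORIN_TRANSISTORS / XAVIER_TRANSISTORS,
    }


if __name__ == "__main__":
    for name, value in implied_figures().items():
        print(f"{name}: {value:.2f}")
```

In other words, a 3x efficiency gain does not mean lower absolute power: at full throughput, the ratios imply that Orin would draw more than twice what Xavier does.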

“This is a huge boost in performance, but it’s not just about the TOPS. It’s about the architecture being designed for very complex workloads, diverse and redundant algorithms that run inside an autonomous vehicle. Xavier handles today’s workloads; Orin will handle tomorrow’s,” said Danny Shapiro, senior director of automotive at Nvidia.

Nvidia CEO Jensen Huang presents Orin to the audience at the company’s GPU Technology Conference in China (Image: Nvidia)

Orin will combine 12 Arm Hercules 64‑bit CPU cores with next‑generation Nvidia GPU cores and new deep‑learning and computer‑vision accelerators, the details of which were not disclosed.

Designed for Level 2 to Level 5 autonomous vehicles and robotics, Orin can run many neural networks and other applications simultaneously while achieving ISO 26262 ASIL‑D safety levels. Leveraging the Nvidia Drive platform, Orin is software‑compatible with Xavier.

The Orin family will include a range of configurations based on a single architecture and will be available for customer production runs in 2022.

Federated Learning

Nvidia also announced a partnership with Didi, the app‑based transportation provider active in Asia, Latin America and Australia.

Didi will use Nvidia GPUs in its data centre to train machine‑learning algorithms and the Nvidia Drive platform for inference in its Level 4 autonomous vehicles. Didi spun out its autonomous‑driving unit into a separate company in August. It will also launch virtual GPU cloud services, based on Nvidia GPUs, for its customers.

In a separate announcement, Nvidia revealed it will make pre‑trained models for the deep neural networks it developed for Nvidia Drive freely available to autonomous‑vehicle developers. These include models for detecting traffic lights and signs, as well as vehicles, pedestrians and bicycles. They also include algorithms for path perception, gaze detection and gesture recognition.

Orin will offer 200 TOPS, 7x the performance of Xavier at 3x the power efficiency (Image: Nvidia)

Importantly, these models can be customised using tools provided by the company and can be updated using federated learning. In federated learning, each participant trains the model locally at the edge on its own data, which stays private. Only the resulting training updates are sent back, and a central model is then updated with the results from multiple sources.
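To make the train-locally-then-aggregate loop concrete, here is a minimal sketch using federated averaging (FedAvg), a common aggregation scheme. The article does not say which algorithm Nvidia's tools use, so the choice of FedAvg, the toy linear model and every name below (`local_update`, `federated_average`, `run_rounds`) are illustrative assumptions, not part of Nvidia's software.

```python
from typing import List, Sequence, Tuple

Sample = Tuple[float, float]   # one (x, y) training pair
Weights = List[float]          # [slope, intercept] of a toy linear model


def local_update(weights: Weights, data: Sequence[Sample],
                 lr: float = 0.1, epochs: int = 5) -> Weights:
    """Train on one client's private data; the raw data never leaves here."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in data:
            err = (w * x + b) - y      # prediction error for this sample
            grad_w += err * x
            grad_b += err
        w -= lr * grad_w / n           # plain gradient-descent step
        b -= lr * grad_b / n
    return [w, b]


def federated_average(client_weights: Sequence[Weights],
                      client_sizes: Sequence[int]) -> Weights:
    """Combine client models, weighting each by its number of samples."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


def run_rounds(global_weights: Weights,
               client_datasets: Sequence[Sequence[Sample]],
               rounds: int = 300) -> Weights:
    """Repeat: broadcast the global model, train locally, aggregate."""
    sizes = [len(d) for d in client_datasets]
    for _ in range(rounds):
        updates = [local_update(global_weights, d) for d in client_datasets]
        global_weights = federated_average(updates, sizes)
    return global_weights


if __name__ == "__main__":
    # Two hypothetical fleets, each holding private samples of y = 2x + 1.
    fleet_a = [(0.0, 1.0), (1.0, 3.0)]
    fleet_b = [(2.0, 5.0), (3.0, 7.0), (4.0, 9.0)]
    print(run_rounds([0.0, 0.0], [fleet_a, fleet_b]))
```

Only the model weights cross the boundary between a client and the central server in this sketch, which is the property that lets participants keep ownership of their data. A production system would typically add secure transport and other safeguards that are omitted here.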

“The AI autonomous vehicle is a software‑defined vehicle required to operate around the world on a wide variety of datasets,” said Jensen Huang, CEO of Nvidia. “By providing AV developers access to our DNNs and advanced learning tools to optimise them for multiple datasets, we’re enabling shared learning across companies and countries while maintaining data ownership and privacy. Ultimately, we are accelerating the reality of global autonomous vehicles.”
