MLCommons Unveils Latest MLPerf Inference Results: Data Center, Edge, Mobile, and Notebook

MLCommons, the independent organization that curates the MLPerf benchmark suite, has announced the latest round of MLPerf Inference scores. The new results are neatly split into four device classes—data center, edge, mobile, and notebook—making it easier for buyers, vendors, and researchers to compare performance across real‑world workloads.

Device‑Class Segmentation

Separating the scores by device class reflects the distinct form factors and performance profiles of each market. As David Kanter, executive director of MLCommons, told EE Times, “If you’re talking about a notebook, it’s probably running Windows; if you’re talking about a smartphone, you’re probably running iOS or Android.” Because mobile and notebook results are reported separately, buyers can compare the accelerators that are actually relevant to each use case.

State‑of‑the‑Art Models

Unlike the previous round, which focused primarily on vision tasks, the current round adds four production‑grade models, along with a dedicated mobile suite:

- DLRM – a large‑scale recommendation engine

- 3D‑UNet – a medical imaging model for tumor detection in MRI scans

- RNN‑T – a speech‑to‑text engine

- BERT – a natural‑language‑processing transformer

- MobileNetEdge, SSD‑MobileNetv2, DeepLabv3, and MobileBERT – mobile‑optimized models for image classification, object detection, image segmentation, and NLP

These choices were driven by an advisory board that includes industry experts from recommendation, medical imaging, and speech domains, ensuring the benchmarks reflect the most demanding workloads in production today.

Data Center Performance

As anticipated, Nvidia’s GPUs dominated the data‑center class: 85% of all submissions used Nvidia hardware, and the company swept every category it entered. The results also show a widening gap between GPUs and CPUs. On a basic computer‑vision model, the Nvidia A100 delivered roughly 30× the throughput of an Intel Cooper Lake CPU, and on recommendation‑system workloads it was 237× faster than a comparable CPU. Paresh Kharya, Nvidia’s senior director of product management, said that for recommendation tasks a single DGX‑A100 can match the performance of a 1,000‑node CPU cluster.

The only non‑CPU, non‑GPU entrant in this class was Mipsology’s Zebra accelerator, running on a Xilinx Alveo U250 FPGA. Zebra achieved 4,096 ResNet queries per second in server mode versus 5,563 for an Nvidia T4, and 5,011 samples per second offline compared to 6,112 for the T4.
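To put the Zebra and T4 figures on a common footing, each pair can be reduced to a single ratio. The following is a minimal sketch that uses only the four numbers quoted above; the helper `relative_throughput` and the `QUOTED` table are our own illustrative names, not part of MLPerf or its tooling.

```python
# Figures quoted above: Mipsology Zebra (Xilinx Alveo U250) vs. Nvidia T4 on ResNet.
# Each entry maps a scenario to (zebra_throughput, t4_throughput).
QUOTED = {
    "server (queries/s)": (4_096, 5_563),
    "offline (samples/s)": (5_011, 6_112),
}


def relative_throughput(candidate: float, baseline: float) -> float:
    """Fraction of the baseline's throughput the candidate reaches (hypothetical helper)."""
    return candidate / baseline


if __name__ == "__main__":
    for scenario, (zebra, t4) in QUOTED.items():
        ratio = relative_throughput(zebra, t4)
        # Report both views: Zebra as a share of T4, and T4's lead as a multiple.
        print(f"{scenario}: Zebra reaches {ratio:.1%} of the T4; T4 leads by {1 / ratio:.2f}x")
```

Run as a script, this shows the FPGA‑based entrant reaching roughly 74% of the T4's server throughput and roughly 82% of its offline throughput, so the gap is narrower in the offline scenario.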

In the research‑and‑development category, Taiwanese company Neuchips’ RecAccel, an FPGA‑based accelerator for DLRM, performed on par with or below Intel’s Cooper Lake CPUs and fell short of Nvidia’s results. Although these early‑stage prototypes are not yet commercially available, they show the breadth of emerging AI hardware.

Edge‑Tier Outcomes

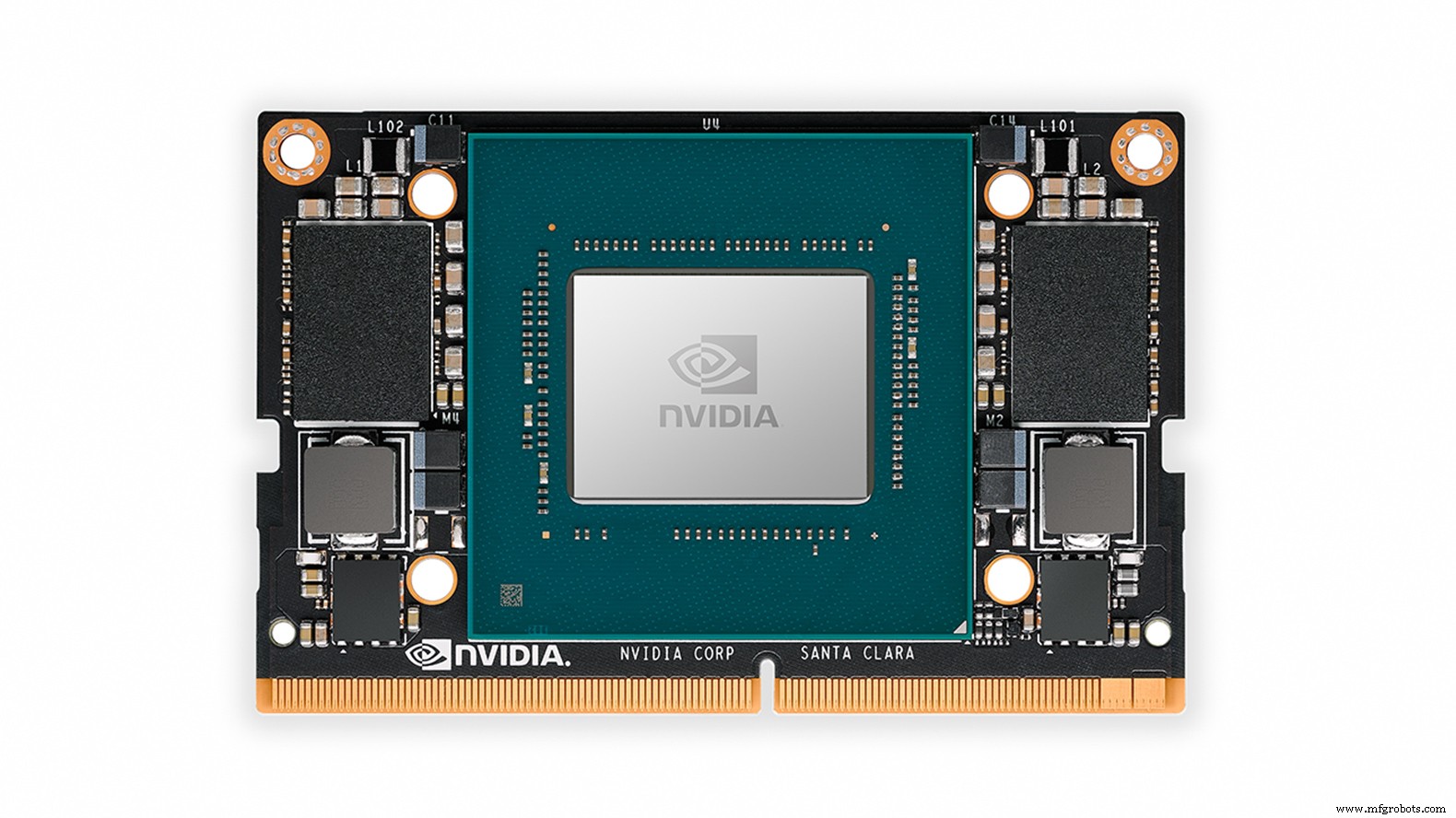

Edge systems were largely powered by Nvidia’s A100, T4, AGX Xavier, and Xavier NX chips. Centaur Technology’s reference design, which pairs its proprietary x86 processor with an AI coprocessor, beat Nvidia’s Tesla T4 on single‑stream ResNet latency but trailed it on throughput. British consultancy dividiti submitted a wide range of scores, from a Raspberry Pi up to an Nvidia AGX Xavier, showing how operating‑system choices and the use of on‑chip accelerators can influence results.
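How can one design lead on single‑stream latency yet trail on throughput? Under MLPerf’s scenario definitions (as we understand them), single‑stream keeps one query in flight at a time and reports 90th‑percentile latency, while offline hands the system every sample up front and reports samples per second, so batching pays off. The sketch below assumes a deliberately simple cost model (a fixed per‑batch overhead plus a per‑sample cost) and made‑up device parameters; `ToyDevice` and both devices are hypothetical, and none of the numbers are Centaur’s or Nvidia’s.

```python
import math
from dataclasses import dataclass


@dataclass
class ToyDevice:
    """Hypothetical accelerator: batch time = overhead + batch_size * per_sample (an assumption)."""
    name: str
    overhead_ms: float    # fixed cost paid once per batch (launch, data transfer, ...)
    per_sample_ms: float  # incremental cost of each extra sample in the batch

    def batch_time_ms(self, batch_size: int) -> float:
        return self.overhead_ms + batch_size * self.per_sample_ms


def p90(latencies_ms: list[float]) -> float:
    """Nearest-rank 90th percentile of a list of latencies."""
    ordered = sorted(latencies_ms)
    return ordered[math.ceil(0.9 * len(ordered)) - 1]


def single_stream_p90_ms(dev: ToyDevice, num_queries: int = 1_000) -> float:
    # Single-stream: each query carries one sample and waits for the previous one.
    latencies = [dev.batch_time_ms(1) for _ in range(num_queries)]
    return p90(latencies)


def offline_samples_per_s(dev: ToyDevice, num_samples: int = 6_400, batch_size: int = 64) -> float:
    # Offline: all samples are available at once, so the device can batch freely.
    full_batches, remainder = divmod(num_samples, batch_size)
    total_ms = full_batches * dev.batch_time_ms(batch_size)
    if remainder:
        total_ms += dev.batch_time_ms(remainder)  # last, partially filled batch
    return num_samples / (total_ms / 1_000)


# Illustrative parameters only, chosen to show the trade-off.
LOW_LATENCY = ToyDevice("low-latency design", overhead_ms=0.2, per_sample_ms=0.8)
BATCH_FRIENDLY = ToyDevice("batch-friendly design", overhead_ms=1.8, per_sample_ms=0.2)

if __name__ == "__main__":
    for dev in (LOW_LATENCY, BATCH_FRIENDLY):
        print(f"{dev.name}: single-stream p90 {single_stream_p90_ms(dev):.1f} ms, "
              f"offline {offline_samples_per_s(dev):,.0f} samples/s")
```

With these made‑up parameters, the first device answers a single image in 1.0 ms versus 2.0 ms, but at batch size 64 the second processes roughly 4,400 samples/s against roughly 1,250. That is the same pattern, in miniature, as a design that wins on latency while losing on throughput.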

New entrants in the research‑and‑development category included Russian company IVA Technologies and Korean startup Mobilint. Neither achieved breakthrough performance, but their inclusion shows that prototype accelerators are reaching a level of software maturity that warrants benchmarking against commercial solutions.

Mobile and Notebook Benchmarks

The mobile class saw three comparable submissions: MediaTek’s Dimensity 820 (in the Xiaomi Redmi 10X 5G), Qualcomm’s Snapdragon 865+ (tested on an Asus ROG Phone 3), and Samsung’s Exynos 990 (used in the Galaxy Note 20 Ultra). Samsung led in image classification, NLP, and image segmentation, while MediaTek led in object detection. No single SoC dominated across all tasks, which underscores the importance of workload‑specific optimization.

The notebook category contained a single entry: an Intel reference design featuring the forthcoming Xe‑LP GPU. With only one submission, detailed comparison is difficult, but the Xe‑LP achieved 2.5× the throughput of the best mobile SoC on image segmentation (DeepLabv3) and 1.15× on object detection (SSD‑MobileNetv2).

Looking Ahead

Kanter emphasizes MLCommons’ commitment to diversity in the benchmark ecosystem. The organization encourages participation from companies other than Nvidia and Intel, including small ones, and its open division allows any network or model to be tested. “We’re also working on adding a power‑measurement dimension for the next round,” Kanter says, inviting the community to help develop the necessary tooling.

The complete, detailed list of MLPerf Inference results is published by MLCommons.

Source: EE Times

