AImotive Launches aiWare3P Neural Network Accelerator for High-Performance Automotive Vision

Hungary‑based AImotive, a leader in automotive AI solutions, has begun shipping its aiWare3P neural network (NN) inference engine intellectual property (IP) to key customers. The aiWare3P core, announced last year, is a purpose‑built accelerator for high‑resolution automotive vision and is designed to support ISO 26262 certification at ASIL A, ASIL B and above.

The IP is available as a fully synthesizable RTL block that can be integrated into a system‑on‑chip (SoC) or deployed as a standalone accelerator. Its low‑level microarchitecture consumes far fewer host‑CPU and shared‑memory resources than competing GPU‑based or SoC solutions, enabling lower latency and higher determinism for real‑world automotive workloads.

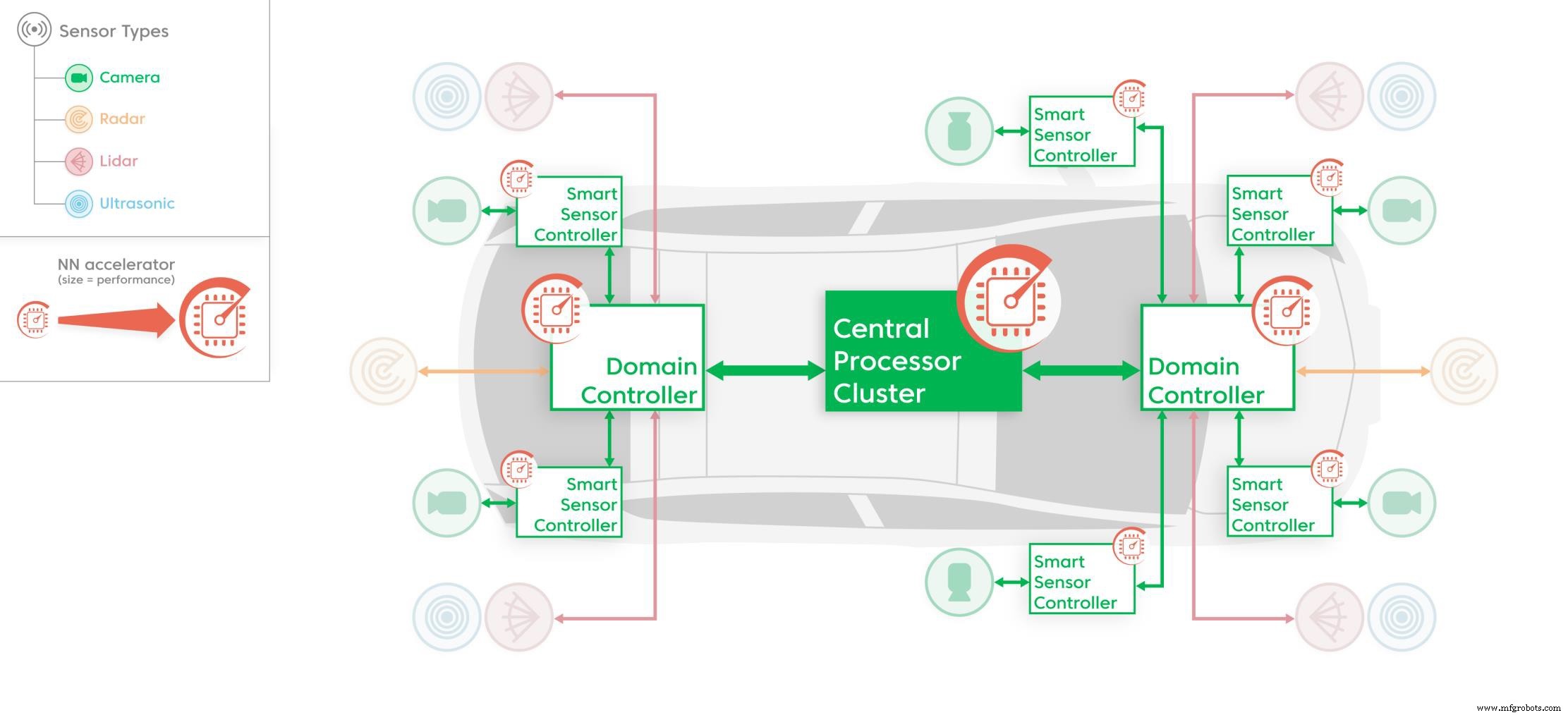

Dedicated NN accelerators like the aiWare3P IP used in various parts of the vehicle electronics platform (Source: AImotive)

“Most chip vendors discuss accelerators in academic terms that don’t translate to production,” explains Tony King‑Smith, AImotive’s executive advisor. “Our design eliminates DSPs and network‑on‑chip (NoC) complexity, focusing solely on automotive inference. This gives us ultra‑low latency from input to output and frees the host CPU for other tasks.”

The aiWare3P core delivers up to 16 TMAC/s (>32 TOPS) per core at 2 GHz, with multi‑core and multi‑chip configurations reaching 50+ TMAC/s (>100 INT8 TOPS). These figures support multi‑camera and heterogeneous sensor platforms common in L2, L2+ and L3 autonomous driving.
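The relationship between the TMAC/s and TOPS figures quoted above can be checked with a few lines of arithmetic. The sketch below assumes the common convention that one multiply‑accumulate counts as two operations; the function names are illustrative and are not part of any AImotive tool.

```python
# Assumption: one multiply-accumulate (MAC) counts as 2 operations
# (one multiply plus one add), the usual convention behind TOPS figures.
OPS_PER_MAC = 2


def tmacs_to_tops(tmacs: float) -> float:
    """Convert tera-MACs per second to tera-operations per second."""
    return tmacs * OPS_PER_MAC


def macs_per_cycle(tmacs: float, clock_ghz: float) -> float:
    """MACs that must complete every clock cycle to sustain the given rate."""
    return tmacs * 1e12 / (clock_ghz * 1e9)


single_core_tops = tmacs_to_tops(16)     # 16 TMAC/s per core
multi_core_tops = tmacs_to_tops(50)      # 50 TMAC/s multi-core / multi-chip
per_cycle = macs_per_cycle(16, 2.0)      # 16 TMAC/s at 2 GHz

print(single_core_tops, multi_core_tops, per_cycle)
```

Under that convention, 16 TMAC/s corresponds to 32 TOPS and 50 TMAC/s to 100 TOPS, and a single core at 2 GHz would need to complete 8,000 MACs per clock cycle to reach its peak rate.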

Built to AEC‑Q100 extended‑temperature specifications, the core includes features that facilitate ASIL‑B and higher certification. Its patented ground‑up design delivers highly deterministic dataflow management and up to 100× more on‑chip memory bandwidth than other accelerators, achieving 95% sustained efficiency on complex deep neural networks (DNNs) with large HD inputs.

The aiWare SDK supports Khronos NNEF and ONNX formats, compiling binaries directly without low‑level DSP or MCU programming. It offers automated FP32‑to‑INT8 quantization with minimal accuracy loss and a suite of DNN performance‑analysis tools, enabling engineers to transition lab‑trained models to production automotive hardware seamlessly.
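To give a feel for what FP32‑to‑INT8 quantization involves, here is a minimal sketch of symmetric per‑tensor quantization, one widely used textbook scheme. It is an assumption for illustration only: the article does not describe the algorithm the aiWare SDK uses, and the function names and sample weights are hypothetical.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127].

    Generic textbook scheme for illustration; not the aiWare SDK's method.
    """
    max_abs = max(abs(v) for v in values)
    # One scale for the whole tensor; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Map INT8 codes back to approximate floating-point values."""
    return [q * scale for q in quantized]


weights = [0.5, -1.0, 0.25, 0.0]          # hypothetical FP32 weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Worst-case rounding error is about half of one quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

The per‑value error introduced by rounding is bounded by roughly half the scale factor, which is why well‑conditioned networks often lose little accuracy at INT8. Production toolchains typically add calibration data and per‑channel scales on top of this basic idea.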

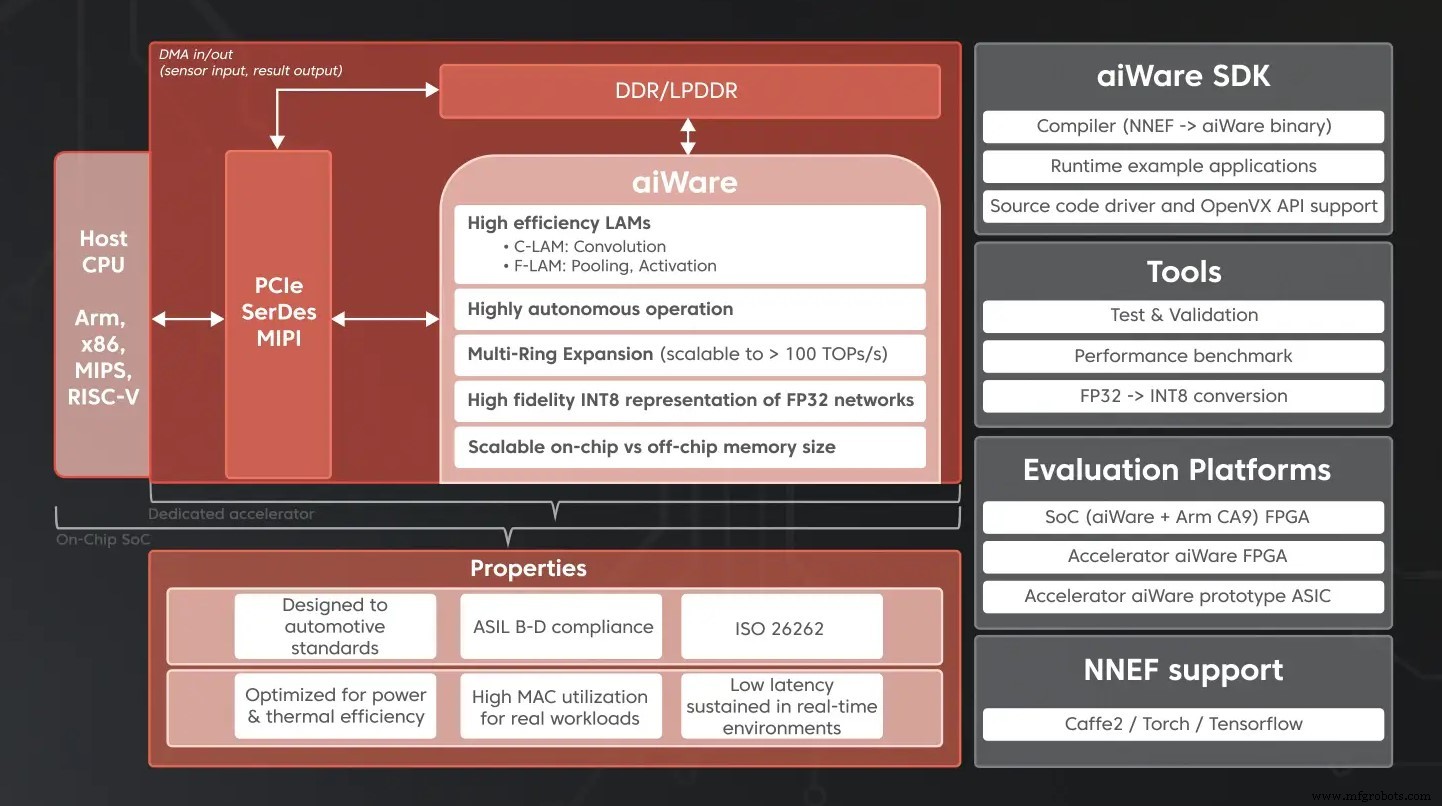

The building blocks of an automotive AI accelerator, including the aiWare hardware IP (Source: AImotive)

Senior Vice President of Hardware Engineering Marton Feher states, “aiWare3P unites all we’ve learned about accelerating neural networks for vision‑based automotive AI. It’s now one of the industry’s most efficient solutions for mass production of L2/L2+/L3 AI.”

Customers are already deploying aiWare3P in production L2/L2+ platforms and exploring advanced heterogeneous sensor fusion. Notable partners include Nextchip, which is integrating the core into its Apache5 Imaging Edge Processor, and ON Semiconductor, which is collaborating with AImotive on a joint demonstration of sensor fusion capabilities.

AImotive will publish updated benchmark results in Q1 2020, emphasizing realistic high‑resolution camera inputs over synthetic 224×224 tests, underscoring its commitment to transparent, application‑centric performance data.
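A rough calculation shows why input resolution matters so much for benchmarks. The sketch below counts the multiply‑accumulates in a single convolution layer at 224×224 and at 1920×1080; the layer shape (3 input channels, 64 output channels, 3×3 kernel) and the choice of 1920×1080 as the "high‑resolution" case are assumptions made for illustration, not figures from AImotive.

```python
def conv_macs(height, width, in_ch, out_ch, kernel=3, stride=1):
    """MACs for one 2D convolution, assuming 'same' padding."""
    out_h = -(-height // stride)   # ceiling division
    out_w = -(-width // stride)
    return out_h * out_w * out_ch * in_ch * kernel * kernel


# Hypothetical first layer: RGB input, 64 filters, 3x3 kernel.
small = conv_macs(224, 224, in_ch=3, out_ch=64)
hd = conv_macs(1080, 1920, in_ch=3, out_ch=64)
ratio = hd / small

print(small, hd, round(ratio, 1))
```

For this layer the 1920×1080 frame needs roughly 41 times as many MACs as the 224×224 input, and the intermediate feature maps grow by the same factor, so memory bandwidth behaviour measured on small inputs may not carry over to camera‑sized ones.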

No Host‑CPU Intervention Required

New features include a broader portfolio of pre‑optimized embedded activation and pooling functions, allowing most NNs to run entirely within the aiWare3P core without host‑CPU intervention. Real‑time data compression reduces external memory bandwidth needs, and cross‑coupling between the C‑LAM convolution engines and F‑LAM function engines makes overlapped and interleaved execution more efficient.

The tile‑based microarchitecture simplifies physical implementation on any process node by easing timing constraints. Logical tile‑based data management scales workloads up to the core’s maximum of 16 TMAC/s without caches, NoCs, or other power‑heavy multi‑core approaches that typically reduce determinism and increase silicon area.
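As a generic illustration of tile‑based workload partitioning (not a description of aiWare internals, which the article does not detail), the sketch below splits a frame into fixed‑size rectangular tiles that could each be processed independently. The frame size, tile size and function name are all hypothetical.

```python
def tile_frame(height, width, tile_h, tile_w):
    """Yield (top, left, h, w) rectangles that cover the frame without overlap.

    Edge tiles are clipped so they never extend past the frame.
    """
    for top in range(0, height, tile_h):
        for left in range(0, width, tile_w):
            yield (top, left,
                   min(tile_h, height - top),
                   min(tile_w, width - left))


# Hypothetical example: a 1920x1080 frame cut into 256x256 tiles.
tiles = list(tile_frame(1080, 1920, 256, 256))
covered = sum(h * w for _, _, h, w in tiles)

print(len(tiles), tiles[-1], covered)
```

Because every tile has a known size and position, the amount of data moved and computed per tile is fixed ahead of time, which is one general reason tiling schemes tend to be easier to schedule deterministically than cache‑based ones.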

The RTL for aiWare3P will be available to all customers from January 2020, accompanied by an upgraded SDK featuring improved compilers and real‑time fine‑grained hardware analysis tools.
