IBM’s Phase‑Change Artificial Neurons Mimic Brain Spikes for Ultra‑Dense, Low‑Power Computing

Manuel Le Gallo’s research will inspire a new generation of extremely dense neuromorphic computing systems. (Source: IBM Research – Zurich)

Drawing inspiration from the human brain's spike‑based communication, IBM Research in Zurich has engineered artificial neurons that emulate the "integrate‑and‑fire" behavior of biological neurons, the kind of spiking that occurs when we touch a hot plate. These devices can detect patterns and uncover correlations in large data sets at power budgets and densities comparable to those of biological tissue, a goal scientists have pursued for decades.

The breakthrough is detailed in the paper “Stochastic phase‑change neurons,” which appears on the cover of Nature Nanotechnology today. The work demonstrates how phase‑change memory elements can be configured to act as ultra‑dense, low‑energy neurons capable of unsupervised learning at high speed.

I spoke with co‑author and IBM Research – Zurich scientist Manuel Le Gallo, who is completing his PhD at ETH Zurich.

How does an artificial neuron function?

Manuel Le Gallo: An artificial neuron implements an “integrate‑and‑fire” circuit. It accumulates incoming signals; when the summed potential reaches a threshold, it emits a spike. This simple accumulation can realize surprisingly complex computations.
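
To make the accumulate-and-threshold idea concrete, here is a minimal software sketch in Python. It is only an illustration of the general integrate-and-fire principle, not a model of the phase-change device physics in the paper; the function name, the threshold value, and the reset-to-zero rule are all assumptions made for this example.

```python
def integrate_and_fire(inputs, threshold=1.0):
    """Return the time steps at which a simple integrate-and-fire neuron spikes.

    Hypothetical sketch: `inputs` is a sequence of numeric input signals,
    one per time step. The reset-to-zero rule is an assumption.
    """
    potential = 0.0
    spike_times = []
    for t, signal in enumerate(inputs):
        potential += signal              # integrate the incoming signal
        if potential >= threshold:       # threshold reached
            spike_times.append(t)        # fire: record a spike
            potential = 0.0              # reset and start accumulating again
    return spike_times


# Five equal inputs of 0.5 reach the threshold of 1.0 on every second step.
print(integrate_and_fire([0.5, 0.5, 0.5, 0.5, 0.5]))  # prints [1, 3]
```

In this sketch the neuron stays silent until enough input has accumulated, so weak or isolated signals never produce a spike on their own.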

What inspired the design?

ML: The goal is to mirror the computational power of a biological neuron. While traditional artificial neurons rely on CMOS transistors, our approach uses phase‑change devices to achieve the same functionality with dramatically lower power and higher density.

What was your role?

ML: My research on phase‑change physics over the past three years informed the design of these neurons and enabled us to interpret the experimental data presented in the paper.

“We think our approach will be more efficient, especially for processing large amounts of data.”

—Manuel Le Gallo, IBM Research scientist

Where can artificial neurons be applied?

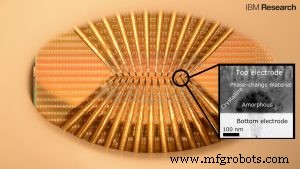

The tiny squares are contact pads that are used to access the nanometer‑scale phase‑change cells (not visible). Sharp probes touch the pads to alter the phase configuration in response to neuronal input. Each probe set accesses a population of 100 cells.

ML: Our experiments show that a single neuron with multiple plastic synapses can detect temporal correlations across many binary event streams—such as social media, weather, or IoT sensor data—by firing when the events align.
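
One way to picture correlation detection with plastic synapses is the toy Python sketch below. It is a simplified stand-in, not the learning rule or the hardware used in the paper: the leaky accumulation, the weight-update rule, and every name and parameter value (`detect_correlation`, `threshold`, `decay`, `lr`) are assumptions chosen for illustration. Each binary stream feeds the neuron through a weighted synapse; whenever the neuron fires, synapses whose stream was active at that moment are strengthened and the rest are weakened.

```python
def detect_correlation(streams, threshold=0.9, decay=0.5, lr=0.1):
    """Toy neuron with plastic synapses over binary event streams.

    Hypothetical sketch: `streams` is a list of equal-length lists of 0/1
    events. Returns (spike_times, weights).
    """
    weights = [0.5] * len(streams)       # assumed initial synaptic weights
    potential = 0.0
    spike_times = []
    for t in range(len(streams[0])):
        events = [s[t] for s in streams]
        # Leaky integration: old potential decays, weighted events add up.
        potential = potential * decay + sum(w * e for w, e in zip(weights, events))
        if potential >= threshold:
            spike_times.append(t)
            # Plasticity: strengthen synapses that were active at the spike,
            # weaken the others, keeping weights within [0, 1].
            weights = [
                min(1.0, w + lr) if e else max(0.0, w - lr)
                for w, e in zip(weights, events)
            ]
            potential = 0.0
    return spike_times, weights


# Streams 0 and 1 always fire together; streams 2 and 3 fire alone.
streams = [
    [1, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0],
    [1, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0],
    [0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0],
    [0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 1],
]
spike_times, weights = detect_correlation(streams)
print(spike_times)  # prints [0, 3, 6, 9]
```

In this example the neuron fires only at the steps where the two correlated streams coincide, and their weights grow while the weights of the uncorrelated streams shrink.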

Why is neuromorphic computing more efficient?

ML: Conventional architectures separate memory and logic, forcing costly data shuttling. In neuromorphic systems, computation and storage coexist, eliminating memory‑to‑logic transfers and dramatically reducing energy consumption, especially for massive data sets.

Manuel Le Gallo earned his Master’s in Electrical Engineering at ETH Zurich and completed his thesis at IBM. He is now pursuing a PhD.

About the author: Millian Gehrer is a summer intern at IBM Research – Zurich, interviewing scientists to learn about their work. In the fall, he will begin studying Computer Science at Princeton University.
