Fundamentals of Facial Recognition: How AI Identifies Faces

For centuries the human face has been the most intuitive means of identification. Today it remains one of the most widely used biometric methods, largely because it can be captured from photos, videos, or live feeds without requiring the subject’s active cooperation.

Facial recognition encompasses a series of steps—detection, alignment, feature extraction, and comparison—to identify or verify individuals in visual media. Despite practical hurdles, it is now integral to healthcare, law enforcement, transportation, security, and smart‑home solutions.

In this guide you’ll learn:

- What facial recognition really is

- Key algorithm categories

- Core stages of a facial‑recognition system

- Essential building blocks

- How a commercial SDK implements the technology

What is Facial Recognition?

Facial recognition is a biometric technique that uses deep‑learning models to analyze and store unique facial characteristics. Software typically locates around 80 landmark points (eyes, nose, mouth, jawline, and so on) and encodes the face as a compact feature vector. New images are then matched against stored vectors to confirm identity. Because it needs only a camera image and no physical contact, the approach is minimally invasive, and it can be highly accurate when capture conditions are good.

Algorithm Classification

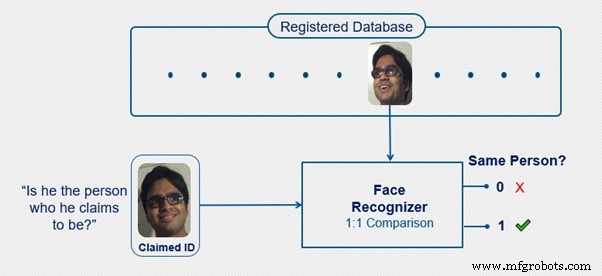

Systems perform two primary tasks: verification (1‑to‑1 comparison) and identification (1‑to‑n search). Verification answers “Is this the claimed person?” Because it compares the input against a single stored template, it is generally faster and less error‑prone. Identification answers “Who is this?” and must search the entire database to locate the individual.
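To make the difference concrete, here is a minimal sketch of both tasks. It assumes faces have already been reduced to feature vectors, uses cosine similarity as the comparison measure, and picks an illustrative threshold of 0.8; the function names, the toy three‑dimensional vectors, and the threshold are assumptions for illustration, not values from any particular product.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def verify(probe, claimed_template, threshold=0.8):
    """1-to-1: compare the probe with the single claimed identity."""
    return cosine_similarity(probe, claimed_template) >= threshold

def identify(probe, gallery, threshold=0.8):
    """1-to-n: score every enrolled template and return the best name,
    or None if even the best score is below the threshold."""
    best_name, best_score = None, -1.0
    for name, template in gallery.items():
        score = cosine_similarity(probe, template)
        if score > best_score:
            best_name, best_score = name, score
    return best_name if best_score >= threshold else None

# Toy vectors (assumption): real feature vectors have far more dimensions.
gallery = {"alice": [0.9, 0.1, 0.2], "bob": [0.1, 0.8, 0.5]}
probe = [0.85, 0.15, 0.25]

print(verify(probe, gallery["alice"]))  # one comparison
print(identify(probe, gallery))         # one comparison per enrolled person
```

Note that `verify` does a single comparison regardless of database size, while the cost of `identify` grows with the number of enrolled people, which is why verification is the cheaper task.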

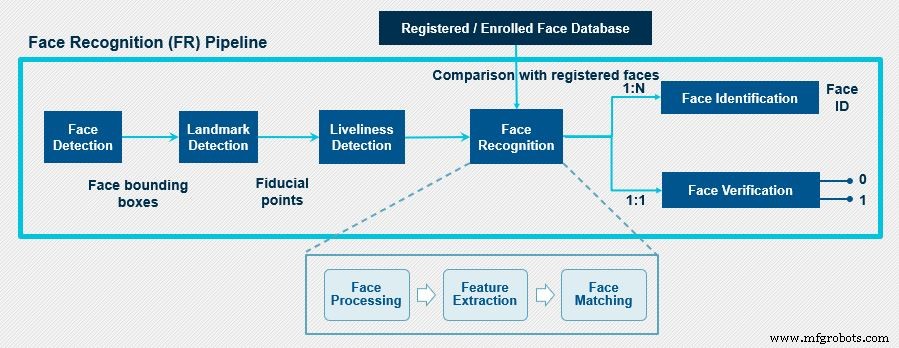

Stages of a Facial Recognition System

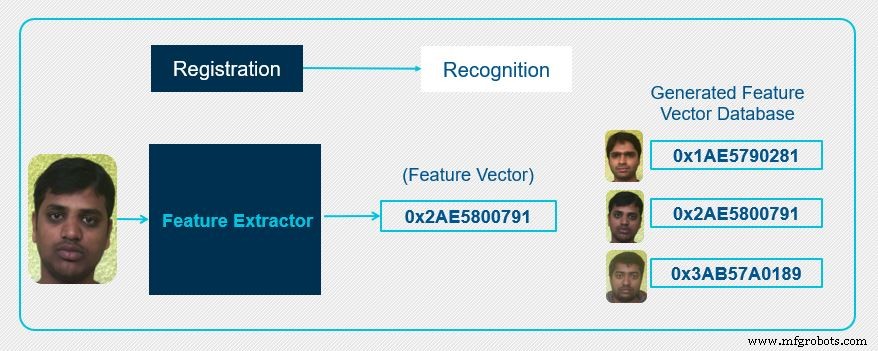

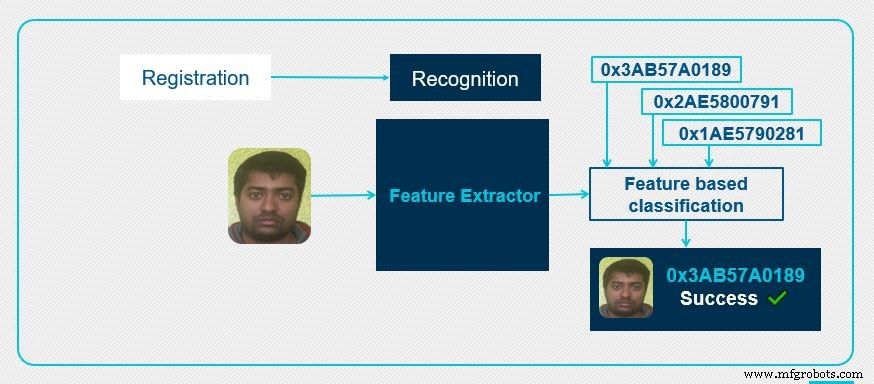

The workflow consists of two main stages: registration and recognition.

Registration enrolls known faces, generating a unique feature vector for each. These vectors populate the database for future comparisons.

Recognition extracts a feature vector from an input image and compares it against the stored vectors. The system computes the distance to each one and returns the closest match, provided that distance falls within a preset threshold; otherwise the face is treated as unknown.
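The two stages can be sketched as a small in‑memory store. This is only an illustration under stated assumptions: the `FaceDatabase` class is hypothetical, feature extraction is taken as already done, several enrollment samples are averaged into one template (one common choice, not the only one), Euclidean distance is used, and the `max_distance` value of 0.6 is arbitrary.

```python
import math

class FaceDatabase:
    """Toy two-stage store: register() enrolls, recognize() searches."""

    def __init__(self, max_distance=0.6):
        # max_distance is an illustrative threshold, not a recommended value.
        self.max_distance = max_distance
        self.templates = {}

    def register(self, name, vectors):
        """Registration: average one person's sample vectors into a template."""
        dim = len(vectors[0])
        self.templates[name] = [
            sum(v[i] for v in vectors) / len(vectors) for i in range(dim)
        ]

    def recognize(self, vector):
        """Recognition: return (name, distance) for the nearest template,
        or None when even the nearest one is beyond the threshold."""
        best = None
        for name, template in self.templates.items():
            distance = math.dist(vector, template)  # Euclidean distance
            if best is None or distance < best[1]:
                best = (name, distance)
        if best is not None and best[1] <= self.max_distance:
            return best
        return None

db = FaceDatabase()
db.register("alice", [[0.9, 0.1], [0.7, 0.3]])  # two samples, one template
db.register("bob", [[0.1, 0.9]])

print(db.recognize([0.8, 0.25]))  # near alice's template
print(db.recognize([5.0, 5.0]))   # far from every template
```

In practice the threshold is tuned on validation data to balance false accepts against false rejects.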

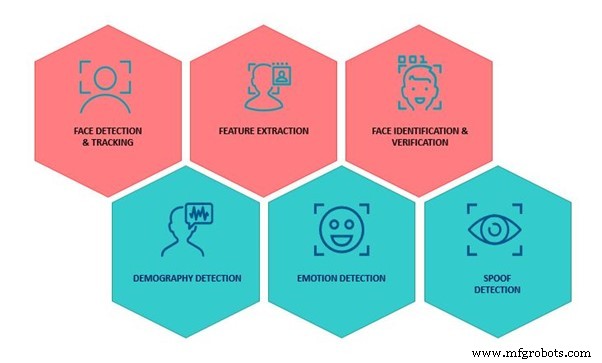

Core Building Blocks

Typical systems include:

- Face detection – locates faces and outputs bounding boxes.

- Landmark detection – identifies key facial points for alignment.

- Liveness detection – prevents spoofing by ensuring the face is live.

- Feature extraction – creates a discriminative vector.

- Matching – compares vectors and generates similarity scores.

After detection, the face is aligned using landmark points, which normalizes scale, rotation, and translation. Liveness checks guard against spoofing with printed photos or replayed videos. Finally, the recognition module must cope with pose, expression, illumination, and occlusion variations before matching.
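As a rough illustration of the alignment step, the sketch below derives the scale, rotation, and translation that move two detected eye centres onto fixed target positions. It assumes only the two eye landmarks are used (real systems often fit more points), and the target coordinates are hypothetical values for a small face crop. It treats 2‑D points as complex numbers, so a similarity transform is simply `z -> a*z + b`.

```python
import cmath
import math

def eye_alignment_transform(left_eye, right_eye,
                            target_left=(38.0, 52.0),
                            target_right=(74.0, 52.0)):
    """Return (scale, angle_degrees, apply) for the similarity transform
    that maps the detected eye centres onto the target positions.

    With points as complex numbers the transform is z -> a*z + b, where
    abs(a) is the scale factor and the phase of a is the rotation.
    The default targets are illustrative assumptions.
    """
    s1, s2 = complex(*left_eye), complex(*right_eye)
    d1, d2 = complex(*target_left), complex(*target_right)
    a = (d2 - d1) / (s2 - s1)  # combined rotation and scale
    b = d1 - a * s1            # translation

    def apply(point):
        z = a * complex(*point) + b
        return (z.real, z.imag)

    return abs(a), math.degrees(cmath.phase(a)), apply

# A tilted face: the right eye sits lower in the image than the left eye.
scale, angle, apply = eye_alignment_transform((100.0, 100.0), (172.0, 172.0))
print(scale, angle)
print(apply((100.0, 100.0)), apply((172.0, 172.0)))
```

The same `apply` function can then be used to map every other landmark, or the pixel grid itself, into the normalized frame before feature extraction.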

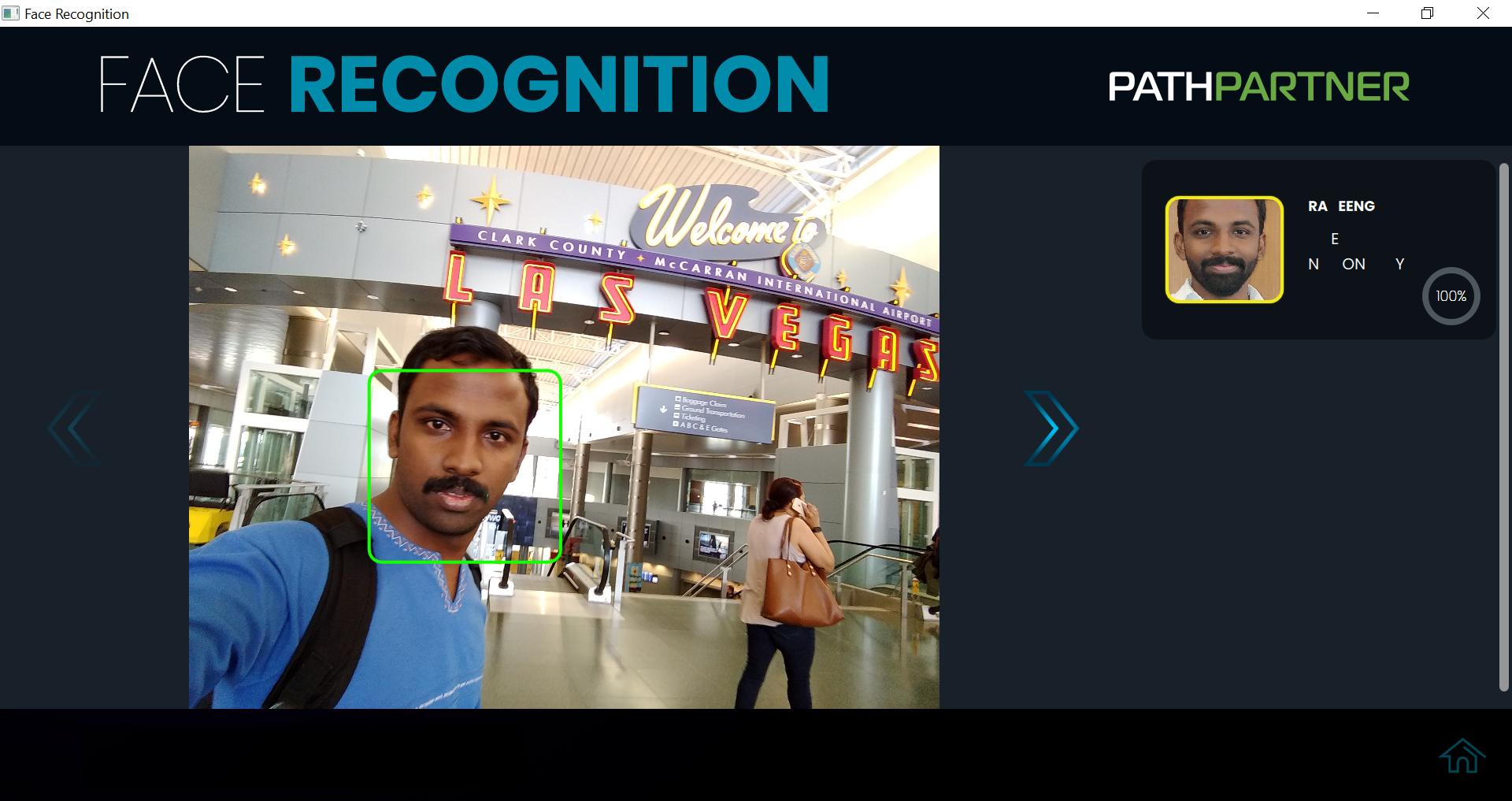

Example: PathPartner’s Facial Recognition SDK

PathPartner offers a licensed SDK that implements the six critical tasks of facial recognition using machine‑learning and computer‑vision algorithms. It supports two deployment modes:

- Low‑complexity model (~10 MB) for resource‑constrained devices.

- High‑complexity model (~90 MB) for full‑service edge platforms.

Optimized for embedded hardware from Texas Instruments, Qualcomm, Intel, ARM, and NXP, the SDK can also run on cloud servers.

Building a CNN‑Based Facial Recognition System

Convolutional Neural Networks (CNNs) dominate modern facial recognition because, when trained on sufficiently varied data, they learn features that stay robust under occlusion, lighting, and pose variations. The typical pipeline involves:

- Data collection – curated datasets covering pose, illumination, expression, occlusion, gender, background, ethnicity, age, eye, and appearance.

- Model design – lightweight CNNs for driver‑monitoring systems and deeper networks for attendance systems.

- Training & optimization – pre‑training on public datasets (for example FDDB for face detection and LFW for face verification) and fine‑tuning on in‑house data.

Handling Real‑World Challenges

- Illumination – convert RGB to NIR‑like images or fine‑tune models on NIR data.

- Pose & Expression – estimate head pose from landmarks, generate canonical frontal views, and align faces.

- Occlusion – train models to focus on the eye and forehead region for masked faces.

- Appearance – robust representation and matching resilient to hairstyle, aging, and cosmetics.

Conclusion

Facial recognition is arguably the most natural biometric modality. Recent advances, highlighted by a NIST report, show dramatic accuracy gains over the past five years. Today the technology powers retail, automotive, banking, healthcare, and marketing solutions, and it also opens the door to emotion and behavior analysis.
