TI Unveils First Automotive SoC with Dedicated AI Accelerator

Texas Instruments (TI) has, for the first time, embedded a dedicated AI accelerator into its automotive system‑on‑chip (SoC), underscoring the rapid integration of deep‑learning capabilities in advanced driver‑assist systems (ADAS). The new block combines TI’s latest C7x digital signal processor (DSP) IP with a proprietary matrix‑multiplication accelerator.

The TDA4VM, one of the first Jacinto 7 SoCs, combines sensor pre‑processing and data analytics to handle input from a single 8‑megapixel front‑mounted camera or, alternatively, from four to six 3‑megapixel cameras operating concurrently with radar, LiDAR and ultrasonic sensors. These sensors support ADAS features such as automated parking, while deep learning enables sensor fusion and object detection.

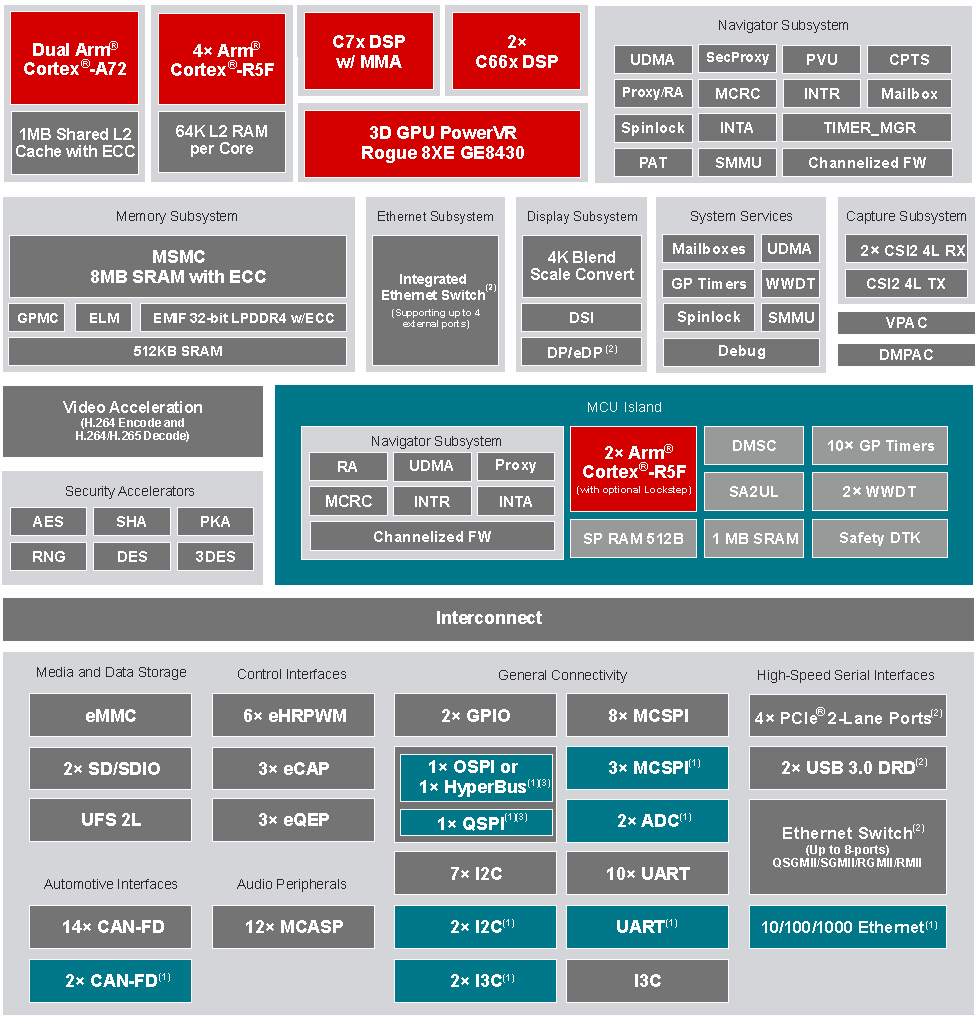

The TDA4VM includes a deep‑learning accelerator for ADAS, analysing camera, radar, LiDAR and ultrasonic data (Image: TI)

DSP Plus MMA

At a TI press event in Munich, Germany, EETimes Europe spoke with Sameer Wasson, vice‑president and business unit manager of TI’s processor business, and Curt Moore, general manager and product line manager of TI’s Jacinto product line.

“This is the first SoC that has the C7x DSP on it,” said Moore. “We added vector instructions tailored for computer vision, but also built on the DSP heritage of handling massive data streams—crunching them efficiently and delivering results in real time.”


Figure: TDA4VM Functional Diagram. (Source: Texas Instruments)

The new C7x DSP is built to process large data streams and perform complex mathematical operations under demanding real‑time constraints. TI pairs this data‑streaming capability with a matrix‑multiplication accelerator, “the MMA”, to speed up deep‑learning workloads.
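The division of labor described here, with a streaming front end feeding a dedicated matrix engine, can be illustrated with a tiled matrix multiplication. This is a conceptual model only: the square tile size `TILE` and the `mma_tile()` helper standing in for the accelerator are hypothetical, and the sketch does not reflect TI's actual C7x or MMA interfaces, tile dimensions or data types.

```python
"""Conceptual sketch: a streaming loop feeding fixed-size tiles to a matmul engine.

Hypothetical: TILE and mma_tile() are illustrative stand-ins, not TI's C7x/MMA
interfaces or the real hardware tile dimensions.
"""
from typing import List

Matrix = List[List[int]]

TILE = 4  # hypothetical tile edge length for the "accelerator" model


def mma_tile(a: Matrix, b: Matrix, c: Matrix, i0: int, j0: int, k0: int) -> None:
    """Accumulate one TILE x TILE block product into c (the 'accelerator' role)."""
    n, m, p = len(a), len(b), len(b[0])
    for i in range(i0, min(i0 + TILE, n)):
        for j in range(j0, min(j0 + TILE, p)):
            acc = 0
            for k in range(k0, min(k0 + TILE, m)):
                acc += a[i][k] * b[k][j]
            c[i][j] += acc


def tiled_matmul(a: Matrix, b: Matrix) -> Matrix:
    """Play the 'DSP' role: walk the tiles and stream each block to mma_tile()."""
    n, m = len(a), len(a[0])
    if len(b) != m:
        raise ValueError("inner dimensions must match")
    p = len(b[0])
    c = [[0] * p for _ in range(n)]
    for i0 in range(0, n, TILE):
        for j0 in range(0, p, TILE):
            for k0 in range(0, m, TILE):
                mma_tile(a, b, c, i0, j0, k0)
    return c


if __name__ == "__main__":
    print(tiled_matmul([[1, 2, 3], [4, 5, 6]], [[7, 8], [9, 10], [11, 12]]))
```

Splitting the work this way keeps the inner block product fixed in size, which is the part a hardware accelerator can implement efficiently, while the outer loops (the data movement) stay in programmable code.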

Sameer Wasson (Image: TI)

“We lovingly call it the MMA,” said Wasson. “With libraries like TIDL (Texas Instruments Deep Learning), the MMA’s complexity is abstracted away; developers program through TIDL, while the C7x delivers data in and out at unprecedented speed.”

The TDA4VM targets ADAS systems with power envelopes between 5 W and 20 W. In practice, front‑camera solutions typically stay under 7 W, whereas more sophisticated use cases—such as automatic valet parking—can approach the 20 W upper limit.

TI argues that deploying a more capable SoC can actually lower overall system cost for front‑camera applications.

“With the right deep‑learning models, you can avoid stereo cameras,” Wasson said. “A single, lower‑cost lens can deliver equivalent performance, thanks to the powerful engine that compensates for reduced sensor input.”

Range of Compute

Curt Moore (Image: TI)

The TDA4VM’s deep‑learning engine delivers 8 TOPS. Although the TDA4VM is the flagship Jacinto 7 launch, Moore positions it as a mid‑range part; future variants will span both lower and higher compute levels. For example, a 2 TOPS device may suffice for driver monitoring or occupancy detection.
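TOPS figures are easier to compare when converted into a per‑frame compute budget. A minimal sketch, assuming the frame rate, the number of camera streams and the sustained fraction of peak throughput are user‑supplied, hypothetical inputs rather than TI specifications:

```python
"""Back-of-envelope compute budget per camera frame.

Assumptions (hypothetical, not TI figures): frame rate, camera count and the
fraction of peak TOPS a real network sustains are illustrative inputs.
"""


def ops_per_frame(peak_tops: float, fps: float, cameras: int = 1,
                  utilization: float = 1.0) -> float:
    """Return operations available per frame for each camera stream.

    peak_tops   -- peak throughput in tera-operations per second
    fps         -- frames per second processed from each camera
    cameras     -- number of camera streams sharing the accelerator
    utilization -- fraction of peak actually sustained (0 < u <= 1)
    """
    if fps <= 0 or cameras <= 0 or not 0 < utilization <= 1:
        raise ValueError("fps and cameras must be positive; 0 < utilization <= 1")
    return peak_tops * 1e12 * utilization / (fps * cameras)


if __name__ == "__main__":
    tiers = (("8 TOPS (TDA4VM)", 8.0), ("2 TOPS (lower-tier example)", 2.0))
    for label, tops in tiers:
        # Hypothetical operating point: 30 fps, one camera, 50% utilization.
        budget = ops_per_frame(tops, fps=30, cameras=1, utilization=0.5)
        print(f"{label}: {budget / 1e9:.1f} GOPs per frame")
```

Because the budget scales linearly with peak TOPS, the 2 TOPS tier gets a quarter of the 8 TOPS budget at any fixed operating point, which is consistent with reserving it for lighter workloads.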

“One of the beauties of the automotive market is that all these use‑cases coexist,” Wasson said. “Even when OEMs roll out a new platform, a single SoC can serve multiple vehicle lines, each with distinct requirements. The challenge then is ensuring software compatibility across that spectrum.”

Moore highlighted the breadth of vehicles now incorporating ADAS, from entry‑level models priced at $10k–12k to premium cars above $100k.

“Drivers in a $100k vehicle expect a different ADAS experience than those in a $12k model,” he noted. “A $3k ADAS package feels basic in the former but premium in the latter.”

He also pointed out budget constraints: “Even large automakers may only spend $10 million annually on development. That cost must be amortized over a relatively small fleet, unlike handset makers that ship tens of millions of units.”
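Moore's amortization point comes down to simple division: a fixed development budget spread over the number of units shipped. The sketch uses the $10 million figure from the quote; the vehicle and handset volumes are hypothetical placeholders, not data from TI or any automaker.

```python
"""Amortizing a fixed development budget over production volume.

The $10 million figure comes from the article; the unit volumes below are
hypothetical placeholders chosen only to show the scaling.
"""


def dev_cost_per_unit(dev_budget_usd: float, units: int) -> float:
    """Spread a one-time development spend evenly across `units` shipped."""
    if units <= 0:
        raise ValueError("units must be positive")
    return dev_budget_usd / units


if __name__ == "__main__":
    budget = 10_000_000  # $10 million, per Moore's example
    # Hypothetical volumes: one vehicle platform vs. one high-volume handset.
    for label, units in (("vehicle platform", 200_000), ("handset", 20_000_000)):
        print(f"{label}: ${dev_cost_per_unit(budget, units):,.2f} per unit")
```

At those assumed volumes, each vehicle carries $50.00 of development cost versus $0.50 per handset, a 100× difference that grows or shrinks directly with the volume ratio.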

Volume production of the TDA4VM is slated to begin in the second half of 2020, with pre‑production units and the TDA4VMXEVM evaluation module already available.
