How Sensor Fusion Enhances Reliability, Security, and Efficiency in Autonomous Systems

Sensor fusion remains a cornerstone of modern technology, especially as the Internet of Things expands and autonomous vehicles and advanced driver-assist systems (ADAS) become mainstream. Though the concept dates back to the 1960s, the industry is still learning which sensor streams to combine and how to extract useful information from them. The right mix depends on the specific application and the balance between cost, risk, and benefit.

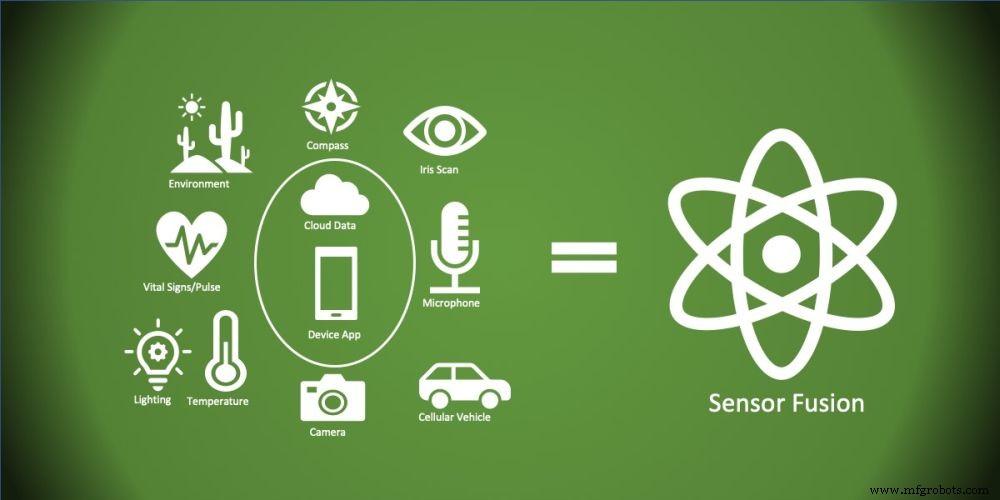

How much sensor fusion is enough depends on the application and the cost/risk benefits (Image: SAR Insight and Consulting)

In an era where individual actors and state‑backed agencies pose increasing threats to autonomous systems, sensor fusion is more critical than ever. While the political debate often centers on 5G’s information‑security risks, malware that can sabotage or extort autonomous‑system operators presents a tangible danger. System architects must recognize these threats and avoid costly missteps that have plagued automotive and aviation giants. For instance, Ford’s 1970s Pinto recall underscored the human cost of insufficient safety measures, and Boeing’s decision to charge extra for critical sensor‑fusion redundancy on the 737 MAX continues to ripple across its supply chain.

Beyond safety, the economic and health advantages of advanced sensor‑fusion—whether monitoring human activity or powering industrial automation—are already evident.

Fault Tolerance and Resilience

Every sensor and predictive model carries inherent error. By aggregating multiple measurements of the same variable, systems gain reliability and resilience, mitigating failures that could otherwise be catastrophic. Redundancy adds cost and complexity, but the Ford and Boeing cases illustrate that cutting corners on it can lead to disastrous outcomes.
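One common way to aggregate several measurements of the same variable is inverse-variance weighting, where each reading counts in proportion to how trustworthy it is. The sketch below is only illustrative: it assumes the sensor errors are independent and that each sensor's variance is known, and the function name and example values are hypothetical.

```python
def fuse_measurements(readings):
    """Fuse independent readings of one quantity by inverse-variance weighting.

    readings: list of (value, variance) pairs; every variance must be positive.
    Returns (fused_value, fused_variance).
    """
    if not readings:
        raise ValueError("need at least one reading")
    # A sensor with a small variance gets a large weight.
    weights = [1.0 / var for _, var in readings]
    total = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, readings)) / total
    # Under the independence assumption, the fused variance is never larger
    # than the variance of the best single sensor.
    fused_variance = 1.0 / total
    return fused_value, fused_variance


# Hypothetical example: three range sensors observing the same distance (meters).
readings = [(10.2, 0.04), (9.9, 0.01), (10.5, 0.25)]
value, variance = fuse_measurements(readings)
print(f"fused distance: {value:.3f} m, variance: {variance:.5f}")
```

The fused variance here is lower than that of the best individual sensor, which is the statistical basis for the reliability gain described above. If sensor errors are correlated, this simple weighting overstates the benefit.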

Attack Resistance

Attackers constantly seek ways to disrupt sensor-based systems. Robust data-fusion strategies, coupled with secure protocols and AI-driven anomaly detection, can help shield operations from such intrusions. Typical attack vectors include:

- Signal spoofing of LiDAR and cameras;

- Signal cancellation or interference, for example against ABS magnetic sensors, and physical tampering such as vandalized traffic signs;

- Side‑channel leaks where implanted malware harvests sensitive information via sensors.
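As a simple illustration of how redundancy helps against spoofing, the sketch below cross-checks several sensors that measure the same quantity and flags any reading that sits far from the group median. It is a minimal statistical check, not the AI-driven detection mentioned above; the sensor names, the values, and the 3.5 cutoff (a common rule of thumb for the modified z-score) are assumptions for illustration.

```python
from statistics import median


def flag_outliers(readings, threshold=3.5):
    """Return names of sensors whose reading deviates strongly from the group median.

    readings: dict mapping sensor name -> value for the same physical quantity.
    threshold: cutoff on the modified z-score.
    """
    values = list(readings.values())
    center = median(values)
    # Median absolute deviation: a spread estimate that a single bad reading
    # cannot easily distort.
    mad = median([abs(v - center) for v in values])
    if mad == 0:
        # Most sensors agree exactly, so any reading that differs is suspect.
        return [name for name, v in readings.items() if v != center]
    return [
        name
        for name, v in readings.items()
        if 0.6745 * abs(v - center) / mad > threshold
    ]


# Hypothetical obstacle-distance readings (meters); one sensor is being spoofed.
readings = {"lidar": 12.1, "radar": 12.3, "camera": 11.9, "ultrasonic": 3.0}
print(flag_outliers(readings))
```

A check like this only helps while the compromised sensors remain a minority. An attacker who can spoof most of the inputs consistently would shift the median itself.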

Human‑Activity Multi‑Sensor Fusion

Combining wearable and ambient sensors to interpret human behavior delivers superior health outcomes and cost savings—critical as populations age. Use cases span eldercare, fall detection, security surveillance, athlete monitoring, first‑responder status, and navigation aids for the impaired.

Data Fusion in the Network

Historically, fusion and analytics occurred on central servers or in the cloud. The miniaturization and cost reduction of sensors now enable edge‑level AI and machine learning. Future hybrid architectures will layer data fusion across three tiers:

- Low‑level fusion on smart devices or gateways aggregating multiple inputs;

- Mid‑level fusion at hub gateways and edge compute nodes for deeper analytics across a broader device set;

- High‑level fusion in data centers or the cloud, offering the widest system perspective.
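The three tiers can be pictured as successive summarization steps, each working on a wider set of devices but on more compact data. The following sketch assumes a simple case in which every tier only averages values; the function names, the summary format, and the temperature data are hypothetical and stand in for whatever analytics a real deployment would run.

```python
from statistics import mean


def low_level_fuse(samples):
    """Device or gateway tier: reduce raw samples to a compact summary."""
    return {"mean": mean(samples), "count": len(samples)}


def mid_level_fuse(device_summaries):
    """Edge tier: merge summaries from several devices, weighted by sample count."""
    total = sum(s["count"] for s in device_summaries)
    merged = sum(s["mean"] * s["count"] for s in device_summaries) / total
    return {"mean": merged, "count": total}


def high_level_fuse(site_summaries):
    """Cloud tier: fleet-wide mean plus each site's deviation from it."""
    fleet = mid_level_fuse(list(site_summaries.values()))
    deviations = {
        site: s["mean"] - fleet["mean"] for site, s in site_summaries.items()
    }
    return fleet, deviations


# Hypothetical temperature samples (degrees C) from two sites.
site_a = mid_level_fuse([
    low_level_fuse([20.0, 22.0]),
    low_level_fuse([21.0, 21.0, 21.0, 21.0]),
])
site_b = mid_level_fuse([low_level_fuse([30.0, 30.0])])
fleet, deviations = high_level_fuse({"site_a": site_a, "site_b": site_b})
print(fleet, deviations)
```

Only summaries travel upward in this sketch, which is one reason to fuse at the edge: raw samples stay local, and the cloud tier still sees enough to compare sites.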

Lower Operating Costs

Sensor fusion extends the reach and utility of autonomous devices—UAVs, robotics, and more—cutting operating expenses. Benefits include automated collision avoidance for inspection drones, remote driver intervention in largely autonomous transport, and reduced labor costs in remote‑operations scenarios.

Trends

Miniaturization and cost cuts continue across sensors, processors, and connectivity, fueled by consumerization in industrial and IoT ecosystems. CES 2020 showcased MEMS sensor breakthroughs, such as smaller LiDAR mirrors that benefit automotive and intelligent transportation systems.

The Kalman filter remains the standard algorithm for sensor fusion. It estimates system state recursively, alternating a model-based prediction with a correction from each new measurement. In increasingly complex systems, architects also employ machine learning and neural networks to counter sophisticated signal-injection attacks.
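To make the predict-and-correct cycle concrete, here is a minimal one-dimensional Kalman filter. It assumes the tracked quantity follows a random-walk model (it stays roughly constant between steps) and that the noise variances are known; the function name, parameters, and readings are illustrative rather than taken from any particular library.

```python
def kalman_1d(measurements, meas_var, process_var, x0=0.0, p0=1.0):
    """Scalar Kalman filter for a slowly varying quantity (random-walk model).

    measurements: iterable of noisy readings.
    meas_var: measurement noise variance (R).
    process_var: process noise variance (Q).
    x0, p0: initial state estimate and its variance.
    Returns a list of (estimate, variance) pairs, one per measurement.
    """
    x, p = x0, p0
    history = []
    for z in measurements:
        # Predict: the state is assumed unchanged, but uncertainty grows.
        p = p + process_var
        # Update: the Kalman gain balances the prediction against the reading.
        k = p / (p + meas_var)
        x = x + k * (z - x)
        p = (1.0 - k) * p
        history.append((x, p))
    return history


# Hypothetical noisy readings of a quantity whose true value is near 1.0.
readings = [1.1, 0.9, 1.05, 0.97, 1.02, 0.99]
for estimate, variance in kalman_1d(readings, meas_var=0.5, process_var=1e-4):
    print(f"estimate={estimate:.3f} variance={variance:.4f}")
```

Fusing several sensors follows the same pattern: each sensor's reading is applied as its own update step with that sensor's measurement variance. Real systems usually track a vector state (position, velocity, heading), which turns these scalars into matrices.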

By thoughtfully integrating sensor fusion, designers and architects can strengthen system integrity, reliability, and robustness—protecting people, property, and economic interests from both malfunction and malicious activity.

>> This article was originally published on our sister site, EE Times Europe.
