Adding Bias Nodes to a Multilayer Perceptron in Python

This article explains how to incorporate bias nodes into a multilayer perceptron (MLP) using Python, and evaluates their effect on classification performance.

Welcome to the All About Circuits neural‑network series, authored by Director of Engineering Robert Keim. If you haven’t yet explored the other lessons, you’ll find them listed below. They provide a solid foundation for understanding the concepts discussed in this post.

- How to Perform Classification Using a Neural Network: What Is the Perceptron?

- How to Use a Simple Perceptron Neural Network Example to Classify Data

- How to Train a Basic Perceptron Neural Network

- Understanding Simple Neural Network Training

- An Introduction to Training Theory for Neural Networks

- Understanding Learning Rate in Neural Networks

- Advanced Machine Learning with the Multilayer Perceptron

- The Sigmoid Activation Function: Activation in Multilayer Perceptron Neural Networks

- How to Train a Multilayer Perceptron Neural Network

- Understanding Training Formulas and Backpropagation for Multilayer Perceptrons

- Neural Network Architecture for a Python Implementation

- How to Create a Multilayer Perceptron Neural Network in Python

- Signal Processing Using Neural Networks: Validation in Neural Network Design

- Training Datasets for Neural Networks: How to Train and Validate a Python Neural Network

- How Many Hidden Layers and Hidden Nodes Does a Neural Network Need?

- How to Increase the Accuracy of a Hidden Layer Neural Network

- Incorporating Bias Nodes into Your Neural Network

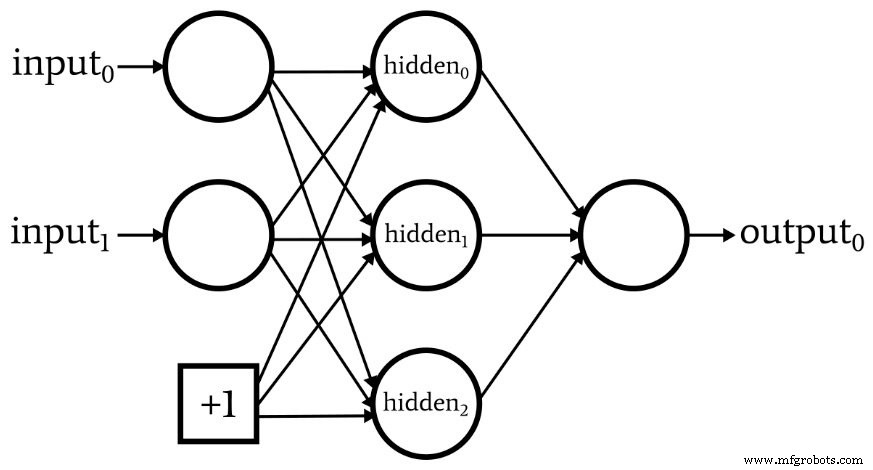

Bias nodes are additional inputs that provide a constant value—typically +1—directly to a perceptron’s input or hidden layers. They allow the network to shift decision boundaries without altering the input data itself. This article presents two practical methods for adding bias nodes and shows how to assess their impact on model accuracy.
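To see why a constant input helps, consider a sample at the origin. The sketch below is only an illustration: the weights, the bias weight, and the `logistic` helper are assumed values and names, not taken from the series code. Without a bias input, the weighted sum at the origin is always zero, so the node's output cannot move away from 0.5; with a +1 bias input, the bias weight shifts it.

```python
import numpy as np

def logistic(z):
    """Standard logistic (sigmoid) activation."""
    return 1.0 / (1.0 + np.exp(-z))

# Hypothetical weights, chosen only for illustration.
weights = np.array([0.8, -0.5])
bias_weight = -2.0  # weight attached to the constant +1 bias input

x = np.array([0.0, 0.0])  # an all-zero input sample

# Without a bias input, the weighted sum at the origin is always 0,
# so the output is logistic(0) = 0.5 whatever the weights are.
output_no_bias = logistic(np.dot(x, weights))

# With a +1 bias input appended, the bias weight shifts the weighted sum.
x_with_bias = np.append(x, 1.0)
weights_with_bias = np.append(weights, bias_weight)
output_with_bias = logistic(np.dot(x_with_bias, weights_with_bias))

print(output_no_bias, output_with_bias)
```

Training can adjust `bias_weight` like any other weight, which is how the network moves its decision boundary away from the origin.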

Method 1: Adding Bias via the Spreadsheet

When your training or validation data reside in a spreadsheet, you can insert the bias column there. The only change needed in the Python code is the input‑layer dimensionality.

Steps:

- Insert a new column in the spreadsheet and fill it with the desired bias value (usually +1).

- Adjust the input‑layer dimensionality in your code to account for the new column.

This approach is quick and requires only that one small code change, making it ideal for prototyping.
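One way to avoid editing the dimensionality by hand is to derive it from the loaded data. The following is a minimal sketch under stated assumptions: a small in-memory CSV stands in for the exported spreadsheet, the column layout (features, then the bias column, then the target) is hypothetical, and the variable names are illustrative.

```python
import io
import numpy as np

# Stand-in for an exported spreadsheet: two features, a bias column of 1s,
# and a target column. This layout is an assumption for the sketch.
csv_text = """0.2,0.7,1,0
0.9,0.1,1,1
0.4,0.5,1,0
"""

data = np.loadtxt(io.StringIO(csv_text), delimiter=",")

inputs = data[:, :-1]   # every column except the last (features + bias)
targets = data[:, -1]   # last column holds the expected output

# Deriving the input dimensionality from the data means the bias column
# is counted automatically.
input_dim = inputs.shape[1]
print(input_dim)
```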

Method 2: Integrating Bias Directly in Python

For more flexibility—such as adding bias to hidden layers—you’ll modify the network architecture in code. Below is a concise example that adds bias nodes to both the input and hidden layers.

Updating Input‑Layer Dimensionality

import numpy as np

# Load training data

training_data = np.array(...) # shape: (samples, features)

training_count = training_data.shape[0]

# Append a column of +1s for the input bias

bias_column = np.ones((training_count, 1))

training_data = np.hstack((training_data, bias_column))

# Repeat for validation data (assumed to be loaded the same way as training_data)

validation_count = validation_data.shape[0]

validation_data = np.hstack((validation_data, np.ones((validation_count, 1))))

Here, np.ones creates a column of ones that represents the constant bias input. The np.hstack function then appends this column to the existing feature matrix.
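Appending the bias column also changes the shape of the input-to-hidden weight matrix, which needs one extra row for the bias weights. The sketch below illustrates this with hypothetical sizes and randomly generated data; `weights_input_hidden` and the other names are assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, for illustration only.
sample_count, feature_count, hidden_dim = 5, 3, 4

training_data = rng.uniform(-1, 1, size=(sample_count, feature_count))
training_data = np.hstack((training_data, np.ones((sample_count, 1))))

# The input dimensionality now includes the bias column.
input_dim = training_data.shape[1]  # feature_count + 1

# The weight matrix needs one row per input node, including the row
# that holds the weights attached to the bias input.
weights_input_hidden = rng.uniform(-1, 1, size=(input_dim, hidden_dim))

# Weighted sums for every sample and every hidden node at once.
pre_activation = training_data @ weights_input_hidden
print(pre_activation.shape)
```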

Adding a Hidden‑Layer Bias Node

# During feedforward computation; hidden_dim counts the bias node

for node in range(hidden_dim - 1):

    # Compute the weighted sum for each non-bias hidden node

    z = np.dot(input_vector, weights_input_hidden[:, node])

    post_activation[node] = activation_function(z)

# Insert the bias node value (+1) as the last hidden node

post_activation[hidden_dim - 1] = 1.0

When hidden_dim includes the bias node, the loop stops before the last node, and the bias value is assigned to that last position afterward. Adjust the corresponding validation loop in the same way.
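The same step can be written without an explicit loop. This is a sketch rather than the series' implementation: it assumes `hidden_dim` counts the bias node, uses a logistic activation, and introduces a hypothetical helper named `feedforward_hidden`.

```python
import numpy as np

def logistic(z):
    """Standard logistic (sigmoid) activation."""
    return 1.0 / (1.0 + np.exp(-z))

def feedforward_hidden(input_vector, weights_input_hidden, hidden_dim):
    """Return hidden-layer outputs; the last of hidden_dim nodes is the bias."""
    post_activation = np.empty(hidden_dim)
    # Only the first hidden_dim - 1 nodes are computed from the inputs.
    pre_activation = input_vector @ weights_input_hidden[:, :hidden_dim - 1]
    post_activation[:hidden_dim - 1] = logistic(pre_activation)
    # The final node ignores the inputs and always outputs the bias value.
    post_activation[hidden_dim - 1] = 1.0
    return post_activation

rng = np.random.default_rng(1)
input_dim, hidden_dim = 3, 5  # hypothetical sizes; hidden_dim counts the bias
weights_input_hidden = rng.uniform(-1, 1, size=(input_dim, hidden_dim - 1))
input_vector = rng.uniform(-1, 1, size=input_dim)

hidden_output = feedforward_hidden(input_vector, weights_input_hidden, hidden_dim)
print(hidden_output)
```

Because the bias node has no incoming weights, the weight matrix here has only `hidden_dim - 1` columns; the hidden-to-output weights still need one entry per hidden node, bias included.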

Choosing Different Bias Values

Although +1 is conventional, you can experiment with other constants. For the input bias, multiply bias_column by the desired value. For the hidden bias, modify the assignment in the feedforward loop accordingly.
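For the input bias, one possible way to make the constant configurable is a small helper built on `np.full`. The function name `append_bias` and its `bias_value` parameter are hypothetical, introduced only for this sketch.

```python
import numpy as np

def append_bias(data, bias_value=1.0):
    """Return a copy of data with a constant bias column appended."""
    bias_column = np.full((data.shape[0], 1), bias_value)
    return np.hstack((data, bias_column))

samples = np.zeros((2, 2))
with_default_bias = append_bias(samples)          # last column is +1
with_custom_bias = append_bias(samples, 0.5)      # last column is 0.5
print(with_custom_bias)
```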

Evaluating Bias Impact

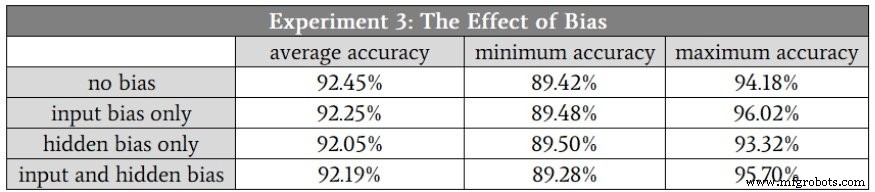

In Part 16 of the series, the MLP struggled to classify the high‑complexity Experiment 3 dataset. To determine whether bias nodes could help, we performed ten independent training runs with a hidden layer of seven units, comparing four configurations: no bias, input bias only, hidden bias only, and both biases.

The results show minimal differences: bias nodes did not significantly improve accuracy for this particular dataset. That does not rule them out elsewhere. Bias nodes can be beneficial in certain contexts, but they are not a universal performance booster.
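A comparison like the one described above could be organized with a small harness. The sketch below is an assumption about how one might structure it, not the code used for the article's experiment: `train_and_validate` is a hypothetical callable that you would supply, taking two bias flags and a seed and returning a validation accuracy.

```python
from itertools import product
from statistics import mean

def compare_bias_configurations(train_and_validate, runs=10):
    """Average validation accuracy over several runs for each bias setup.

    train_and_validate is a hypothetical callable supplied by the reader:
    train_and_validate(input_bias, hidden_bias, seed) -> accuracy (float).
    """
    results = {}
    # Four configurations: no bias, hidden only, input only, both.
    for input_bias, hidden_bias in product((False, True), repeat=2):
        accuracies = [
            train_and_validate(input_bias, hidden_bias, seed)
            for seed in range(runs)
        ]
        results[(input_bias, hidden_bias)] = mean(accuracies)
    return results
```

The returned dictionary is keyed by `(input_bias, hidden_bias)`, so `results[(True, False)]` holds the mean accuracy for the input-bias-only configuration. Using a different seed per run keeps the runs independent while leaving the comparison repeatable.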

Conclusion

Bias nodes will not improve classification accuracy in every scenario, but they remain a valuable architectural tool. Incorporating bias support into your codebase ensures you can quickly test its effectiveness on future datasets or model variants. By following the methods outlined above, you can add bias nodes with minimal effort and evaluate their impact systematically.
