Validating Neural Networks for Reliable Signal Processing

Why Validation Matters in Neural‑Network‑Based Signal Processing

This article continues AAC’s neural‑network series by examining the role of validation when neural networks are applied to real‑world signal‑processing tasks. The series includes the following articles:

- How to Perform Classification Using a Neural Network: What Is the Perceptron?

- How to Use a Simple Perceptron Neural Network Example to Classify Data

- How to Train a Basic Perceptron Neural Network

- Understanding Simple Neural Network Training

- An Introduction to Training Theory for Neural Networks

- Understanding Learning Rate in Neural Networks

- Advanced Machine Learning with the Multilayer Perceptron

- The Sigmoid Activation Function: Activation in Multilayer Perceptron Neural Networks

- How to Train a Multilayer Perceptron Neural Network

- Understanding Training Formulas and Backpropagation for Multilayer Perceptrons

- Neural Network Architecture for a Python Implementation

- How to Create a Multilayer Perceptron Neural Network in Python

- Signal Processing Using Neural Networks: Validation in Neural Network Design

- Training Datasets for Neural Networks: How to Train and Validate a Python Neural Network

The Distinct Nature of Neural‑Network Signal Processing

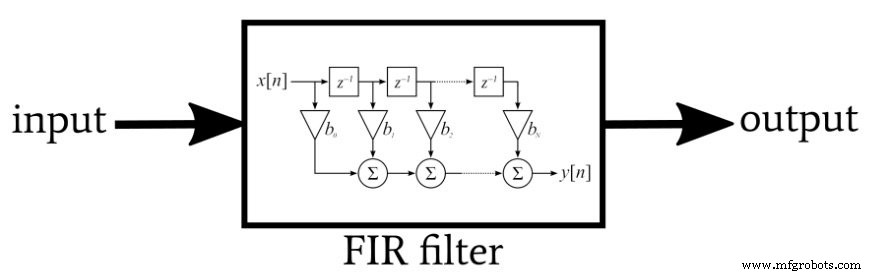

Traditional signal‑processing systems rely on hand‑crafted algorithms. Engineers design a mathematical model—such as a FIR filter—to perform a specific function, then implement that algorithm on a processor tailored to the application’s needs.
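To make the fixed-algorithm idea concrete, here is a minimal Python sketch of an FIR filter. The helper name `fir_filter`, the 4-tap moving-average coefficients, and the step input are illustrative assumptions, not taken from the article; the point is only that the output is fully determined by an explicit formula chosen by the designer.

```python
import numpy as np

def fir_filter(x, taps):
    """Apply an FIR filter: y[n] = sum over k of taps[k] * x[n - k]."""
    # np.convolve computes the sum above; truncating to len(x) keeps the
    # output aligned with the input (causal filter, zero initial state).
    return np.convolve(x, taps)[:len(x)]

# Hypothetical example: a 4-tap moving-average (low-pass) filter.
taps = np.ones(4) / 4
x = np.array([0.0, 0.0, 4.0, 4.0, 4.0, 4.0, 4.0])  # step input
y = fir_filter(x, taps)
print(y)
```

With these values the filter turns the abrupt step into a ramp that settles at 4. Nothing about this behavior depends on training data: changing the result means changing the coefficients by hand.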

Neural networks, by contrast, are empirical. Their internal mathematics forms a generic framework that learns a customized model from data. While the underlying equations are well understood, the algorithm that actually emerges depends on the training set, the learning rate, the initial weights, and other training choices.

A FIR filter is a classic, mathematically precise signal‑processing system.

A neural network’s behavior depends on many factors, unlike a fixed‑algorithm filter.

The contrast resembles the difference between a child learning language by exposure and an adult studying grammar. The child’s brain spontaneously internalizes patterns from sheer data volume, while the adult must explicitly memorize rules. Similarly, a neural network discovers patterns from data; it does not start with explicit linguistic or signal‑processing rules.

The Critical Role of Validation

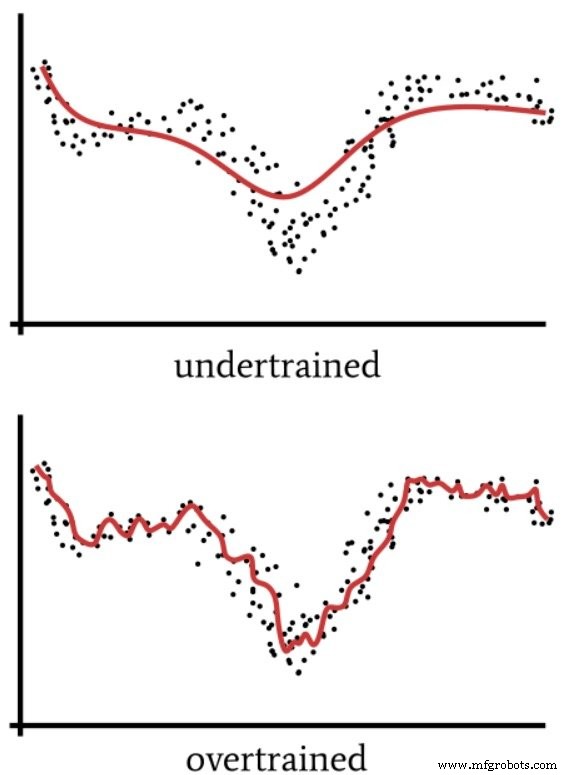

Neural networks can uncover complex patterns that traditional algorithms may miss, but their empirical nature introduces risks. For instance, children sometimes over‑regularize English verbs, saying “goed” instead of “went.” A neural network that over‑fits the training data may similarly produce unexpected errors when faced with new inputs.

Under‑training can leave gaps in the model, while over‑training can lock the network into brittle, over‑specific solutions. Both scenarios can lead to unacceptable failures in safety‑critical or high‑stakes applications—such as autonomous vehicles or aerospace control systems.
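The gap between performance on training data and performance on fresh data can be illustrated with a deliberately simple stand-in. The sketch below fits polynomials rather than a neural network (an assumption made for brevity), and the sine-plus-noise data, sample counts, and degrees are all hypothetical. A low degree plays the role of an under-trained model and a high degree the role of an over-specific one.

```python
import numpy as np
from numpy.polynomial import Polynomial

rng = np.random.default_rng(0)

def noisy_samples(n):
    """Draw n noisy samples of a sine wave (synthetic, illustrative data)."""
    x = rng.uniform(0.0, 1.0, n)
    return x, np.sin(2 * np.pi * x) + rng.normal(0.0, 0.2, n)

x_train, y_train = noisy_samples(12)   # small training set
x_val, y_val = noisy_samples(200)      # data never used for fitting

def mse(model, x, y):
    """Mean squared error of a fitted model on the given data."""
    return float(np.mean((model(x) - y) ** 2))

results = {}
for degree in (1, 3, 9):
    model = Polynomial.fit(x_train, y_train, degree)
    results[degree] = (mse(model, x_train, y_train), mse(model, x_val, y_val))
    print(f"degree {degree}: train MSE {results[degree][0]:.4f}, "
          f"validation MSE {results[degree][1]:.4f}")
```

The training error can only shrink as the degree grows, so it says little about reliability by itself. The error on the unseen samples is the number that reveals whether the more flexible model has started fitting noise.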

Because a training set is always finite, validation—defined here as systematic testing, analysis, and observation on data not used for training—is essential to confirm that the network will perform reliably on real‑world inputs.
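One common way to obtain data not used for training is to set part of the available samples aside before training begins. The following is a minimal sketch of such a hold-out split; the function name `train_validation_split`, the 20% fraction, and the toy arrays are assumptions for illustration only.

```python
import numpy as np

def train_validation_split(samples, labels, validation_fraction=0.2, seed=0):
    """Shuffle the data and reserve a fraction of it for validation."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(samples))            # random sample order
    n_val = int(round(validation_fraction * len(samples)))
    val_idx, train_idx = order[:n_val], order[n_val:]
    return samples[train_idx], labels[train_idx], samples[val_idx], labels[val_idx]

# Hypothetical toy data: 10 samples with 2 features each, binary labels.
samples = np.arange(20, dtype=float).reshape(10, 2)
labels = np.arange(10) % 2

x_train, y_train, x_val, y_val = train_validation_split(samples, labels)
print(len(x_train), "training samples,", len(x_val), "validation samples")
```

Shuffling before the split guards against ordering effects in the original data, and fixing the seed makes the split repeatable so that validation results can be compared across training runs.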

Under‑ and over‑training can produce hidden failures; validation mitigates these risks.

Terminology: Validation vs. Verification

In software engineering, verification checks that a product is built correctly, while validation confirms that the correct product is built. In neural‑network development, we use “validation” to encompass testing, analysis, and observation that ensure the trained model meets system requirements.

Practical Validation Strategies

For experimental or instructional networks, validation typically involves running the trained model on unseen data and measuring classification accuracy. More advanced projects—especially in aerospace—may adopt formal V&V frameworks, such as NASA’s “Verification & Validation of Neural Networks for Aerospace Systems” or the comprehensive text Methods and Procedures for the Verification and Validation of Artificial Neural Networks (293 pages).
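For the simple case described above, measuring classification accuracy on unseen data can be sketched as follows. The `predict` function here is a hypothetical stand-in for a trained network (a fixed threshold rule), and the inputs and targets are made-up values; only the accuracy calculation itself is the point.

```python
import numpy as np

def classification_accuracy(predict, inputs, targets):
    """Return the fraction of samples whose predicted class matches the target."""
    predictions = np.array([predict(x) for x in inputs])
    return float(np.mean(predictions == targets))

# Stand-in for a trained network: any callable mapping one sample to a
# class label would work here. This threshold rule is purely illustrative.
def predict(sample):
    return int(sample.sum() > 1.0)

# Hypothetical samples that were never shown to the model during training.
unseen_inputs = np.array([[0.1, 0.2], [0.9, 0.8], [0.6, 0.7], [0.2, 0.3]])
unseen_targets = np.array([0, 1, 0, 0])

accuracy = classification_accuracy(predict, unseen_inputs, unseen_targets)
print(f"validation accuracy: {accuracy:.2f}")
```

Here the stand-in model misclassifies the third sample, so the reported accuracy is 0.75. Passing the model in as a callable keeps the measurement independent of how the network is implemented.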

Future articles will explore specific validation techniques, from cross‑validation and confusion matrices to rigorous statistical hypothesis testing.
