Building a Multilayer Perceptron Neural Network in Python: A Practical Guide

This article walks you through a Python program that trains a neural network for advanced classification.

This is the 12th entry in AAC's neural network development series. See what else the series offers below:

- How to Perform Classification Using a Neural Network: What Is the Perceptron?

- How to Use a Simple Perceptron Neural Network Example to Classify Data

- How to Train a Basic Perceptron Neural Network

- Understanding Simple Neural Network Training

- An Introduction to Training Theory for Neural Networks

- Understanding Learning Rate in Neural Networks

- Advanced Machine Learning with the Multilayer Perceptron

- The Sigmoid Activation Function: Activation in Multilayer Perceptron Neural Networks

- How to Train a Multilayer Perceptron Neural Network

- Understanding Training Formulas and Backpropagation for Multilayer Perceptrons

- Neural Network Architecture for a Python Implementation

- How to Create a Multilayer Perceptron Neural Network in Python

- Signal Processing Using Neural Networks: Validation in Neural Network Design

- Training Datasets for Neural Networks: How to Train and Validate a Python Neural Network

In this article, we’ll build on our work with simple perceptron networks and extend it to a fully functional multilayer perceptron (MLP) implemented in Python.

Developing Comprehensible Python Code for Neural Networks

After reviewing numerous online resources, I found that many implementations are either overly complex or lack clarity. My goal was to create a program that is both educational and intuitive, allowing readers to see how theory translates into code.

While this script prioritizes readability over raw performance, it remains a solid foundation for experimentation. Real-world applications often demand optimized code to handle large datasets, but for learning and small-scale projects, clarity is more valuable.

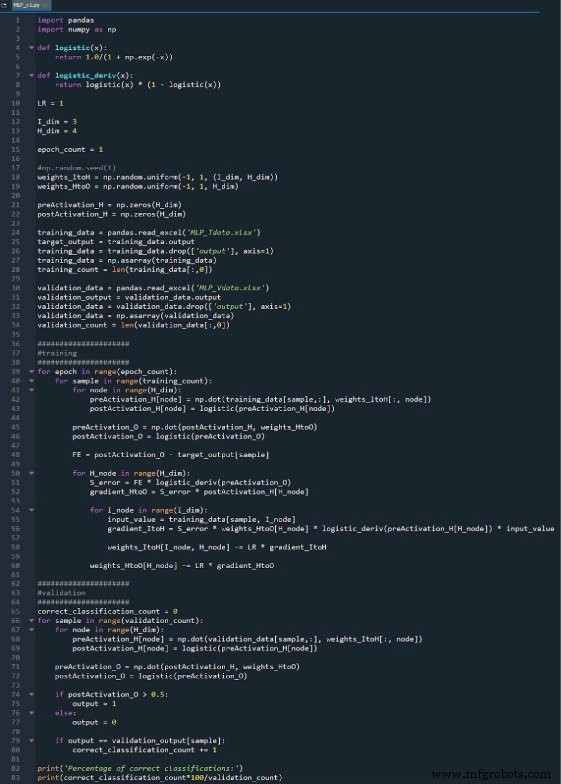

The full Python source is shown as an image below and can be downloaded as MLP_v1.py. The code performs both training and validation; this article focuses on the training portion.

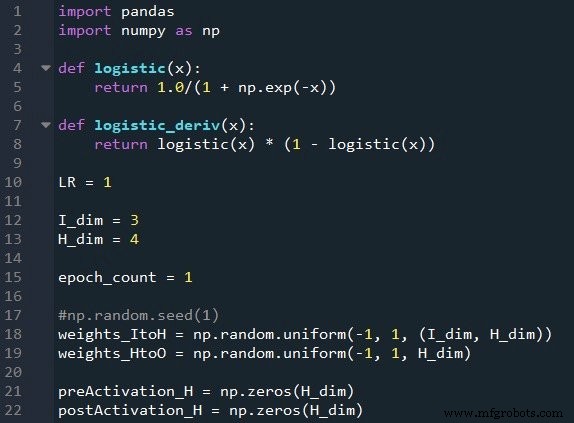

Preparing Functions and Variables

The NumPy library handles all numerical operations, while Pandas facilitates easy import of training data from Excel. We use the logistic sigmoid function for activation and its derivative for backpropagation. The learning rate, input dimensionality, hidden layer size, and epoch count are set here. Random initial weights are generated with np.random.uniform(-1, 1), and a fixed seed ensures reproducibility. Empty arrays for hidden pre‑activation and post‑activation values are also initialized.
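A minimal sketch of this setup step might look as follows. The names (logistic, logistic_deriv, LR, I_dim, H_dim, epoch_count, weights_ItoH, weights_HtoO) and all numeric values are assumptions chosen for illustration and are not necessarily those used in MLP_v1.py.

```python
import numpy as np

def logistic(x):
    """Logistic sigmoid activation."""
    return 1.0 / (1.0 + np.exp(-x))

def logistic_deriv(x):
    """Derivative of the logistic sigmoid with respect to its input."""
    s = logistic(x)
    return s * (1.0 - s)

# Hyperparameters (illustrative values, not taken from the original script)
LR = 1.0          # learning rate
I_dim = 3         # number of input features
H_dim = 4         # number of hidden nodes
epoch_count = 1   # number of passes over the training set

np.random.seed(1)  # fixed seed so the initial weights are reproducible

# Initial weights drawn uniformly from the half-open interval [-1, 1)
weights_ItoH = np.random.uniform(-1, 1, (I_dim, H_dim))
weights_HtoO = np.random.uniform(-1, 1, H_dim)

# Storage for hidden-layer pre-activation and post-activation values
preActivation_H = np.zeros(H_dim)
postActivation_H = np.zeros(H_dim)
```

Storing the input-to-hidden weights as an I_dim by H_dim array means that column `node` holds all the weights feeding hidden node `node`, which keeps the later dot products simple.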

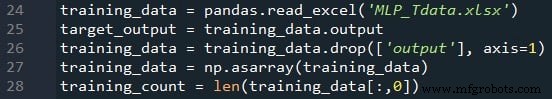

Importing Training Data

Following the procedure from Part 4, we read the dataset from Excel, separate the target column, remove it from the feature matrix, convert the remaining data to a NumPy array, and store the sample count in training_count.
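The import steps described above can be sketched roughly like this. The helper name split_features_and_targets, the target column name "output", and the Excel filename in the comment are all hypothetical; a small in-memory DataFrame stands in for the spreadsheet so the example runs without any file.

```python
import numpy as np
import pandas as pd

def split_features_and_targets(frame, target_column="output"):
    """Separate the target column from the features and return NumPy arrays."""
    targets = frame[target_column].to_numpy()
    features = frame.drop(columns=[target_column]).to_numpy()
    return features, targets

# In a real script the frame would come from a spreadsheet, for example:
#   frame = pd.read_excel("training_data.xlsx")   # hypothetical filename
# A tiny in-memory frame is used here instead (made-up values).
frame = pd.DataFrame({
    "x1": [0.1, 0.4, 0.9],
    "x2": [0.7, 0.2, 0.5],
    "output": [0, 1, 1],   # assumed name for the target column
})

training_data, target_output = split_features_and_targets(frame)
training_count = training_data.shape[0]  # number of training samples
```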

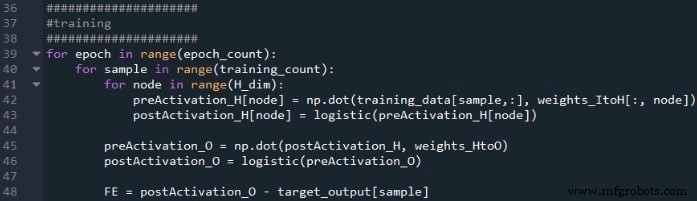

Feedforward Processing

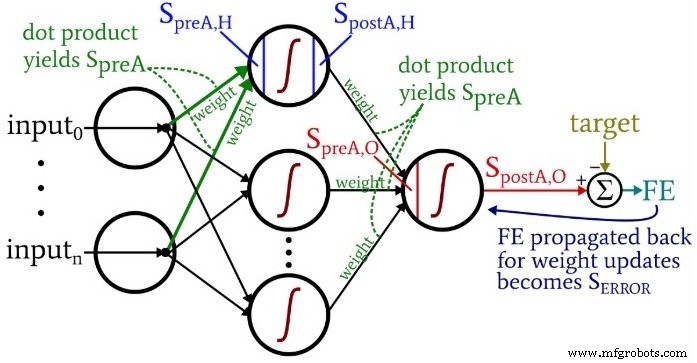

The feedforward phase computes the network output by propagating inputs through the hidden layer to the output node. The code below demonstrates the multi‑epoch loop, per‑sample calculations, hidden‑layer pre‑activation and activation, and final output computation.

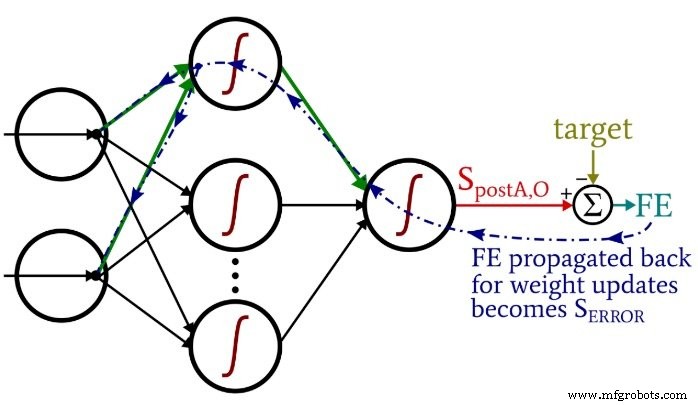

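Inside each epoch, we iterate over all samples. For each sample, we compute each hidden node's pre-activation as the dot product of the input values and that node's input-to-hidden weights, then apply the sigmoid to obtain its post-activation. The output node is handled the same way: its pre-activation is the dot product of the hidden post-activations and the hidden-to-output weights, and the sigmoid of that value is the network output. The error is the difference between the target and the output.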
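For a single sample, the feedforward calculation could be written along these lines. This is a sketch for a network with one hidden layer and one output node; the function name feedforward, the variable names, and the sign convention for the error (output minus target) are assumptions, not details confirmed by the original script.

```python
import numpy as np

def logistic(x):
    """Logistic sigmoid activation."""
    return 1.0 / (1.0 + np.exp(-x))

def feedforward(sample, weights_ItoH, weights_HtoO):
    """Propagate one input sample through the hidden layer to the output node."""
    H_dim = weights_ItoH.shape[1]
    preActivation_H = np.zeros(H_dim)
    postActivation_H = np.zeros(H_dim)
    for node in range(H_dim):
        # Column `node` holds the weights feeding this hidden node
        preActivation_H[node] = np.dot(sample, weights_ItoH[:, node])
        postActivation_H[node] = logistic(preActivation_H[node])
    preActivation_O = np.dot(postActivation_H, weights_HtoO)
    postActivation_O = logistic(preActivation_O)
    return preActivation_H, postActivation_H, preActivation_O, postActivation_O

# With all-zero weights every pre-activation is 0, so every sigmoid yields 0.5
sample = np.array([1.0, 0.5, -0.5])
weights_ItoH = np.zeros((3, 4))
weights_HtoO = np.zeros(4)
_, hidden, _, output = feedforward(sample, weights_ItoH, weights_HtoO)

target = 1.0
error = output - target  # assumed convention: output minus target
```

The pre-activation values are returned as well as the post-activation values because the sigmoid derivative used during backpropagation is evaluated at the pre-activation.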

Backpropagation

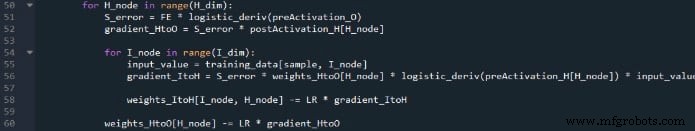

After feedforward, backpropagation adjusts weights in reverse order, propagating error signals from the output back through the hidden layer. The accompanying image illustrates the nested loops and weight updates.

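We first compute the output error term S_ERROR and, from it, the gradient for each hidden-to-output weight. Then, for each hidden node, we calculate the input-to-hidden weight updates using the current (not yet updated) value of that node's hidden-to-output weight, and only afterward apply the hidden-to-output update. This order keeps the gradient calculations correct, because every gradient is based on the weights that produced the output during feedforward.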
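One way to sketch this update for a single sample is shown below. It assumes a squared-error loss, an error defined as output minus target, and plain gradient descent; the function name train_on_sample, the variable names other than S_ERROR, and the learning rate of 0.5 are illustrative assumptions rather than the article's actual code.

```python
import numpy as np

def logistic(x):
    return 1.0 / (1.0 + np.exp(-x))

def logistic_deriv(x):
    s = logistic(x)
    return s * (1.0 - s)

def train_on_sample(sample, target, weights_ItoH, weights_HtoO, LR=0.5):
    """Run feedforward, then update both weight arrays in place.

    Returns the squared error measured before the update."""
    I_dim, H_dim = weights_ItoH.shape

    # Feedforward
    preActivation_H = sample @ weights_ItoH
    postActivation_H = logistic(preActivation_H)
    preActivation_O = np.dot(postActivation_H, weights_HtoO)
    postActivation_O = logistic(preActivation_O)

    # Error signal at the output node
    error = postActivation_O - target  # assumed convention: output minus target
    S_ERROR = error * logistic_deriv(preActivation_O)

    for H_node in range(H_dim):
        gradient_HtoO = S_ERROR * postActivation_H[H_node]
        for I_node in range(I_dim):
            # Uses the hidden-to-output weight before it is modified
            gradient_ItoH = (S_ERROR * weights_HtoO[H_node]
                             * logistic_deriv(preActivation_H[H_node])
                             * sample[I_node])
            weights_ItoH[I_node, H_node] -= LR * gradient_ItoH
        # Applied last, after its old value was used in the inner loop
        weights_HtoO[H_node] -= LR * gradient_HtoO

    return error ** 2

# Repeatedly training on one made-up sample should drive its error down
rng = np.random.default_rng(0)
weights_ItoH = rng.uniform(-1, 1, (3, 4))
weights_HtoO = rng.uniform(-1, 1, 4)
sample, target = np.array([0.2, -0.4, 0.9]), 1.0
errors = [train_on_sample(sample, target, weights_ItoH, weights_HtoO)
          for _ in range(50)]
```

Comparing the first and last entries of errors gives a quick sanity check that the updates move the output toward the target.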

Conclusion

This concise script encapsulates the core principles of an MLP: data ingestion, forward propagation, error computation, and weight adjustment via backpropagation. I hope it serves as a clear bridge between neural‑network theory and practical implementation.

