A Beginner’s Guide to Perceptron Neural Networks: Classifying Data with a Simple Example

Understanding the Perceptron: A Practical Example of Data Classification

This article is part of a series that demystifies Perceptron neural networks. Whether you’re starting fresh or want to revisit a specific topic, the related posts below provide a comprehensive roadmap.

- How to Perform Classification Using a Neural Network: What Is the Perceptron?

- How to Use a Simple Perceptron Neural Network Example to Classify Data

- How to Train a Basic Perceptron Neural Network

- Understanding Simple Neural Network Training

- An Introduction to Training Theory for Neural Networks

- Understanding Learning Rate in Neural Networks

- Advanced Machine Learning with the Multilayer Perceptron

- The Sigmoid Activation Function: Activation in Multilayer Perceptron Neural Networks

- How to Train a Multilayer Perceptron Neural Network

- Understanding Training Formulas and Backpropagation for Multilayer Perceptrons

- Neural Network Architecture for a Python Implementation

- How to Create a Multilayer Perceptron Neural Network in Python

- Signal Processing Using Neural Networks: Validation in Neural Network Design

- Training Datasets for Neural Networks: How to Train and Validate a Python Neural Network

What Is a Single‑Layer Perceptron?

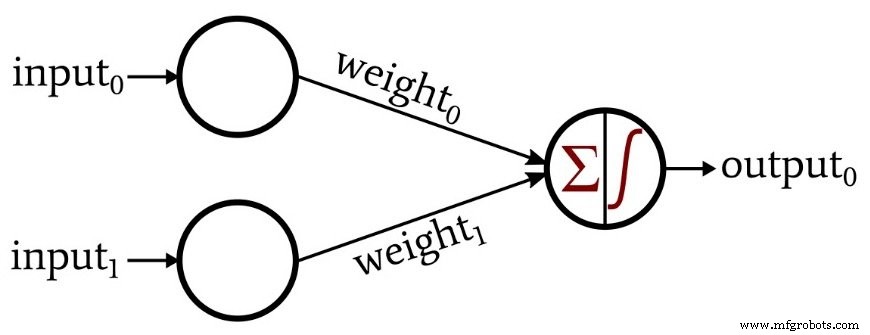

A perceptron is the most elementary type of neural network. It consists of an input layer that distributes data to a single computational layer, whose neurons perform weighted summations followed by an activation function. The connections between the input nodes and the computational layer are assigned scalar weights, which determine the influence of each input feature on the final output.

Although the network contains two physical layers (input and output), it contains only one computational layer, which is why it is called a single‑layer perceptron.
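
As a compact restatement of the description above, the computation of one output neuron can be written as follows. The symbols s (the weighted sum), wᵢ (the weight on the i-th input), and n (the number of inputs) are notation assumed here for illustration, and f stands for whichever activation function is chosen:

\[s=\sum_{i=0}^{n-1} w_i\,\text{input}_i,\qquad \text{output}=f(s)\]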

Classifying with a Perceptron

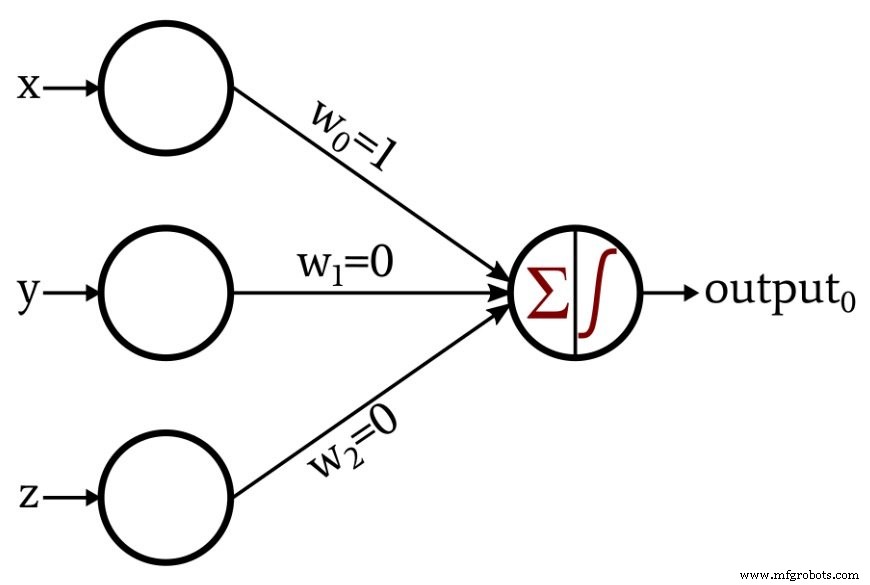

Let’s examine a concrete example. We’ll construct a perceptron that receives a three‑dimensional vector ⟨x, y, z⟩ and classifies each point by the sign of its x coordinate:

- Negative x (x < 0): classified as invalid (output 0)

- Zero or positive x (x ≥ 0): classified as valid (output 1)

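The network’s input vector maps directly to the three coordinates: input₀ = x, input₁ = y, and input₂ = z. Because the problem is linear, we can assign the weights by hand: a positive weight on input₀ (for example, w₀ = 1) and zero weights on the other two inputs (w₁ = 0 and w₂ = 0).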

We then set the output neuron’s activation to a unit step function of the weighted sum, written here as s to avoid confusion with the x coordinate:

\[f(s)=\begin{cases}0 & s < 0\\1 & s \geq 0\end{cases}\]

With these settings, the y and z components are ignored (their weights are 0), and the classification depends solely on the x coordinate. If x is negative, the weighted sum is negative and the step function outputs 0; otherwise it outputs 1.
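
The hand-designed classifier can be sketched in a few lines of Python. This is only an illustration: the names `step`, `classify`, and `WEIGHTS` are hypothetical, and the x weight of 1.0 is an assumed value (any positive number gives the same classification).

```python
def step(s):
    """Unit step activation: 0 for a negative sum, 1 otherwise."""
    return 1 if s >= 0 else 0


# Assumed weights: a positive weight on x, zero weights on y and z.
WEIGHTS = [1.0, 0.0, 0.0]


def classify(point, weights=WEIGHTS):
    """Weighted sum of (x, y, z) followed by the step activation."""
    s = sum(w * v for w, v in zip(weights, point))
    return step(s)


print(classify((2.0, -5.0, 3.0)))   # positive x -> 1 (valid)
print(classify((-0.5, 4.0, 1.0)))   # negative x -> 0 (invalid)
print(classify((0.0, -1.0, -1.0)))  # x equal to zero -> 1 (valid)
```

Changing y or z in any of these calls leaves the result unchanged, because their weights are zero.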

From Manual Design to Automated Training

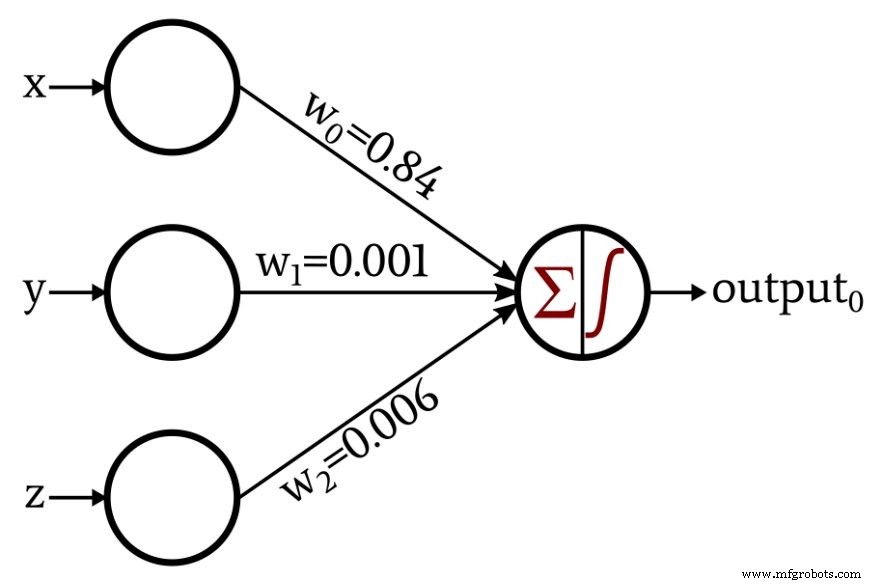

The example above shows how a human can design a perceptron for a trivial problem. In many real‑world scenarios—handwriting recognition, speech processing, medical diagnosis—defining a precise mathematical relationship between inputs and desired outputs is impractical. Instead, we rely on a data‑driven approach: we provide the network with many input–output pairs and let it learn the underlying pattern.

Training is the process that adjusts the weights so the perceptron produces correct outputs on unseen data. It iteratively compares the network’s predictions with the known labels and nudges the weights in the direction that reduces the error.
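
To make the idea of nudging the weights concrete, here is a minimal sketch that assumes the classic perceptron learning rule; it is not necessarily the routine used for this series. The helper names (`make_points`, `train`), the learning rate, the random seed, and the choice to skip points within 0.1 of the boundary (so that the run is sure to finish quickly) are all assumptions made for illustration.

```python
import random


def step(s):
    """Unit step activation: 0 for a negative sum, 1 otherwise."""
    return 1 if s >= 0 else 0


def predict(weights, point):
    """Weighted sum of the inputs followed by the step activation."""
    return step(sum(w * v for w, v in zip(weights, point)))


def make_points(n, margin=0.1, seed=0):
    """Hypothetical synthetic data: random (x, y, z) labeled by the sign of x."""
    rng = random.Random(seed)
    data = []
    while len(data) < n:
        p = [rng.uniform(-1.0, 1.0) for _ in range(3)]
        if abs(p[0]) >= margin:  # assumption: skip points very close to x = 0
            data.append((p, 1 if p[0] >= 0 else 0))
    return data


def train(data, learning_rate=0.1, max_epochs=1000):
    """Perceptron rule: after each mistake, move the weights by error * input."""
    weights = [0.0, 0.0, 0.0]
    for _ in range(max_epochs):
        mistakes = 0
        for point, target in data:
            error = target - predict(weights, point)  # -1, 0, or +1
            if error != 0:
                mistakes += 1
                weights = [w + learning_rate * error * v
                           for w, v in zip(weights, point)]
        if mistakes == 0:  # a full pass with no errors: stop early
            break
    return weights


data = make_points(1000)
weights = train(data)
accuracy = sum(predict(weights, p) == t for p, t in data) / len(data)
print("weights:", weights)
print("training accuracy:", accuracy)
```

In a run like this the learned y and z weights are typically small rather than exactly zero. What matters is that the x weight is positive and large enough for the sign of the weighted sum to follow the sign of x on the training points.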

After training on 1,000 synthetic points for our valid/invalid classifier, the perceptron arrived on its own at a weight vector that matches the manually derived solution.

What’s Next?

In the next article we’ll walk through a concise Python implementation of a single‑layer perceptron and detail the training routine that produced the trained weights described above. Stay tuned to learn how to code and train your own perceptron from scratch.
