Microcontrollers Power the Next Wave of Edge AI

Until recently, machine learning, and deep learning even more so, was considered the preserve of powerful servers, with training and inference handled by gateways, edge servers, or data centers. That view made sense while the trend toward distributing computation between the cloud and the edge was still in its infancy. Since then, breakthroughs from industry and academia have changed the picture.

Consequently, high‑performance processors delivering trillions of operations per second (TOPS) are no longer a prerequisite for ML workloads. Modern microcontrollers, many equipped with dedicated ML accelerators, now bring intelligent inference directly to edge devices.

These controllers execute ML tasks efficiently, at minimal cost and power, connecting to the cloud only when needed. In essence, microcontrollers with embedded ML accelerators mark the next leap in endowing sensors—microphones, cameras, environmental monitors—with on‑device intelligence, the cornerstone of the IoT value chain.

How deep is the edge?

The edge is commonly defined as the farthest point from the cloud in an IoT network, often represented by an advanced gateway or edge server. In reality, the edge terminates at the sensors themselves, those positioned close to the end user. It is therefore logical to embed as much analytics as possible directly within those sensors, a role for which microcontrollers are perfectly suited.

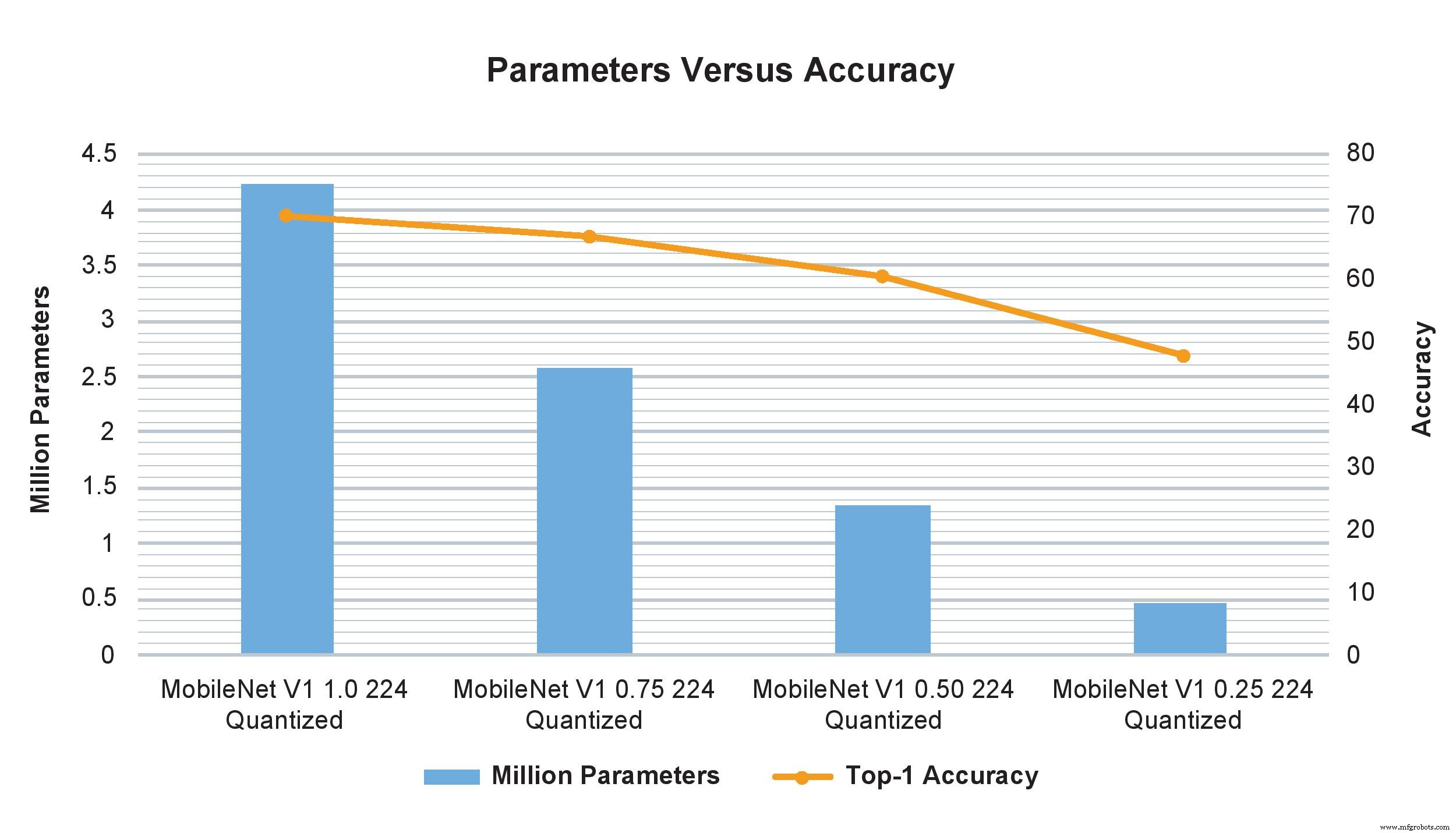

MobileNet V1 models with different width multipliers vary drastically in parameter count, computation, and accuracy. However, reducing the width multiplier from 1.0 to 0.75 only slightly lowers top-1 accuracy while substantially cutting the number of parameters and computations (Image: NXP)
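The trade-off in the caption follows from the cost model in the MobileNet V1 paper (Howard et al., 2017): a width multiplier α thins the input and output channels of every layer, so the depthwise term scales by α and the dominant pointwise term by roughly α². The sketch below applies that formula to one hypothetical 3×3 layer with 512 input and output channels on a 14×14 feature map; it illustrates the scaling only and does not reproduce the numbers in the NXP figure.

```python
"""Sketch: how MobileNet's width multiplier (alpha) scales one
depthwise-separable layer. Uses the cost formula from the MobileNet V1
paper; biases and batch-norm parameters are ignored."""
from __future__ import annotations


def separable_layer_cost(k: int, m: int, n: int, f: int, alpha: float) -> tuple[int, int]:
    """Return (parameters, multiply-accumulates) for one layer.

    k: kernel size (D_K), m: input channels (M), n: output channels (N),
    f: spatial size of the output feature map (D_F), alpha: width multiplier.
    """
    m_a = int(round(alpha * m))  # thinned input channels
    n_a = int(round(alpha * n))  # thinned output channels
    depthwise_params = k * k * m_a  # one k x k filter per input channel
    pointwise_params = m_a * n_a  # 1 x 1 convolution mixing channels
    # Each weight is applied once per output position (f * f of them).
    depthwise_macs = depthwise_params * f * f
    pointwise_macs = pointwise_params * f * f
    return depthwise_params + pointwise_params, depthwise_macs + pointwise_macs


if __name__ == "__main__":
    # Hypothetical layer: 3x3 kernel, 512 -> 512 channels, 14x14 feature map.
    base_p, base_c = separable_layer_cost(3, 512, 512, 14, 1.0)
    for alpha in (1.0, 0.75, 0.5, 0.25):
        p, c = separable_layer_cost(3, 512, 512, 14, alpha)
        print(f"alpha={alpha:4}: params x{p / base_p:.3f}, MACs x{c / base_c:.3f}")
```

At α = 0.75 this layer keeps about 57% of its parameters and MACs, close to the α² = 0.5625 that the pointwise term alone would give.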

Single‑board computers can indeed perform edge processing, delivering impressive performance and, when clustered, approaching small‑scale supercomputers. Yet they remain too bulky and expensive for the thousands of units demanded by large‑scale deployments. Moreover, they require external DC power—often unavailable in constrained environments—whereas an MCU draws only milliwatts and can operate on coin‑cell batteries or a few solar cells.
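To put "milliwatts" and "coin-cell batteries" into numbers, the sketch below estimates battery life for a duty-cycled MCU that wakes briefly to run inference and sleeps otherwise. The capacity (about 220 mAh, in the range commonly quoted for a CR2032 coin cell), the 5 mA active current, the 5 µA sleep current, and the 1% duty cycle are all illustrative assumptions, not figures from NXP.

```python
"""Back-of-envelope battery life for a duty-cycled MCU.

All figures are illustrative assumptions, not datasheet values."""


def battery_life_hours(capacity_mah: float, active_ma: float, sleep_ma: float,
                       duty_cycle: float) -> float:
    """Weight active and sleep currents by duty cycle, then divide the
    capacity by that average draw. Ignores self-discharge, temperature,
    and the capacity loss coin cells show under pulsed loads."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    avg_ma = duty_cycle * active_ma + (1.0 - duty_cycle) * sleep_ma
    if avg_ma <= 0:
        raise ValueError("average current must be positive")
    return capacity_mah / avg_ma


if __name__ == "__main__":
    # Assumed: ~220 mAh coin cell, 5 mA while running inference,
    # 5 uA (0.005 mA) asleep, awake 1% of the time.
    hours = battery_life_hours(220.0, 5.0, 0.005, 0.01)
    print(f"{hours:.0f} h (~{hours / 24:.0f} days)")
```

The sleep current matters as much as the active current here: at a 1% duty cycle, the 99% of time spent asleep contributes almost a tenth of the average draw.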

Not surprisingly, the field of microcontroller-based edge ML, known as TinyML, has exploded in popularity. TinyML aims to enable inference, and eventually training, on small, low-power, resource-constrained devices, particularly microcontrollers, rather than relying on larger platforms or the cloud. This requires compact neural-network models that fit within tight processing, storage, and bandwidth budgets while preserving performance.
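The storage side of that budget is easy to estimate: weight storage is roughly the parameter count times the bytes per weight, so moving from 32-bit floats to 8-bit integers cuts it about fourfold. The 470,000-parameter model and the 1 MiB flash budget below are hypothetical.

```python
"""Rough check of whether a model's weights fit a flash budget.

Counts weight storage only; a real .tflite file also holds graph
metadata, and the runtime needs separate RAM for activations."""

BYTES_PER_WEIGHT = {"float32": 4, "float16": 2, "int8": 1}


def weight_bytes(num_params: int, dtype: str) -> int:
    """Bytes needed to store num_params weights of the given type."""
    return num_params * BYTES_PER_WEIGHT[dtype]


def fits(num_params: int, dtype: str, flash_budget_bytes: int) -> bool:
    """True if the weights alone fit in the flash budget."""
    return weight_bytes(num_params, dtype) <= flash_budget_bytes


if __name__ == "__main__":
    params = 470_000           # hypothetical small vision model
    budget = 1 * 1024 * 1024   # assumed 1 MiB of flash left for the model
    for dtype in ("float32", "int8"):
        kib = weight_bytes(params, dtype) / 1024
        print(f"{dtype:8}: {kib:7.1f} KiB, fits={fits(params, dtype, budget)}")
```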

Resource-optimized models let a device capture just the sensor data its task requires while balancing accuracy against resource demands. Some data may still be transmitted, directly to the cloud or via an edge gateway, but the volume is far lower because preliminary analysis has already run on the device.
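A quick calculation shows the scale of that reduction. The sketch compares streaming raw frames with sending only compact inference results; the frame size, frame rate, 16-byte result record, and 1% event rate are all illustrative assumptions.

```python
"""Compare uplink volume: streaming raw frames vs. sending only
on-device inference results. Every number is an illustrative assumption."""


def daily_bytes(payload_bytes: int, messages_per_second: float) -> float:
    """Bytes per day for a fixed-size payload sent at a steady rate."""
    return payload_bytes * messages_per_second * 86_400


if __name__ == "__main__":
    # Assumed: 320x240 8-bit grayscale frames streamed at 1 frame/s...
    raw = daily_bytes(320 * 240, 1.0)
    # ...versus a 16-byte detection record (class, score, box) sent only
    # for an assumed 1 in 100 frames containing an object of interest.
    events = daily_bytes(16, 0.01)
    print(f"raw: {raw / 1e6:.1f} MB/day, events: {events / 1e3:.1f} kB/day")
    print(f"reduction: {raw / events:,.0f}x")
```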

A common TinyML use case is a camera-based object-detection system that can capture high-resolution images but has limited storage, forcing it to reduce resolution. With on-device analytics, the camera captures only the objects of interest, so the selected frames can be kept at higher resolution. This level of functionality, once reserved for powerful hardware, is now achievable on microcontrollers thanks to TinyML.
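A minimal sketch of the gating logic, assuming a hypothetical on-device detector that returns labelled bounding boxes with confidence scores in full-resolution pixel coordinates. It goes one step beyond keeping whole frames: only confident detections of the wanted classes are kept, clipped to the frame, so just those regions need to be stored at full resolution. The class names, threshold, and boxes are illustrative.

```python
"""Sketch of detection-gated capture: keep full-resolution regions only
for objects of interest. The detector is hypothetical; its outputs are
given directly here."""
from dataclasses import dataclass


@dataclass
class Detection:
    label: str
    score: float  # confidence in [0, 1]
    x: int        # top-left corner, full-resolution pixels
    y: int
    w: int
    h: int


def regions_to_keep(dets, frame_w, frame_h, labels, threshold=0.5):
    """Return (x, y, w, h) boxes, clipped to the frame, for detections
    whose label is in `labels` and whose score meets `threshold`."""
    keep = []
    for d in dets:
        if d.label not in labels or d.score < threshold:
            continue
        x0, y0 = max(d.x, 0), max(d.y, 0)
        x1, y1 = min(d.x + d.w, frame_w), min(d.y + d.h, frame_h)
        if x1 > x0 and y1 > y0:  # skip boxes entirely outside the frame
            keep.append((x0, y0, x1 - x0, y1 - y0))
    return keep


if __name__ == "__main__":
    W, H = 1920, 1080  # assumed full sensor resolution
    dets = [Detection("person", 0.91, 1800, 400, 200, 500),
            Detection("car", 0.88, 100, 100, 300, 200),
            Detection("person", 0.30, 50, 50, 80, 160)]
    boxes = regions_to_keep(dets, W, H, {"person"})
    stored = sum(w * h for _, _, w, h in boxes)
    print(boxes, f"{stored / (W * H):.1%} of a full frame")
```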

Small but mighty

Although TinyML is a young field, it already delivers impressive inference performance, and increasingly training performance, on modest microcontrollers, often with little loss of accuracy. Recent successes include voice and face recognition, natural-language processing of voice commands, and running several sophisticated vision algorithms simultaneously.
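Part of why accuracy often holds up on a microcontroller is that 8-bit quantization introduces bounded rounding error. TensorFlow Lite's 8-bit quantization specification represents values as real_value = (int8_value − zero_point) × scale. The sketch below uses an asymmetric, per-tensor version of that mapping on made-up values; the spec itself quantizes weights symmetrically (zero point 0) and per channel, so treat this as a simplification.

```python
"""Sketch of 8-bit affine quantization: real = scale * (q - zero_point).
Asymmetric and per-tensor for simplicity; the values are made up."""
from __future__ import annotations


def quant_params(lo: float, hi: float) -> tuple[float, int]:
    """Choose scale and zero point so [lo, hi] maps onto [-128, 127]."""
    lo, hi = min(lo, 0.0), max(hi, 0.0)  # include 0.0 so it maps exactly
    scale = (hi - lo) / 255.0 or 1.0     # avoid a zero scale for all-zero data
    zero_point = round(-128 - lo / scale)
    return scale, max(-128, min(127, zero_point))


def quantize(x: float, scale: float, zp: int) -> int:
    """Round to the nearest step and clamp to the int8 range."""
    return max(-128, min(127, round(x / scale) + zp))


def dequantize(q: int, scale: float, zp: int) -> float:
    return scale * (q - zp)


if __name__ == "__main__":
    weights = [-0.82, -0.10, 0.0, 0.37, 1.25]
    scale, zp = quant_params(min(weights), max(weights))
    worst = max(abs(w - dequantize(quantize(w, scale, zp), scale, zp)) for w in weights)
    print(f"scale={scale:.5f} zp={zp} worst error={worst:.5f} (bound: {scale / 2:.5f})")
```

Within the calibrated range, each value's error is at most half a quantization step (scale / 2); how much that affects end-to-end accuracy depends on the model.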

In practical terms, a microcontroller priced under $2, with a 500-MHz Arm Cortex-M7 core and 28 KB to 128 KB of memory, can deliver the performance needed to make sensors truly intelligent.
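A common first check of whether a model fits in RAM of that size is its peak activation footprint: in a strictly sequential network, each layer's input and output buffers must coexist while the layer runs. The sketch below applies that rule to hypothetical int8 layer shapes and compares the result with 128 KB, the upper end of the memory range quoted above. It ignores scratch buffers, skip connections, and runtime overhead, so the result is a lower bound.

```python
"""Rough peak-RAM estimate for a purely sequential int8 network.
Layer shapes are hypothetical; scratch buffers and runtime overhead
are ignored, so treat the result as a lower bound."""
from math import prod


def peak_activation_bytes(shapes, bytes_per_elem=1):
    """shapes: activation tensor shapes from model input to output
    (at least two). While a layer runs, its input and output are live."""
    sizes = [prod(s) * bytes_per_elem for s in shapes]
    return max(a + b for a, b in zip(sizes, sizes[1:]))


if __name__ == "__main__":
    # Hypothetical small audio-classification network, shapes as (H, W, C).
    shapes = [(49, 10, 1), (25, 5, 64), (25, 5, 64), (13, 3, 64), (12,)]
    peak = peak_activation_bytes(shapes)
    sram = 128 * 1024  # assumed 128 KB of SRAM
    print(f"peak ~{peak} bytes; fits in {sram} B SRAM: {peak <= sram}")
```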

Even at this price, such microcontrollers incorporate robust security features (AES-128) and support multiple external memory types, Ethernet, USB, SPI, and interfaces for a range of sensors, as well as Bluetooth, Wi-Fi, and SPDIF and I²S audio. A modest price increase typically buys a dual-core device pairing a 1-GHz Cortex-M7 with a 400-MHz Cortex-M4, plus 2 MB of RAM and graphics acceleration, all while drawing only a few milliamps from a 3.3 V supply.

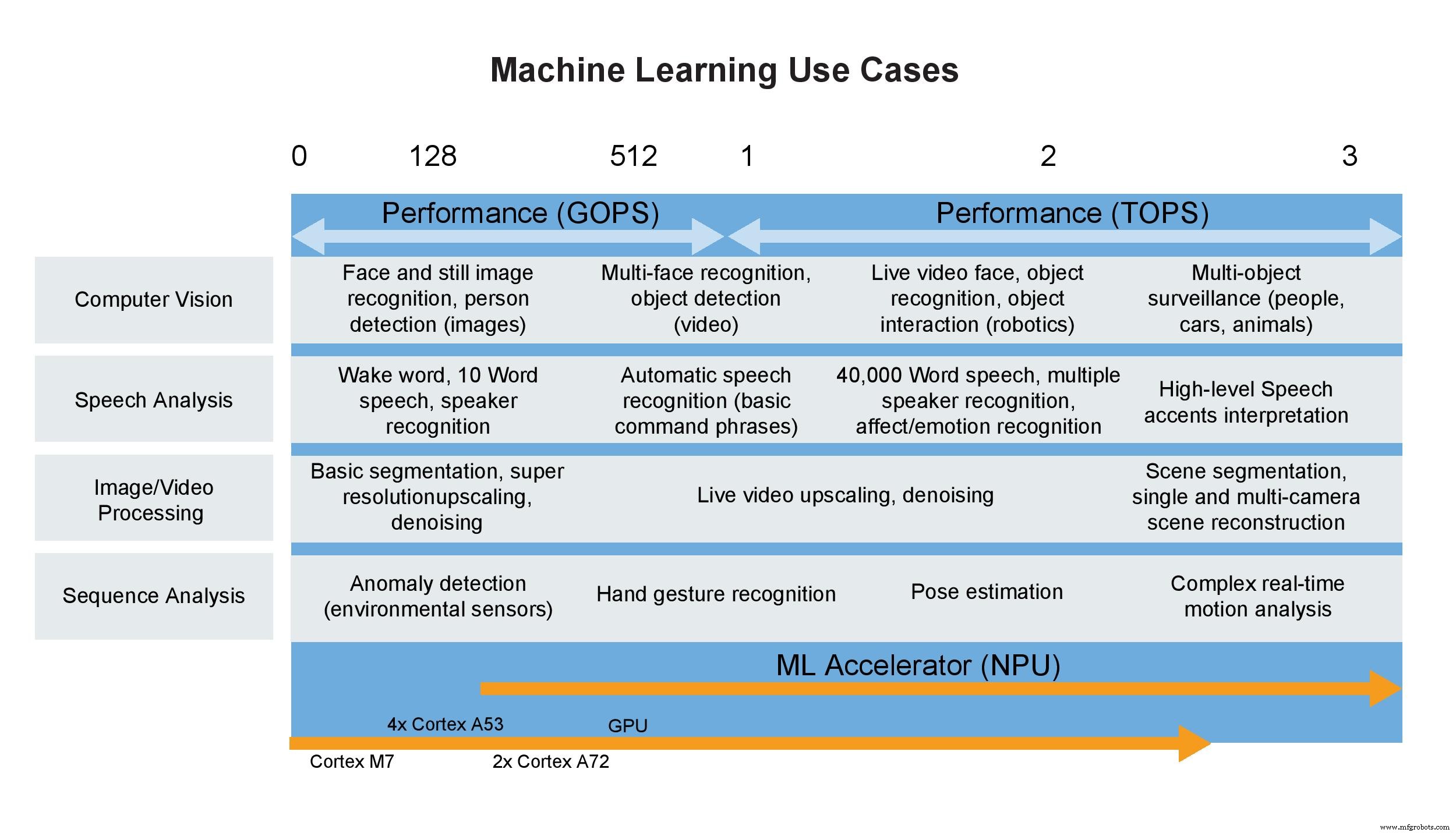

Machine learning use cases (Image: NXP)

Manufacturers often benchmark neural-network accelerators using a single metric: trillions of operations per second (TOPS). While attractive, this figure can be misleading. It reflects raw computational capacity under controlled lab conditions and ignores practical constraints such as memory bandwidth, CPU overhead, and data pre- and post-processing. In real deployments, achievable throughput may be only 50–60% of the advertised TOPS value.
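One way to account for that gap is to apply a utilization factor before converting a TOPS rating into latency. The sketch below counts one multiply-accumulate as two operations, a common convention, although vendors do not all count the same way. The 0.5 TOPS rating is hypothetical, and the model cost of about 569 million MACs is the figure reported for MobileNet V1 (width 1.0, 224×224 input).

```python
"""Turn a datasheet TOPS rating into a rough latency estimate,
applying a utilization factor for real-world overheads. The rating,
utilization values, and model size are assumptions."""


def latency_ms(macs_per_inference: float, rated_tops: float, utilization: float) -> float:
    ops = 2 * macs_per_inference  # one multiply-accumulate counted as 2 ops
    effective_ops_per_s = rated_tops * 1e12 * utilization
    return ops / effective_ops_per_s * 1e3


if __name__ == "__main__":
    macs = 569e6  # assumed model cost: ~569M MACs per inference
    for util in (1.0, 0.6, 0.5):
        print(f"utilization {util:.0%}: {latency_ms(macs, 0.5, util):.2f} ms")
```

Halving utilization doubles latency, which is why the headline TOPS figure alone says little about how fast a given model will actually run.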

Moreover, for microcontrollers, which typically offer only 1–3 TOPS, the metric is even less informative. The real strength of these small devices lies in their tightly integrated Arm Cortex cores, their support for both integer and floating-point arithmetic, and their ability to run compact inference engines such as TensorFlow Lite for Microcontrollers.

Conclusion

The push to run inference directly on or next to sensors, such as still and video cameras, is accelerating as the IoT ecosystem moves toward edge-centric processing. Rapid advances in microcontroller-level neural-network accelerators are bringing AI functionality onto the same chip as the application processor without a dramatic rise in power consumption or size. Models trained on powerful CPUs or GPUs can be slimmed down with TensorFlow Lite and deployed to microcontrollers, scaling to meet growing ML demands. Training on these devices may soon be feasible as well, positioning microcontrollers as formidable competitors to larger, costlier systems.
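In the TensorFlow Lite for Microcontrollers workflow, the converted .tflite model is typically compiled into the firmware as a byte array, and the documentation uses `xxd -i` for that step. The Python sketch below produces similar C++ source without xxd; the variable names and the `alignas(16)` qualifier are illustrative choices, not requirements taken from the TensorFlow documentation.

```python
"""Sketch: emit a .tflite flatbuffer as a C++ byte array, similar to
`xxd -i model.tflite`. Names and alignment are illustrative choices."""


def to_c_array(data: bytes, name: str = "g_model", per_line: int = 12) -> str:
    """Return C++ source declaring `name` (the bytes) and `name_len`."""
    if not data:
        raise ValueError("empty model")  # zero-length arrays are invalid C++
    lines = [f"alignas(16) const unsigned char {name}[] = {{"]
    for i in range(0, len(data), per_line):
        chunk = ", ".join(f"0x{b:02x}" for b in data[i:i + per_line])
        lines.append(f"  {chunk},")
    lines.append("};")
    lines.append(f"const unsigned int {name}_len = {len(data)};")
    return "\n".join(lines)


if __name__ == "__main__":
    # Stand-in bytes; in practice: data = open("model.tflite", "rb").read()
    print(to_c_array(bytes(range(20))))
```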

>> This article was originally published on our sister site, EE Times.
