Fuzz Testing: A Key Tool for Securing IoT Devices

Fuzz testing is a proven technique that engineers use to uncover hidden weaknesses in embedded systems, making it an essential part of any IoT security strategy.

As the number of connected devices grows, so does the attack surface. Attackers can exploit serial ports, radio interfaces, and even programming and debugging ports. Yet many embedded developers have historically overlooked device‑layer security, missing critical bugs that can compromise the entire product.

What Is Fuzz Testing?

Think of fuzz testing as a systematic, high‑volume version of the “million monkeys” experiment: the fuzzer injects a large variety of random byte strings, called fuzz vectors, into a target interface and monitors the system’s response. If a vector triggers abnormal behavior, such as a crash, reset, or memory corruption, a potential vulnerability is flagged for review.
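The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not a production fuzzer: `parse_packet` is a hypothetical target with a deliberately planted bug, and `random_vector` and `fuzz` are names invented for this example. A Python exception stands in for the crash or reset a real device would show.

```python
import random

def parse_packet(data: bytes) -> int:
    """Hypothetical target: a length-prefixed packet parser with a planted bug."""
    if len(data) < 2:
        raise ValueError("packet too short")  # graceful rejection, not a bug
    length = data[0]
    payload = data[1:]
    checksum = 0
    # Planted bug: the declared length is trusted without checking it
    # against the number of payload bytes actually received.
    for i in range(length):
        checksum ^= payload[i]  # IndexError when length > len(payload)
    return checksum

def random_vector(rng: random.Random, max_len: int = 16) -> bytes:
    """Build one fuzz vector: a random-length string of random bytes."""
    n = rng.randint(0, max_len)
    return bytes(rng.randrange(256) for _ in range(n))

def fuzz(target, iterations: int, seed: int = 0):
    """Feed random vectors to the target and record abnormal behavior."""
    rng = random.Random(seed)  # fixed seed keeps the run reproducible
    findings = []
    for _ in range(iterations):
        vector = random_vector(rng)
        try:
            target(vector)
        except ValueError:
            continue  # expected rejection of malformed input
        except Exception as exc:
            # Anything else is abnormal: keep the vector for review.
            findings.append((vector, type(exc).__name__))
    return findings

if __name__ == "__main__":
    findings = fuzz(parse_packet, iterations=1000)
    print(f"{len(findings)} vectors flagged for review")
```

Seeding the random generator is a deliberate choice here: any flagged vector can be regenerated later, which matters once triage begins.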

Numerous commercial and open‑source fuzzers exist. They generate thousands of vectors, submit them at a high rate, and record any deviation from expected behavior. Because the space of possible inputs is effectively unbounded, the goal is to maximize the *quality* and *quantity* of tests while keeping execution time practical.

Adapting Fuzzing to Embedded Environments

Many fuzzers were originally designed for desktop applications, so the simplest path is to compile the embedded firmware to run as a native PC binary. This yields a huge performance boost, but introduces two challenges:

- PC CPUs behave differently from microcontrollers, which can mask timing‑related bugs.

- Hardware‑specific code (e.g., peripheral drivers) must be stubbed or simulated.

Despite these trade‑offs, the benefits of rapid test cycles and richer instrumentation often outweigh the drawbacks. If native execution is infeasible, consider running a dedicated test image on hardware with additional RAM, flash, or an external debugging interface.

Detecting Bugs Beyond Crashes

Crashes are the easiest signals to detect, but many exploitable bugs, such as out‑of‑bounds writes or dangling pointer dereferences, do not manifest as a crash or reset. Modern compilers (GCC, Clang) provide memory sanitizers such as AddressSanitizer to flag illegal memory accesses, but their memory and runtime overhead makes them impractical on most embedded targets. Instead, target a subset of critical code paths, run the fuzzers on a resource‑rich build, or use a PC target.

Code coverage metrics help gauge test effectiveness. Coverage libraries inject “bread‑crumb” calls into each function; when a crumb executes, a counter increments. However, coverage numbers can be misleading for embedded systems because large portions of firmware, such as device‑driver loops that never interact with the fuzzed interface, cannot be reached by any fuzz vector. In practice, often only about 20%–30% of the code is reachable via fuzz vectors, so a low overall percentage does not by itself mean the fuzzed interface is poorly tested.
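The bread‑crumb idea can be illustrated with a small Python sketch. It assumes function‑level counting only and uses a decorator in place of compiler instrumentation; `breadcrumb`, `handle_packet`, `handle_config`, and `sensor_poll_loop` are hypothetical names made up for the example.

```python
from collections import Counter
from functools import wraps

hits = Counter()   # crumb counters, keyed by function name
registered = []    # every instrumented function, hit or not

def breadcrumb(func):
    """Instrument a function so each call increments its counter."""
    registered.append(func.__name__)
    @wraps(func)
    def wrapper(*args, **kwargs):
        hits[func.__name__] += 1
        return func(*args, **kwargs)
    return wrapper

@breadcrumb
def handle_packet(data: bytes) -> str:
    # Hypothetical entry point of the fuzzed interface.
    if data[:1] == b"\x01":
        return handle_config(data[1:])
    return "ignored"

@breadcrumb
def handle_config(body: bytes) -> str:
    return f"config:{len(body)}"

@breadcrumb
def sensor_poll_loop() -> None:
    """Driver code that the fuzzed interface never reaches."""

def function_coverage() -> float:
    """Fraction of instrumented functions executed at least once."""
    covered = sum(1 for name in registered if hits[name] > 0)
    return covered / len(registered)

# Replay three vectors through the interface and inspect the counters.
for vector in (b"\x00abc", b"\x01xy", b""):
    handle_packet(vector)
```

In this toy, `sensor_poll_loop` can never be hit through `handle_packet`, so function coverage tops out at two of three functions no matter how many vectors are sent. That is the effect that makes raw coverage percentages hard to interpret on firmware.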

Reporting and Triage

When a fuzz vector produces undesired behavior, detailed diagnostics are crucial: the exact fault location, stack snapshot (if available), and bug type. These details accelerate triage and enable engineers to prioritize fixes. Embedded devices often reset silently, erasing state, so reproducible vectors and debugging sessions are mandatory to pinpoint the root cause.

Because a single bug can generate thousands of seemingly unique crash vectors, it is essential to group them by underlying fault. A consistent crash address or pattern usually indicates the same vulnerability.
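Grouping by fault can be sketched as a simple bucketing step. The example below assumes each crash report already carries a fault type and a fault address; the `CrashReport` fields, the addresses, and the function names are all hypothetical, and real triage tooling typically uses richer signatures such as stack hashes.

```python
from collections import defaultdict
from typing import NamedTuple

class CrashReport(NamedTuple):
    vector: bytes        # the input that triggered the fault
    fault_type: str      # e.g. "HardFault" or "WatchdogReset" (illustrative)
    fault_address: int   # program counter at the time of the fault

def group_by_fault(reports):
    """Bucket crash vectors by (fault type, fault address)."""
    buckets = defaultdict(list)
    for report in reports:
        signature = (report.fault_type, report.fault_address)
        buckets[signature].append(report.vector)
    return dict(buckets)

def representatives(buckets):
    """Pick the shortest vector in each bucket as the reproducer."""
    return {sig: min(vectors, key=len) for sig, vectors in buckets.items()}

# Made-up reports: three vectors, two underlying faults.
reports = [
    CrashReport(b"\xff\x01\x02\x03", "HardFault", 0x0800_1234),
    CrashReport(b"\xff\x09", "HardFault", 0x0800_1234),
    CrashReport(b"\x10" * 8, "WatchdogReset", 0x0800_2000),
]
buckets = group_by_fault(reports)
reproducers = representatives(buckets)
```

Keeping the shortest vector per bucket is one reasonable convention: shorter inputs are usually easier to step through in a debugging session.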

Continuous Fuzzing in the Release Life‑Cycle

Given its stochastic nature, fuzz testing benefits from extended run times. An efficient workflow starts fuzzing on a dedicated branch after the main release. New bugs are fixed in this branch, allowing the fuzzing loop to continue unhindered. At each release, previously discovered issues are reassessed and, if critical, their fixes are merged into the next version. Finally, the validated vectors are incorporated into the standard QA pipeline to guard against regression.

Fuzz tests should cover all relevant device states: device type, network connectivity, firmware version, and any other configuration that could affect the interface logic. While exhaustive coverage is unrealistic, systematically varying one dimension at a time yields meaningful insights.
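Varying one dimension at a time can be written down as a small configuration generator. This is only a sketch: the dimension names and values below are illustrative placeholders, not settings from any particular product.

```python
def one_factor_at_a_time(baseline: dict, alternatives: dict) -> list:
    """Return the baseline plus one config per alternative value,
    changing a single dimension while the others stay at baseline."""
    configs = [dict(baseline)]
    for dimension, values in alternatives.items():
        for value in values:
            if value != baseline[dimension]:
                configs.append({**baseline, dimension: value})
    return configs

# Illustrative device states; every name and value here is hypothetical.
baseline = {"device_type": "sensor", "network": "joined", "firmware": "2.0"}
alternatives = {
    "device_type": ["sensor", "gateway"],
    "network": ["joined", "unjoined", "joining"],
    "firmware": ["1.9", "2.0"],
}

configs = one_factor_at_a_time(baseline, alternatives)
for config in configs:
    print(config)  # each entry is one device state to run a fuzz campaign in
```

With these inputs the generator yields five configurations (the baseline plus four single‑dimension variations) instead of the twelve a full cross product would require, which is the trade the paragraph above describes.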

Fuzzing Architectures

There are two dominant fuzzing paradigms:

- Directed fuzzing—engineers predefine input patterns based on protocol specifications.

- Coverage‑guided fuzzing—the fuzzer mutates seeds and steers new vectors toward uncovered code paths.
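The coverage‑guided loop can be sketched as follows. This is a toy model under stated assumptions: real tools obtain coverage from compiler instrumentation, whereas here the hypothetical `target` simply returns the set of branch IDs it executed. The `mutate` and `coverage_guided_fuzz` helpers are likewise invented for illustration.

```python
import random

def target(data: bytes) -> set:
    """Hypothetical instrumented target: returns IDs of branches executed."""
    covered = {"entry"}
    if len(data) > 0 and data[0] == ord("F"):
        covered.add("magic0")
        if len(data) > 1 and data[1] == ord("U"):
            covered.add("magic1")
            if len(data) > 2 and data[2] == ord("Z"):
                covered.add("magic2")
    return covered

def mutate(rng: random.Random, data: bytes) -> bytes:
    """Apply one random edit: insert, overwrite, or delete a byte."""
    buf = bytearray(data)
    choice = rng.randrange(3)
    if choice == 0 or not buf:
        buf.insert(rng.randint(0, len(buf)), rng.randrange(256))
    elif choice == 1:
        buf[rng.randrange(len(buf))] = rng.randrange(256)
    else:
        del buf[rng.randrange(len(buf))]
    return bytes(buf)

def coverage_guided_fuzz(seeds, iterations: int, seed: int = 0):
    """Mutate corpus entries; keep any mutant that reaches new branches.
    `seeds` must contain at least one input."""
    rng = random.Random(seed)
    corpus = list(seeds)
    seen = set()
    for entry in corpus:
        seen |= target(entry)
    for _ in range(iterations):
        candidate = mutate(rng, rng.choice(corpus))
        new_branches = target(candidate) - seen
        if new_branches:
            seen |= new_branches
            corpus.append(candidate)  # becomes a seed for later mutations
    return corpus, seen

if __name__ == "__main__":
    corpus, seen = coverage_guided_fuzz([b"\x00"], iterations=20000)
    print(sorted(seen), corpus)
```

The key design point is the feedback step: an input that reaches a new branch is kept and mutated further, so nested checks can be passed one byte at a time rather than all at once by chance.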

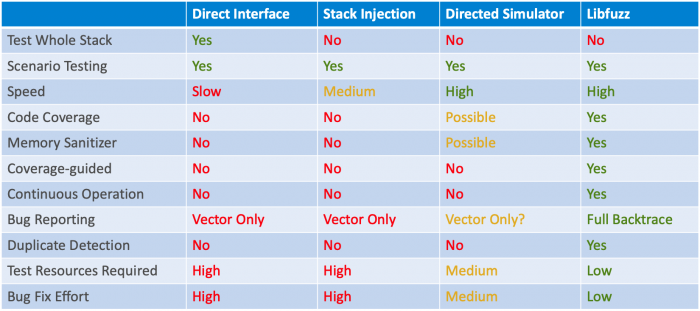

When embedded code cannot run on a PC, a lightweight simulator may be built, but this adds complexity. The four practical approaches are summarized below:

- Direct interface testing on production hardware (real device, live packets)

- Packet‑stack injection (calling packet handlers directly in a host environment)

- Directed fuzzing with a PC simulator (pre‑built model of the firmware)

- Coverage‑guided fuzzing with a simulator (e.g., libFuzzer on a host build)

Leveraging Multiple Testers

After securing the debug interface with a debug lock and secure boot, expose the device’s public interfaces to a variety of fuzzing tools. Coverage‑guided fuzzers are ideal for ongoing testing, whereas directed fuzzers can quickly validate specific protocol edge cases on actual hardware. Combining multiple testbeds maximizes code coverage and, consequently, security resilience.

>> This article was originally published on our sister site, EDN.
