Edge AI Chip Eliminates Traditional MAC Arrays, Achieves 55 TOPS/W Efficiency

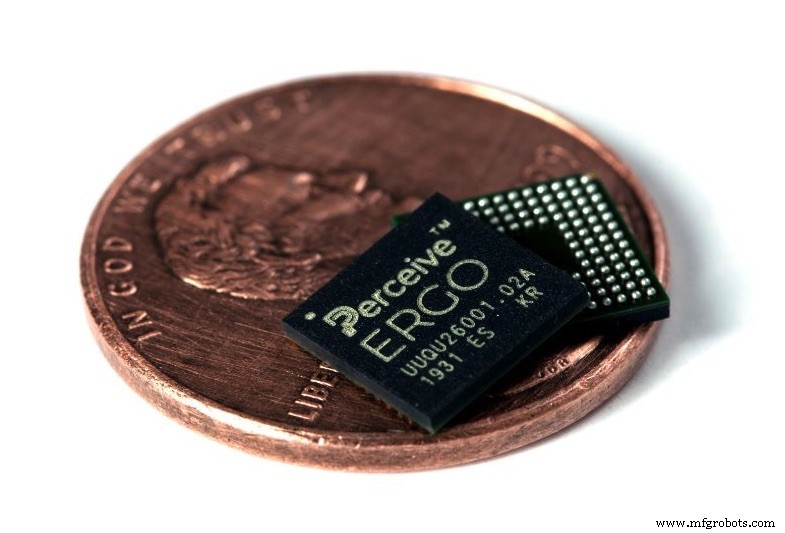

A Silicon Valley startup has reworked the mathematics of neural networks to produce an edge AI chip that does without the conventional multiply-accumulate (MAC) array. The 7×7 mm chip, named Ergo, is rated at 4 TOPS, and its maker, Perceive, claims a power efficiency of up to 55 TOPS/W. Perceive says Ergo can run data-center-grade inference workloads such as YOLOv3 at 30 fps on just 20 mW.
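Efficiency in TOPS/W is simply throughput divided by power, so the headline figures can be cross-checked with a few lines of arithmetic. The sketch below takes the constants from Perceive's published claims. The derived values (implied power, energy per frame) are our own calculation, not numbers Perceive has stated.

```python
# Back-of-envelope checks on Perceive's published Ergo figures.
# The constants come from the article; the derived numbers are our own arithmetic.

PEAK_TOPS = 4.0          # rated throughput, tera-operations per second
BEST_TOPS_PER_W = 55.0   # claimed best-case efficiency
YOLO_POWER_W = 0.020     # claimed power while running YOLOv3
YOLO_FPS = 30.0          # claimed YOLOv3 frame rate


def tops_per_watt(tops: float, power_w: float) -> float:
    """Efficiency is throughput divided by power."""
    return tops / power_w


def power_mw(tops: float, tops_per_w: float) -> float:
    """Power (mW) needed to sustain `tops` at a given efficiency."""
    return tops / tops_per_w * 1000.0


def energy_per_frame_mj(power_w: float, fps: float) -> float:
    """Energy (mJ) spent on each frame at a steady frame rate."""
    return power_w / fps * 1000.0


if __name__ == "__main__":
    # 4 TOPS at 20 mW would exceed the 55 TOPS/W ceiling,
    # so the two figures must describe different operating points.
    print(f"4 TOPS at 20 mW: {tops_per_watt(PEAK_TOPS, YOLO_POWER_W):.0f} TOPS/W")
    print(f"4 TOPS at 55 TOPS/W: {power_mw(PEAK_TOPS, BEST_TOPS_PER_W):.1f} mW")
    print(f"YOLOv3 at 30 fps, 20 mW: {energy_per_frame_mj(YOLO_POWER_W, YOLO_FPS):.2f} mJ per frame")
```

Run as a script, it prints 200 TOPS/W, 72.7 mW and 0.67 mJ per frame. In other words, the 4 TOPS peak and the 20 mW YOLOv3 figure cannot both hold at once if efficiency tops out at 55 TOPS/W.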

Perceive, founded in San Jose two years ago, has emerged from stealth mode and is wholly funded by its parent company, Xperi. Its 41-member core team is complemented by a similar number of Xperi engineers working on applications for Ergo. Steve Teig, Perceive's founder and CEO and also Xperi's CTO, has a long track record in chip design and AI: he previously led 3D programmable logic startup Tabula and served as CTO at Cadence.

Teig says the work began with a simple question: “What computations do neural networks actually perform?” By applying principles from information theory, the team arrived at a more efficient representation of those operations, which in turn shaped Ergo's architecture. The approach eliminates most of the conventional MAC array, and Perceive claims the resulting chip is 20 to 100 times more power-efficient than competing solutions.

Ergo's architecture comprises four compute clusters, each with its own dedicated memory. Where typical accelerators rely on MAC arrays for dot-product calculations, Ergo's clusters execute operations in a novel, highly parallel manner. The design supports a wide range of network topologies, including CNNs, RNNs, and even several heterogeneous models running concurrently, all without external RAM.
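For context, here is a minimal sketch of what a conventional MAC array computes, the baseline Ergo departs from. It is shown for contrast only: Perceive has not published its alternative, and this is not Ergo's method. Integer inputs are assumed to mimic the fixed-point arithmetic common in edge accelerators.

```python
from typing import List, Sequence


def mac_dot(weights: Sequence[int], activations: Sequence[int]) -> int:
    """Dot product computed as a chain of multiply-accumulate (MAC) operations."""
    if len(weights) != len(activations):
        raise ValueError("weights and activations must have the same length")
    acc = 0
    for w, x in zip(weights, activations):
        acc += w * x  # one MAC: multiply, then add into the running accumulator
    return acc


def dense_layer(weight_rows: Sequence[Sequence[int]], activations: Sequence[int]) -> List[int]:
    """Fully connected layer: one dot product per output neuron.

    A hardware MAC array evaluates many of these multiply-adds in parallel,
    but the total amount of work is the same.
    """
    return [mac_dot(row, activations) for row in weight_rows]


def mac_count(n_outputs: int, n_inputs: int) -> int:
    """Number of MACs a dense layer of this shape needs per inference."""
    return n_outputs * n_inputs


if __name__ == "__main__":
    print(dense_layer([[1, 2], [3, 4]], [5, 6]))  # [17, 39]
    print(mac_count(1000, 4096))                   # 4096000
```

The `mac_count` helper shows why MAC arrays dominate accelerator area and power. Even one modest dense layer needs millions of multiply-adds per inference.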

In practice, Perceive has demonstrated YOLOv3 (60 to 70 million parameters) or M2Det running simultaneously with ResNet-28 and an LSTM for speech processing, enabling real-time vision and audio inference on the same edge device.

Power consumption is cut further by aggressive power gating and clock gating, which exploit the deterministic nature of neural network workloads. Because inference code has no data-dependent branches, its timing is predictable, so idle blocks can be switched off on a schedule known in advance, and the chip can stay almost entirely off until it is triggered by a motion sensor or an analog microphone. Once woken, Ergo can load a full data-center-grade model, decrypt it, and begin inference in roughly 50 ms.
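Because the inference schedule never changes with the input, each cluster's idle windows can be worked out before the chip runs. The sketch below illustrates that idea under stated assumptions. The `Step` mapping of layers to clusters and the cycle counts are hypothetical, and Perceive has not described how Ergo's gating controller actually works.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple


@dataclass(frozen=True)
class Step:
    name: str
    clusters: FrozenSet[int]  # clusters this step keeps busy (hypothetical mapping)
    cycles: int               # fixed duration, known before inference starts


@dataclass(frozen=True)
class GateWindow:
    step: str
    start: int
    end: int
    gated: Tuple[int, ...]    # clusters that can be gated off during [start, end)


def build_gating_schedule(steps: List[Step], n_clusters: int) -> List[GateWindow]:
    """Precompute when each cluster is idle.

    This works only because step order and durations never depend on the input
    data: there are no branches that could change the schedule at run time.
    """
    schedule = []
    t = 0
    for s in steps:
        idle = tuple(sorted(set(range(n_clusters)) - s.clusters))
        schedule.append(GateWindow(s.name, t, t + s.cycles, idle))
        t += s.cycles
    return schedule


def gated_fraction(schedule: List[GateWindow], n_clusters: int) -> float:
    """Share of all cluster-cycles that can be gated off."""
    total = sum((w.end - w.start) * n_clusters for w in schedule)
    gated = sum((w.end - w.start) * len(w.gated) for w in schedule)
    return gated / total


if __name__ == "__main__":
    steps = [
        Step("conv1", frozenset({0, 1}), 100),
        Step("conv2", frozenset({0}), 50),
        Step("fc", frozenset({3}), 50),
    ]
    plan = build_gating_schedule(steps, n_clusters=4)
    for w in plan:
        print(w)
    print(f"gated: {gated_fraction(plan, 4):.1%}")
```

With this toy workload on four clusters, 62.5% of cluster-cycles could be gated. A processor running branchy, data-dependent code could not compute such a table ahead of time.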

Beyond neural network computation, Ergo includes a hardware image-processing pipeline that can de-warp images from fisheye lenses and perform gamma correction, white balance and cropping. A corresponding audio block supports beamforming across multiple microphones. A Synopsys ARC microprocessor with a DSP core handles additional pre-processing and runs encrypted firmware, while a dedicated security block encrypts all data paths and network weights to protect IoT deployments.
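To show what microphone beamforming does, here is a sketch of delay-and-sum, the textbook beamforming technique. Perceive has not said which algorithm Ergo's audio block uses, so treat this as a generic illustration. It assumes integer sample delays that have already been computed from the array geometry and the target direction.

```python
from typing import List, Sequence


def delay_and_sum(channels: Sequence[Sequence[float]], delays: Sequence[int]) -> List[float]:
    """Steer a microphone array by delaying each channel and averaging.

    delays[i] is the number of samples by which channel i is delayed so that
    sound from the target direction lines up across all microphones.
    Samples shifted past either end of a channel are dropped.
    """
    if len(channels) != len(delays):
        raise ValueError("need one delay per channel")
    n = len(channels[0])
    if any(len(ch) != n for ch in channels):
        raise ValueError("all channels must have the same length")
    out = [0.0] * n
    for ch, d in zip(channels, delays):
        for t in range(n):
            src = t - d
            if 0 <= src < n:
                out[t] += ch[src]
    return [v / len(channels) for v in out]


if __name__ == "__main__":
    # A click reaches mic 1 two samples before mic 0.
    mics = [[0, 0, 0, 1, 0, 0], [0, 1, 0, 0, 0, 0]]
    print(delay_and_sum(mics, [0, 2]))  # steered: the click adds coherently
    print(delay_and_sum(mics, [0, 0]))  # unsteered: the click is smeared
```

Steered toward the source, the two copies of the click line up and sum to full amplitude (`[0.0, 0.0, 0.0, 1.0, 0.0, 0.0]`). Unsteered, they land on different samples at half amplitude. That difference is how a beamformer favors one direction over others.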

Ergo connects to external flash memory and supports over-the-air updates, so neural networks can be retrained and swapped out as needed. The chip and its reference board are currently sampling, with mass production slated for Q2 2024.

Steve Teig (Image: Perceive)

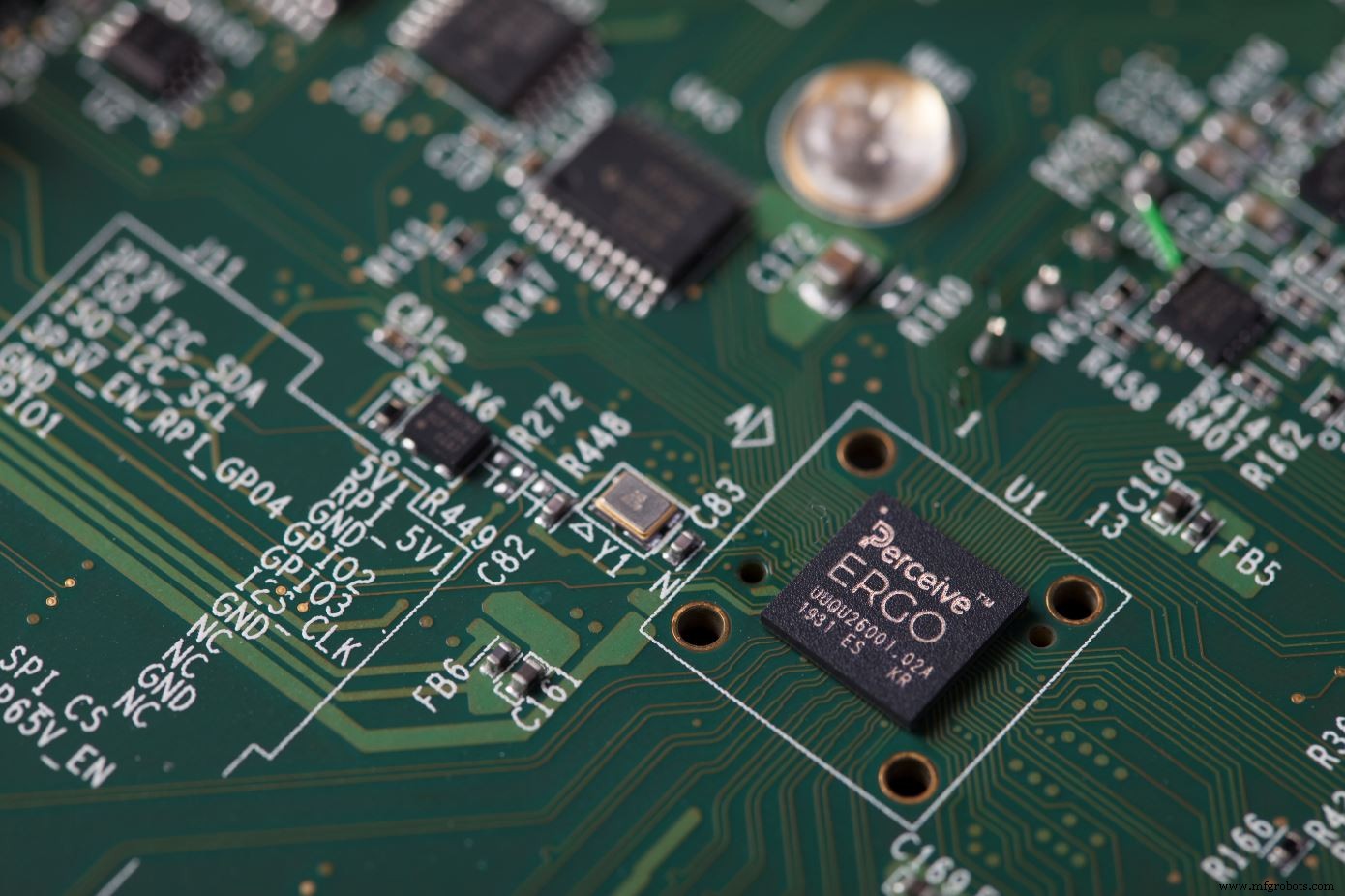

Perceive claims its Ergo chip's efficiency is up to 55 TOPS/W, running YOLOv3 at 30 fps with just 20 mW (Image: Perceive)

Perceive’s technology is based on reinventing neural network maths using techniques from information theory (Image: Perceive)
