Esperanto Unveils 1,093‑Core RISC‑V AI Accelerator for Data‑Center Inference

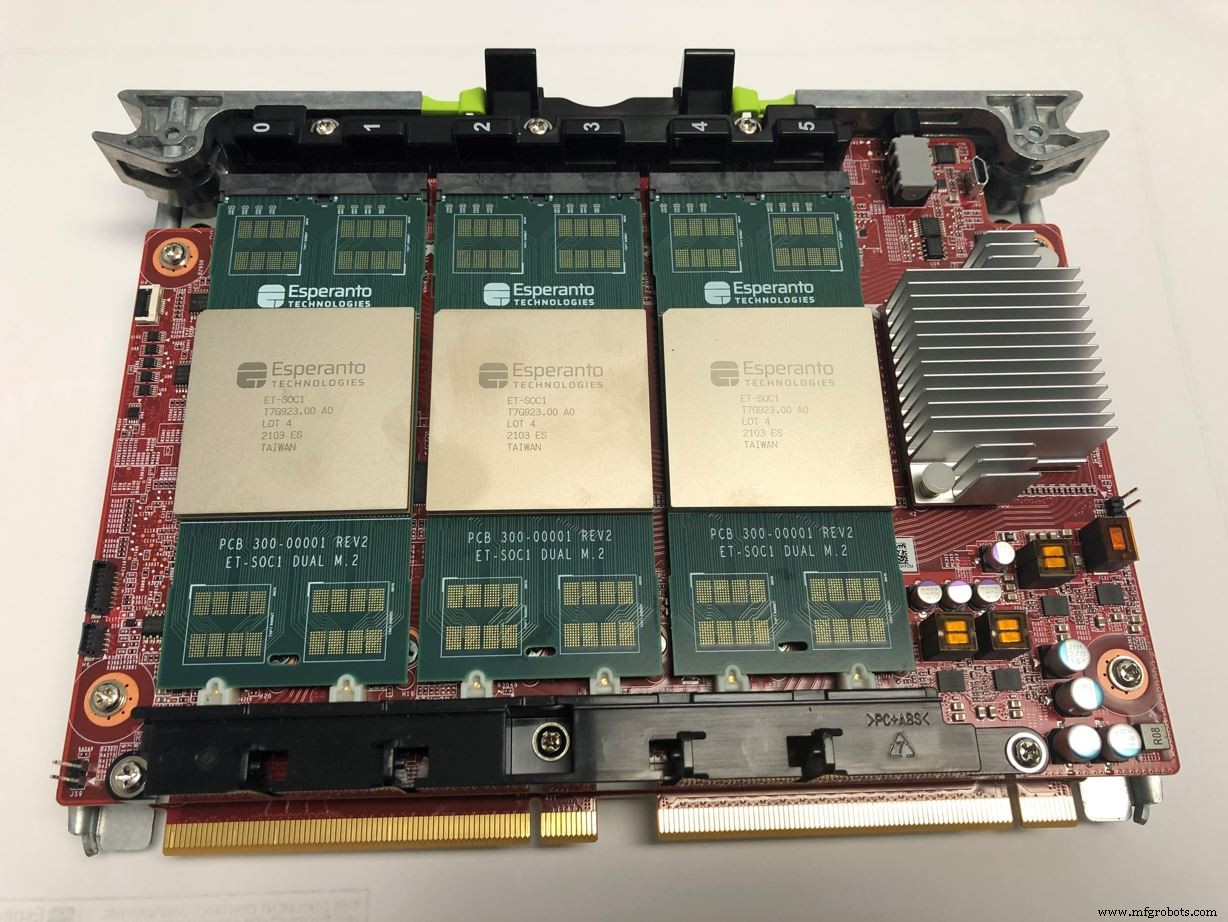

The startup Esperanto has announced the ET-SoC-1, a 1,093‑core RISC‑V AI accelerator designed for M.2 accelerator sockets in data‑center environments. The chip debuted at the Hot Chips conference. Six of the chips on a single Glacier Point card deliver a combined 800 TOPS while staying within a 120‑W power envelope.

Design Highlights

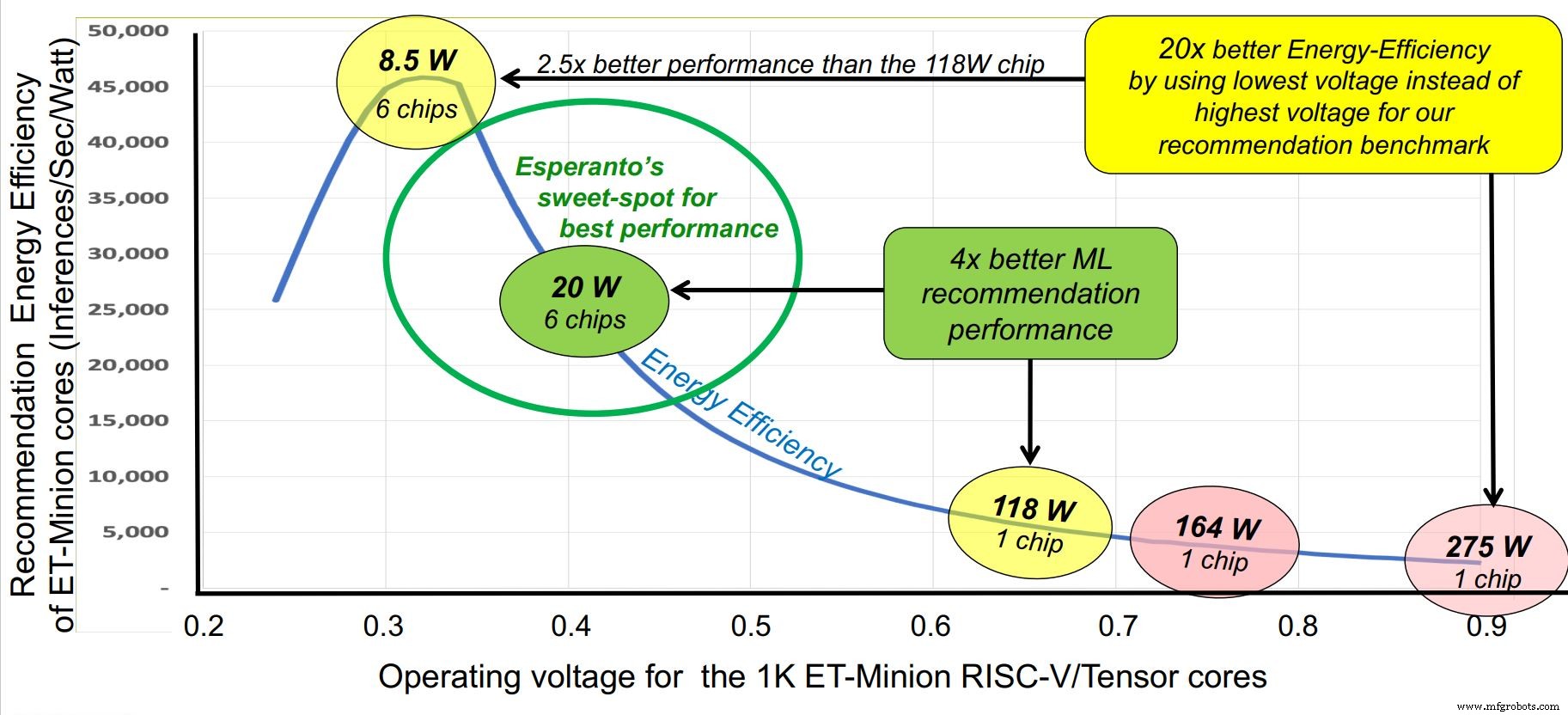

The ET-SoC‑1 contains 1,088 ET‑Minion cores that execute AI workloads, four high‑performance ET‑Maxion cores and a RISC‑V service processor, for 1,093 cores in total. The architecture is built around energy efficiency: a 1 GHz clock, a 0.4 V supply and a 7 nm TSMC process give each chip a “sweet spot” of about 20 W, with the flexibility to operate anywhere between 10 and 60 W.
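The per-chip power figures line up with the 120‑W card envelope quoted earlier. The short Python sketch below works through that arithmetic. The constant and function names are illustrative, and it assumes card power is simply the sum of chip power, ignoring DRAM and board overhead, which the article does not break out.

```python
# Figures quoted in the article; names are illustrative only.
CHIPS_PER_CARD = 6      # chips on one Glacier Point card
SWEET_SPOT_W = 20       # per-chip "sweet spot"
MIN_W, MAX_W = 10, 60   # per-chip operating range
CARD_BUDGET_W = 120     # card power envelope


def card_power(per_chip_w, chips=CHIPS_PER_CARD):
    """Total chip power on a card (assumption: no DRAM or board overhead)."""
    return per_chip_w * chips


def max_chips_within_budget(per_chip_w, budget_w=CARD_BUDGET_W):
    """How many chips fit in the budget at a given per-chip power."""
    return budget_w // per_chip_w


if __name__ == "__main__":
    for watts in (MIN_W, SWEET_SPOT_W, MAX_W):
        print(f"{watts:>2} W/chip: six chips draw {card_power(watts)} W, "
              f"{max_chips_within_budget(watts)} chips fit in {CARD_BUDGET_W} W")
```

Under these assumptions, six chips at the 20 W sweet spot exactly fill the 120‑W envelope, while running every chip at 60 W would exceed it three times over.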

Target Use‑Case: Recommendation Inference

Hyper‑scale operators require inference latencies of 10 ms or less for recommendation engines. The design addresses this with 100 MB of on‑chip memory and 100 GB of off‑chip memory, all located on the accelerator card so that model data does not have to cross the PCIe bus. Support for INT8, FP16 and FP32 keeps precision where it matters.

Core Architecture

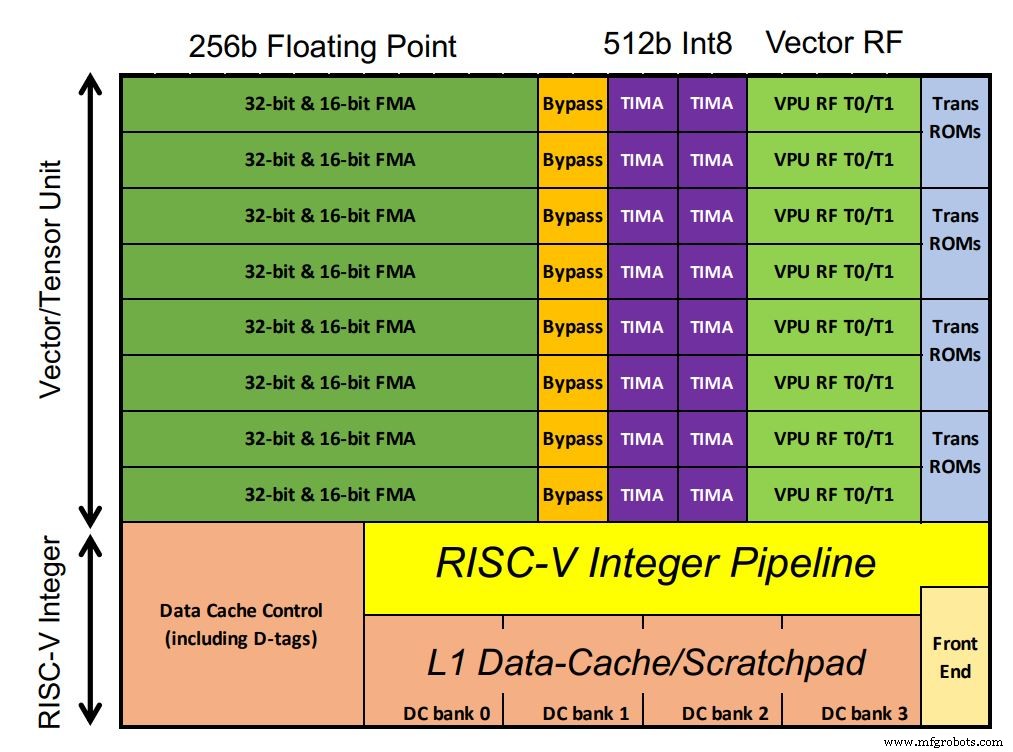

Each ET‑Minion is a 64‑bit, in‑order core paired with an AI‑optimised vector and tensor unit that delivers 128 GOPS per GHz. A custom multi‑cycle tensor instruction can trigger more than 64,000 operations from a single instruction (spread over hundreds of cycles at 128 operations per cycle), reducing instruction traffic. Eight cores form a “neighbourhood” that shares an L2 cache and enables cooperative loads, while four neighbourhoods make up a “Minion Shire.” The chip carries 1,088 ET‑Minion cores (or 1,024 in a configuration intended for higher yield) and four ET‑Maxion cores, each comparable to an Arm Cortex‑A72.
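The core counts and the per-core throughput can be cross-checked against the headline 800 TOPS figure. The following Python sketch does so using only numbers from the article (8 cores per neighbourhood, 4 neighbourhoods per shire, 128 GOPS per GHz per core, a 1 GHz clock, six chips per card). The names are illustrative, the results are theoretical peaks, and the assumption that the headline number was derived this way is ours, not Esperanto's.

```python
# Figures quoted in the article; names are illustrative only.
CORES_PER_NEIGHBOURHOOD = 8
NEIGHBOURHOODS_PER_SHIRE = 4
GOPS_PER_CORE_PER_GHZ = 128
CLOCK_GHZ = 1.0
CHIPS_PER_CARD = 6

CORES_PER_SHIRE = CORES_PER_NEIGHBOURHOOD * NEIGHBOURHOODS_PER_SHIRE


def shires_for(cores):
    """Number of Minion Shires needed to hold the given core count."""
    if cores % CORES_PER_SHIRE:
        raise ValueError("core count is not a whole number of shires")
    return cores // CORES_PER_SHIRE


def peak_tops(cores, clock_ghz=CLOCK_GHZ, chips=1):
    """Theoretical peak throughput in TOPS (1 TOPS = 1,000 GOPS)."""
    return cores * GOPS_PER_CORE_PER_GHZ * clock_ghz * chips / 1000.0


if __name__ == "__main__":
    for cores in (1088, 1024):
        print(f"{cores} cores: {shires_for(cores)} shires, "
              f"{peak_tops(cores):.1f} TOPS per chip, "
              f"{peak_tops(cores, chips=CHIPS_PER_CARD):.1f} TOPS per card")
```

With 1,088 cores this gives 34 shires and roughly 139 TOPS per chip, or about 836 TOPS for a six-chip card. The 1,024-core configuration gives 32 shires and about 786 TOPS per card. Both bracket the quoted 800 TOPS.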

Memory System

Each chip has four 64‑bit DDR interfaces, each comprising four 16‑bit channels, which works out to 96 16‑bit channels across a six‑chip card. The chip uses LPDDR4x, achieving energy per bit comparable to HBM, and the six‑chip card reaches 100 GB of off‑chip memory.
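The channel count follows directly from the interface layout. A minimal Python sketch of that arithmetic is shown here. It assumes the four interfaces are per chip and that the 96‑channel figure refers to a six-chip card, and the names are illustrative.

```python
# Figures quoted in the article; names are illustrative only.
INTERFACES_PER_CHIP = 4
CHANNELS_PER_INTERFACE = 4
CHANNEL_WIDTH_BITS = 16
CHIPS_PER_CARD = 6  # assumption: the 96-channel figure is per card

channels_per_chip = INTERFACES_PER_CHIP * CHANNELS_PER_INTERFACE
interface_width_bits = CHANNELS_PER_INTERFACE * CHANNEL_WIDTH_BITS
bus_width_per_chip_bits = channels_per_chip * CHANNEL_WIDTH_BITS
channels_per_card = channels_per_chip * CHIPS_PER_CARD

if __name__ == "__main__":
    print(f"interface width: {interface_width_bits} bits")
    print(f"channels per chip: {channels_per_chip}")
    print(f"memory bus per chip: {bus_width_per_chip_bits} bits")
    print(f"channels per card: {channels_per_card}")
```

Four 16‑bit channels make up each 64‑bit interface, giving 16 channels and a 256‑bit memory bus per chip, and 96 channels over six chips.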

Competitive Positioning

Unlike large, power‑hungry accelerators such as Google’s TPU or Amazon’s Inferentia, Esperanto’s low‑power, multi‑chip approach leverages the open‑compute ecosystem. Hyper‑scale operators looking for standardized, cost‑effective accelerators may find Esperanto’s solution attractive.

Future Outlook

Future iterations may target edge use‑cases with a scaled‑down ET-SoC-1. The current version is slated for launch within the next couple of months.

Source: Esperanto & EE Times interview with Dave Ditzel, co‑author of the 1980 RISC paper.
