Neuromorphic Vision Drives Breakthroughs from Healthcare to Space

Prophesee’s event‑based vision sensors are already reshaping a wide range of fields—from restoring sight in patients to spotting space debris at night. With a global community of 2,200 inventors, the company’s technology is proving that fast, low‑power vision can deliver tangible results in real‑world applications.

Restoring Vision for a Blind Patient

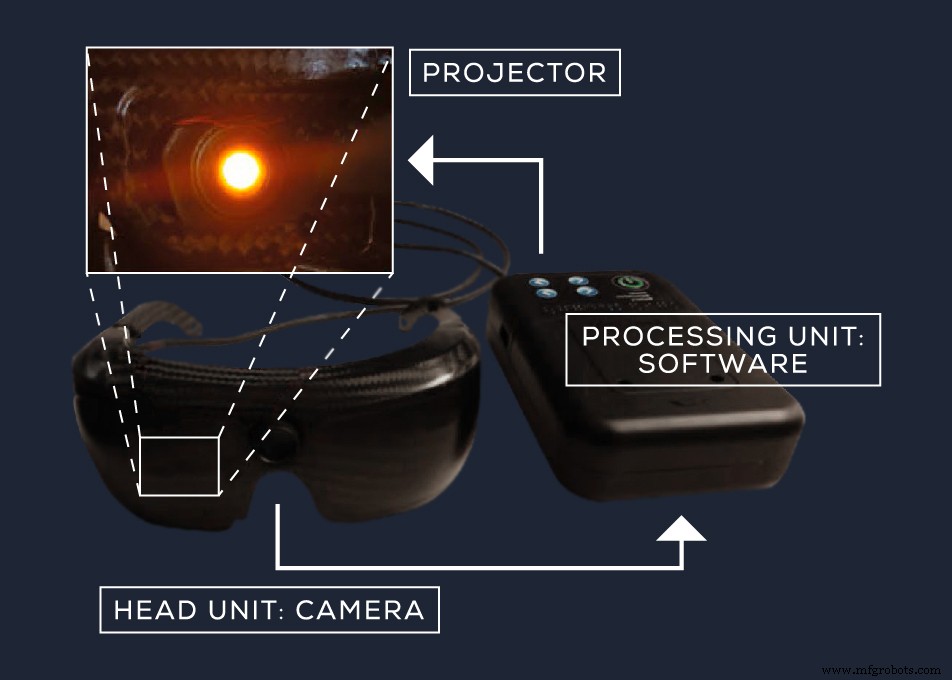

GenSight Biologics partnered with Prophesee to create goggles that convert visual information into amber light (590 nm). The light activates photosensitive proteins delivered to a patient’s retina, enabling the eye to interpret the signal. After seven months of use, the formerly blind patient could identify a pedestrian crosswalk, spot a notebook on a table 92 % of the time, and detect a pencil case 36 % of the time. He could also grasp and count objects. The study, published in Nature Medicine in collaboration with the University of Basel and UPMC, demonstrates the sensor’s ability to deliver rapid, continuous visual data without the overhead of image re-encoding.

GenSight Biologics goggles translate images into amber light, allowing modified retinal cells to perceive the world. (Source: GenSight Biologics / Prophesee)

Accelerating Cell‑Therapy Manufacturing

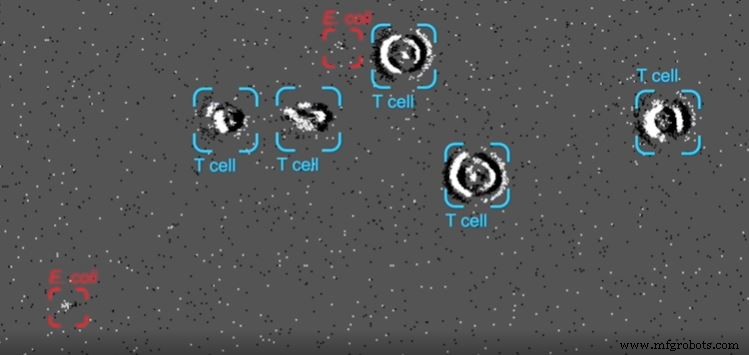

Manufacturing patient-specific cell therapies is time-consuming and costly: an average dose can cost $475,000. Cambridge Consultants used Prophesee’s sensor to monitor cell cultures in real time, detecting bacterial contaminants in under 18 ms, versus the 7 to 14 days of incubation that traditional testing requires. The PureSentry system can identify, track, and classify pathogens such as E. coli while operating in low light and at high flow rates, enabling a non-destructive, automated sterility test that reduces the need for highly skilled technicians.

PureSentry combines Prophesee vision with AI to detect contaminants by size, shape, and motion. (Source: Cambridge Consultants)

Microfluidic Particle Tracking

A consortium of universities (Glasgow, Heriot-Watt, Strathclyde) used Prophesee’s sensor to capture 1 µm particles moving at 1.54 m/s with a temporal resolution equivalent to 20,000 frames per second. The setup, which pairs the sensor with a standard fluorescence microscope, promises faster, cheaper microfluidic analysis.
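To put those figures in perspective, the short calculation below estimates how far a particle travels between successive samples at the quoted rate. It is only an illustrative sketch: the function names are hypothetical, and it assumes the 20,000 frames-per-second figure can be treated as a uniform sampling interval, which an event-based sensor does not literally use.

```python
# Back-of-the-envelope check of the microfluidic figures quoted in the article.
# Assumption: the quoted rate is treated as a fixed sampling interval.

PARTICLE_SPEED_M_S = 1.54      # particle speed from the article
EQUIVALENT_RATE_HZ = 20_000    # quoted frame-rate equivalent
PARTICLE_DIAMETER_UM = 1.0     # particle size from the article


def sample_interval_us(rate_hz: float) -> float:
    """Time between samples, in microseconds."""
    return 1.0 / rate_hz * 1e6


def displacement_um(speed_m_s: float, rate_hz: float) -> float:
    """Distance a particle travels during one sample interval, in micrometres."""
    return speed_m_s / rate_hz * 1e6


interval = sample_interval_us(EQUIVALENT_RATE_HZ)
step = displacement_um(PARTICLE_SPEED_M_S, EQUIVALENT_RATE_HZ)

print(f"Sample interval: {interval:.1f} µs")
print(f"Travel per interval: {step:.1f} µm")
print(f"Travel in particle diameters: {step / PARTICLE_DIAMETER_UM:.0f}")
```

Under these assumptions, a particle moves many times its own diameter between samples, which gives a sense of why a high temporal resolution matters for tracking objects this small and this fast.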

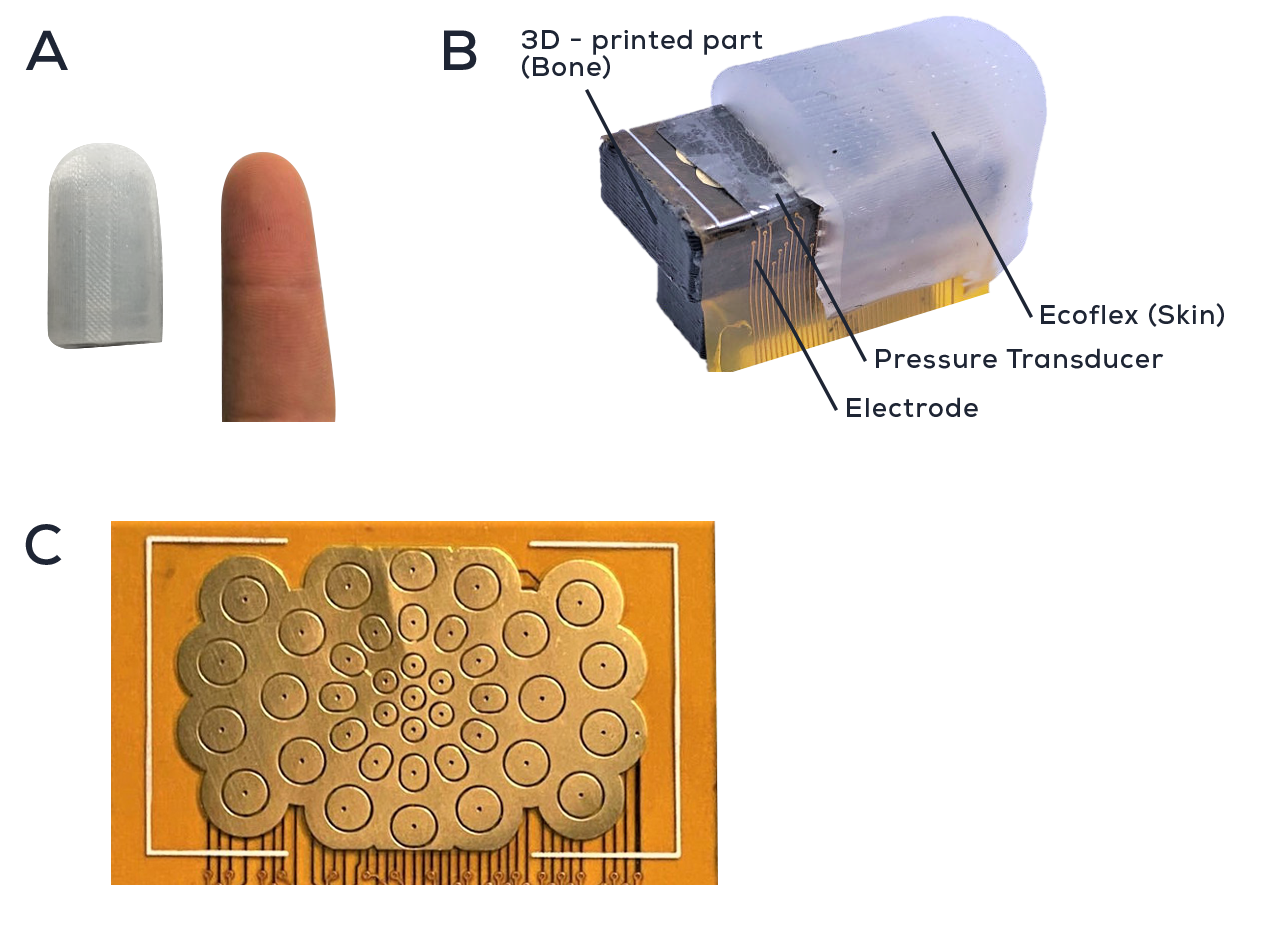

Robotic Tactile Vision

Researchers at the National University of Singapore combined Prophesee’s event‑based vision with a novel touch array—NeuTouch—to give robotic arms a sense of touch. The 39‑pixel “tactel” array, sized like a human fingertip, produces spike signals that an Intel Loihi neuromorphic processor interprets alongside visual data. The robot learned to pick up containers of varying liquid content, determining both type and weight by observing container deformation. In a slip‑test, the combined sensor suite detected slip 1,000× faster than human touch, identifying rotational slip in just 0.08 s.

NeuTouch: a fingertip‑sized touch sensor array integrated with Prophesee vision for robotic perception. (Source: NUS / Prophesee)

Tracking Space Debris

Western Sydney University’s Astrosite mobile observatory couples Prophesee sensors with telescopes to monitor orbiting debris. With 4,850 satellites orbiting Earth and only ~40 % active, passive debris tracking is essential. Conventional cameras produce excessive data and struggle in daylight. Astrosite’s event‑based approach generates 10‑ to 1,000‑fold less data, enabling continuous, microsecond‑resolution monitoring at low power—both day and night.

Astrosite observatories ready for deployment. (Source: Western Sydney University)
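The numbers quoted for Astrosite can be turned into a quick back-of-the-envelope estimate. The sketch below derives the approximate count of inactive satellites from the article's figures and applies the 10- to 1,000-fold reduction to a frame-camera data rate. The 100 MB/s input is a made-up placeholder, not a figure from Western Sydney University, and the function names are hypothetical.

```python
# Rough arithmetic on the Astrosite figures quoted in the article.

TOTAL_SATELLITES = 4850   # satellites in orbit, per the article
ACTIVE_FRACTION = 0.40    # "~40 % active", so results are approximate


def inactive_satellites(total: int, active_fraction: float) -> int:
    """Approximate number of satellites that are no longer active."""
    return total - round(total * active_fraction)


def event_data_rate_range(frame_rate_mb_s: float) -> tuple[float, float]:
    """Data rate after a 1,000-fold and a 10-fold reduction, in MB/s."""
    return frame_rate_mb_s / 1000, frame_rate_mb_s / 10


# Hypothetical frame-camera data rate, chosen only for illustration.
ASSUMED_FRAME_RATE_MB_S = 100.0

low, high = event_data_rate_range(ASSUMED_FRAME_RATE_MB_S)
print(f"Inactive satellites: about {inactive_satellites(TOTAL_SATELLITES, ACTIVE_FRACTION)}")
print(f"Event-based data rate: between {low} and {high} MB/s")
```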

These diverse projects illustrate how event‑based vision delivers speed, efficiency, and robustness across industries, from healthcare to space exploration.
